All posts and tutorials about Azure Virtual Desktop.


Azure Virtual Desktop

- Update your Kerberos configuration with Azure Virtual Desktop (RC4)

- I tested Azure Virtual Desktop RemoteAppV2

- Azure Virtual Desktop V6/V7 VMs imaging

- Remove Microsoft Print to PDF and OneNote printers script

- Azure Virtual Desktop FSLogix and Native Kerberos authentication

- FSLogix and maximum Azure Files security

- Azure Virtual Desktop RDP Properties

- Azure Compute Gallery and (AVD) VM images

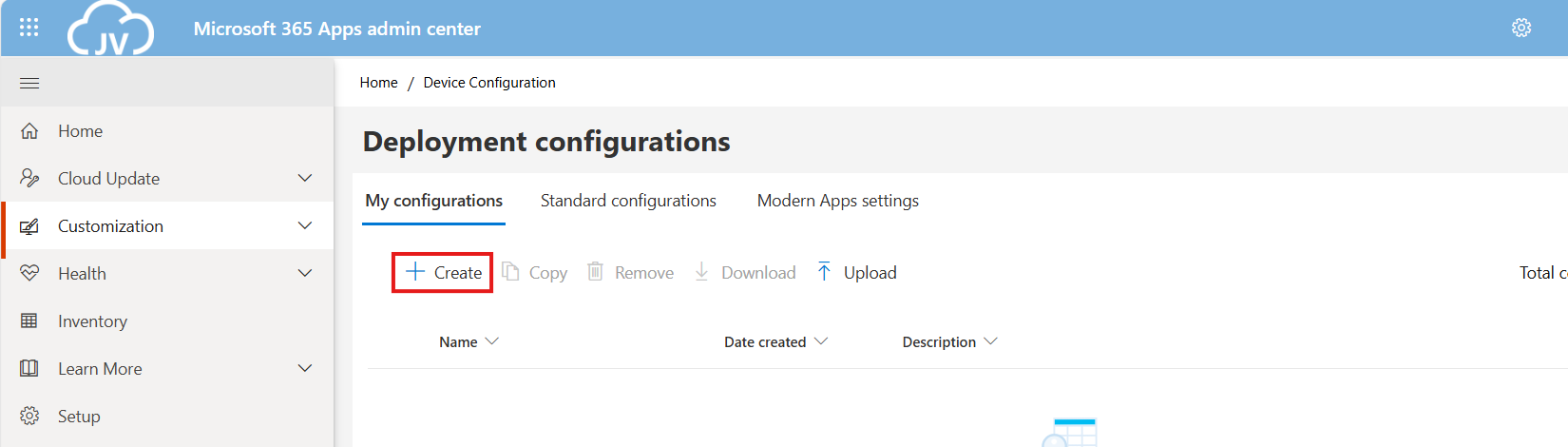

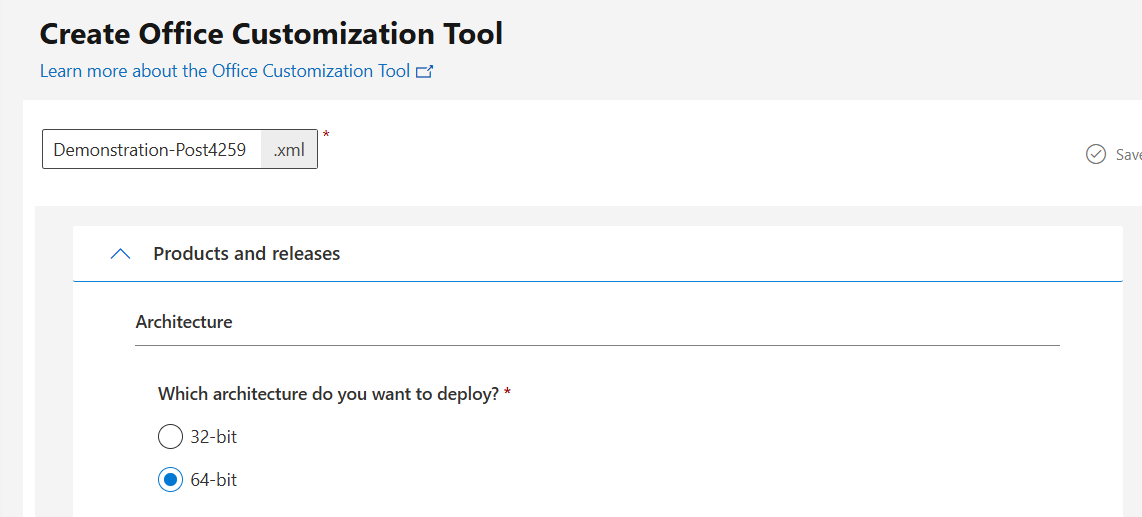

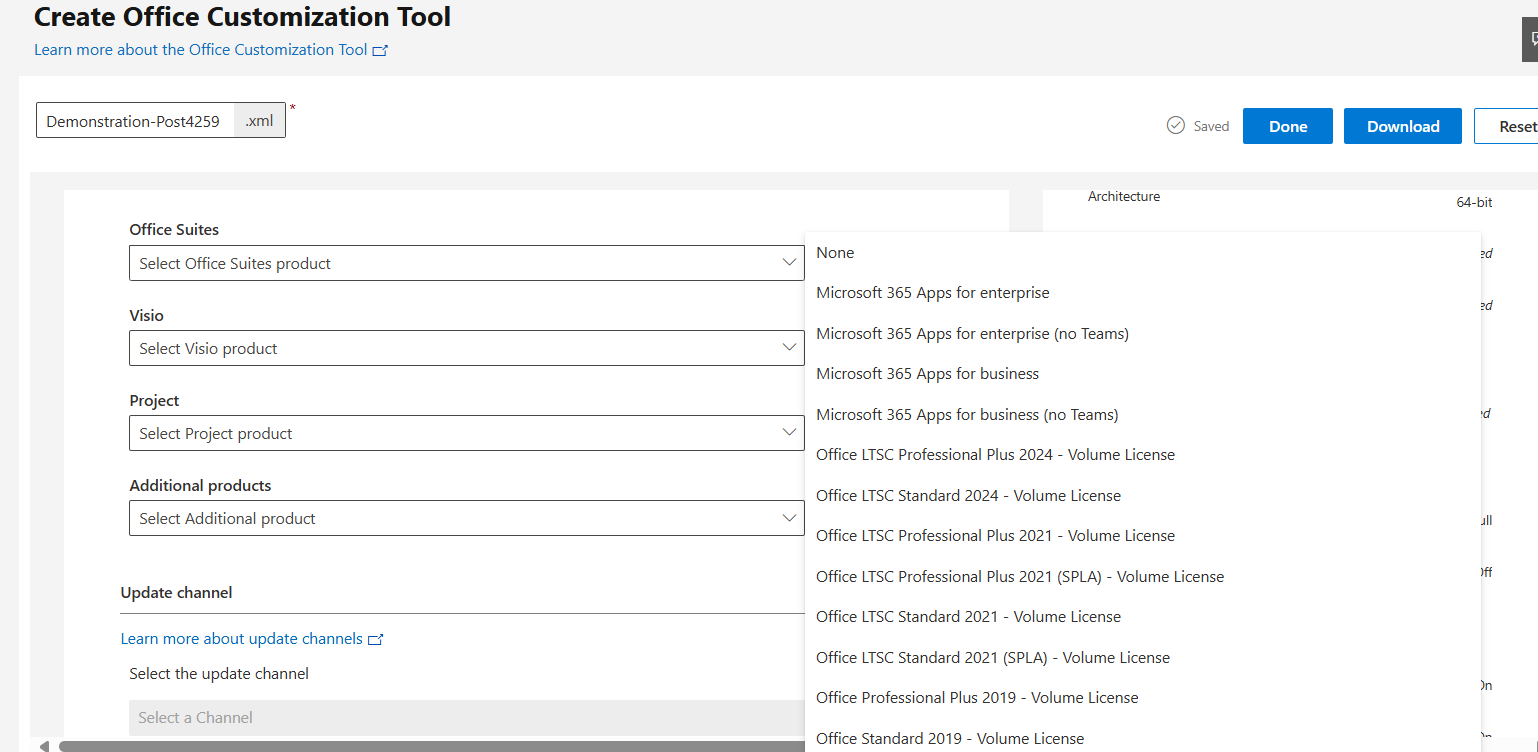

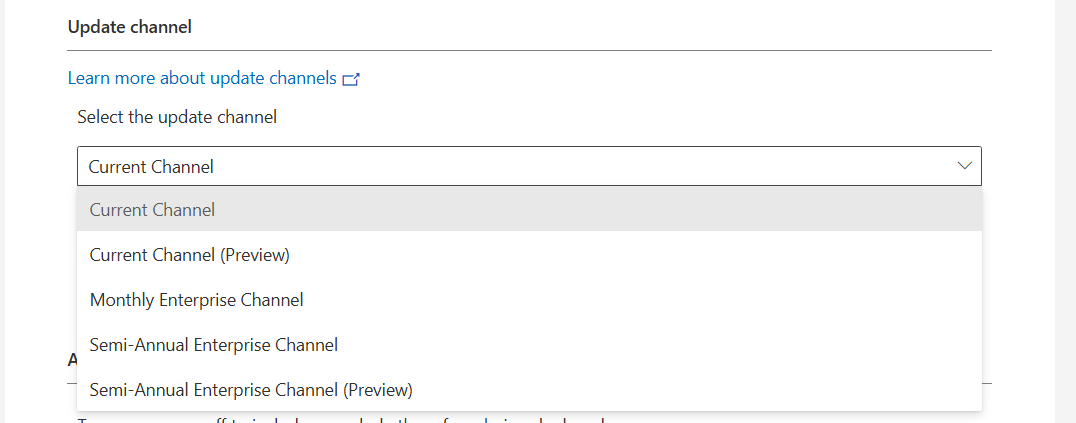

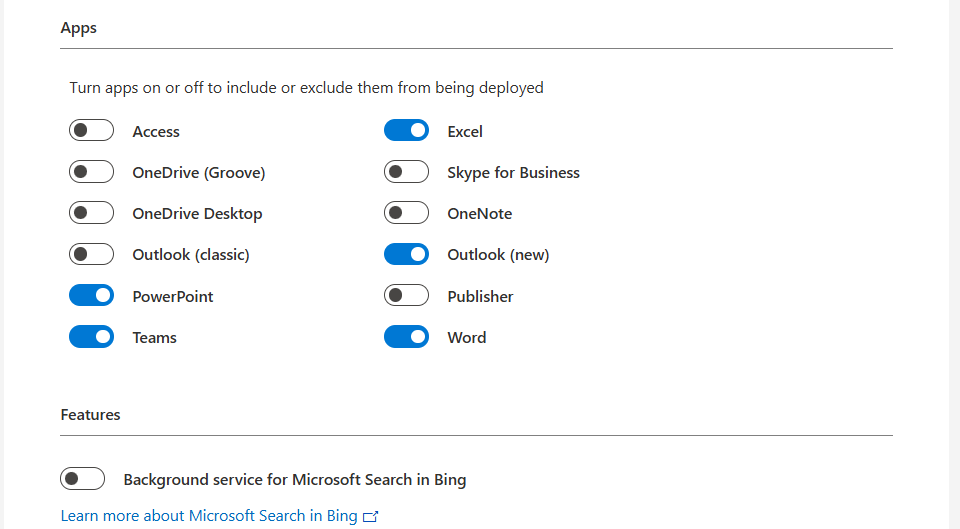

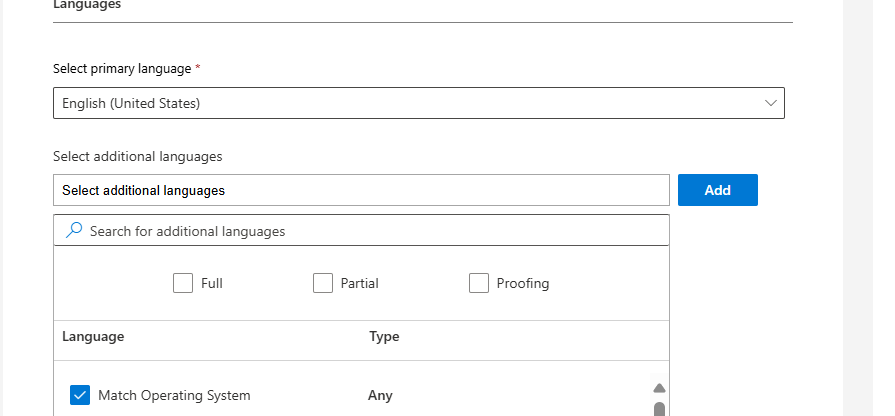

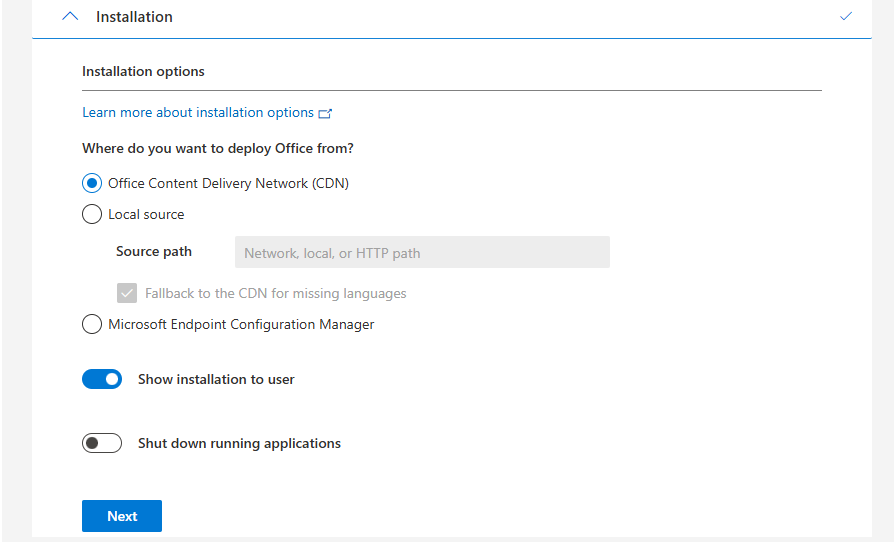

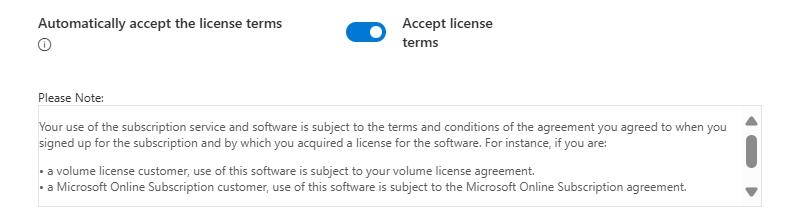

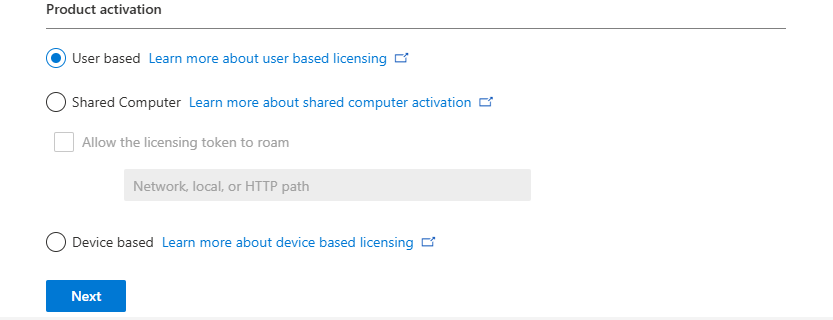

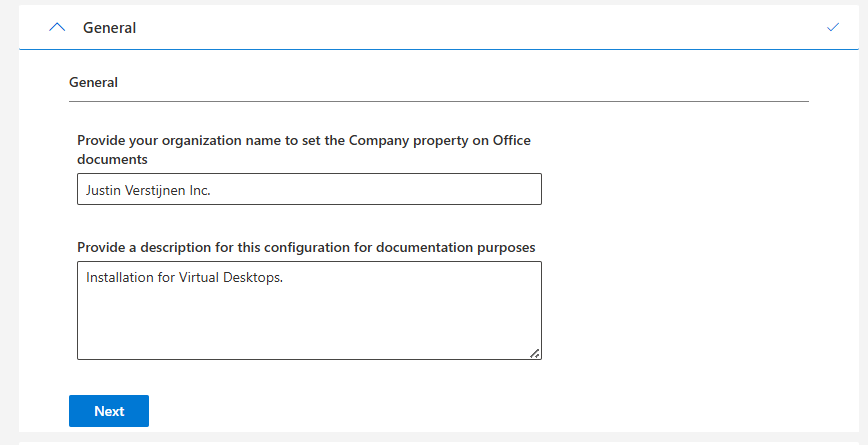

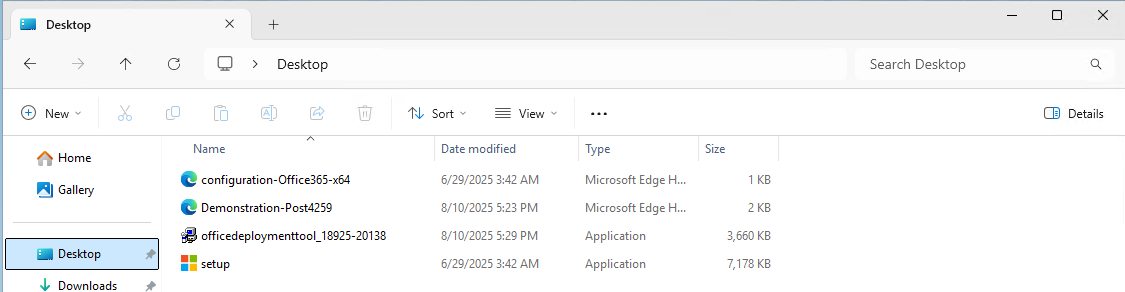

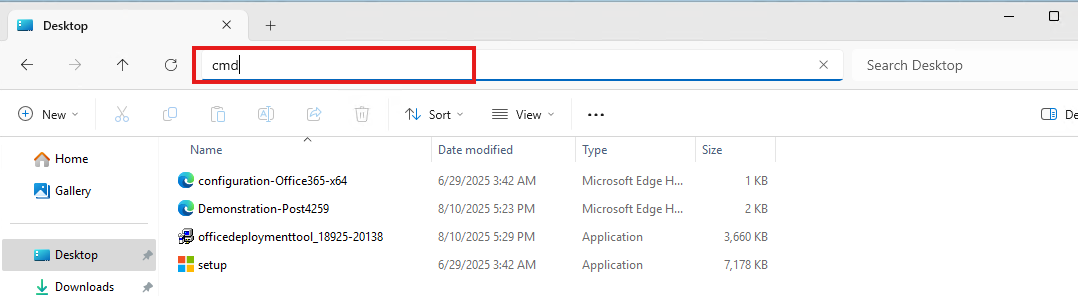

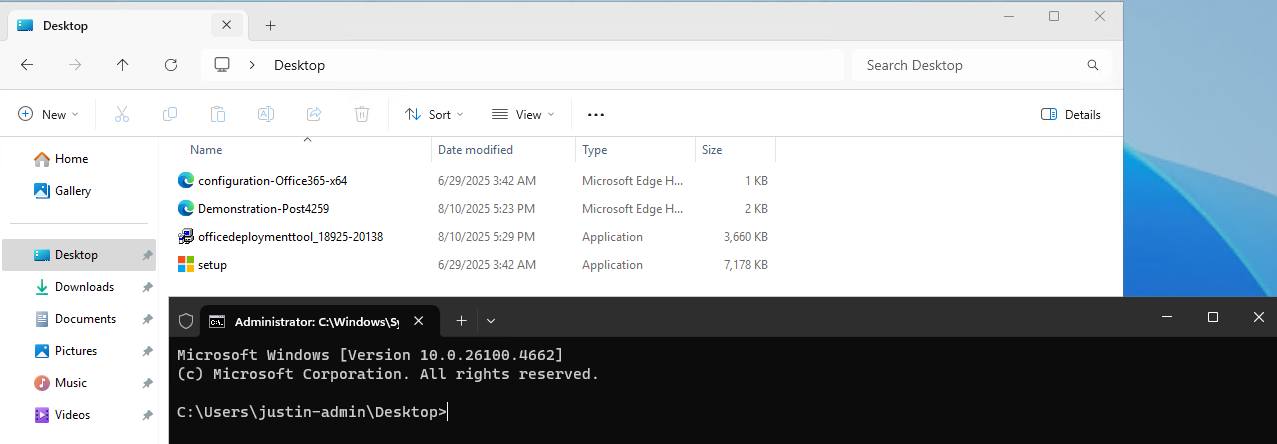

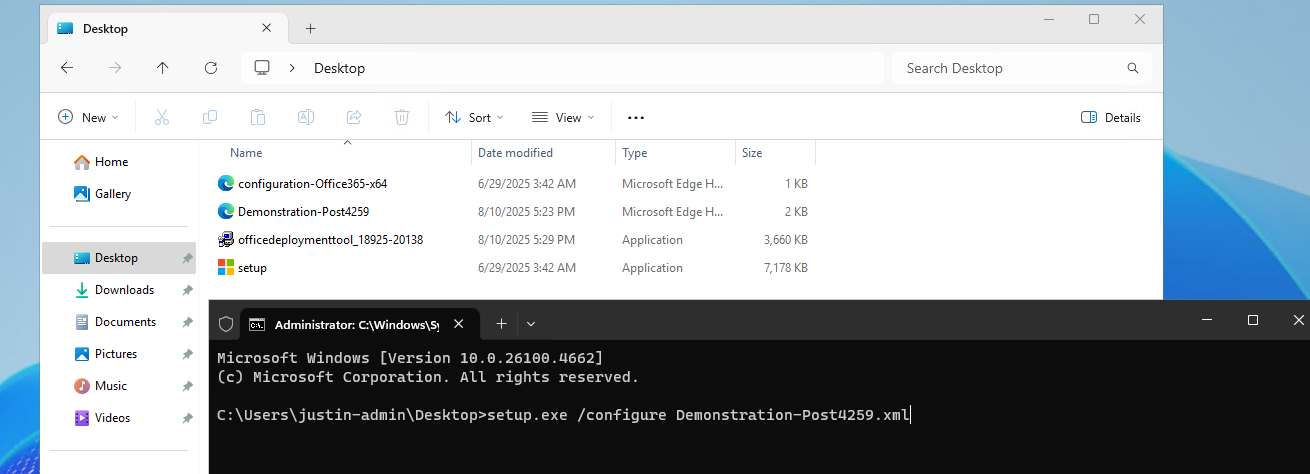

- Customize Office apps installation for Azure Virtual Desktop

- Joining storage account to Active Directory (AD DS)

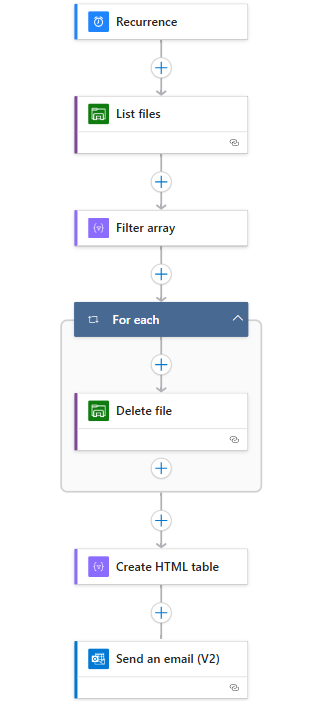

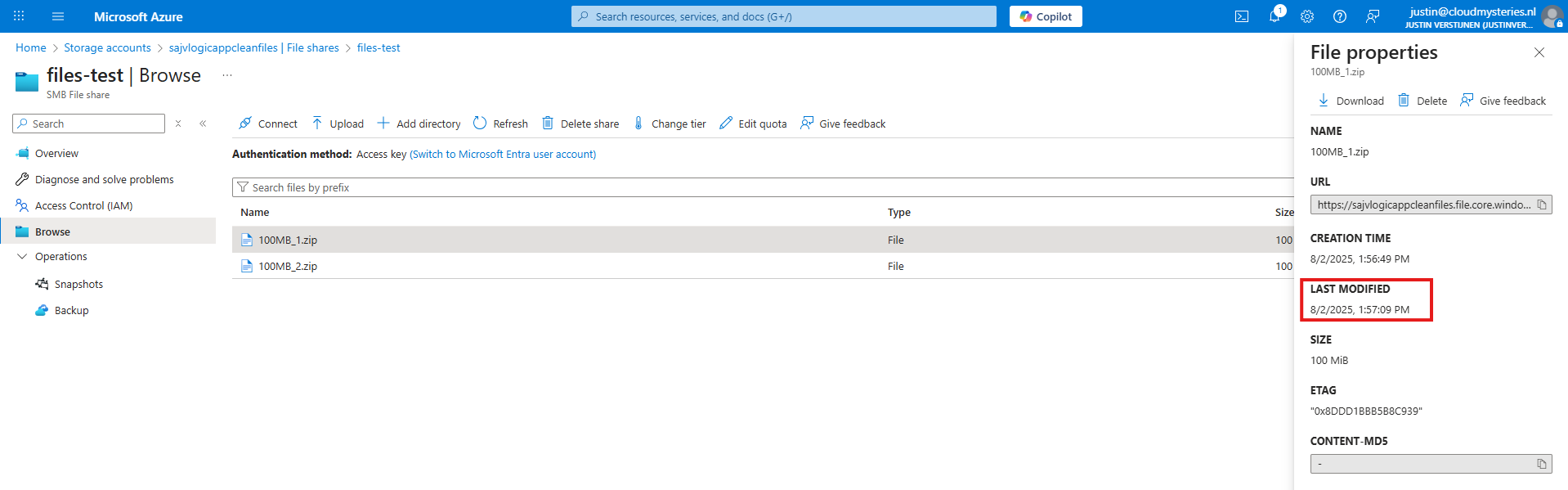

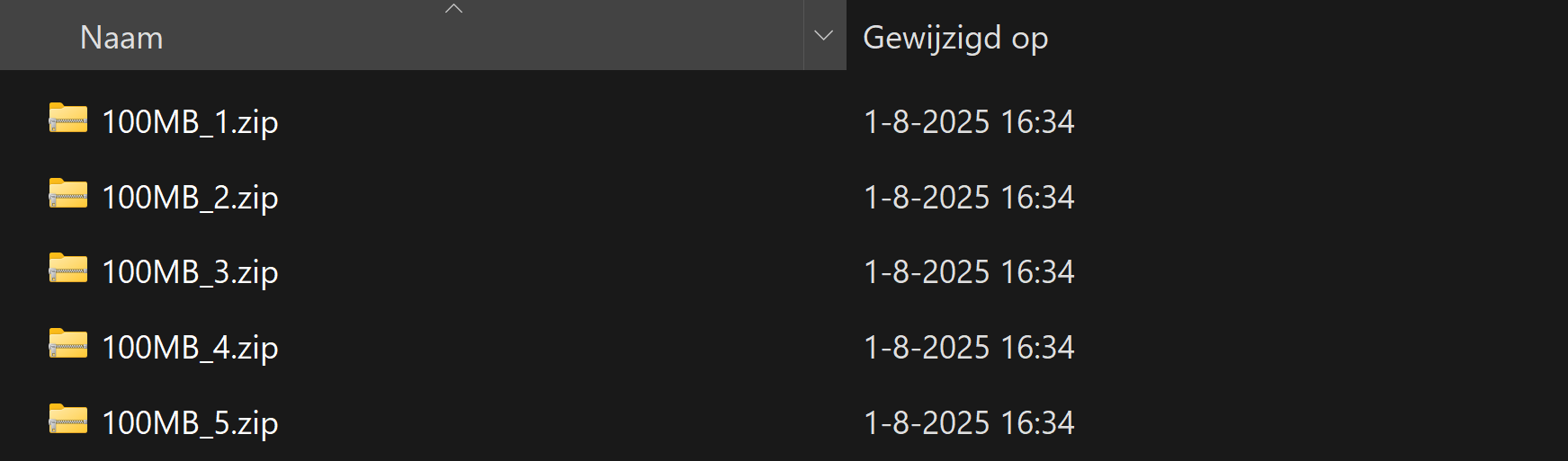

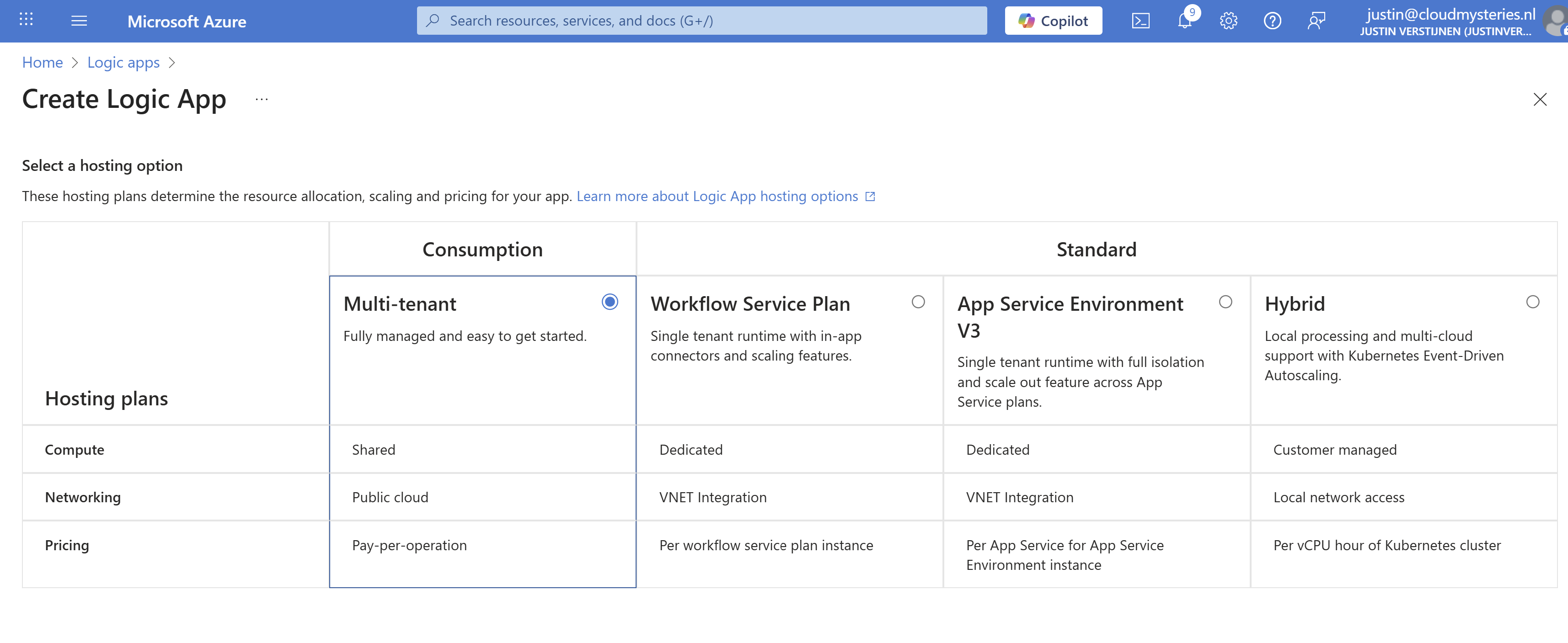

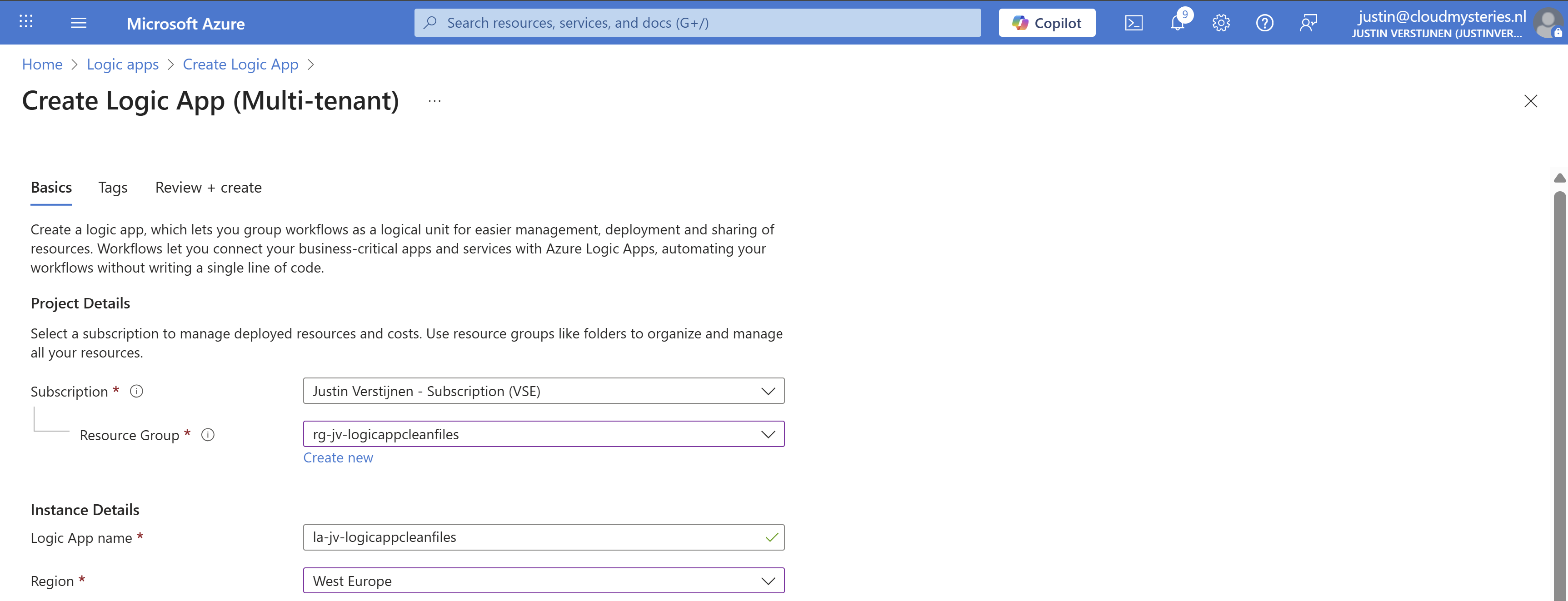

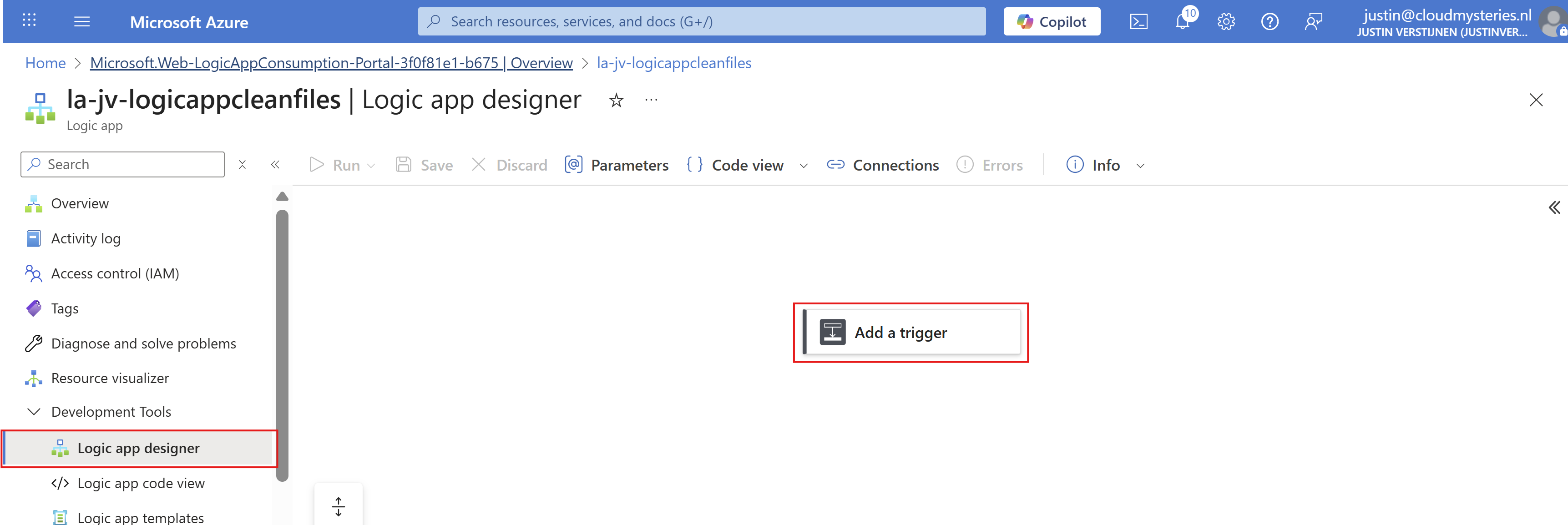

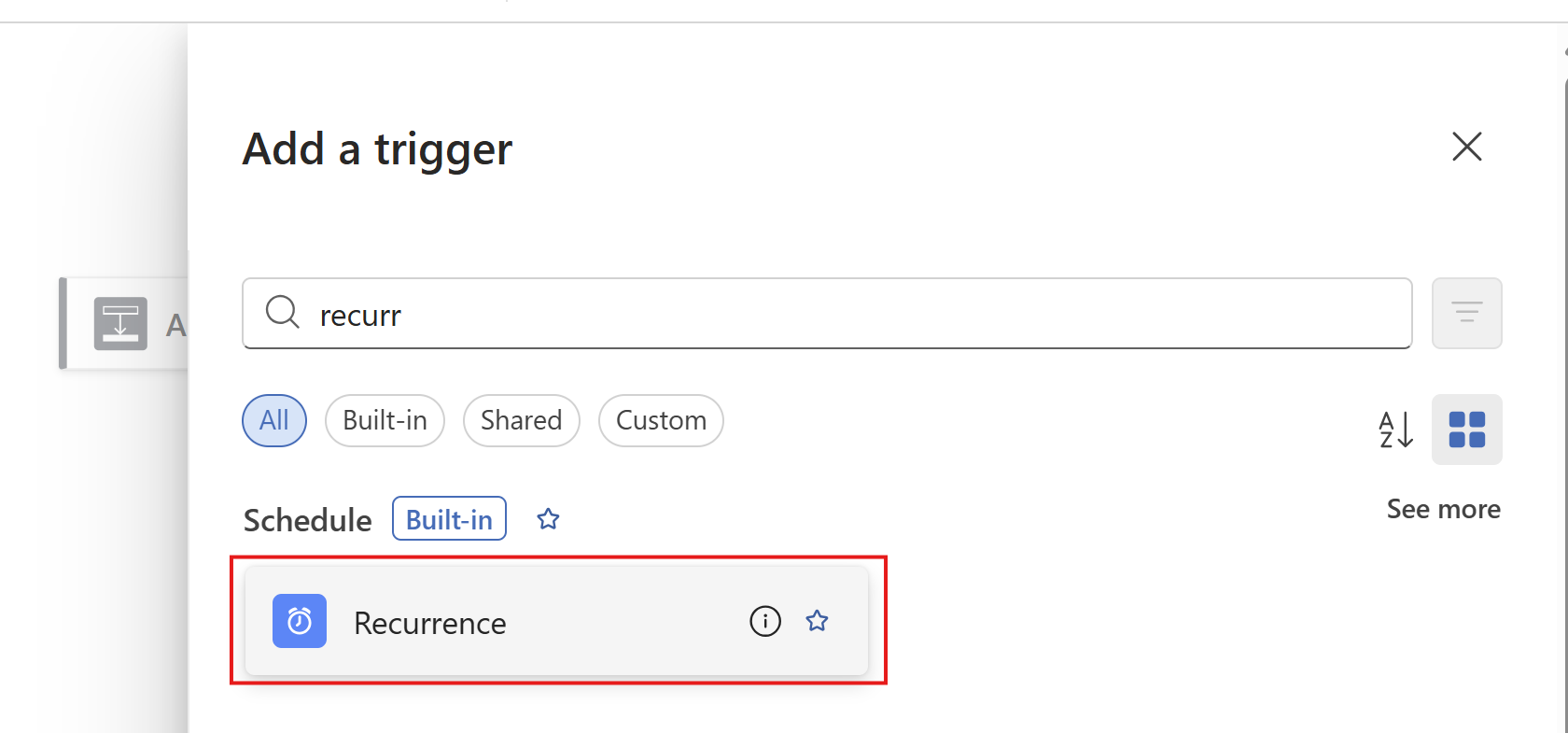

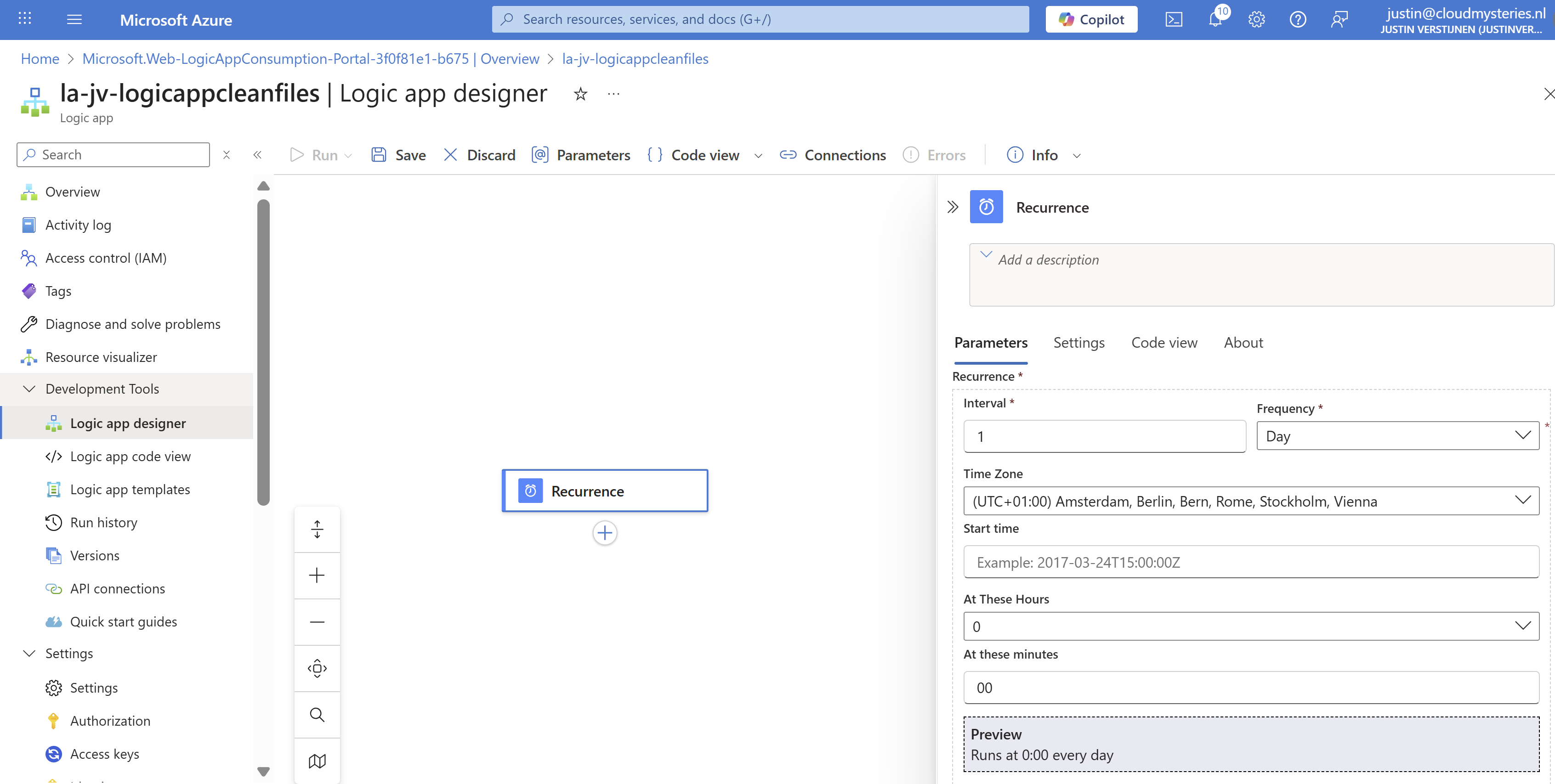

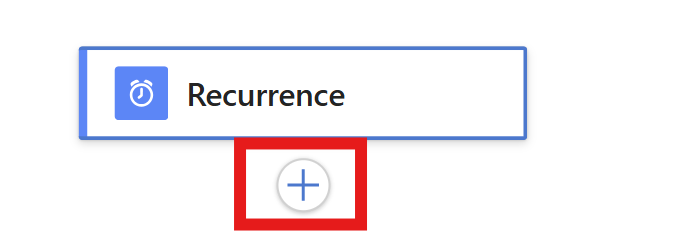

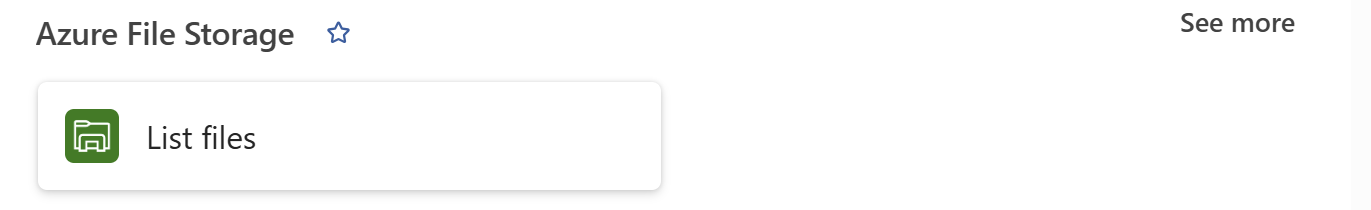

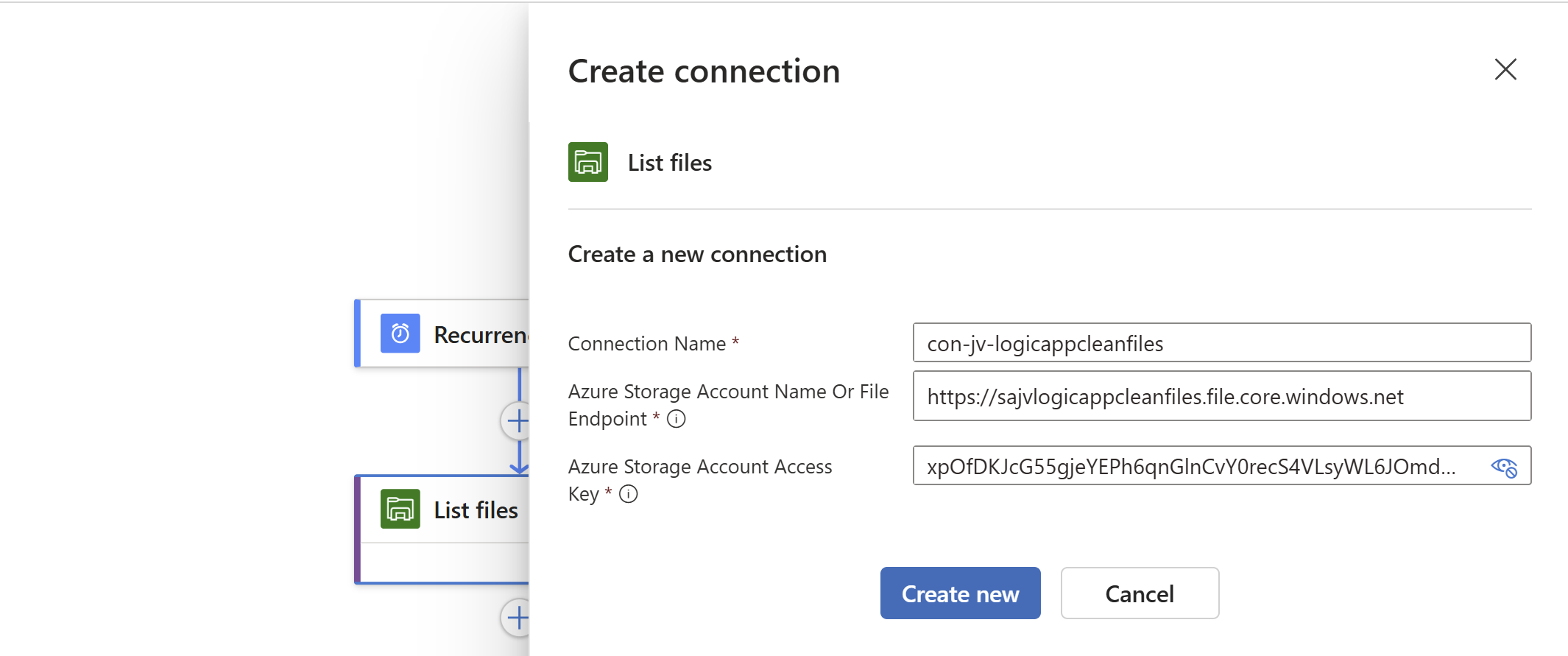

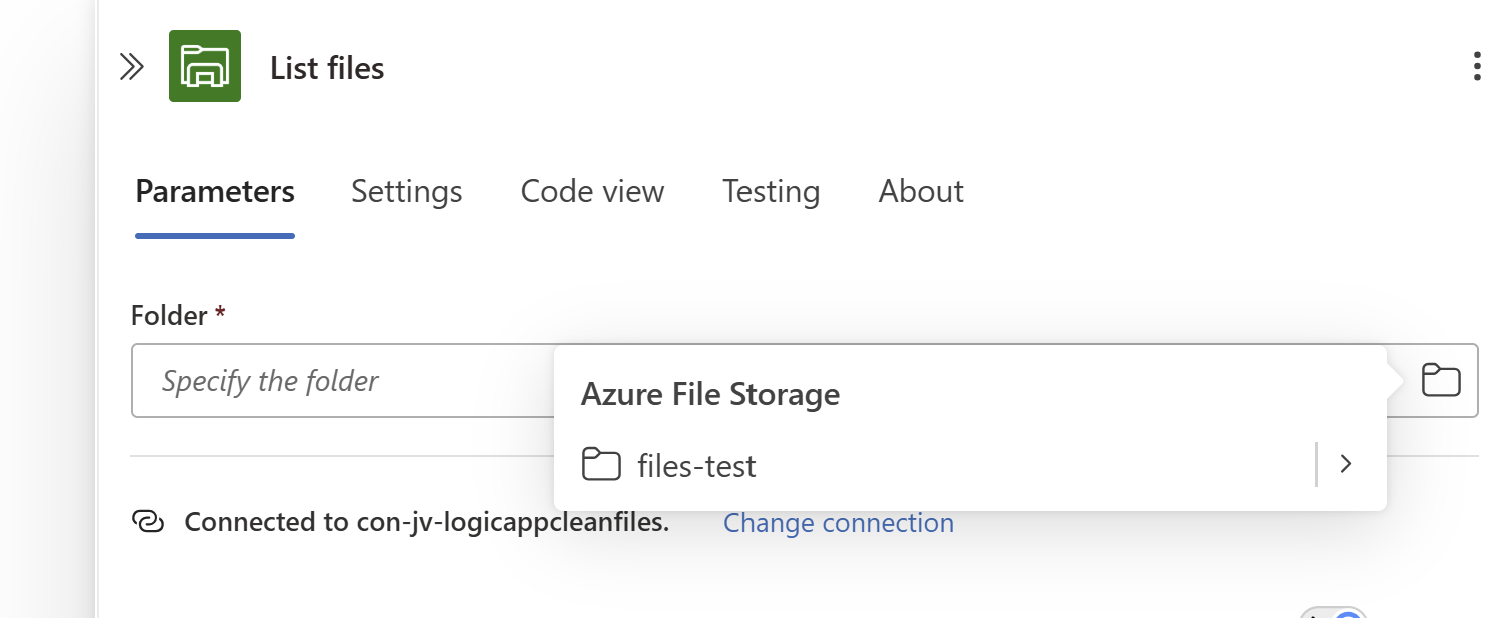

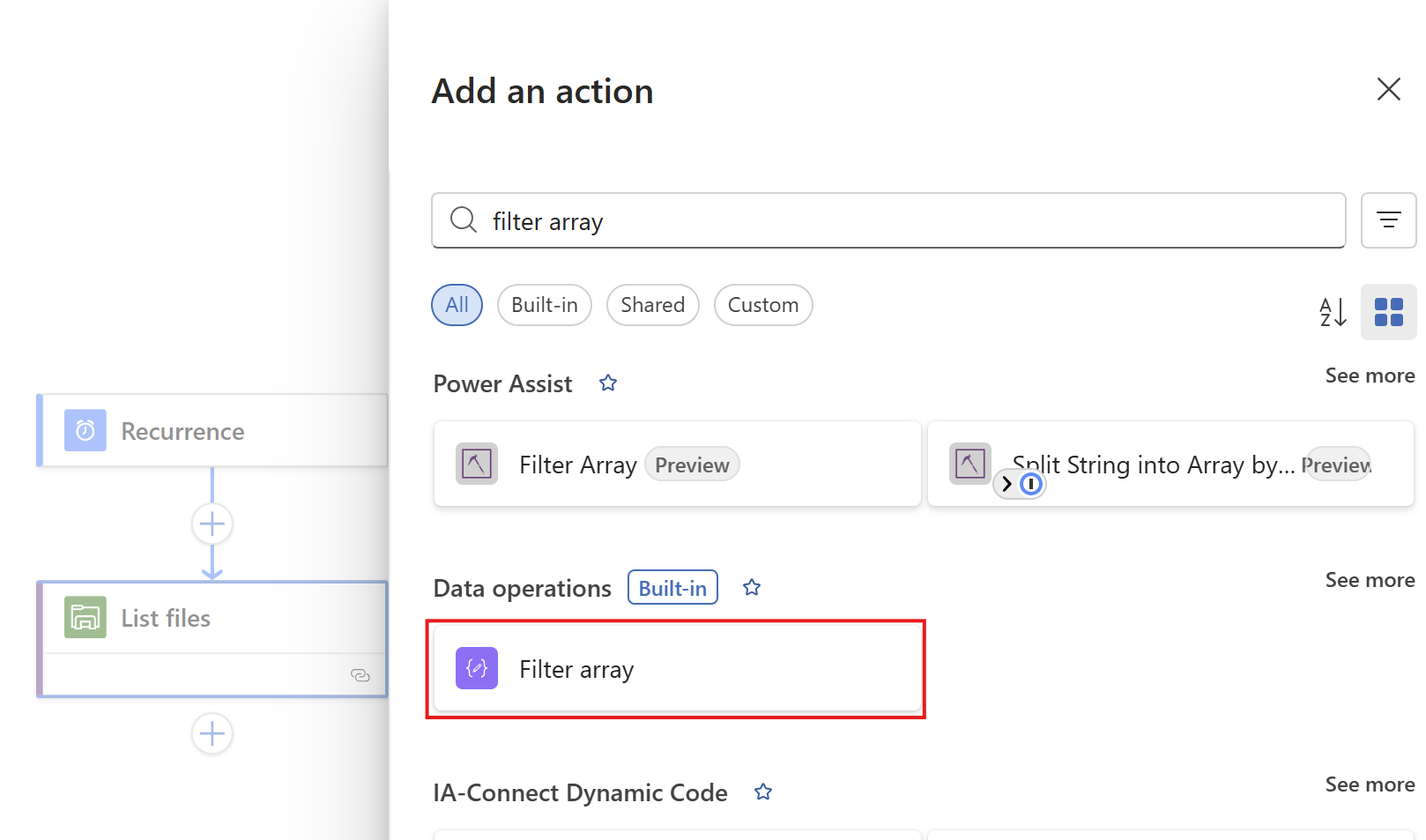

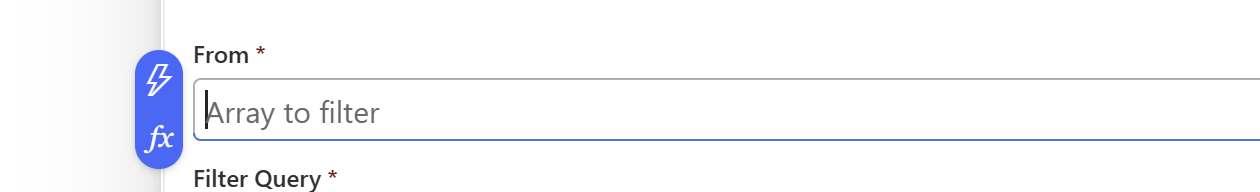

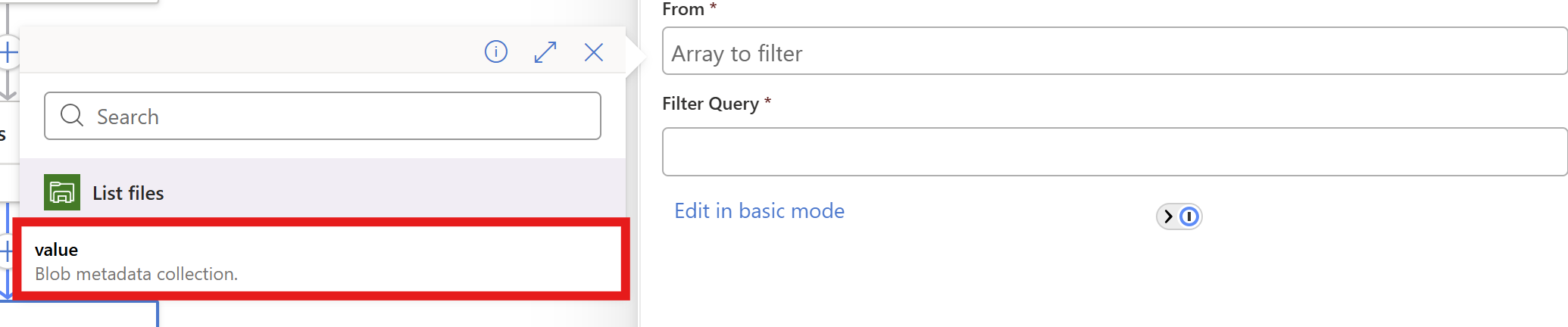

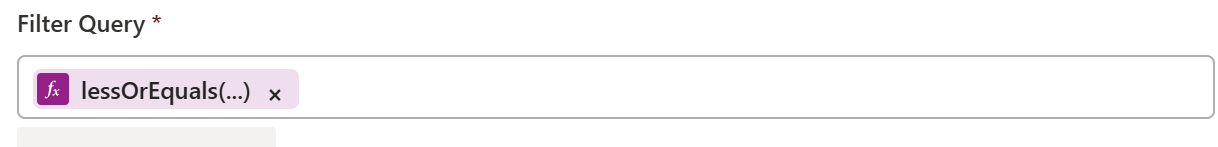

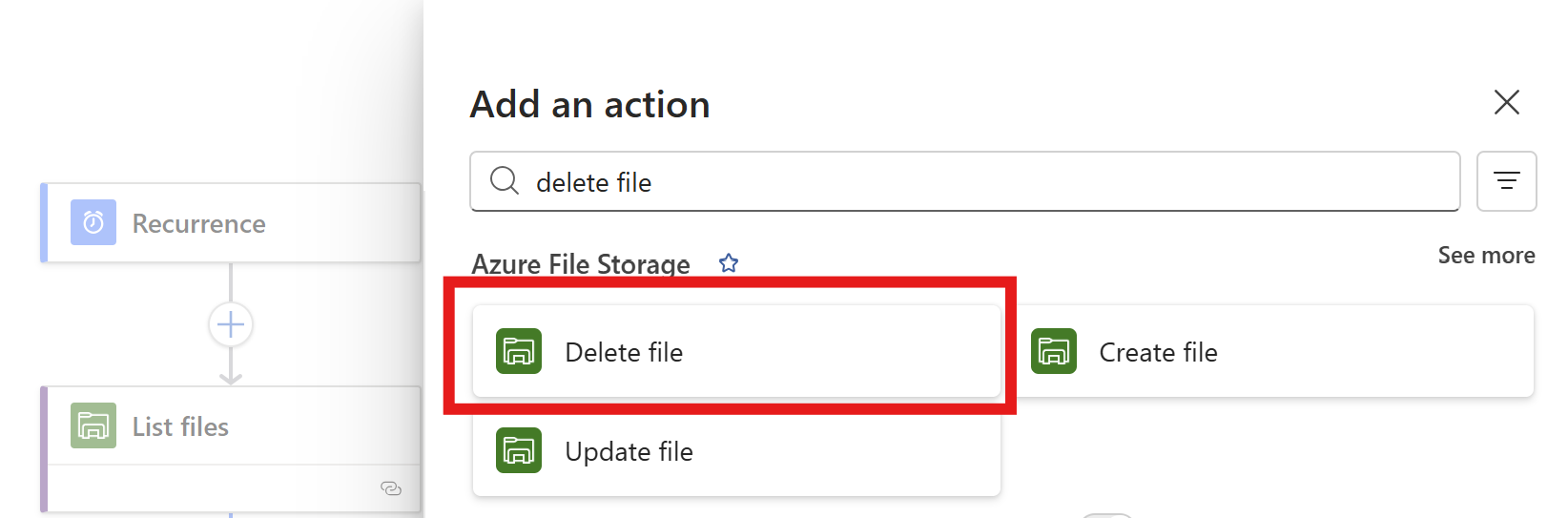

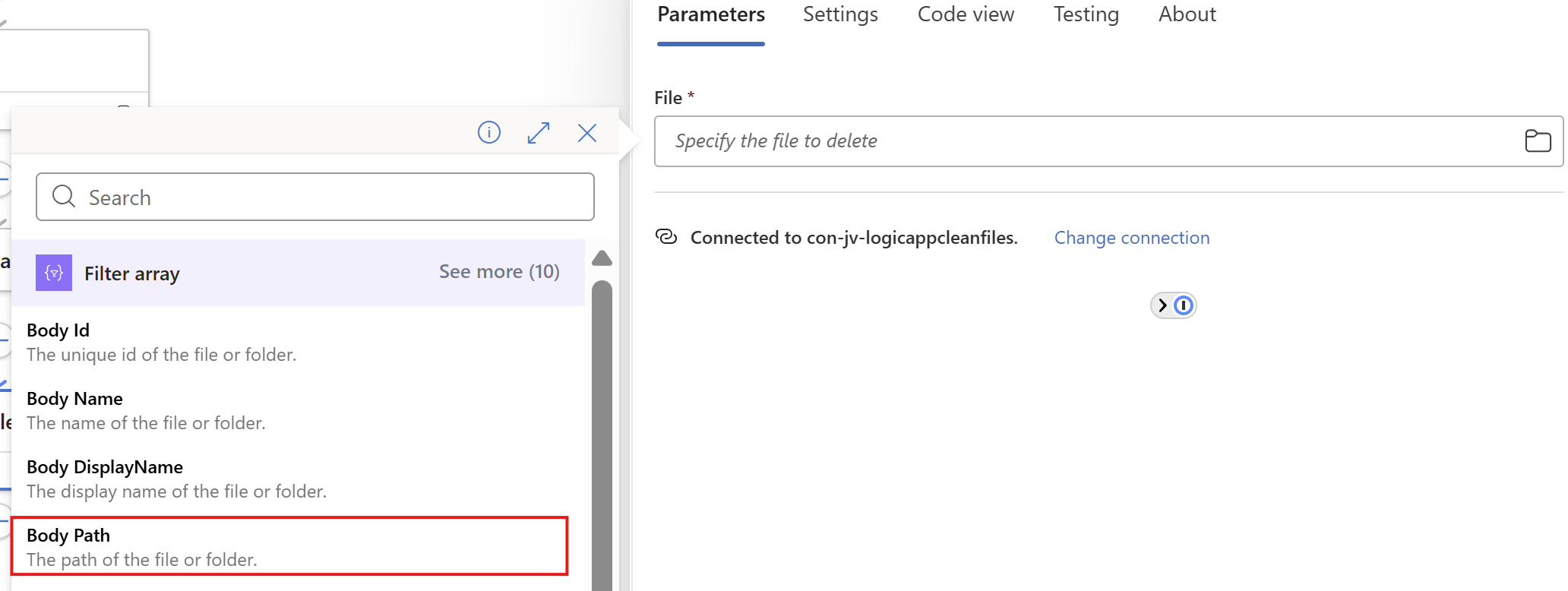

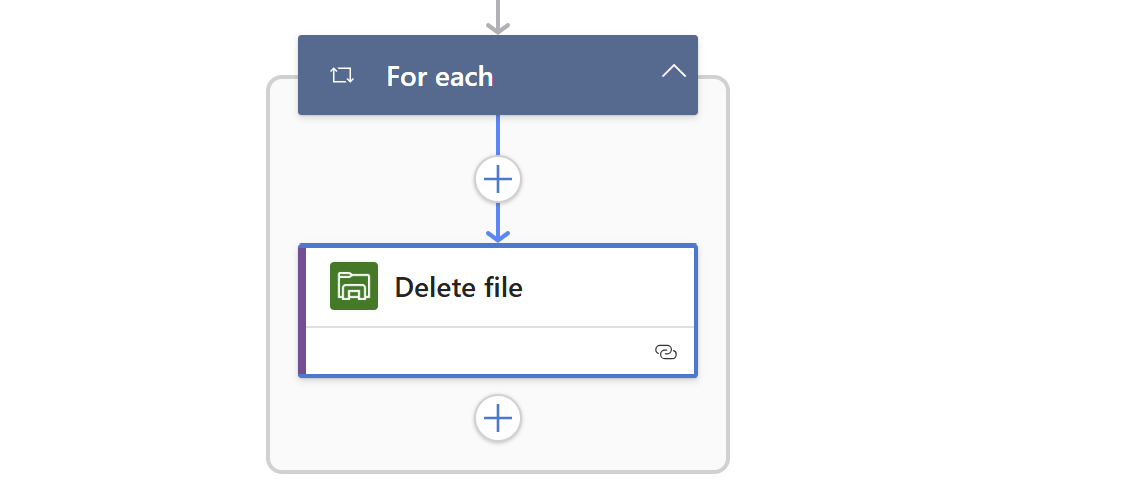

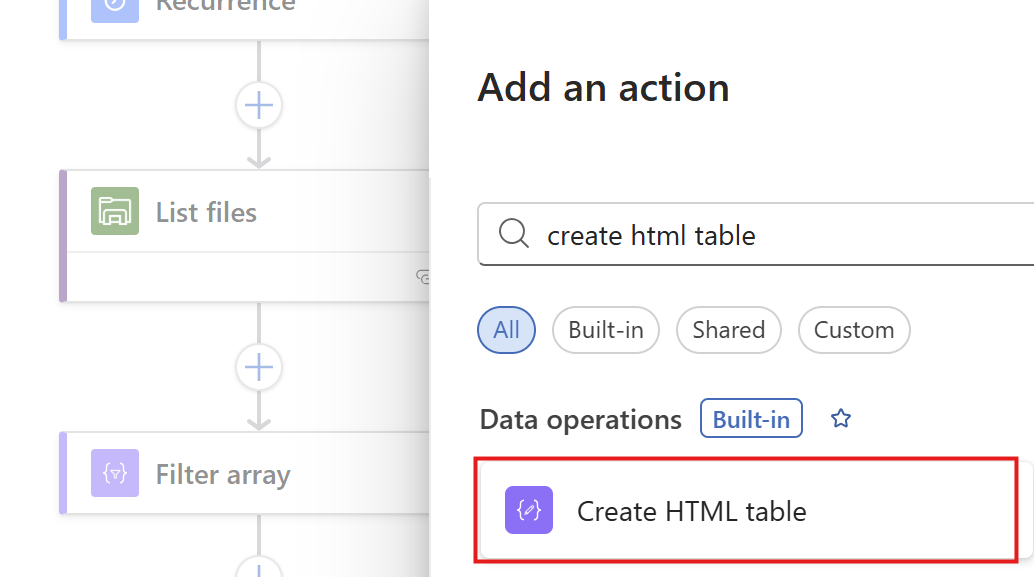

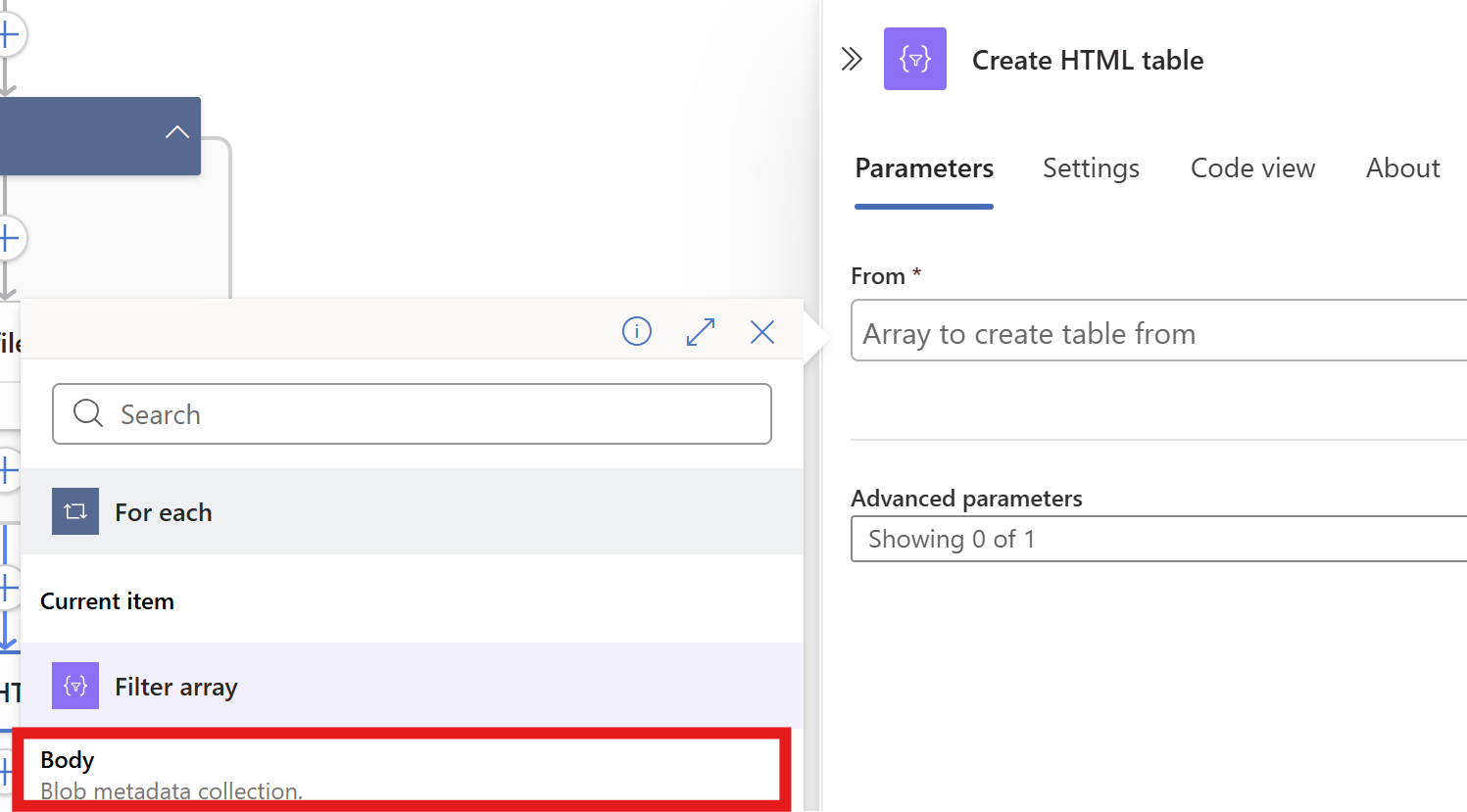

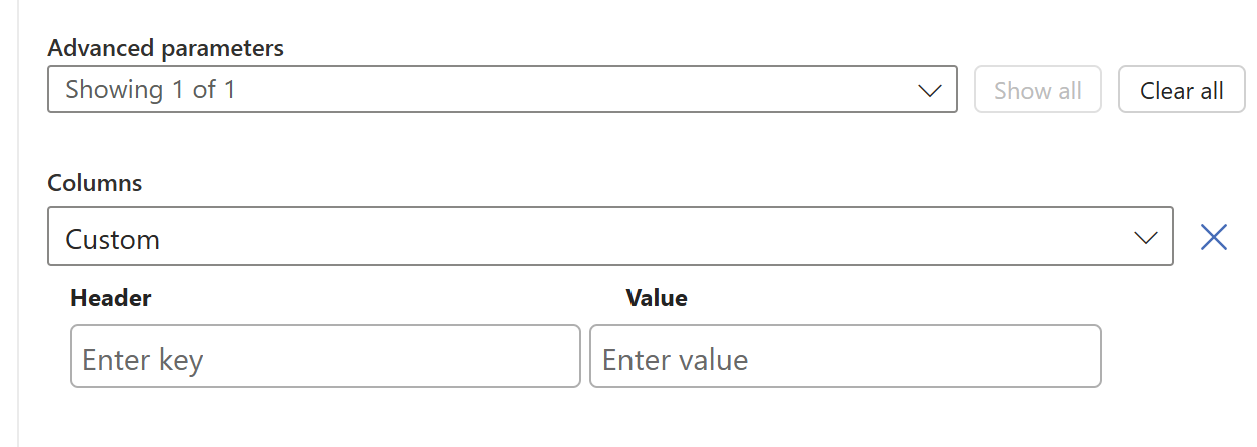

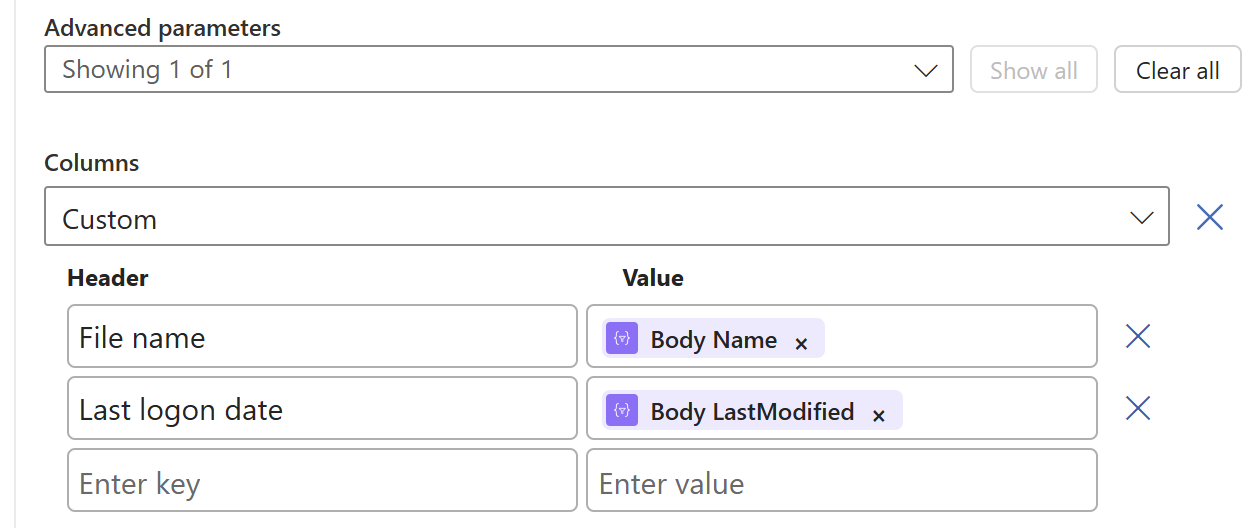

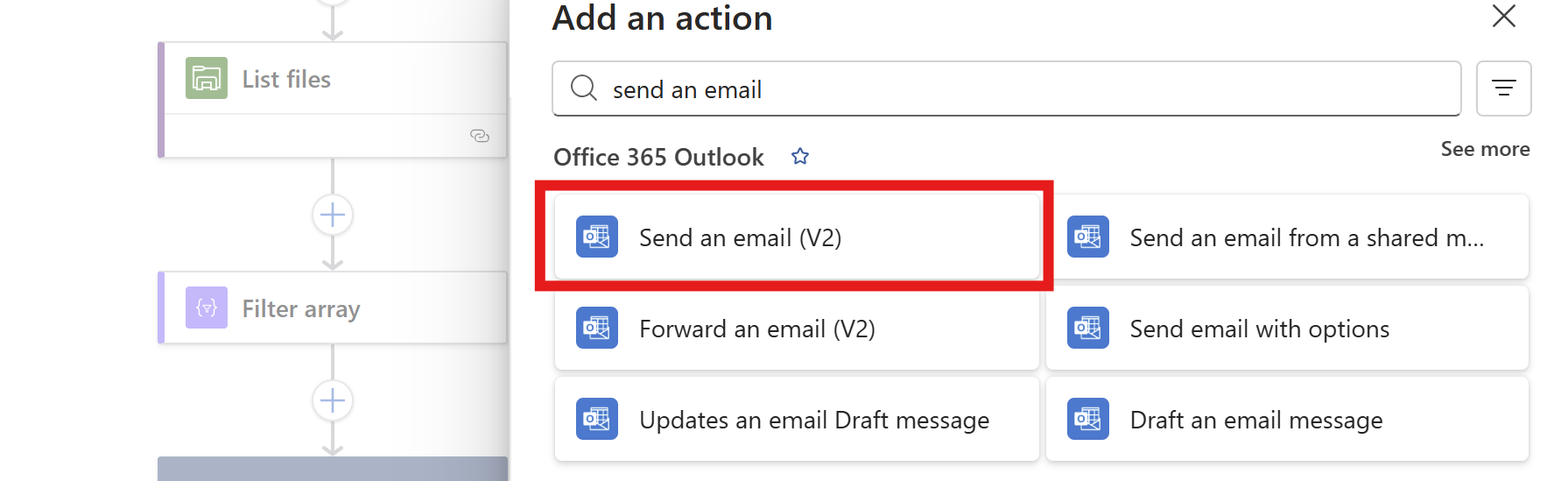

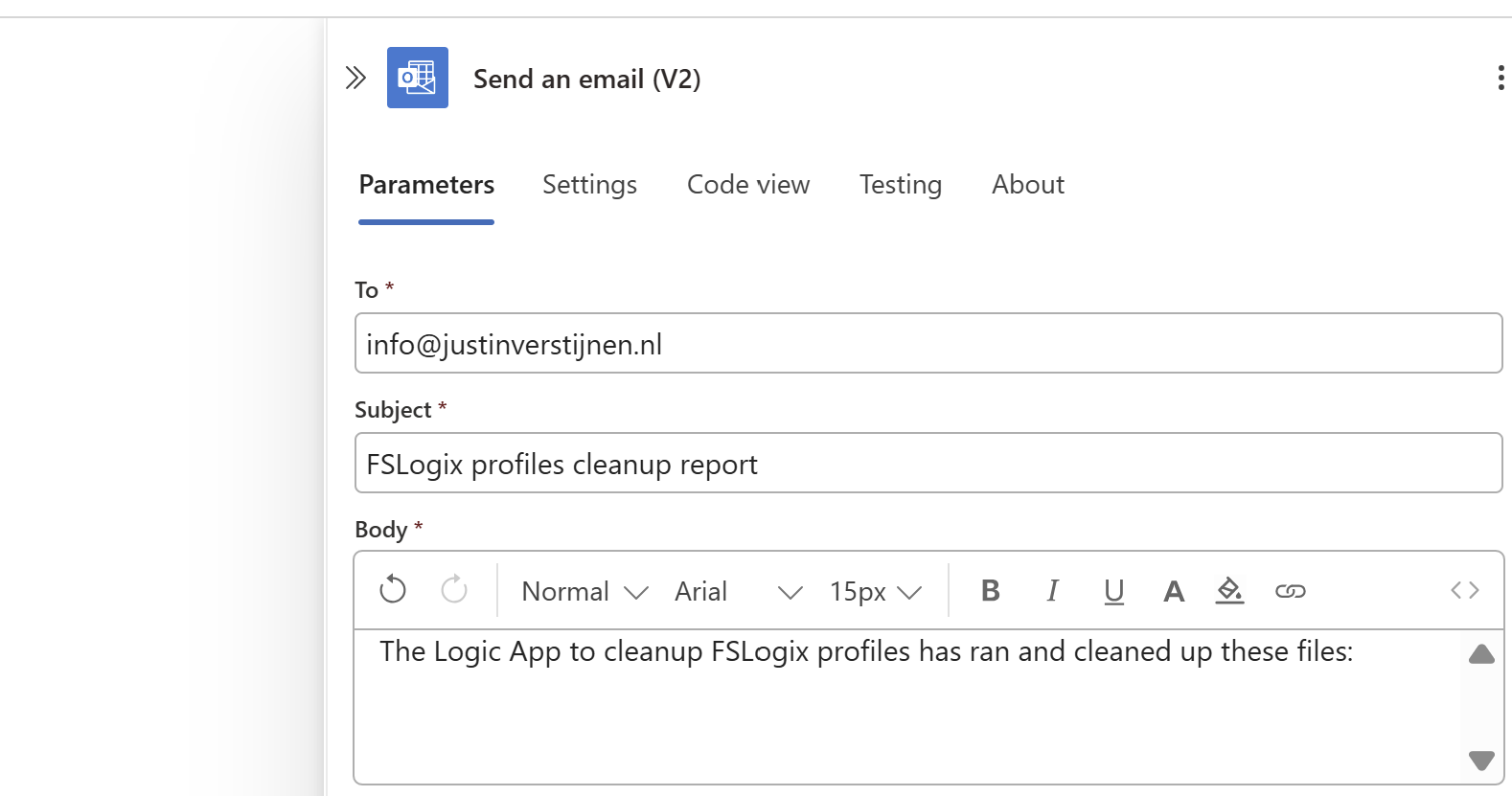

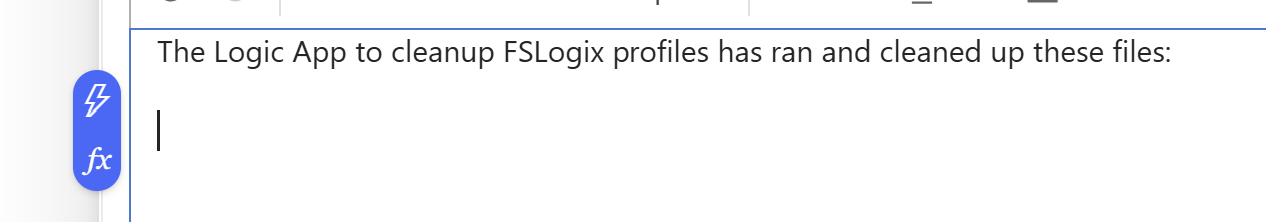

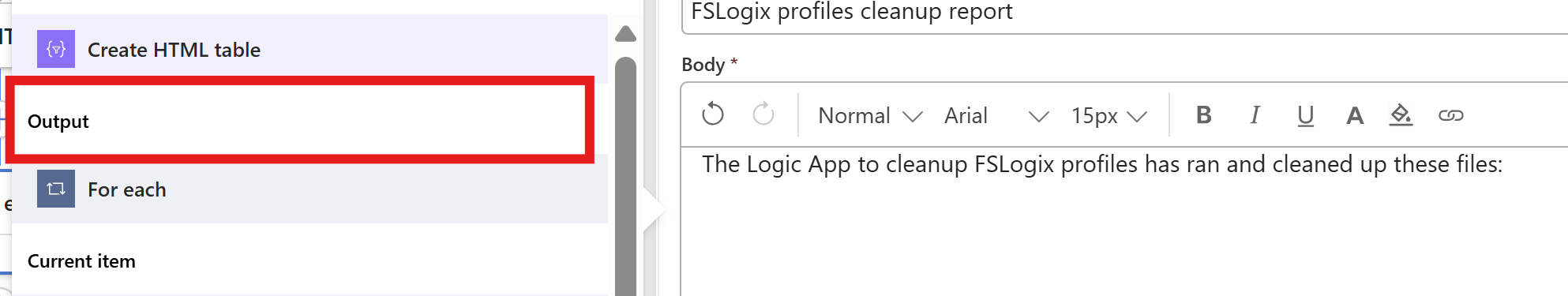

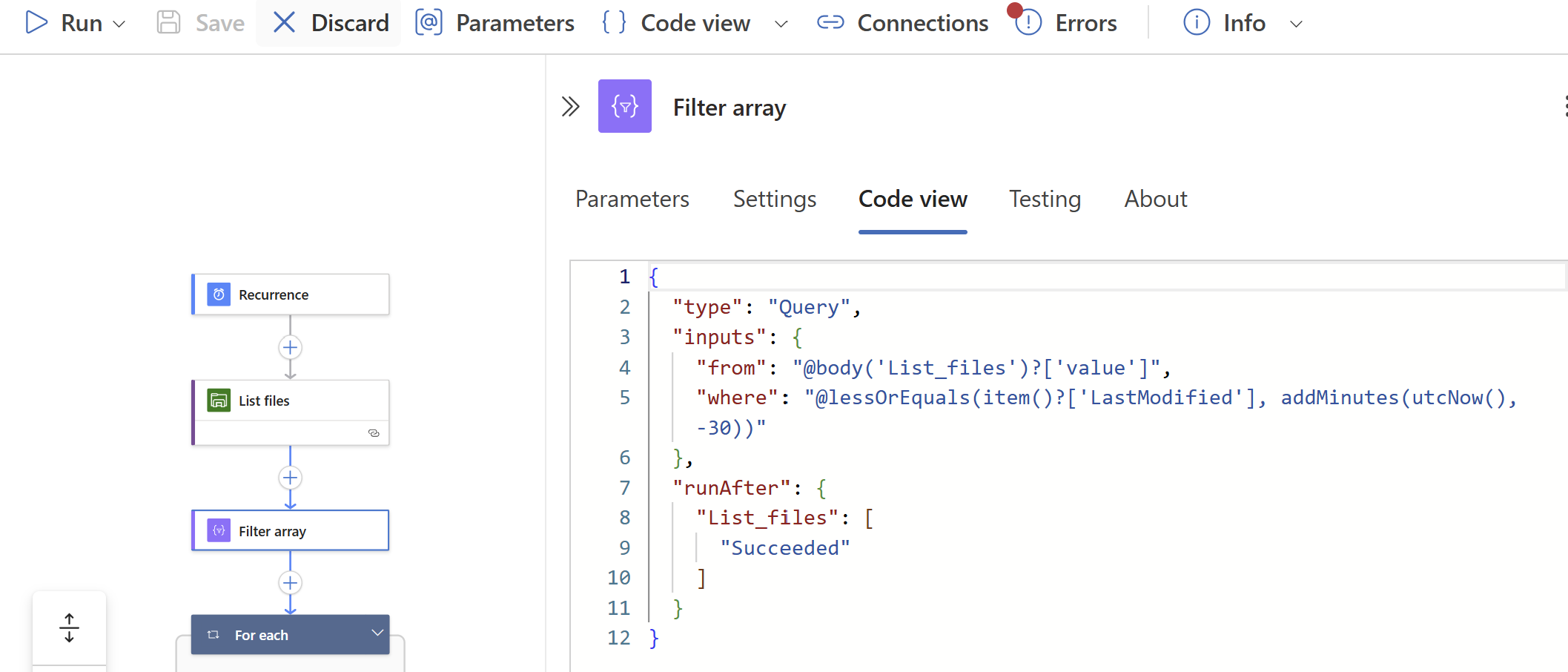

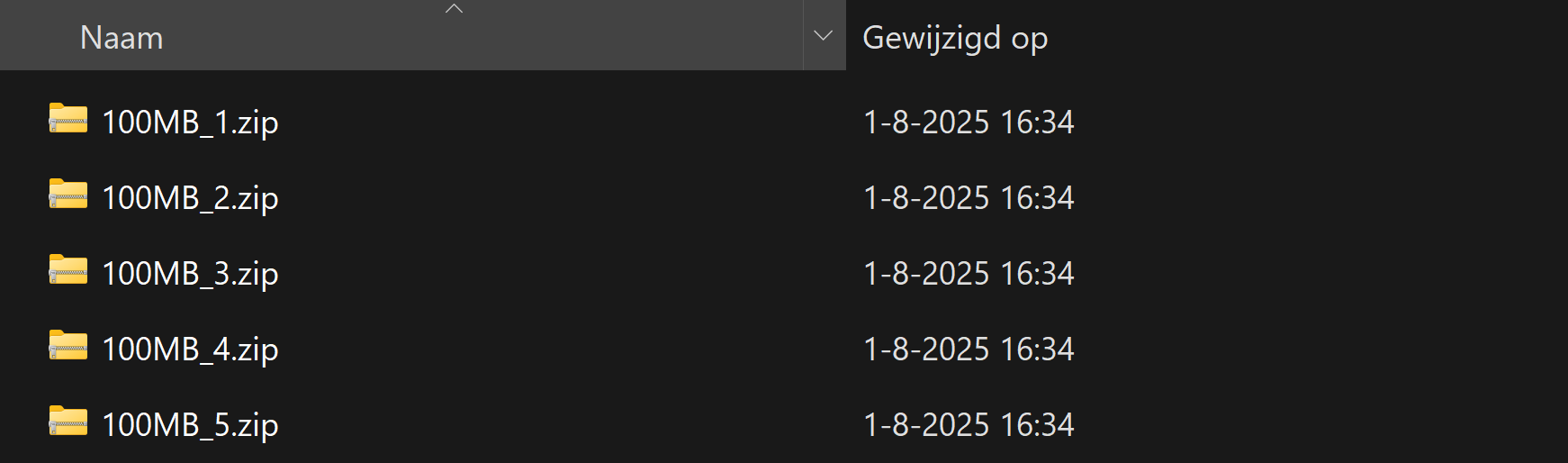

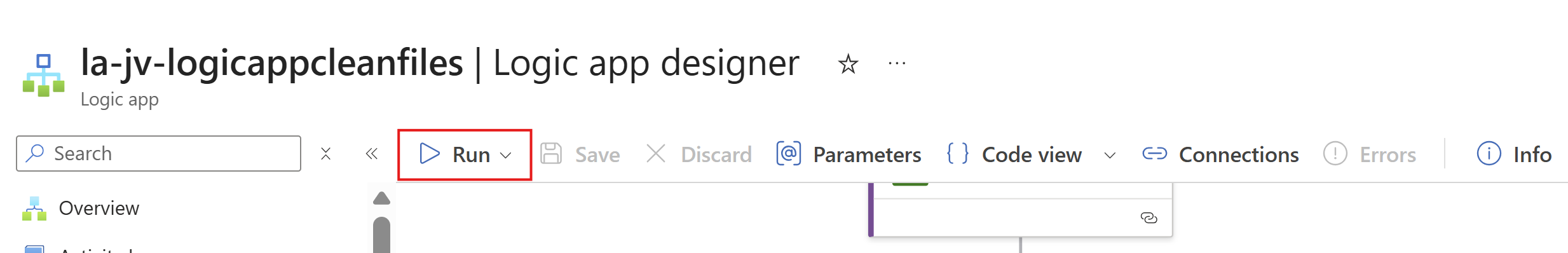

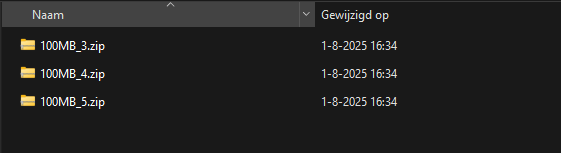

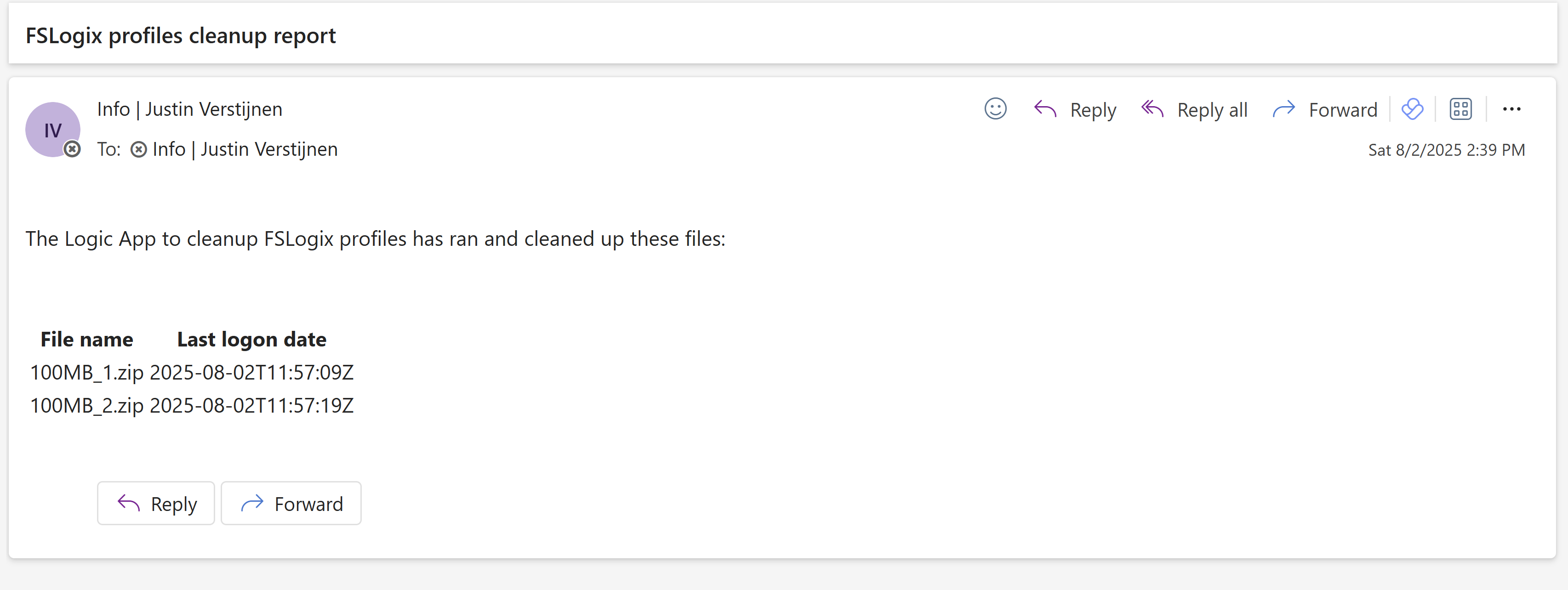

- Clean up old FSLogix profiles with Logic Apps

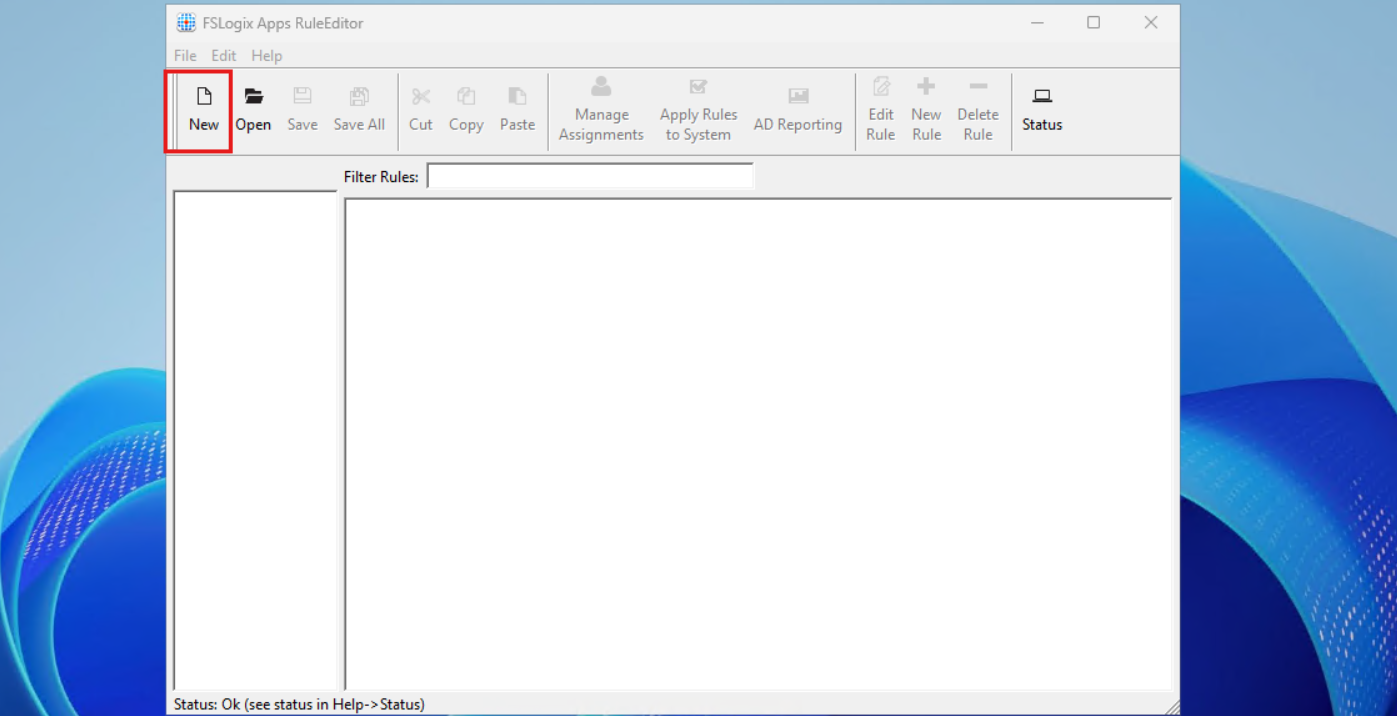

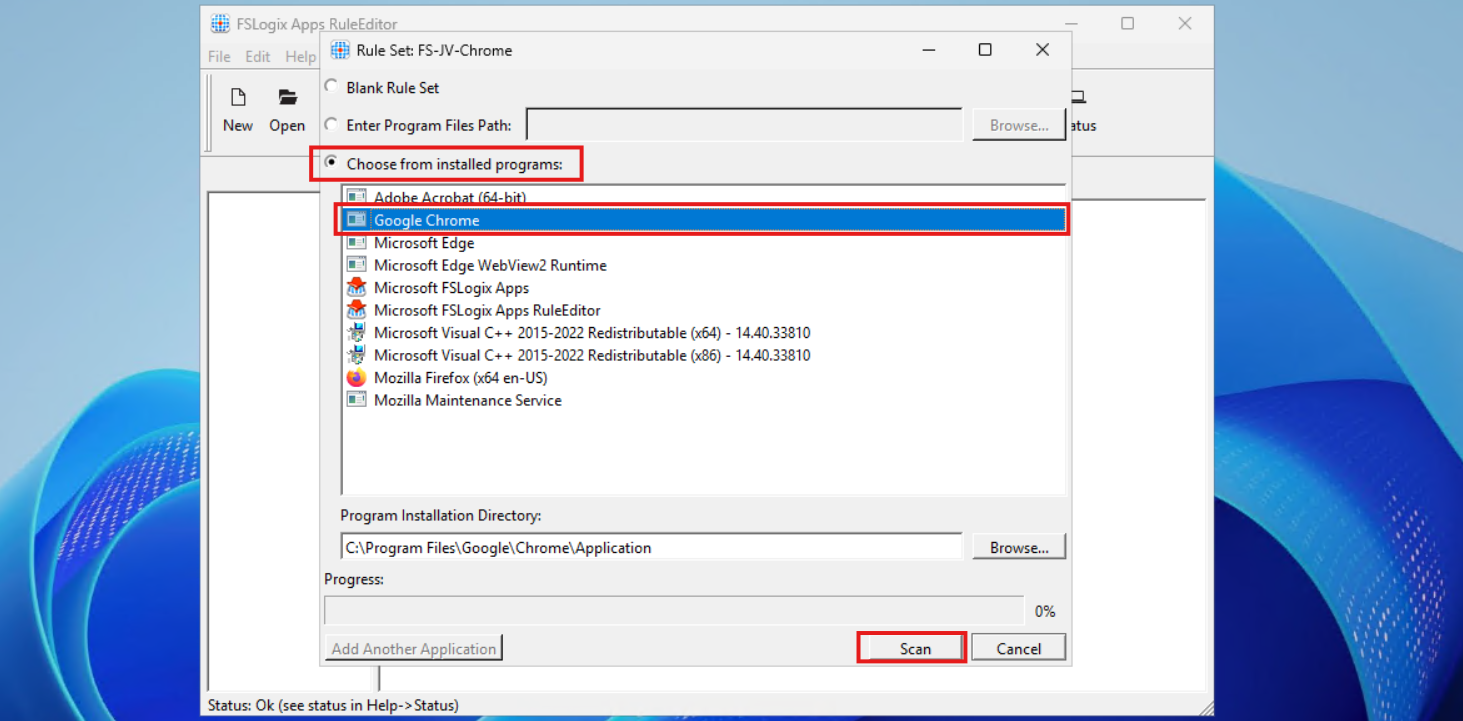

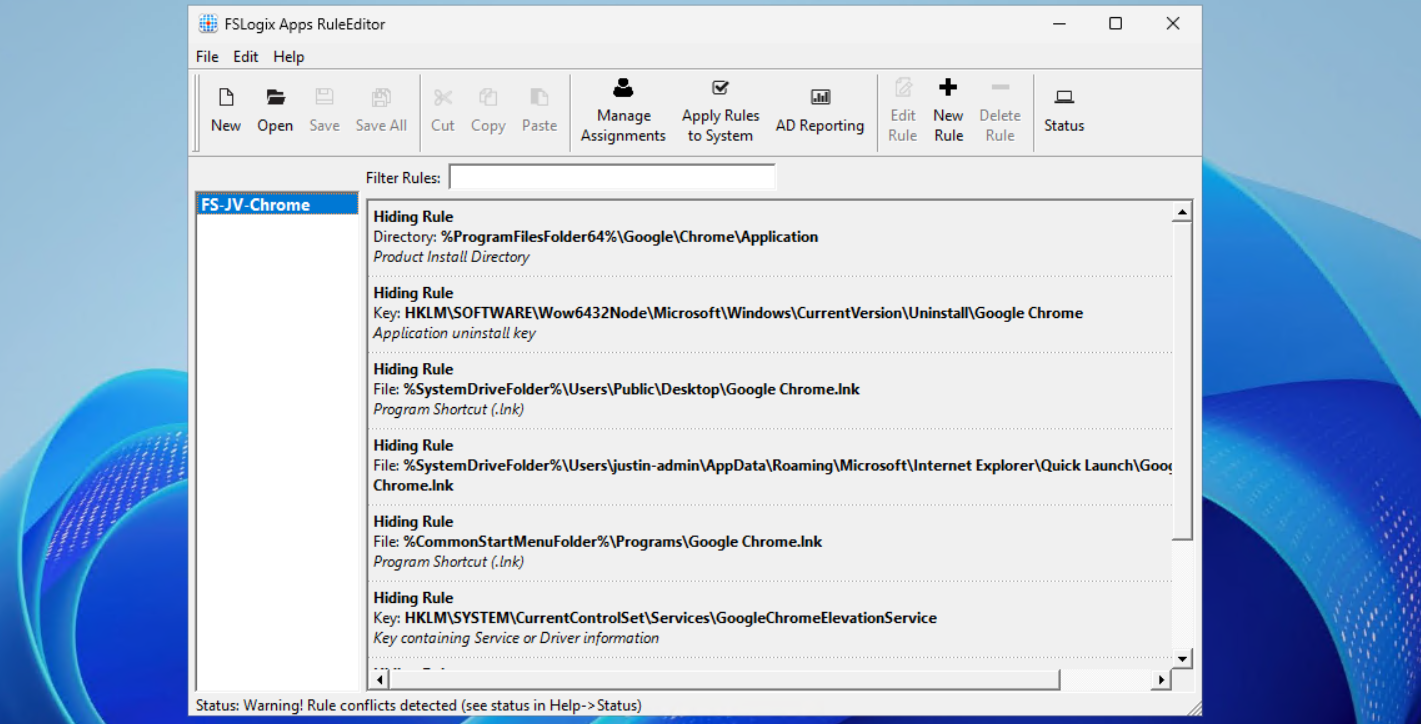

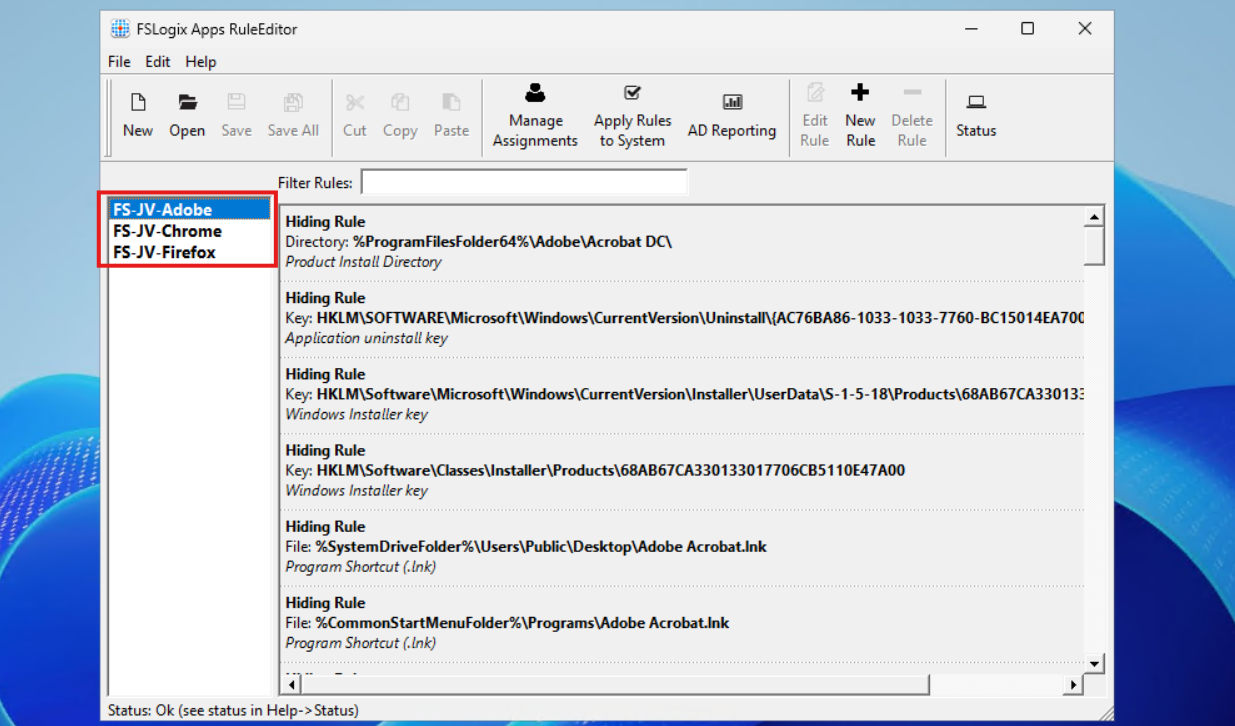

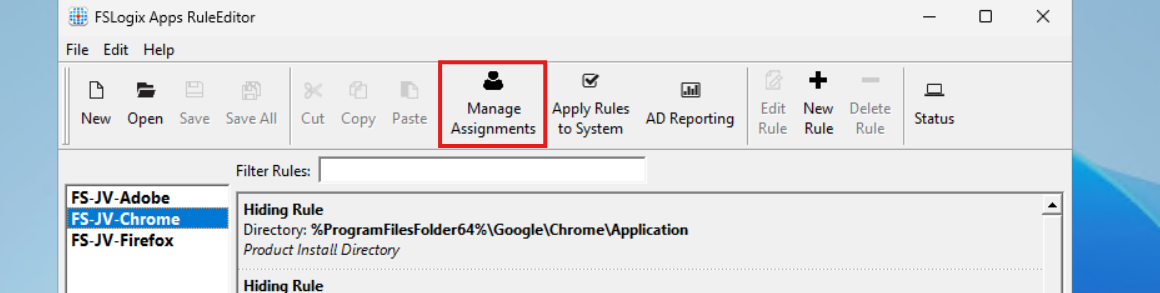

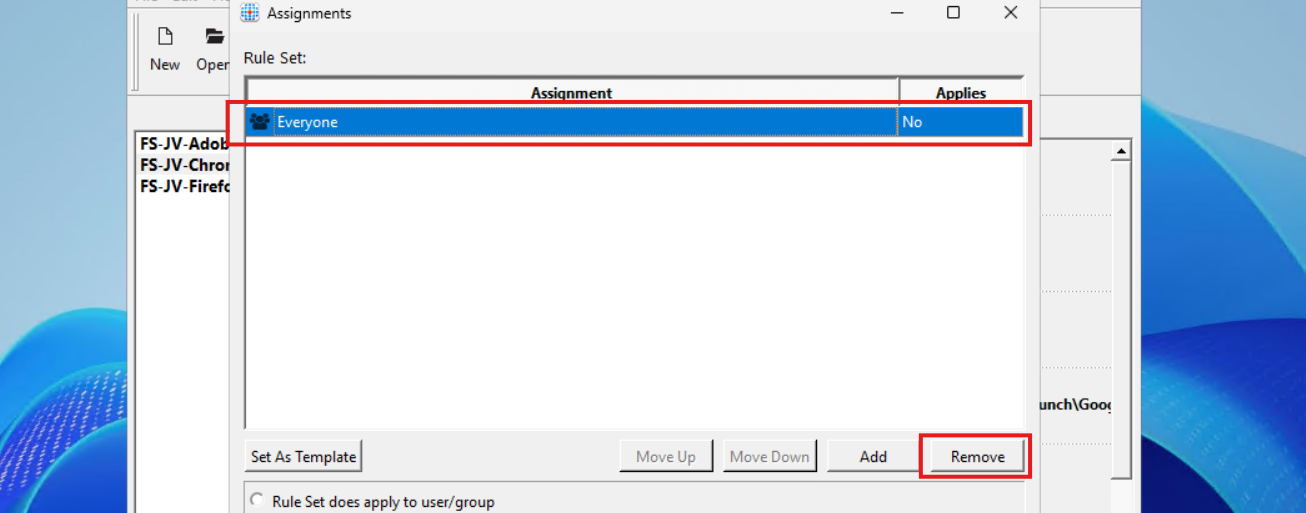

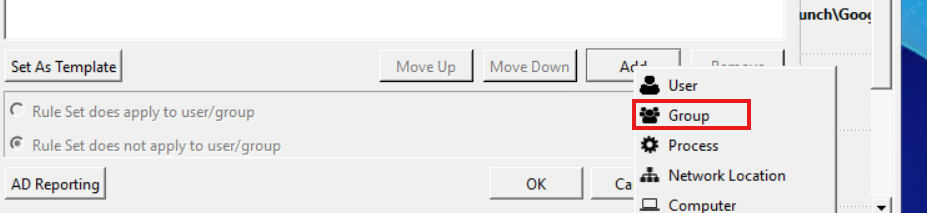

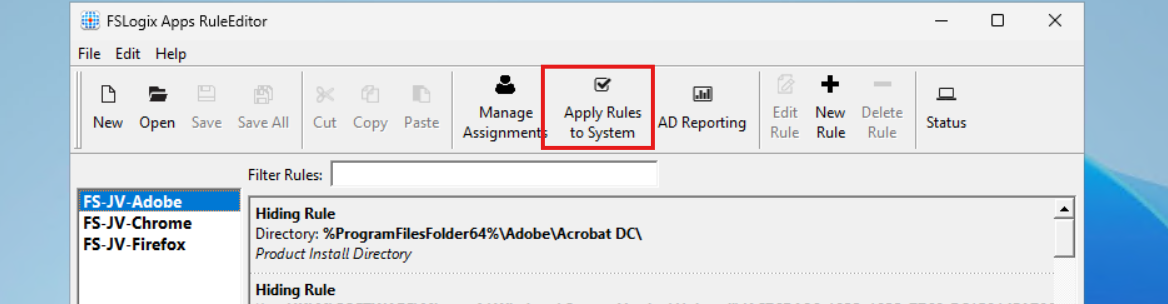

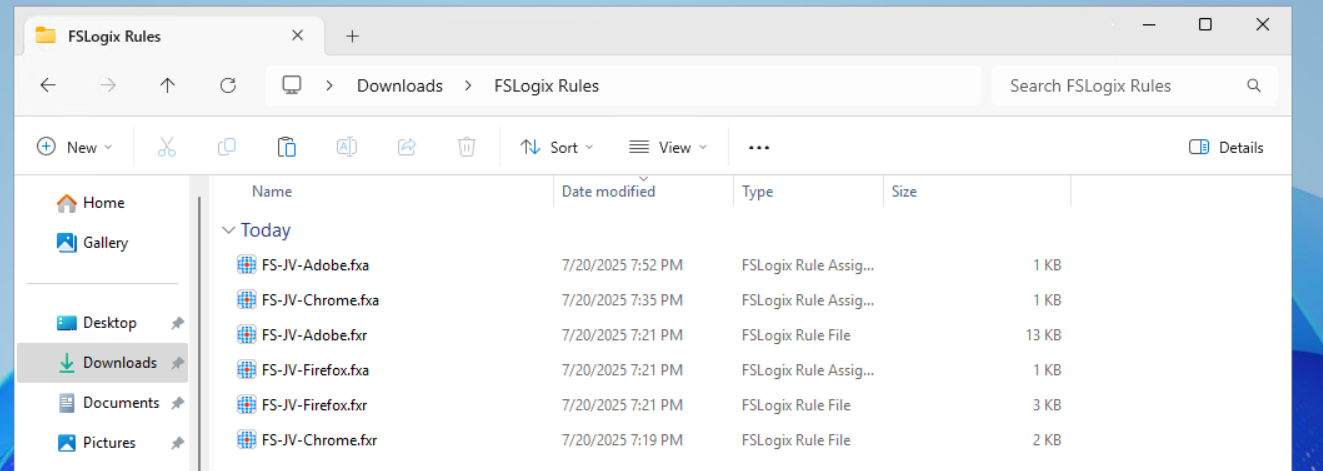

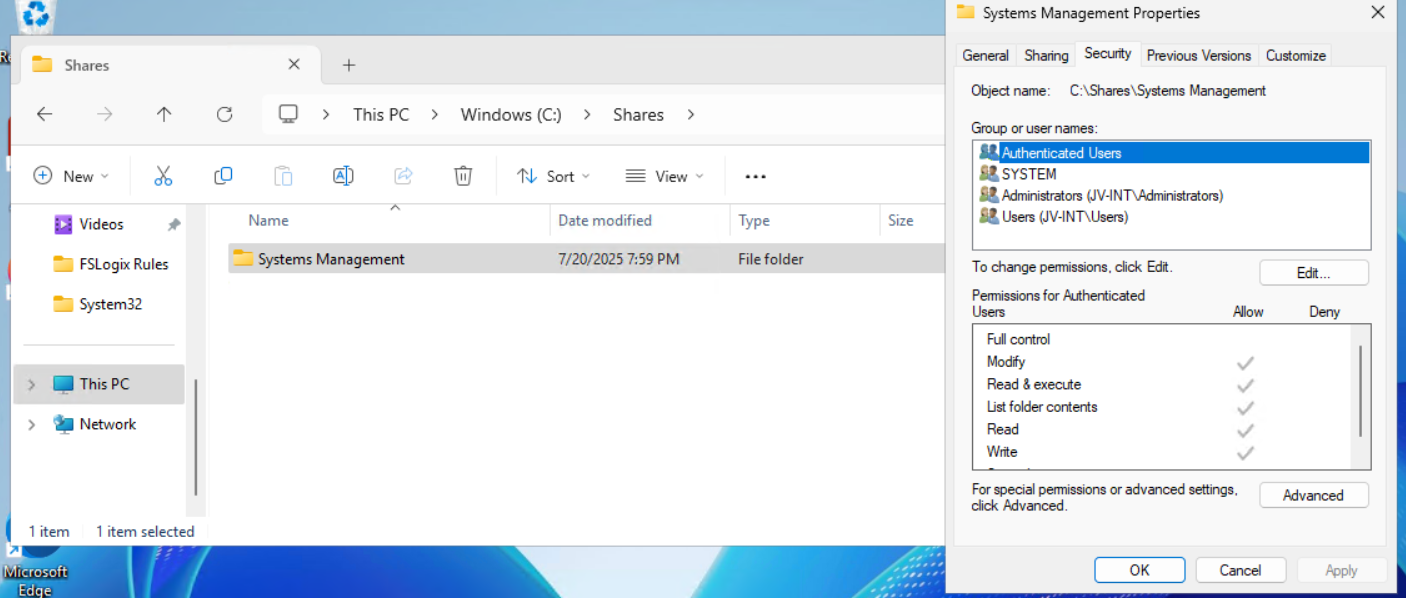

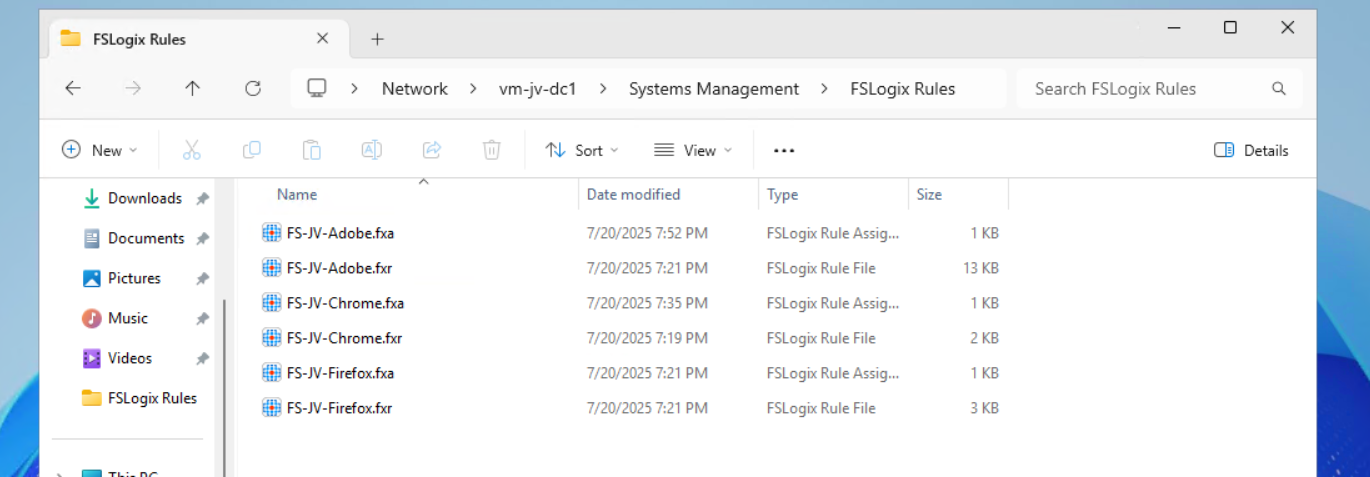

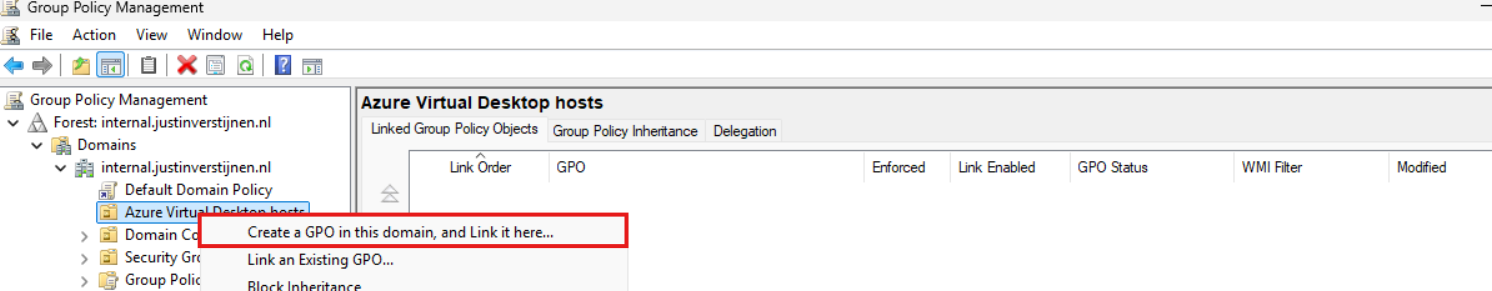

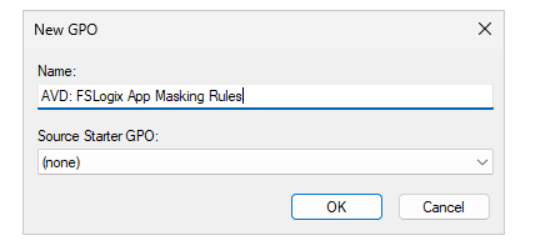

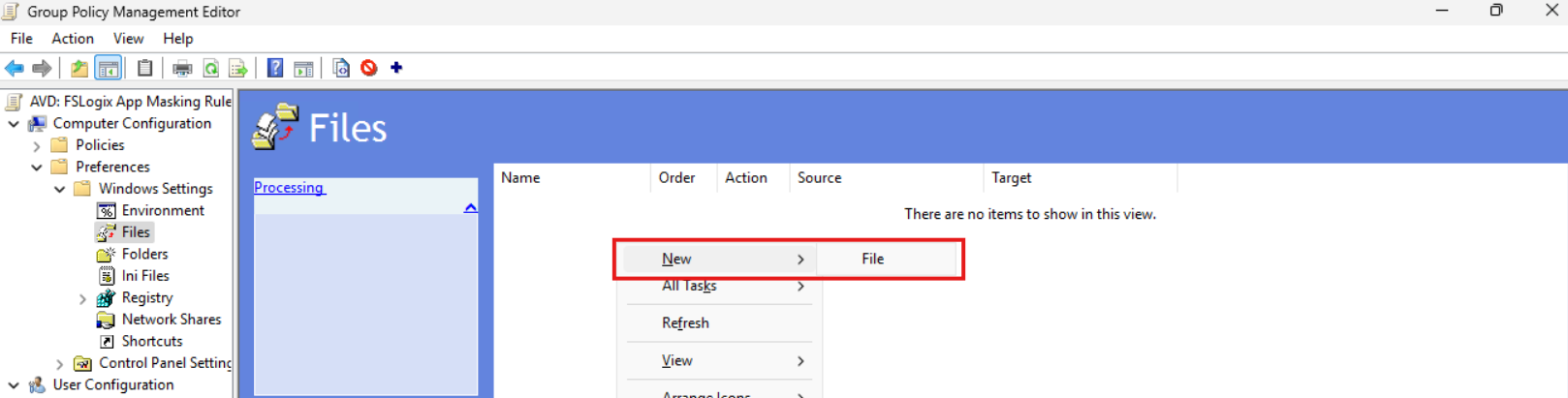

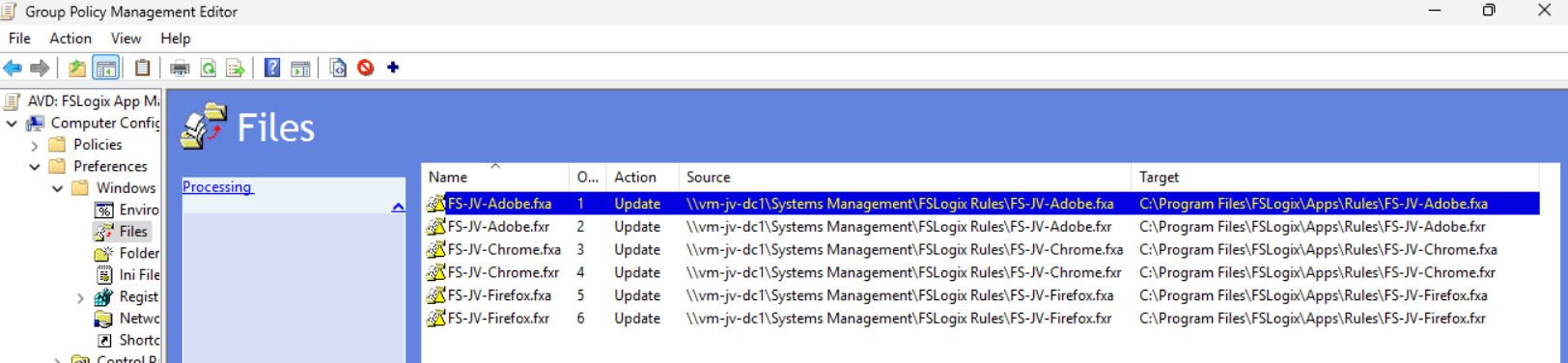

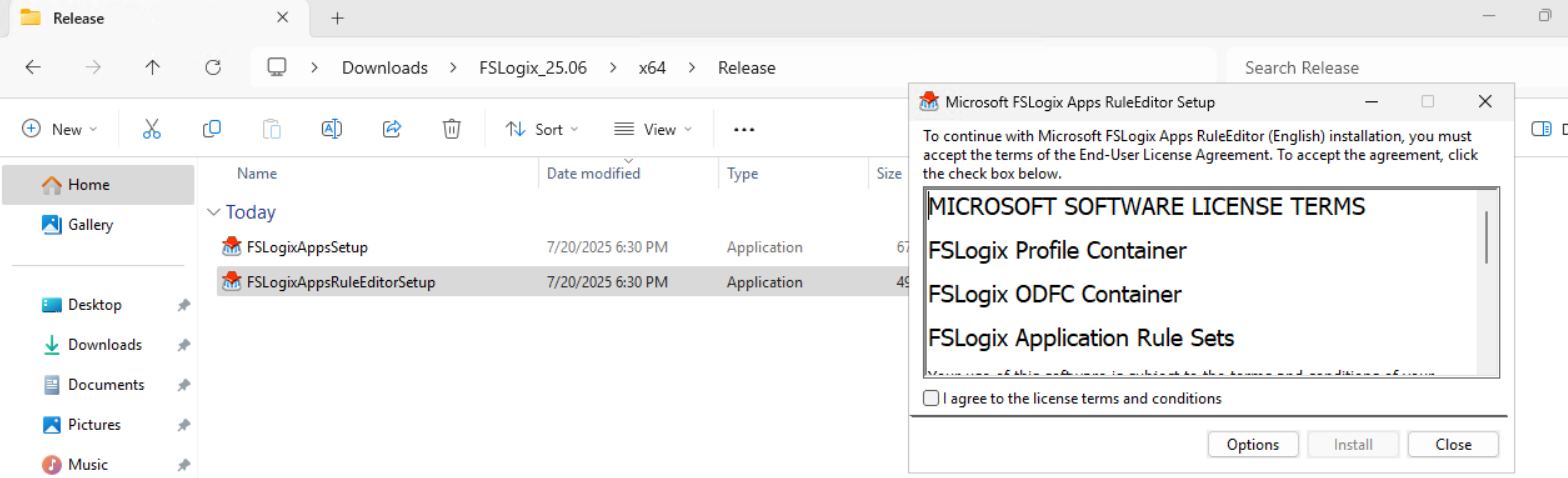

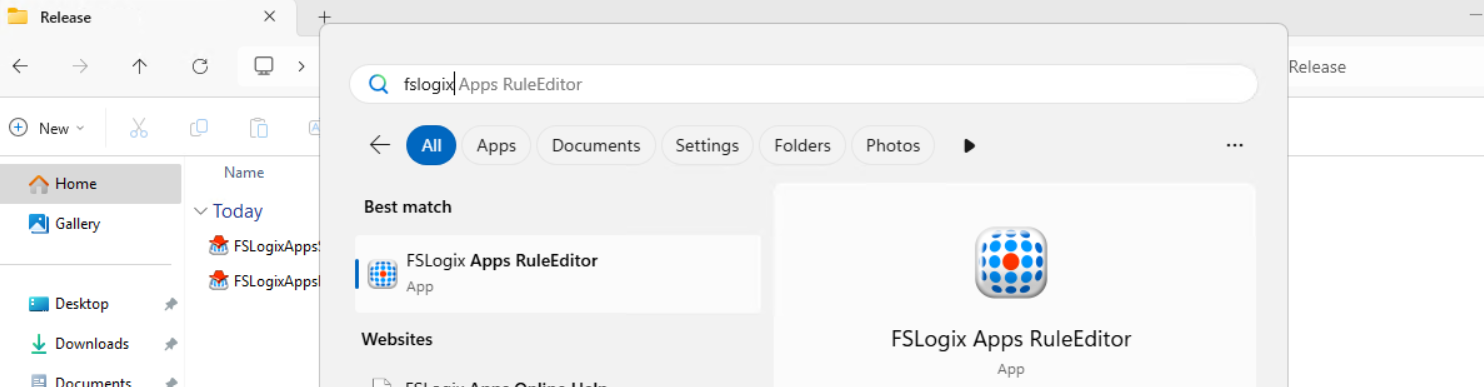

- Using FSLogix App Masking to hide applications on Virtual Desktops

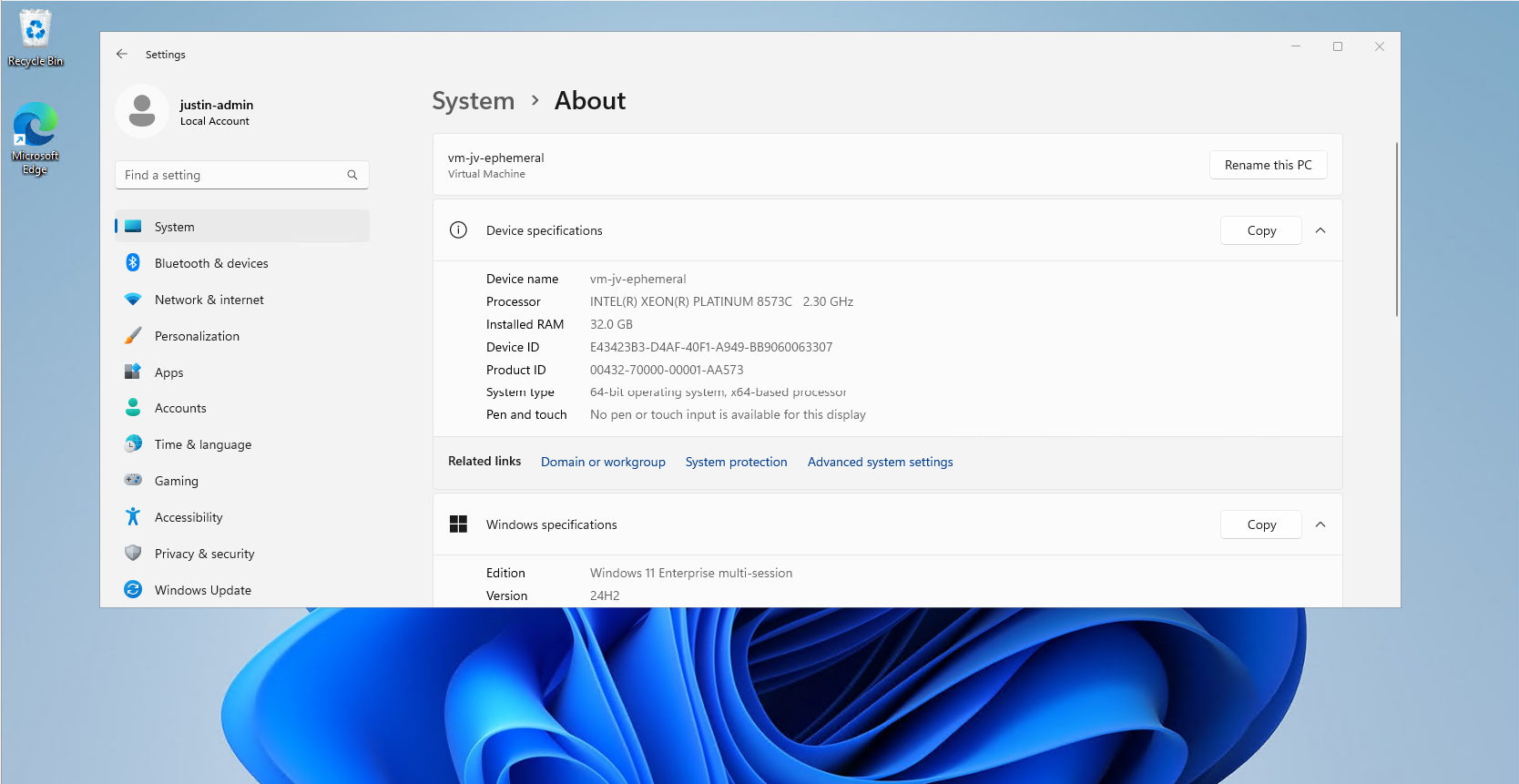

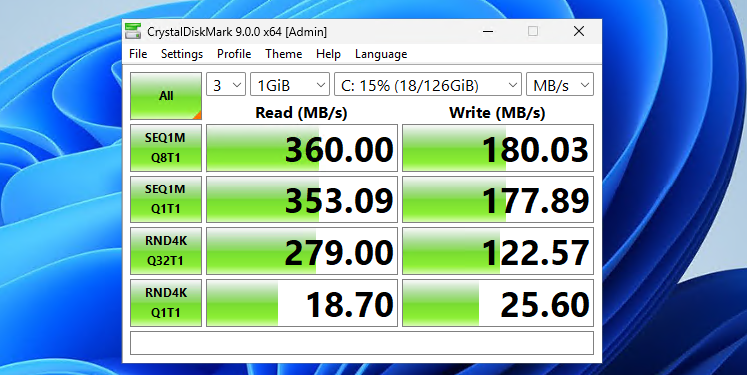

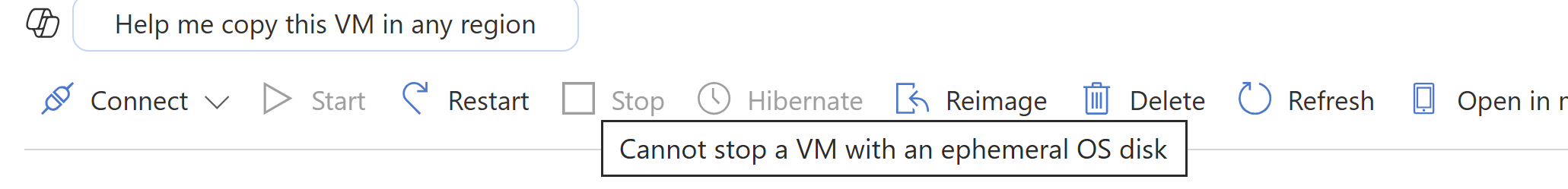

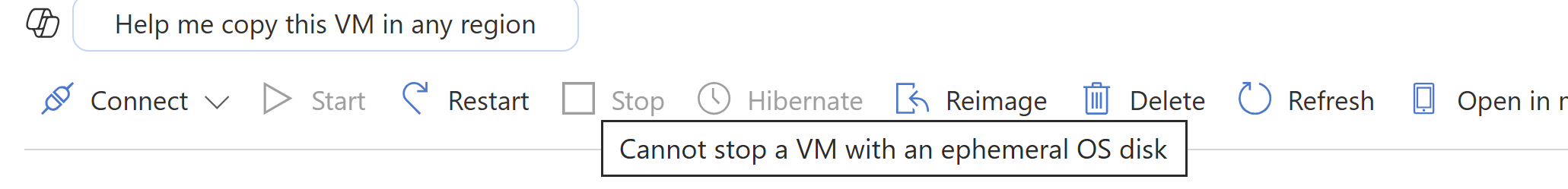

- Use Ephemeral OS Disks in Azure

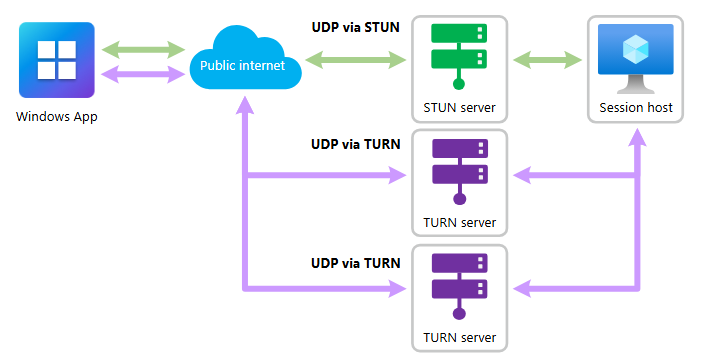

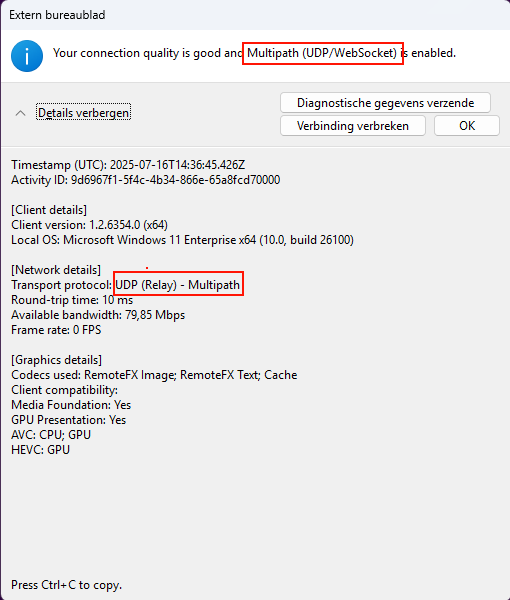

- RDP Multipath - What is it and how to configure?

- Pooled Azure Virtual Desktop with Azure AD cloud users only

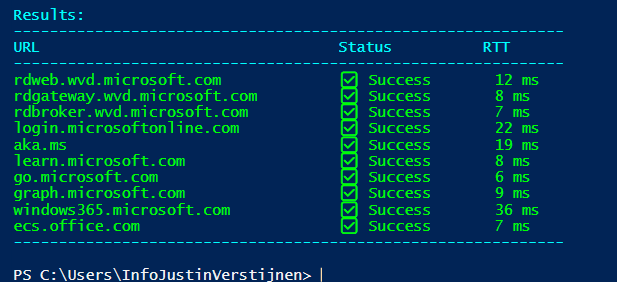

- Test Azure Virtual Desktop connectivity and RTT

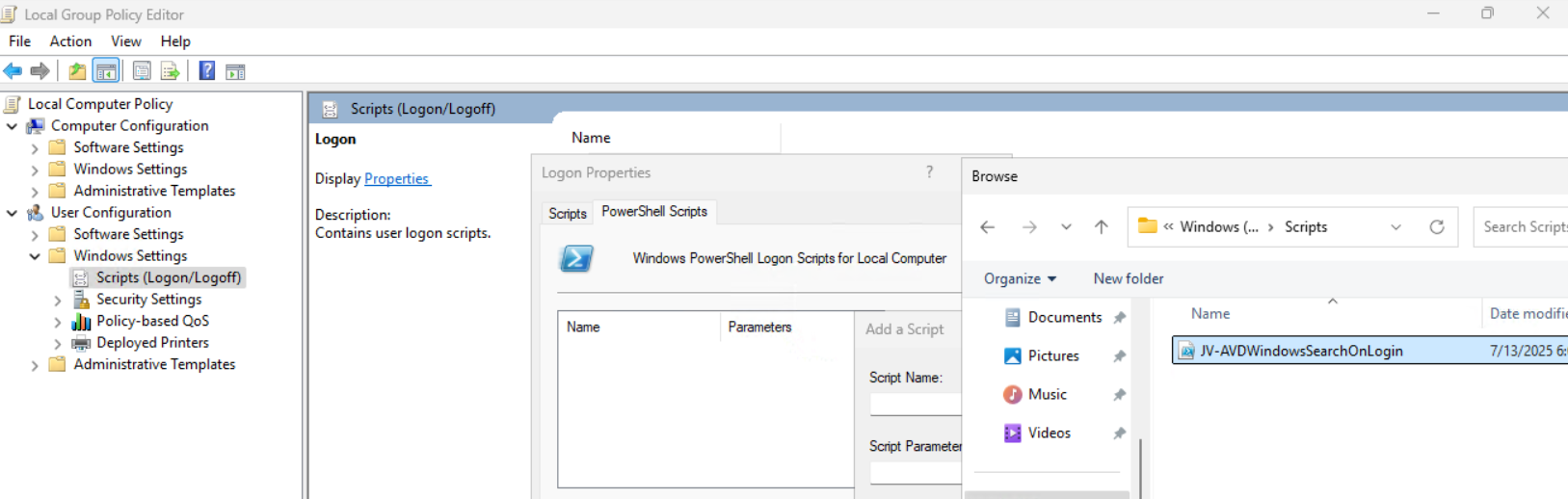

- Windows Search optimization on Azure Virtual Desktop

- Monitor Azure Virtual Desktop logon speed

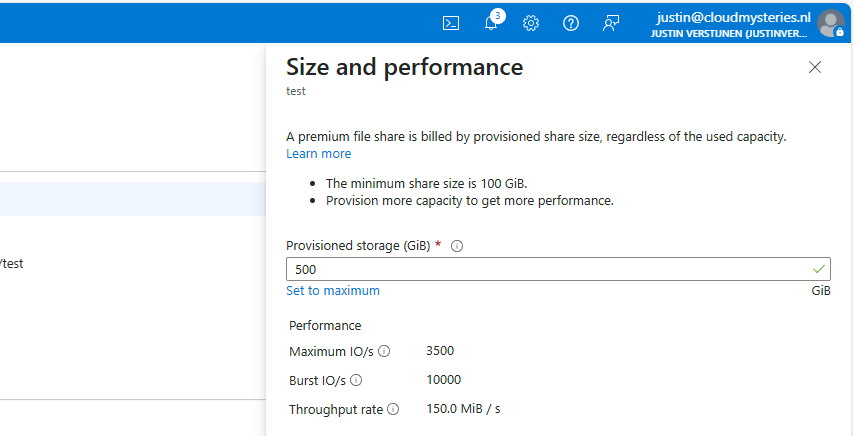

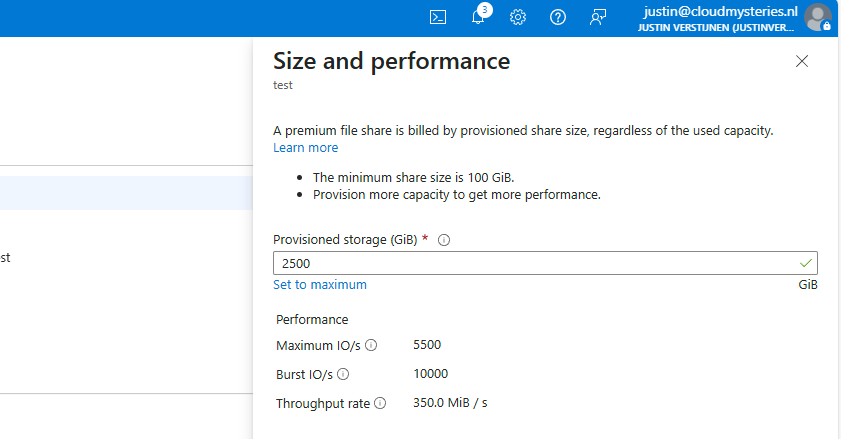

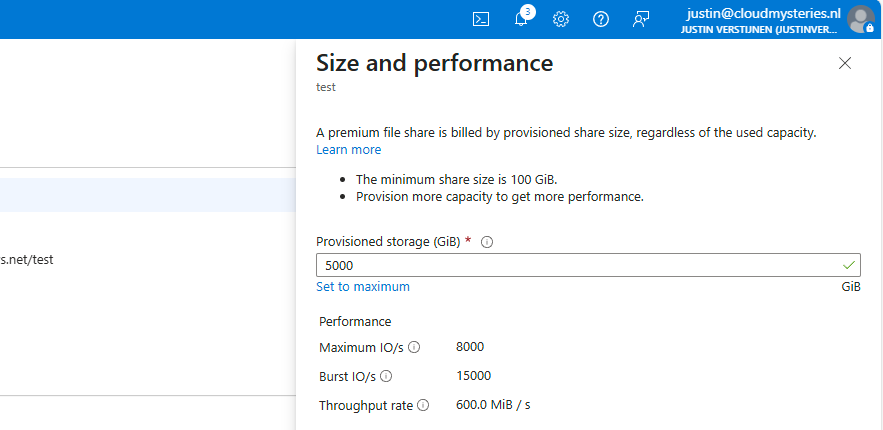

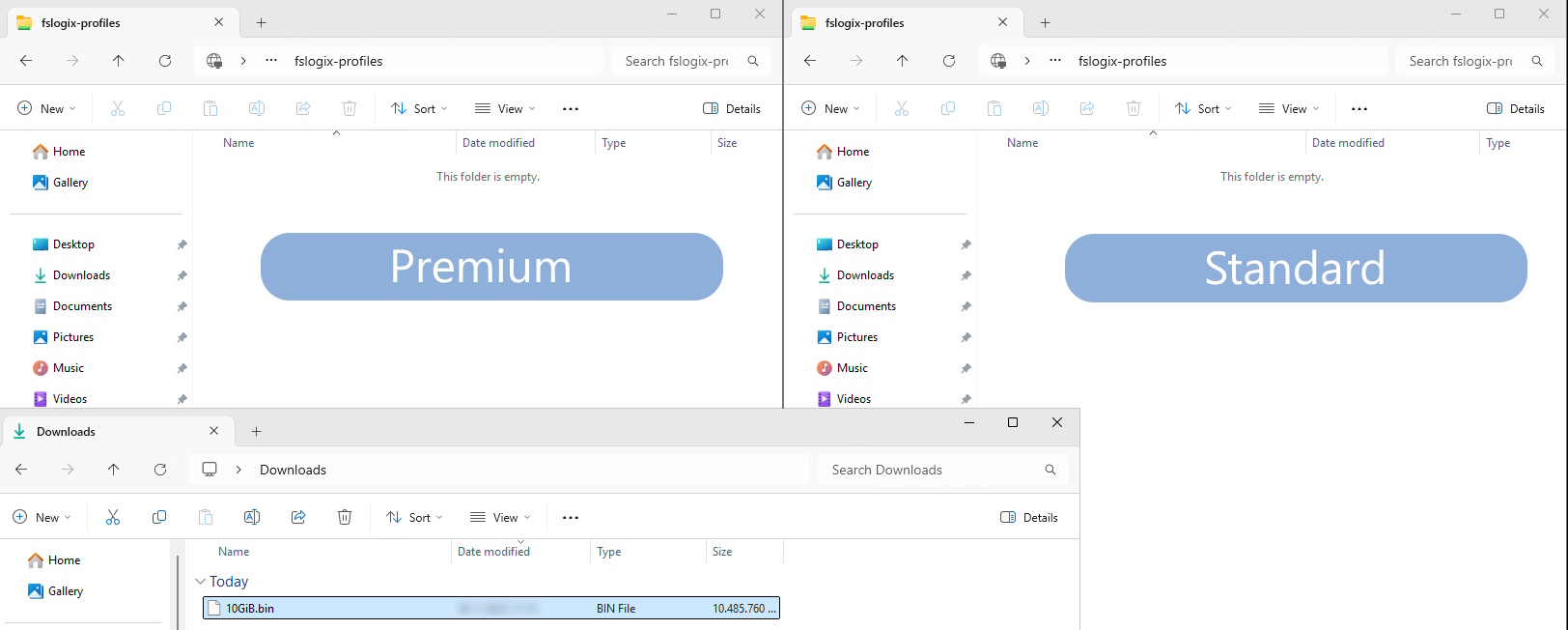

- Storage Account performance and pricing for Azure Virtual Desktop

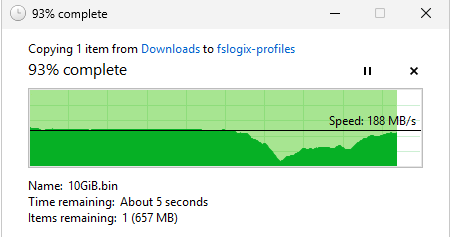

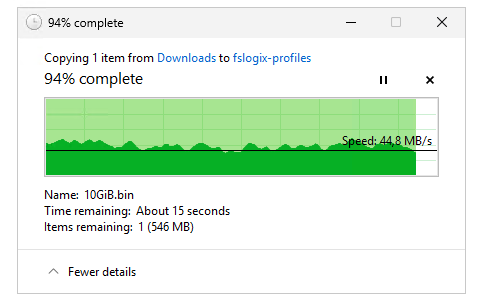

- Solved - FSLogix release 25.02 breaks Recycle Bin - Azure Virtual Desktop

- Stop OneNote printer from being default printer in AVD

- Automatic AVD/W365 Feed discovery for mobile apps

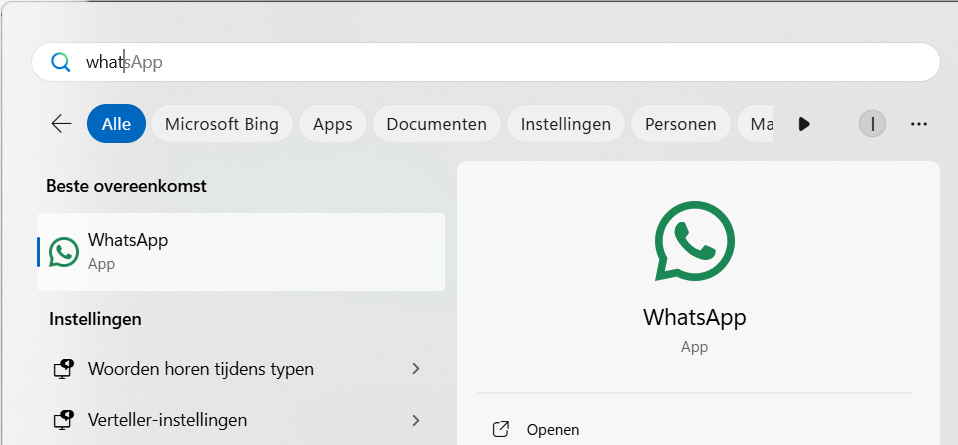

- Solved - Windows Store applications on FSLogix/Azure Virtual Desktop

- Optimize Windows 11 for Azure Virtual Desktop (AVD)

Update your Kerberos configuration with Azure Virtual Desktop (RC4)

Microsoft has announced that the Kerberos protocol will be hardened by updates arriving between April and June 2026 to increase security. Microsoft published this announcement here:

However, Microsoft is not very specific about how to check which Kerberos encryption types your environment uses, or how to fix this before it becomes a problem. I will do my best to explain this and show you how to solve it.

Microsoft already introduced Kerberos-related hardening changes in updates released since November 2022, which significantly reduced RC4 usage in many environments. However, administrators should still verify whether specific accounts, services or devices are explicitly or implicitly relying on RC4 before disabling it. In this guide, I will explain how to do this.

The update and protocols described

Kerberos is the authentication protocol used in Microsoft Active Directory Domain Services. It is used to authenticate users and computers to servers and services within the domain, such as an Azure Files share.

Kerberos works with tickets and those tickets can be encrypted using different encryption types, where we have two important ones:

- RC4-HMAC: This encryption type is deprecated, and the goal of this post is to disable it. It is being deprecated because it is considered weak and increases the attack surface.

- AES-256: This is the newer and more secure encryption type for Kerberos tickets, supported since Windows Vista and Windows Server 2008. This is the one we must use from now on.

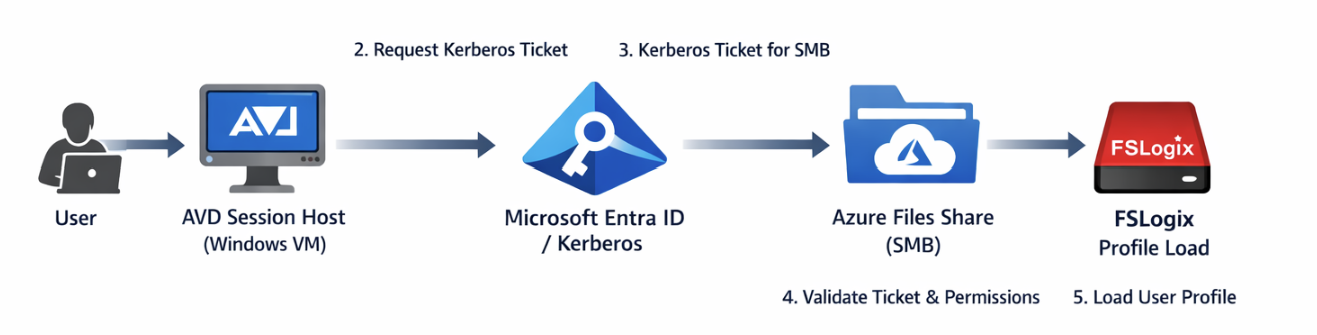

These tickets are granted in step 3 of the Kerberos authentication diagram.

Impacted resources

The resources impacted by this upcoming update are all kinds of domain-joined dependencies that use Kerberos tickets, such as AD DS-joined Azure Files shares.

However, the scope is not limited to Azure Files or FSLogix. Any resource that depends on Kerberos authentication can be affected if RC4 is still used somewhere in the chain. This can include file servers, SMB shares, legacy service accounts, older domain-joined devices, third-party appliances and applications that rely on Active Directory authentication. In many environments, the real risk is not the primary workload itself, but an older dependency that still expects RC4 without this being immediately visible.

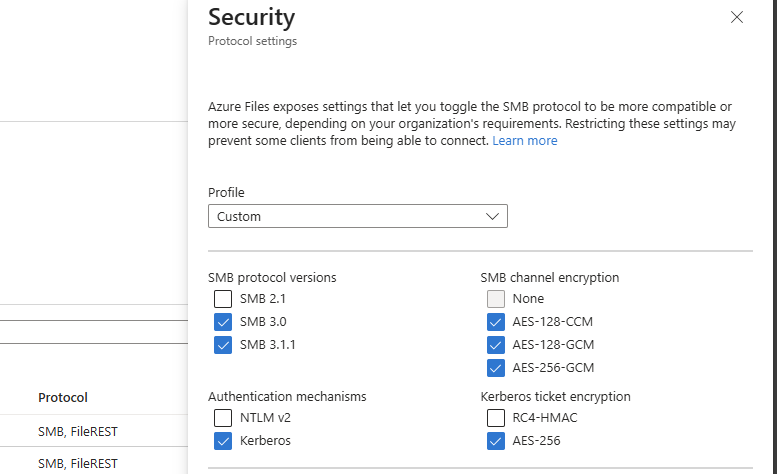

Check your configuration - Azure Portal

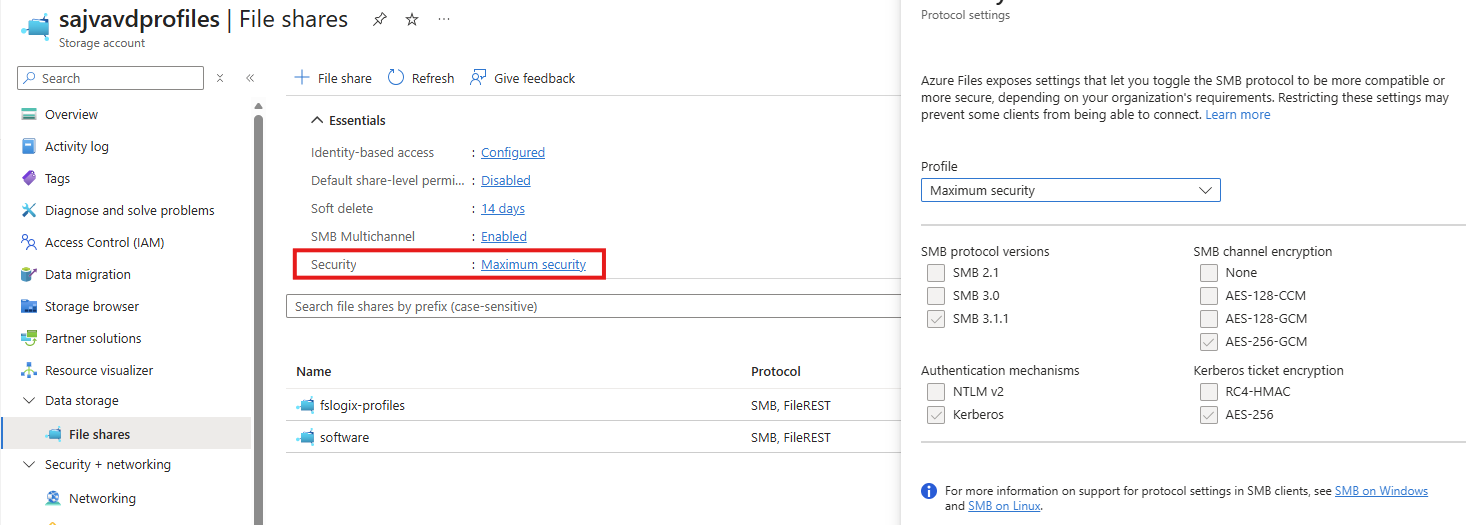

We can check whether our storage account still allows both encryption types or only the newer AES-256 option. In the Azure Portal, go to the storage account:

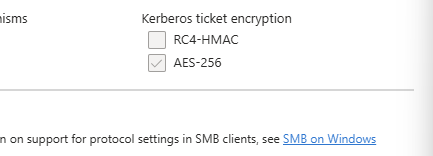

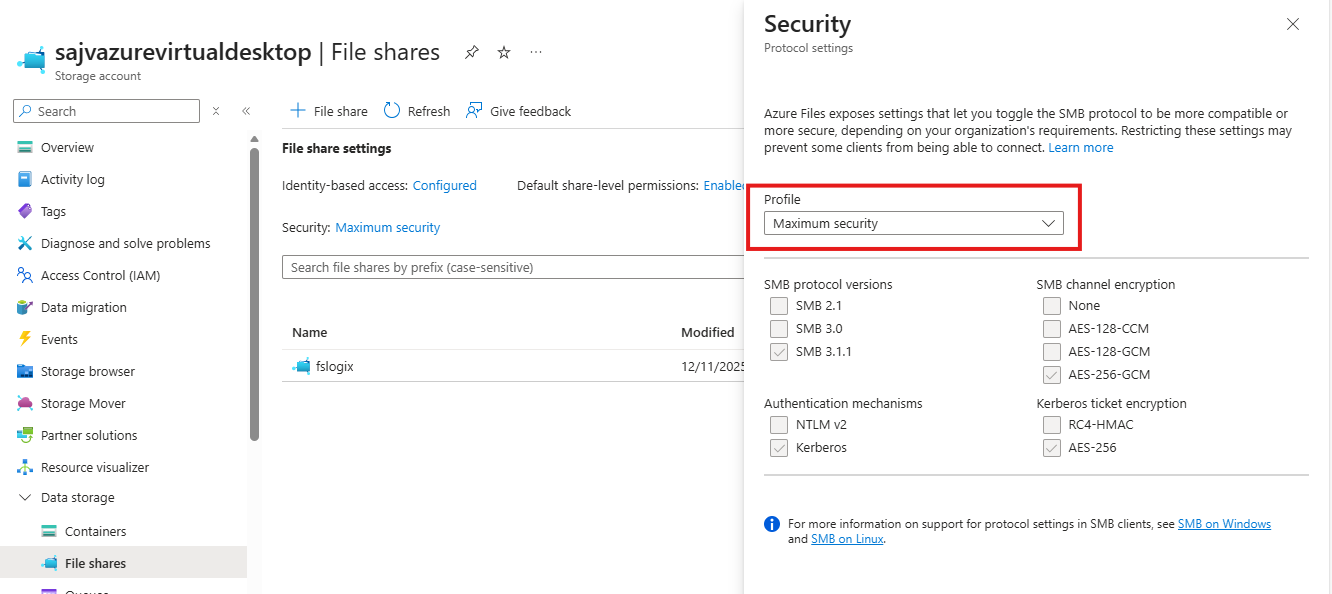

Click “Security” to get an overview of the protocols used for AD DS, Kerberos and SMB. The setting we need is in the bottom right corner (Kerberos ticket encryption):

If you are already using the maximum security preset, you don’t have to change anything and you are good to go for the coming updates.

Once the hardening updates reach Windows clients and Windows Server installations, RC4-HMAC will be phased out and no longer available. We should therefore disable it ourselves in a controlled way, without disrupting users.

Check your configuration - PowerShell

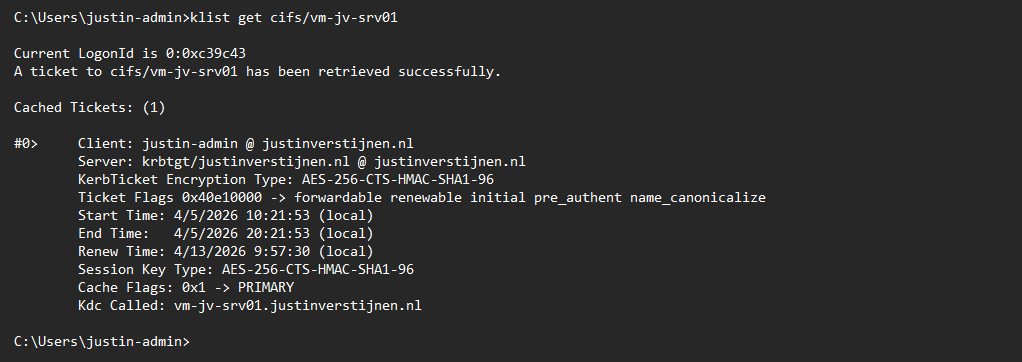

To check which encryption type is used for connections to other servers in your Active Directory, you can use the command below. It shows the encryption type of the Kerberos ticket actually used to connect to a resource.

Replace “servername” with the actual file server you connect to.

```powershell
klist get cifs/servername
```

For example:

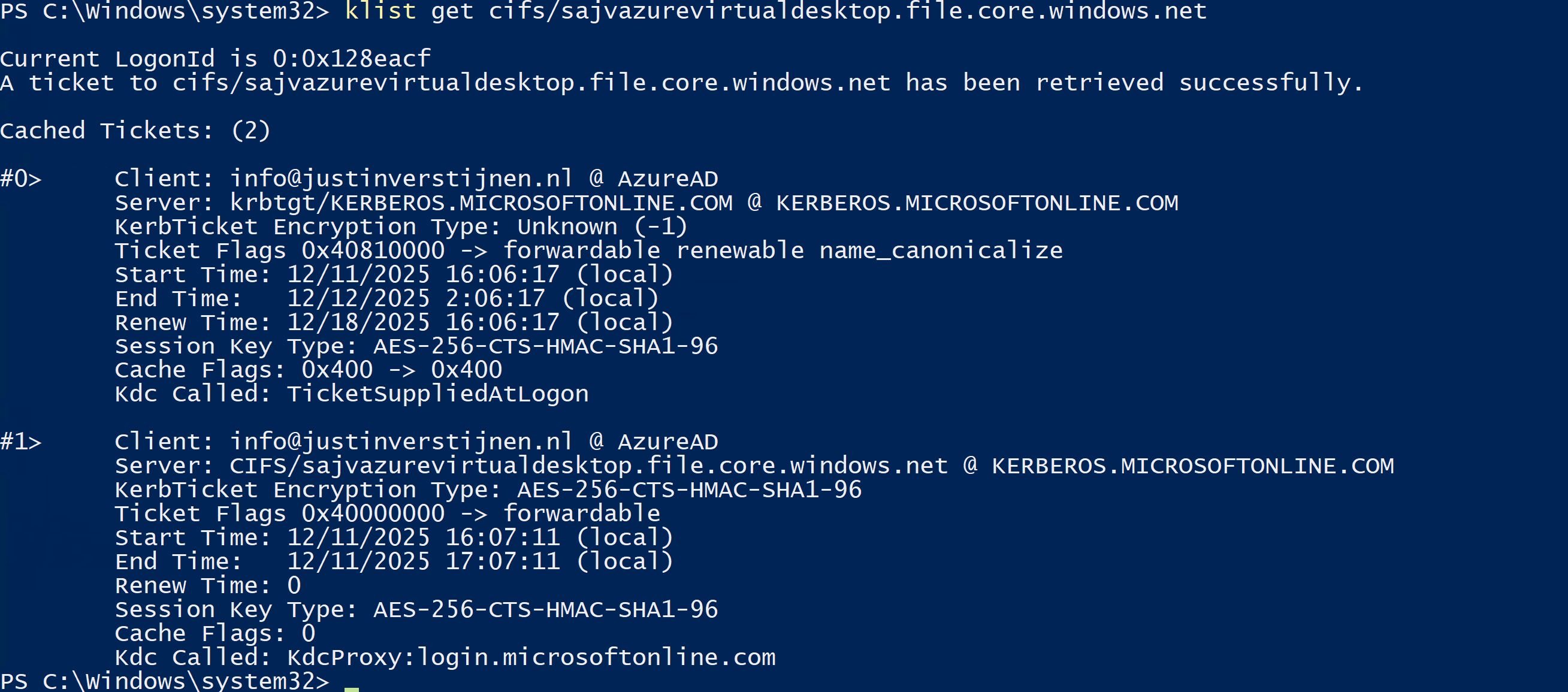

This returns information about the Kerberos ticket for that service. The KerbTicket Encryption Type field shows that AES-256 is used, which is the newer encryption type.
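When you want to scan many tickets instead of reading them one by one, a small parser can help. This is a minimal sketch, assuming klist prints a "Server:" line followed by a "KerbTicket Encryption Type:" line for each ticket (the format I would expect from the examples above); the function name Get-KlistEncryptionType is hypothetical and not part of Windows.

```powershell
function Get-KlistEncryptionType {
    param(
        # Raw klist output, one string per line (klist output contains empty lines)
        [Parameter(Mandatory)][AllowEmptyString()][string[]]$Text
    )
    $server = $null
    foreach ($line in $Text) {
        if ($line -match '^\s*Server:\s*(.+?)\s*$') {
            # Remember which service the next encryption type line belongs to
            $server = $Matches[1]
        }
        elseif ($line -match 'KerbTicket Encryption Type:\s*(.+?)\s*$') {
            $etype = $Matches[1]
            [pscustomobject]@{
                Server         = $server
                EncryptionType = $etype
                IsRc4          = $etype -match 'RC4'
            }
        }
    }
}

# Example: Get-KlistEncryptionType -Text (klist) | Where-Object IsRc4
# Example: Get-KlistEncryptionType -Text (klist get cifs/servername)
```

If the first example returns nothing, none of the currently cached tickets on that machine use RC4. It only covers tickets cached at that moment, so it complements the domain controller audit rather than replacing it.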

You can also list all cached tickets on your computer to check the encryption type of each one:

```powershell
klist
```

Check your configuration - Active Directory

In Active Directory, we can audit whether RC4 encryption is still being used. The easiest way is to open the Security event log on a domain controller in your environment and check these event IDs:

- Event ID 4768 (a Kerberos authentication ticket (TGT) was requested)

- Event ID 4769 (a Kerberos service ticket was requested)

You can also use this PowerShell one-liner to list all 4768 and 4769 events from the last 30 days. In the message of each event, check the Ticket Encryption Type field: 0x17 means RC4-HMAC, while 0x12 means AES256.

```powershell
Get-WinEvent -FilterHashtable @{LogName='Security'; Id=4768,4769; StartTime=(Get-Date).AddDays(-30); EndTime=(Get-Date)} | Select-Object TimeCreated, Id, MachineName, Message | Format-Table -AutoSize -Wrap
```

If any of these events show RC4 (0x17) as the ticket encryption type, you can trace which resource still uses this older encryption and what could be impacted after the update. If none do, your environment is likely ready for this upcoming change.

My advice is to check this on all your domain controllers to make sure you have checked all types of RC4 requests.
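Because 4768 and 4769 are logged for every ticket request, the one-liner above can return a lot of AES events. A minimal sketch that asks each domain controller only for events whose TicketEncryptionType is 0x17 (RC4-HMAC), using an XPath filter. The function names are hypothetical, the DC names in the example are placeholders, and remote queries assume you have read access to the Security log on each domain controller.

```powershell
function Get-KerberosEtypeName {
    param([string]$Value)
    if ([string]::IsNullOrEmpty($Value)) { return 'Unknown' }
    # switch compares strings case-insensitively by default
    switch ($Value) {
        '0x1'   { 'DES-CBC-CRC' }
        '0x3'   { 'DES-CBC-MD5' }
        '0x11'  { 'AES128-CTS-HMAC-SHA1-96' }
        '0x12'  { 'AES256-CTS-HMAC-SHA1-96' }
        '0x17'  { 'RC4-HMAC' }
        '0x18'  { 'RC4-HMAC-EXP' }
        default { "Unknown ($Value)" }
    }
}

function Get-Rc4KerberosEvent {
    param(
        [string[]]$ComputerName = @($env:COMPUTERNAME),
        [int]$Days = 30
    )
    $ms = [int64]$Days * 24 * 60 * 60 * 1000
    # 4768/4769 events inside the time window whose ticket used RC4-HMAC (0x17)
    $xpath = "*[System[(EventID=4768 or EventID=4769) and TimeCreated[timediff(@SystemTime) <= $ms]]]" +
             " and *[EventData[Data[@Name='TicketEncryptionType']='0x17']]"
    foreach ($dc in $ComputerName) {
        # SilentlyContinue hides the "no events found" error, but also access errors
        Get-WinEvent -ComputerName $dc -LogName 'Security' -FilterXPath $xpath -ErrorAction SilentlyContinue |
            ForEach-Object {
                # Flatten the named EventData fields into a hashtable
                $data = @{}
                foreach ($d in ([xml]($_.ToXml())).Event.EventData.Data) { $data[$d.Name] = $d.'#text' }
                [pscustomobject]@{
                    DomainController = $dc
                    TimeCreated      = $_.TimeCreated
                    EventId          = $_.Id
                    Account          = $data['TargetUserName']
                    Service          = $data['ServiceName']
                    ClientAddress    = $data['IpAddress']
                    EncryptionType   = Get-KerberosEtypeName $data['TicketEncryptionType']
                }
            }
    }
}

# Example: Get-Rc4KerberosEvent -ComputerName 'DC01', 'DC02' | Format-Table -AutoSize
```

An empty result from every domain controller is a good sign, but keep in mind that the Security log may not reach back 30 days on busy DCs.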

Change protocols of Storage account in Azure Portal

Because Microsoft already shipped the related Kerberos changes in November 2022, we can disable RC4-HMAC on the storage account in the Azure Portal. Most Windows versions supported today already include these patches: they no longer use RC4-HMAC by default, but still allow it where a scenario requires it.

In my environment, I am using a Windows 11-based AVD environment and have a Domain Controller with Windows Server 2022. I disabled the RC4-HMAC without any problems or user interruption.

However, I highly recommend making this change outside business hours to prevent any user interruption.

If RC4-HMAC is disabled and FSLogix still works, the change was successful. We have now prepared our environment for the upcoming change and can troubleshoot any problems on our own schedule, instead of a random Windows Update disabling this protocol and impacting the environment.
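If you manage several storage accounts, the same check and change can be scripted with the Az.Storage module. A minimal sketch, assuming you are signed in with Connect-AzAccount; the function names are hypothetical. I have not verified whether Update-AzStorageFileServiceProperty leaves the other SMB settings untouched when only this parameter is passed, so compare the “Security” overview in the portal before and after, and try it on a test account first.

```powershell
function Get-FileShareKerberosEncryption {
    param(
        [Parameter(Mandatory)][string]$ResourceGroupName,
        [Parameter(Mandatory)][string]$StorageAccountName
    )
    # Kerberos ticket encryption types currently allowed for SMB on this account
    $props = Get-AzStorageFileServiceProperty -ResourceGroupName $ResourceGroupName -StorageAccountName $StorageAccountName
    $props.ProtocolSettings.Smb.KerberosTicketEncryption
}

function Set-FileShareKerberosAesOnly {
    [CmdletBinding(SupportsShouldProcess)]
    param(
        [Parameter(Mandatory)][string]$ResourceGroupName,
        [Parameter(Mandatory)][string]$StorageAccountName
    )
    if ($PSCmdlet.ShouldProcess($StorageAccountName, 'Allow only AES-256 Kerberos ticket encryption')) {
        # Only the Kerberos ticket encryption setting is specified here
        Update-AzStorageFileServiceProperty -ResourceGroupName $ResourceGroupName `
            -StorageAccountName $StorageAccountName `
            -SmbKerberosTicketEncryption 'AES-256'
    }
}

# Example (hypothetical names):
# Get-FileShareKerberosEncryption -ResourceGroupName 'rg-avd' -StorageAccountName 'stavdprofiles'
# Set-FileShareKerberosAesOnly -ResourceGroupName 'rg-avd' -StorageAccountName 'stavdprofiles' -WhatIf
```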

Summary

This blog post described the deprecation of the older RC4-HMAC protocol and what can possibly impact your environment. If you only use modern operating systems, there is a good chance you don't have to change anything. However, if operating systems older than Windows 11 are in use, this update could impact your environment.

If your environment already uses AES-based Kerberos encryption for Azure Files, FSLogix and other SMB-dependent workloads, you are likely in a good position. If not, now is the right time to test, remediate and switch in a controlled way instead of finding out after the Windows updates are installed. We IT people like controlled protocol changes, where we actually know what could impact different workloads and cause errors.

Thank you for visiting this page and I hope it was helpful.

Sources

These sources helped me with writing and researching this post:

End of the page 🎉

You have reached the end of the page. You can navigate through other blog posts as well, share this post on X, LinkedIn and Reddit or return to the blog posts collection page. Thank you for visiting this post.

If you think something is wrong with this post or you want to know more, you can send me a message to one of my social profiles at: https://justinverstijnen.nl/about/

If you find this page and blog very useful and you want to leave a donation, you can use the button below to buy me a beer. Hosting and maintaining a website takes a lot of time and money. Thank you in advance and cheers :)

The terms and conditions apply to this post.

I tested Azure Virtual Desktop RemoteAppV2

Microsoft announced RemoteAppV2, which brings some nice enhancements on top of the older RemoteApp engine, such as:

- Better multi-monitor support

- Better resizing/window experience

- Visuals like window shadows

I cannot really show this in pictures, but if you test V2 alongside V1, you will definitely notice these small visual enhancements. However, a much-requested feature, drag-and-drop, is still not supported in V2.

Source: https://learn.microsoft.com/en-us/azure/virtual-desktop/remoteapp-enhancements

How to enable RemoteAppV2

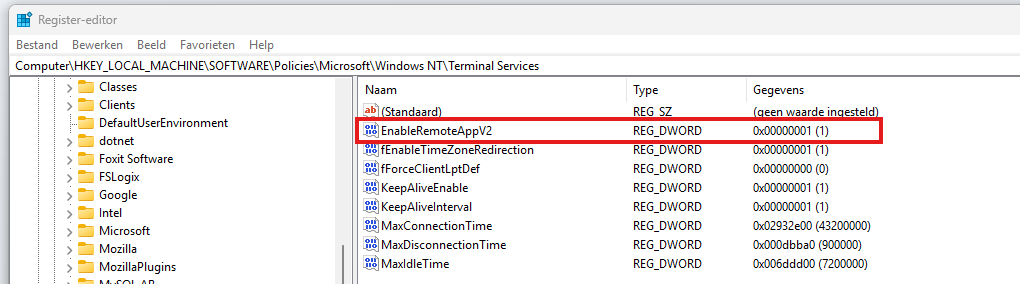

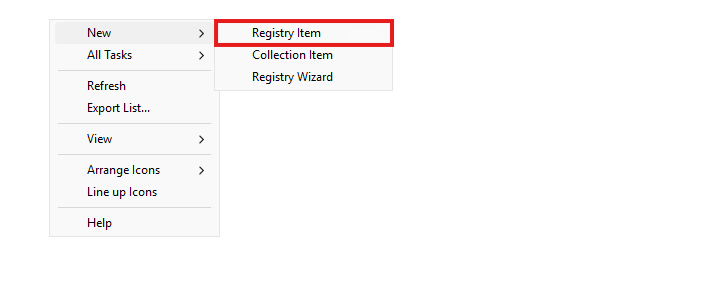

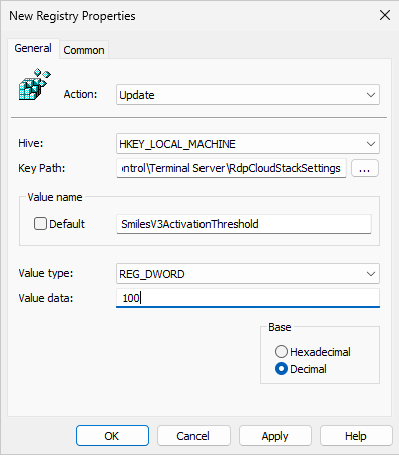

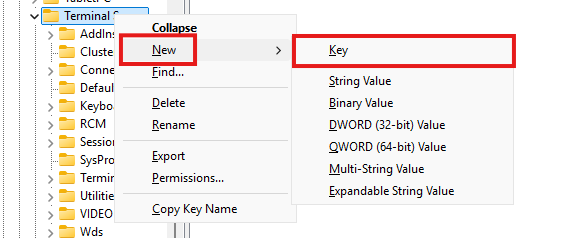

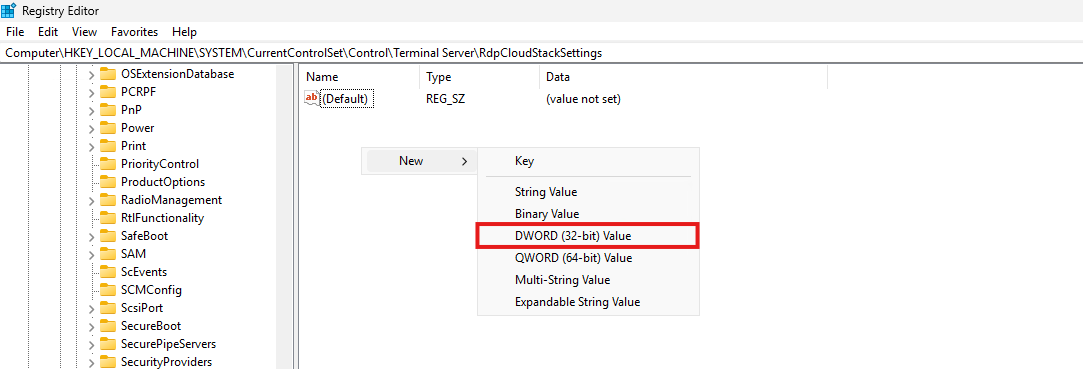

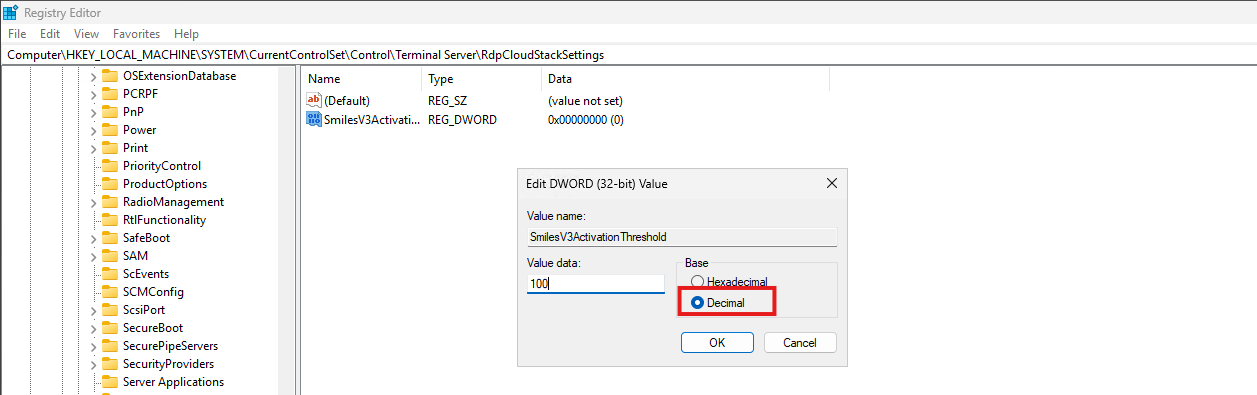

While RemoteAppV2 is in preview, you enable it by setting a registry value on the session hosts. Make sure both your clients and hosts meet the requirements described on this page:

https://learn.microsoft.com/en-us/azure/virtual-desktop/remoteapp-enhancements#prerequisites

We can do this manually or through a PowerShell script, which you can deploy with Intune:

- Key: HKLM\Software\Policies\Microsoft\Windows NT\Terminal Services

- Type: REG_DWORD

- Value name: EnableRemoteAppV2

- Value data: 1

```powershell
$registryPath = "HKLM:\Software\Policies\Microsoft\Windows NT\Terminal Services"

# Create the policy key if it does not exist yet
if (-not (Test-Path $registryPath)) {
    New-Item -Path $registryPath -Force | Out-Null
}

# Set EnableRemoteAppV2 (REG_DWORD) to 1; -Force overwrites an existing value
New-ItemProperty `
    -Path $registryPath `
    -Name "EnableRemoteAppV2" `
    -PropertyType DWord `
    -Value 1 `
    -Force | Out-Null
```

This should look like this:

Check out the status

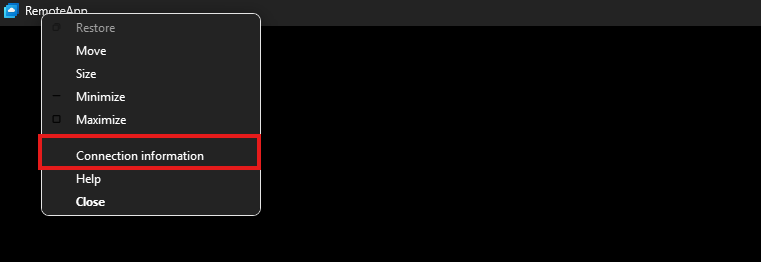

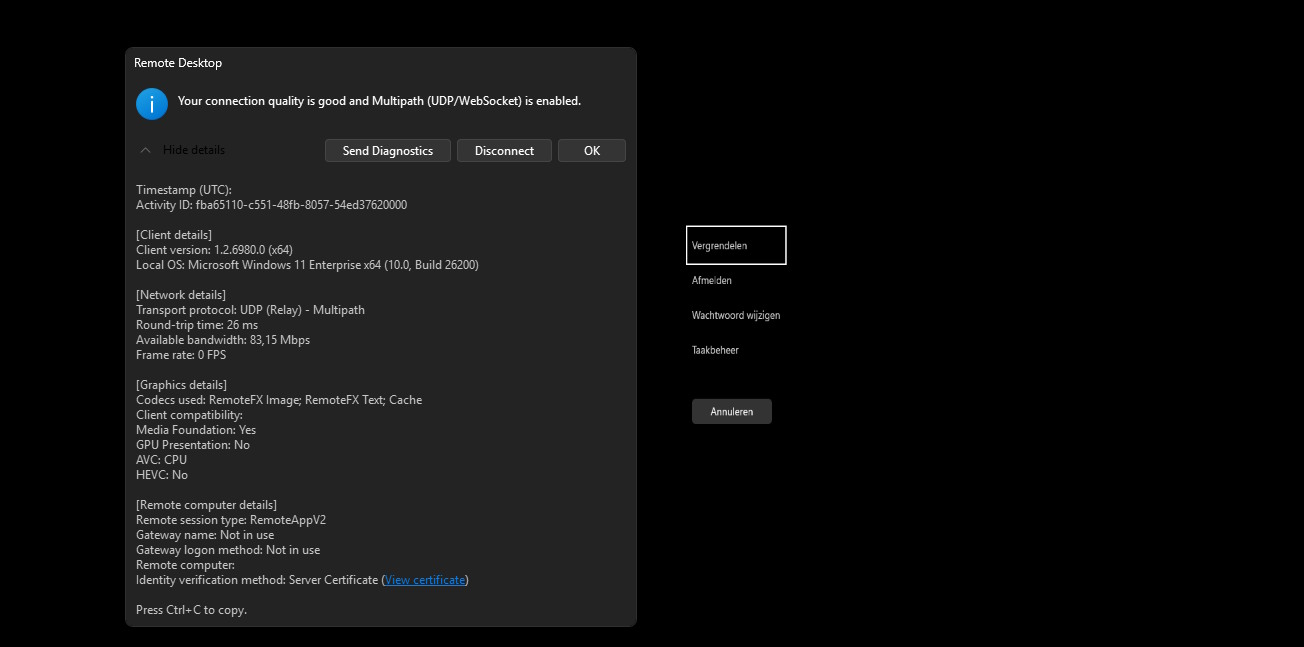

After setting the registry value, restart the host to make the change effective. Then open a RemoteApp and press the following shortcut:

- CTRL + ALT + END

Then right-click the title bar and click “Connection Information”.

This gives you the RDP session information, just like with full desktops.

The “Remote session type” should now show RemoteAppV2, which means the new enhancements are applied.
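If the session type still shows the old RemoteApp, it can help to confirm first that the policy value actually landed on the host (for example after an Intune deployment). A minimal sketch that reads EnableRemoteAppV2 back from the registry path used above; the function name is hypothetical, and it only checks the host-side value, not whether a client really connected with RemoteAppV2.

```powershell
function Test-RemoteAppV2Enabled {
    param(
        [string]$Path = 'HKLM:\Software\Policies\Microsoft\Windows NT\Terminal Services'
    )
    # No policy key at all: the value was never set on this host
    if (-not (Test-Path -Path $Path)) { return $false }
    $item = Get-ItemProperty -Path $Path -Name 'EnableRemoteAppV2' -ErrorAction SilentlyContinue
    # Enabled only when the value exists and equals 1
    return ($null -ne $item -and $item.EnableRemoteAppV2 -eq 1)
}

# Example (on the session host): Test-RemoteAppV2Enabled
```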

Downsides of RemoteAppV2

The main thing that keeps me from using RemoteApp is the missing drag-and-drop functionality. Many users want this when working in certain applications, and V2 still lacks it.

I also couldn't get it to work with only the validation environment setting of the host pool; in my case, I also had to create the registry key.

Sources

These sources helped me with writing and researching this post:


Azure Virtual Desktop V6/V7 VMs imaging

When I first chose to use V6 or V7 machines with Azure Virtual Desktop, I ran into disk controller errors stating that the VM size does not support the SCSI disk controller type of my image:

- The VM size ‘Standard_E4as_v7’ cannot boot with OS image or disk. Please check that disk controller types supported by the OS image or disk is one of the supported disk controller types for the VM size ‘Standard_E4as_v7’. Please query sku api at https://aka.ms/azure-compute-skus to determine supported disk controller types for the VM size. (Code: InvalidParameter)

- This size is not available because it does not support the SCSI disk controller type.

Because I really wanted to use the newer VM versions, I researched how to solve this problem. I will describe the process from creating the initial imaging VM to capturing the image and deploying new AVD hosts with it.

The problem described

With v6 and newer virtual machines in Azure, the disk controller changes from the older SCSI to NVMe. With local VM storage, this can give a noticeable disk performance increase, but this matters less for Azure Virtual Desktop, where we mostly use managed disks rather than local storage.

This change means we also need an image store that supports NVMe, which brings us to Azure Compute Gallery. This service supports image versioning and NVMe-enabled VMs.

In the past, I used managed images, as this was the quickest way to deploy images. However, managed images do not support NVMe controller VMs, so with them we can only deploy up to v5.

| VM version | Disk controller |
|---|---|
| v1-v4 | SCSI |
| v5 | SCSI |
| v6 | NVMe |
| v7 | NVMe |

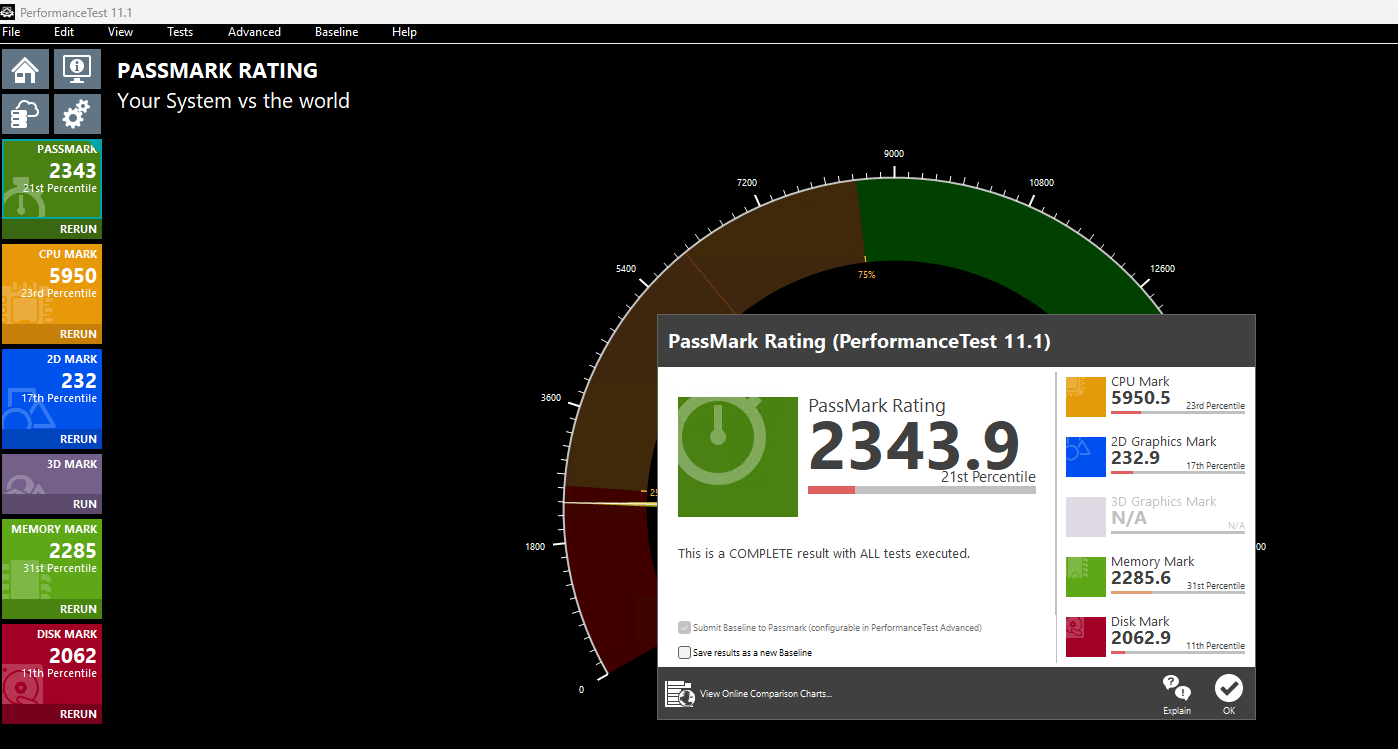

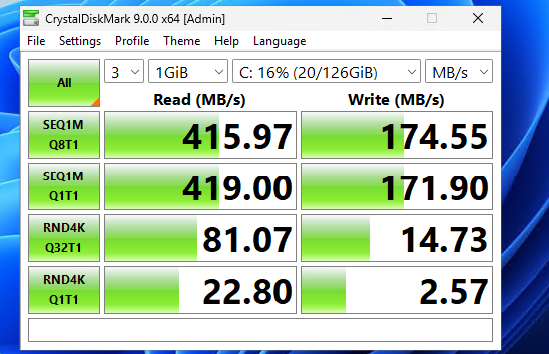

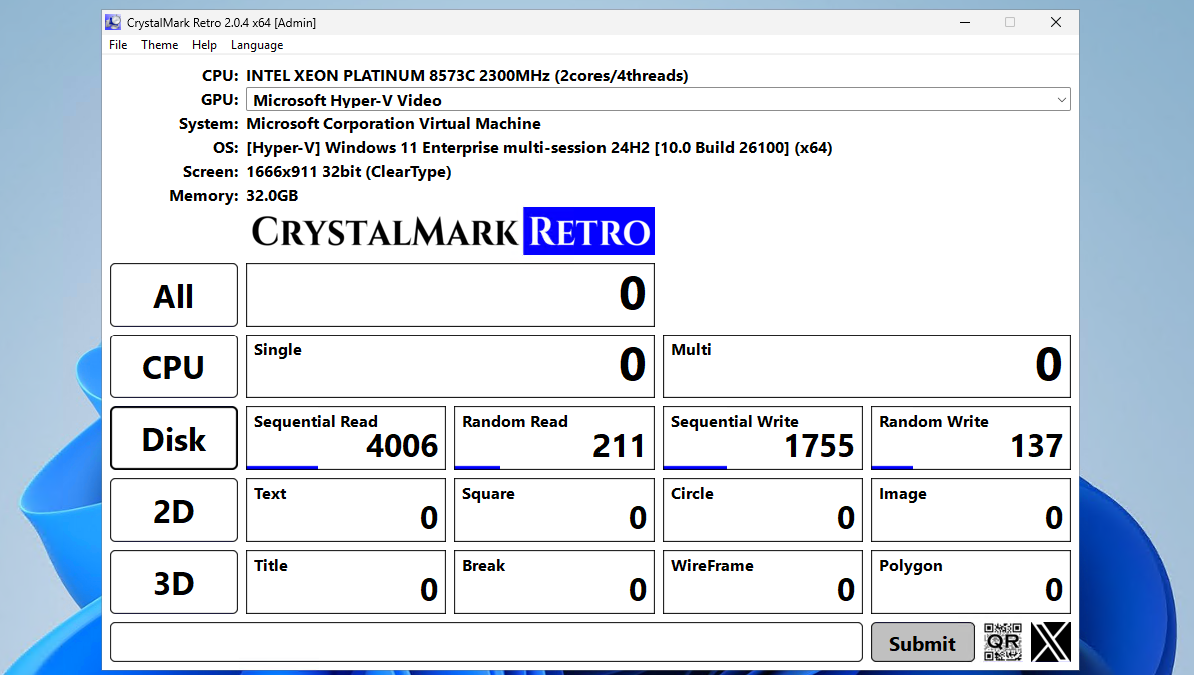

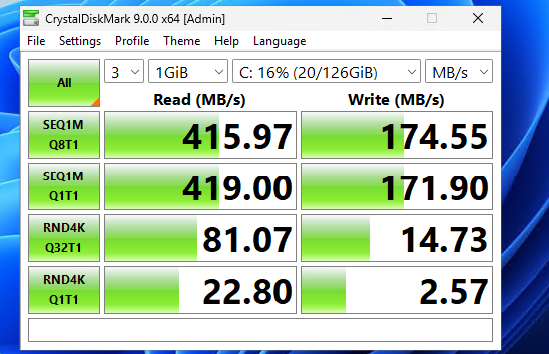

CPU performance v5 and v7 machines

Because I wondered what the performance difference between comparable v5 and v7 machines in Azure would be, I ran two benchmarks on both machines:

- Geekbench 6

- Passmark PerformanceTest

This gave pretty interesting results:

| Benchmark software | E4s_v5 | E4as_v7 |
|---|---|---|
| Geekbench 6 Single Core | 1530 | 2377 |
| Geekbench 6 Multi Core | 3197 | 5881 |
| Passmark CPU | 5950 | 9092 |

These results indicate a theoretical CPU performance increase of roughly 53 to 55% in the single-core and PassMark tests, and about 84% in the Geekbench multi-core test.
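To show where the percentages come from, a small sketch that computes the relative increase of the E4as_v7 over the E4s_v5 for each benchmark in the table, as (v7 - v5) / v5 * 100. The function name Get-PercentIncrease is just an illustration.

```powershell
function Get-PercentIncrease {
    param(
        [Parameter(Mandatory)][double]$Baseline,
        [Parameter(Mandatory)][double]$NewValue
    )
    # Relative change versus the baseline, rounded to one decimal
    [math]::Round((($NewValue - $Baseline) / $Baseline) * 100, 1)
}

# Scores from the benchmark table above
$benchmarks = @(
    [pscustomobject]@{ Benchmark = 'Geekbench 6 Single Core'; E4s_v5 = 1530; E4as_v7 = 2377 }
    [pscustomobject]@{ Benchmark = 'Geekbench 6 Multi Core';  E4s_v5 = 3197; E4as_v7 = 5881 }
    [pscustomobject]@{ Benchmark = 'Passmark CPU';            E4s_v5 = 5950; E4as_v7 = 9092 }
)

$benchmarks | ForEach-Object {
    [pscustomobject]@{
        Benchmark       = $_.Benchmark
        IncreasePercent = Get-PercentIncrease -Baseline $_.E4s_v5 -NewValue $_.E4as_v7
    }
} | Format-Table -AutoSize
```

This prints increases of 55.4% (single core), 84% (multi core) and 52.8% (PassMark).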

Click here for benchmark results

Step 1: Creating an imaging PC

Let's start by creating our imaging PC. This is a temporary VM on which we do all our configuration before mass deployment. Think of:

- Installing applications

- Installing dependencies

- Installing latest Windows Updates

- Optimizations

- Configuring the correct language

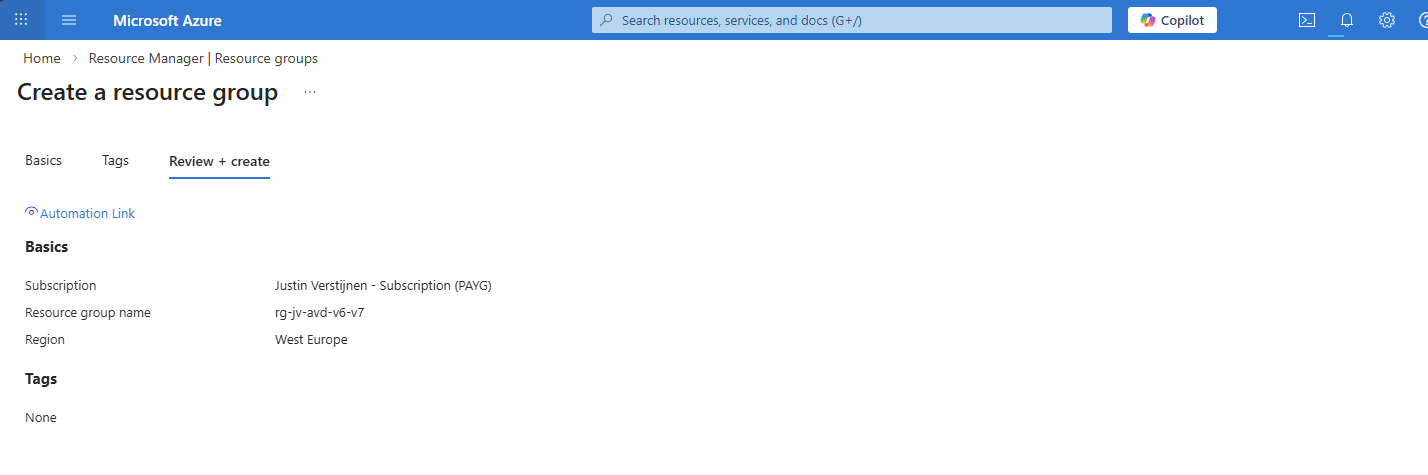

In the Azure Portal (https://portal.azure.com), create a resource group for this purpose if you don't have one yet.

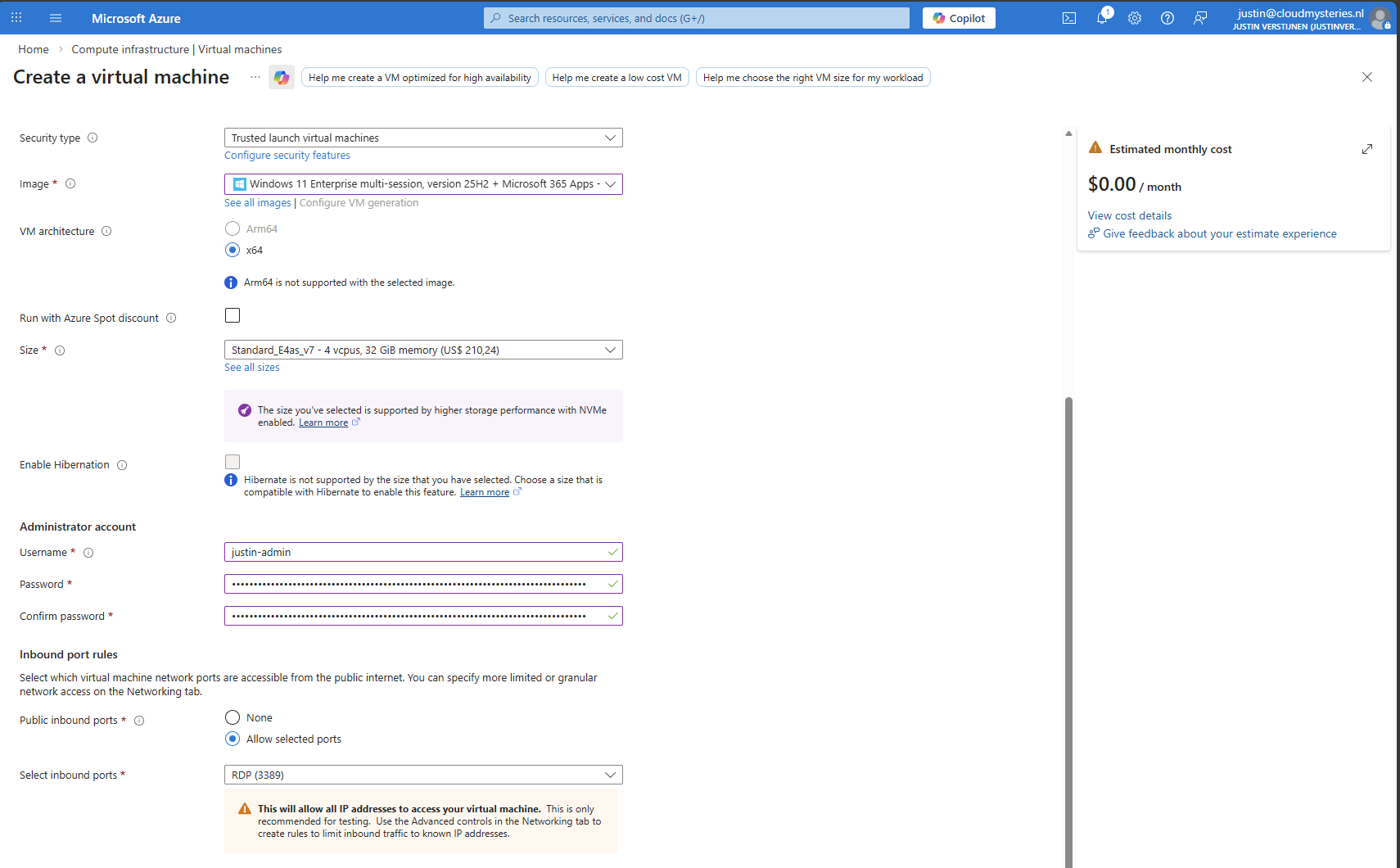

Now let’s go to “Virtual Machines” to create a temporary virtual machine. My advice is to always use the exact same size/specs as you will roll out in the future.

Create a new virtual machine using your settings. I chose to open RDP so we can log in to the virtual machine to install applications and such. Make sure you select the multi-session marketplace image if you use a pooled hostpool.

The option “Trusted launch virtual machines” is mandatory for these NVMe based VM sizes, so keep this option configured.

This VM creation process takes around 5 minutes.

Step 2: Virtual Machine customizations

Now we need to do our customizations. I advise doing this in the following order:

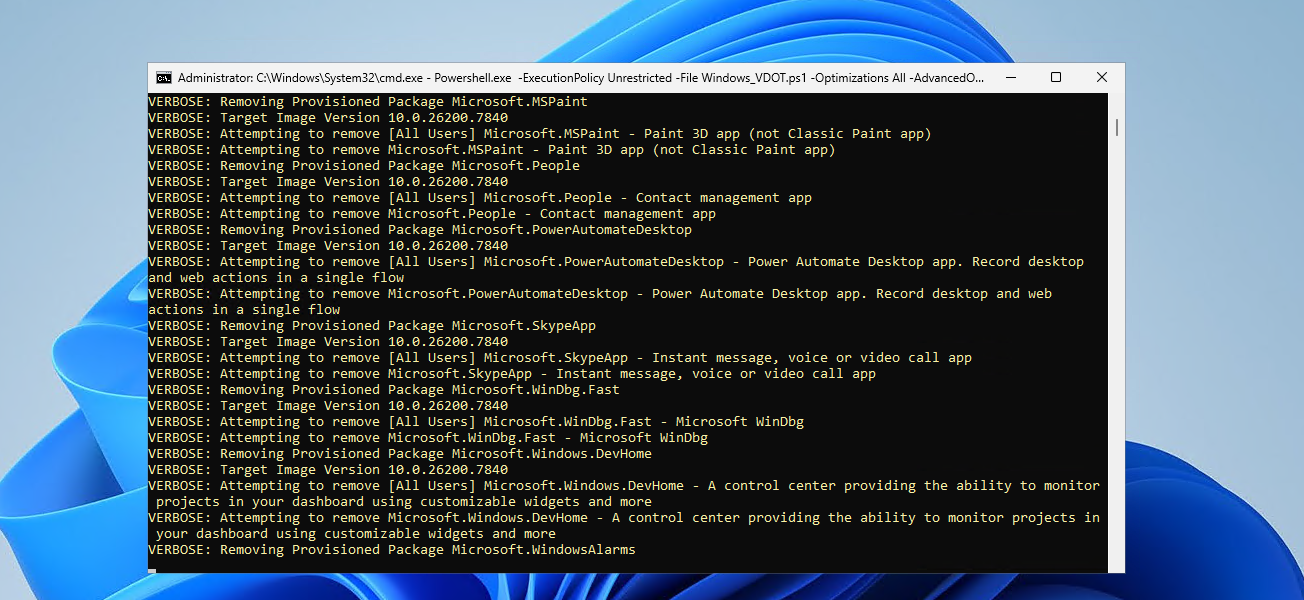

- Execute Virtual Desktop Optimization Tool (VDOT)

- Configure the correct system language

- Install 3rd party applications

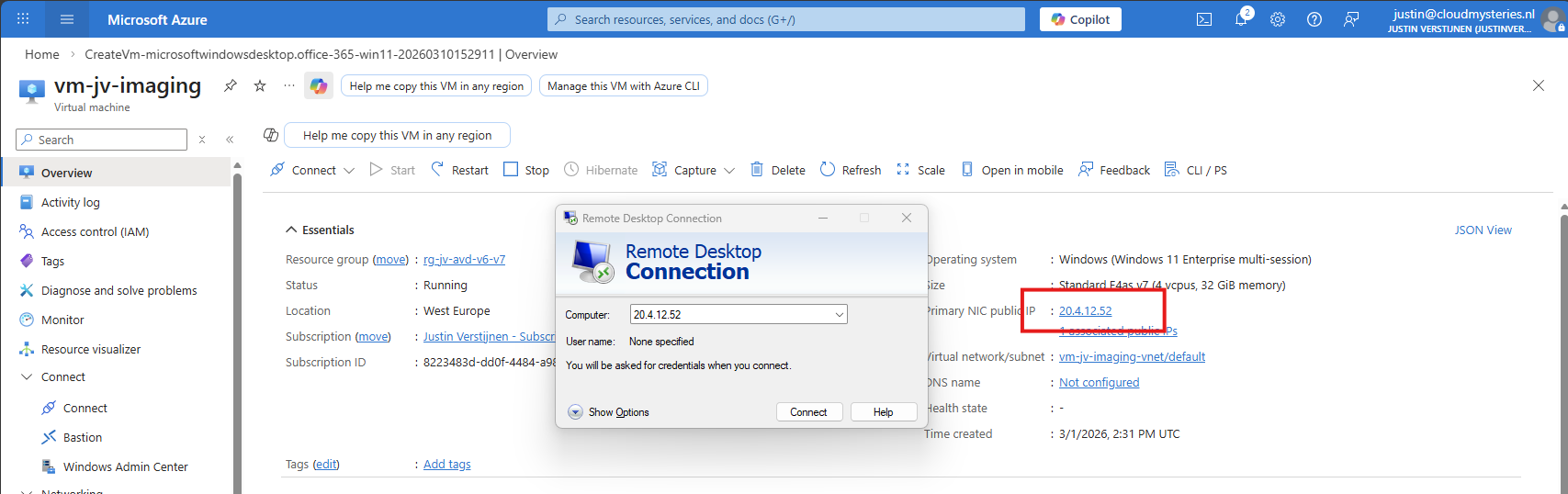

Connect to the virtual machine using RDP, using the public IP address assigned to the virtual machine:

After logging in with the credentials you specified in the Azure VM wizard, we are connected.

First I executed the Virtual Desktop Optimization tool:

Then I ran my script to change the language, which you can find here: https://justinverstijnen.nl/set-correct-language-and-timezone-on-azure-vm/

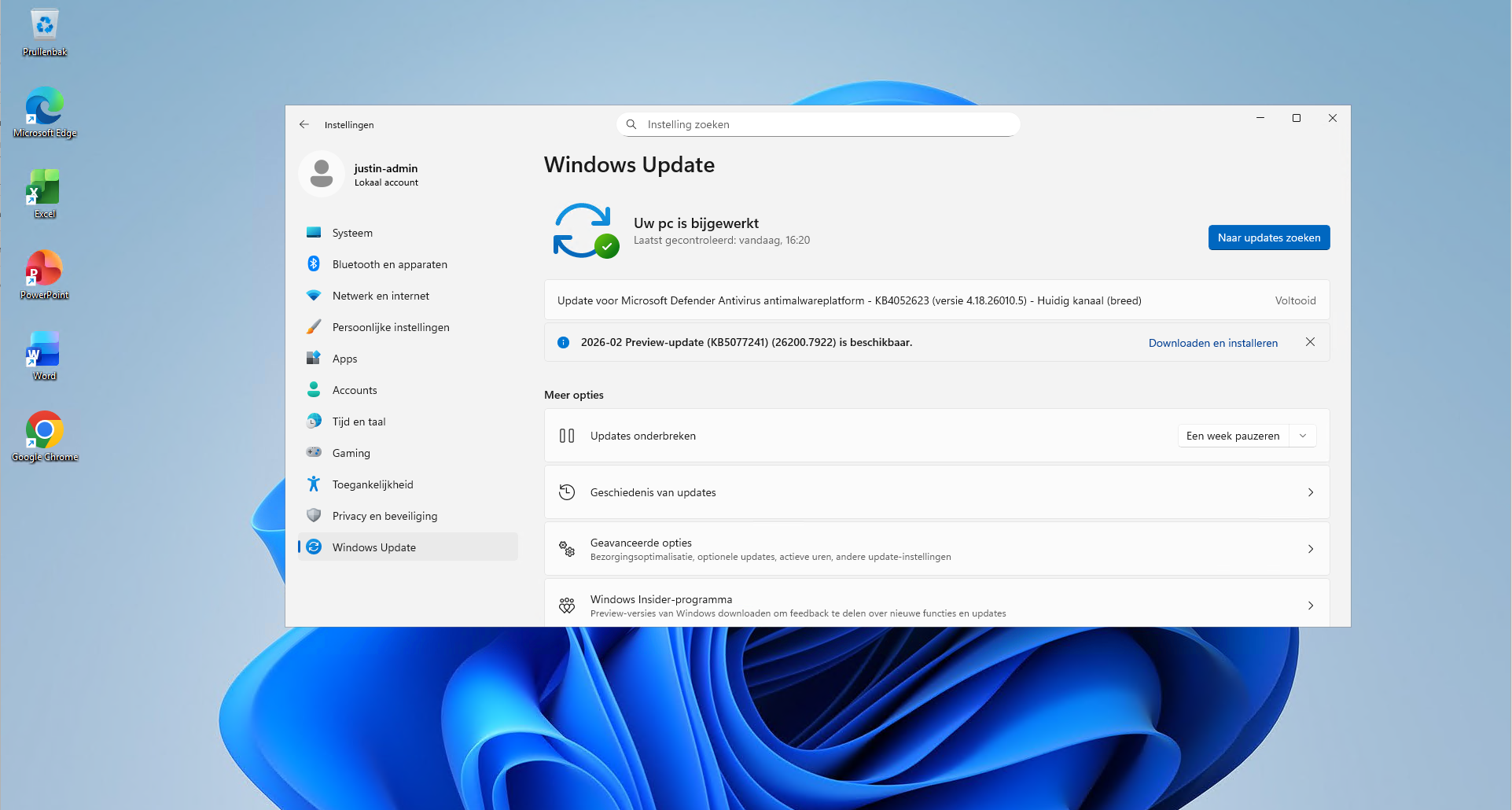

Finally, I installed the latest updates and applications. I don't like preview updates in production environments, so I did not install the pending preview update.

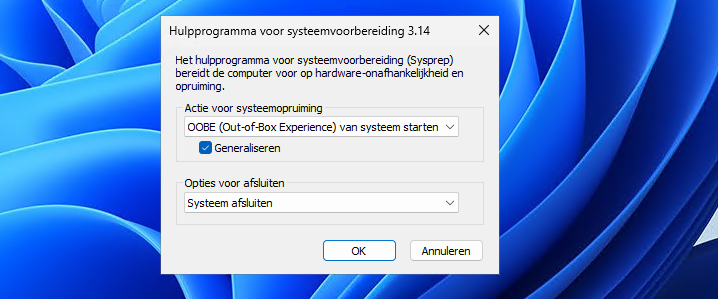

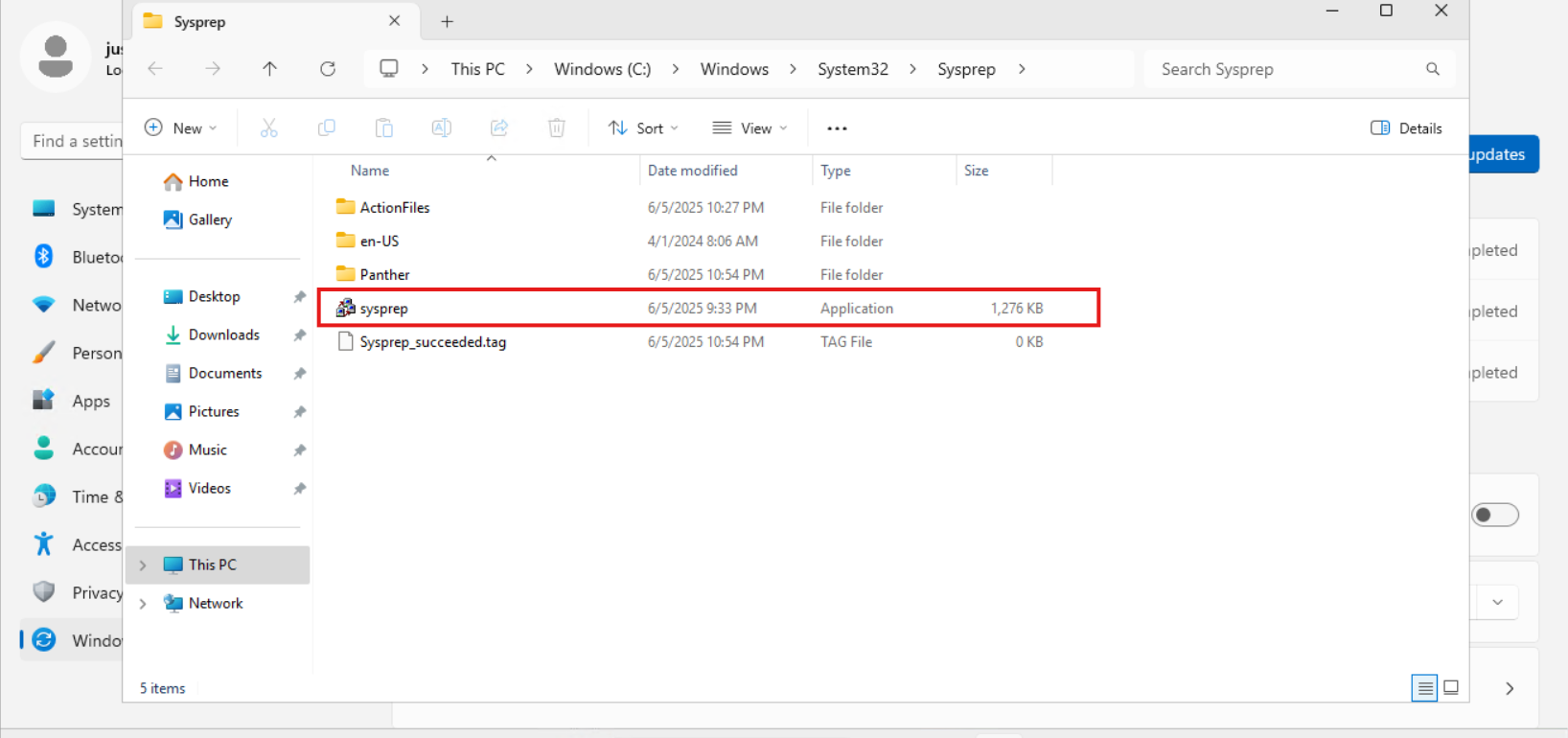

Step 3: Sysprepping the Virtual Machine

Now that our machine is ready, it's time to run Sysprep. It prepares the installation for mass deployment by removing information specific to this machine, such as the security identifier (SID) and device-specific driver configuration.

You can find this here:

- C:\Windows\System32\Sysprep\sysprep.exe

Paste this path into the Run window (Win + R) and the application opens.

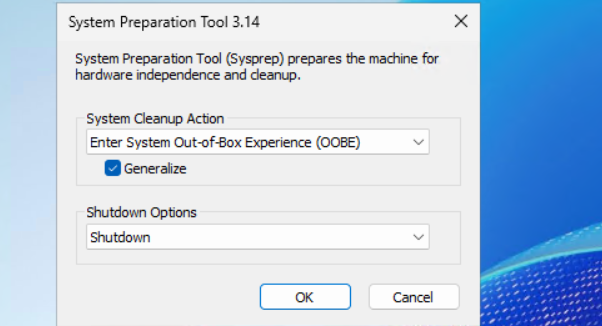

Select “Generalize” and choose the “Shutdown” option, so the machine shuts down after completion.

If you get an error that BitLocker Drive Encryption is enabled, run this command to decrypt the drive (you can re-enable BitLocker after deployment):

```powershell
manage-bde -off C:
```

Wait around 15 minutes for decryption to finish (you can check the progress with manage-bde -status C:), then run Sysprep again.
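Instead of guessing when decryption has finished, you could poll BitLocker and then start Sysprep with the same choices as in the GUI. A minimal sketch, assuming an elevated PowerShell session on the imaging VM; the function names are hypothetical, and the loop will keep waiting if decryption is paused, so keep an eye on it.

```powershell
function Wait-BitLockerDecryption {
    param(
        [string]$MountPoint = 'C:',
        [int]$PollSeconds = 30
    )
    # Poll until the volume reports FullyDecrypted (returns immediately if BitLocker is off)
    while ((Get-BitLockerVolume -MountPoint $MountPoint).VolumeStatus -ne 'FullyDecrypted') {
        $percent = (Get-BitLockerVolume -MountPoint $MountPoint).EncryptionPercentage
        Write-Host "Still decrypting $MountPoint, $percent% encrypted..."
        Start-Sleep -Seconds $PollSeconds
    }
}

function Invoke-GeneralizeSysprep {
    $sysprep = Join-Path -Path $env:WINDIR -ChildPath 'System32\Sysprep\sysprep.exe'
    # Same choices as in the GUI: OOBE, Generalize and Shutdown afterwards
    Start-Process -FilePath $sysprep -ArgumentList '/generalize', '/oobe', '/shutdown' -Wait
}

# Example (elevated, on the imaging VM):
# manage-bde -off C:
# Wait-BitLockerDecryption -MountPoint 'C:'
# Invoke-GeneralizeSysprep
```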

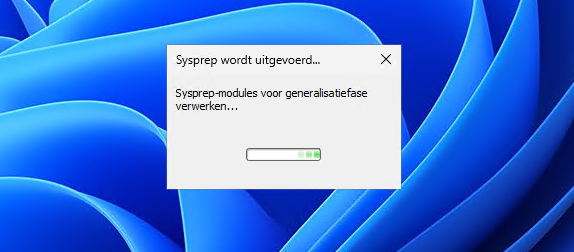

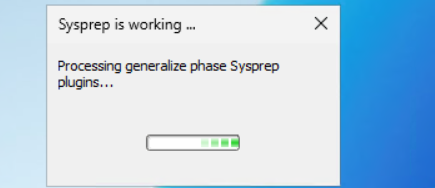

The machine will now clean itself up and then shut down. This can take up to 20 minutes; in the meantime, you can continue with step 4.

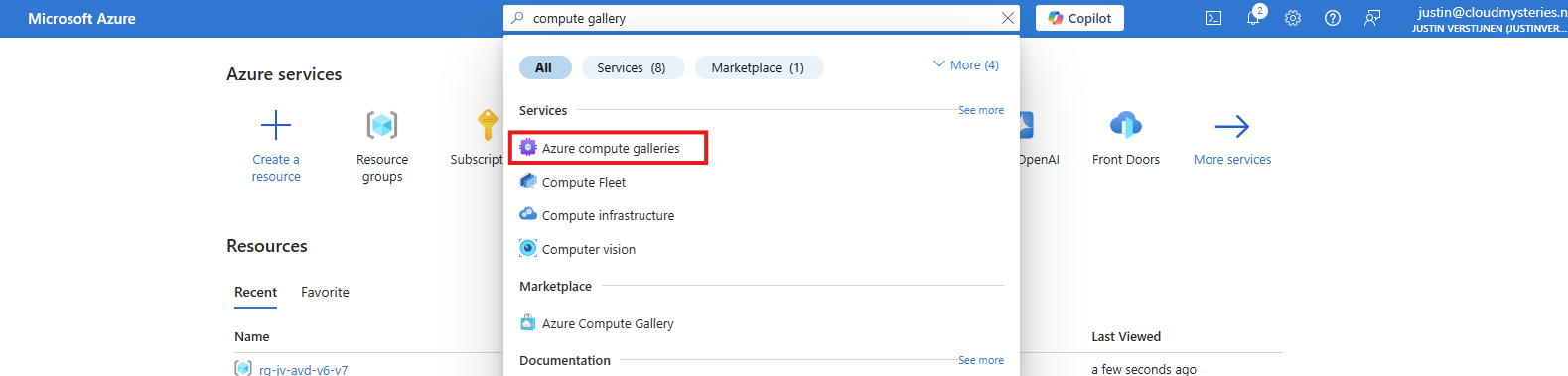

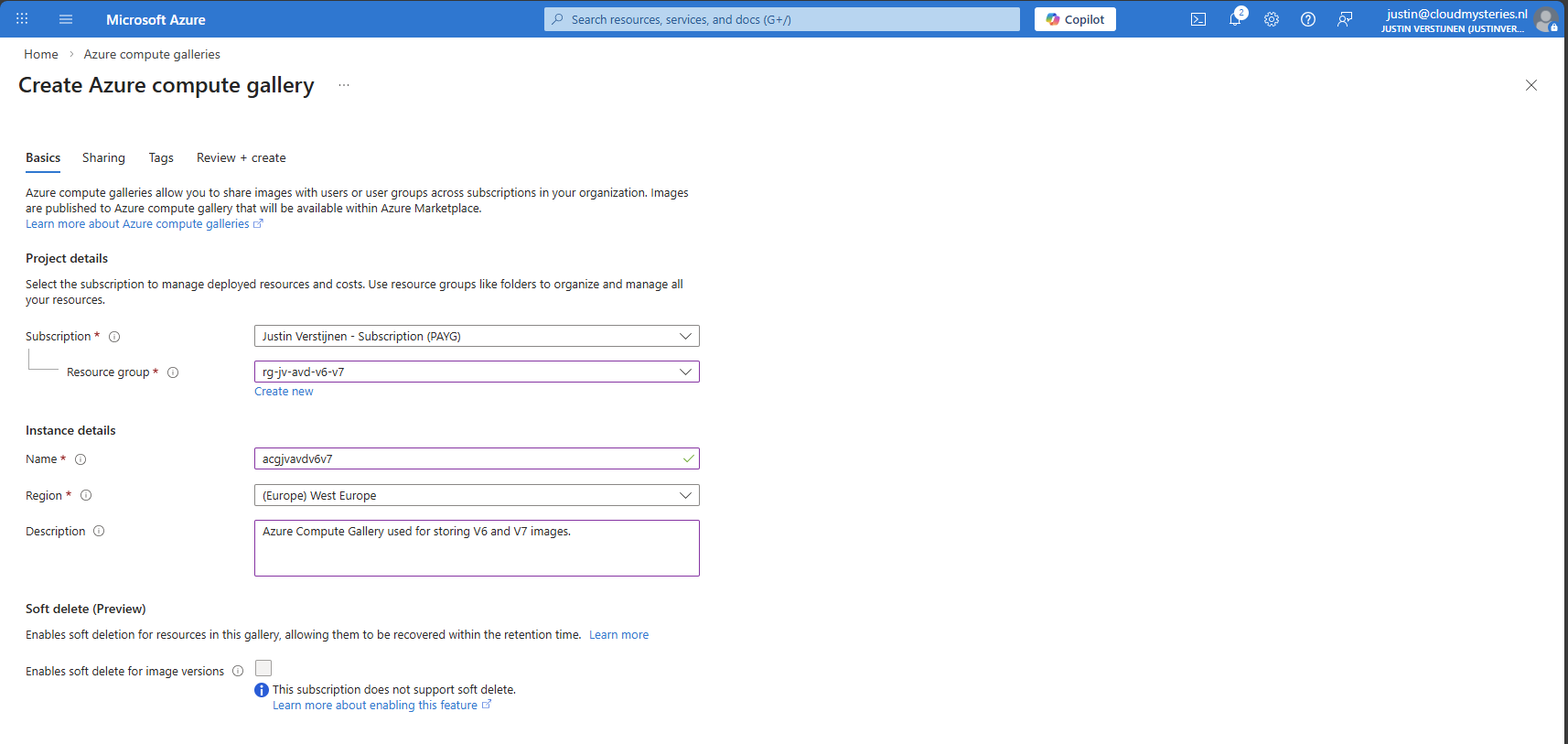

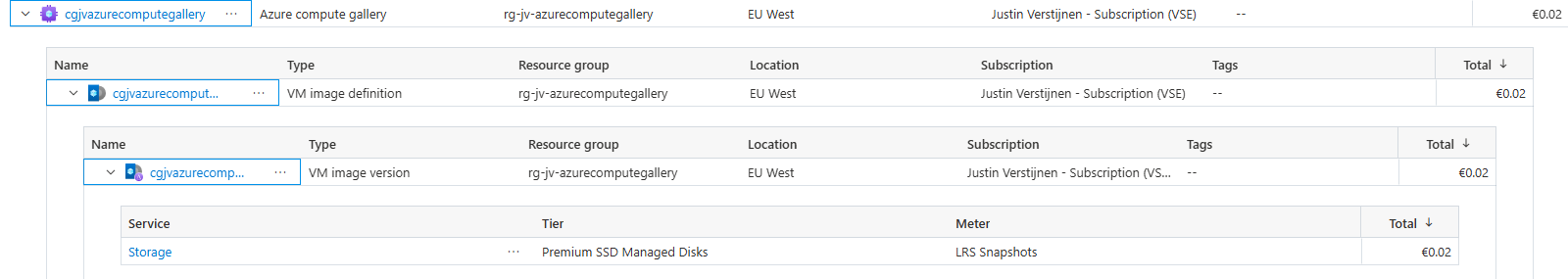

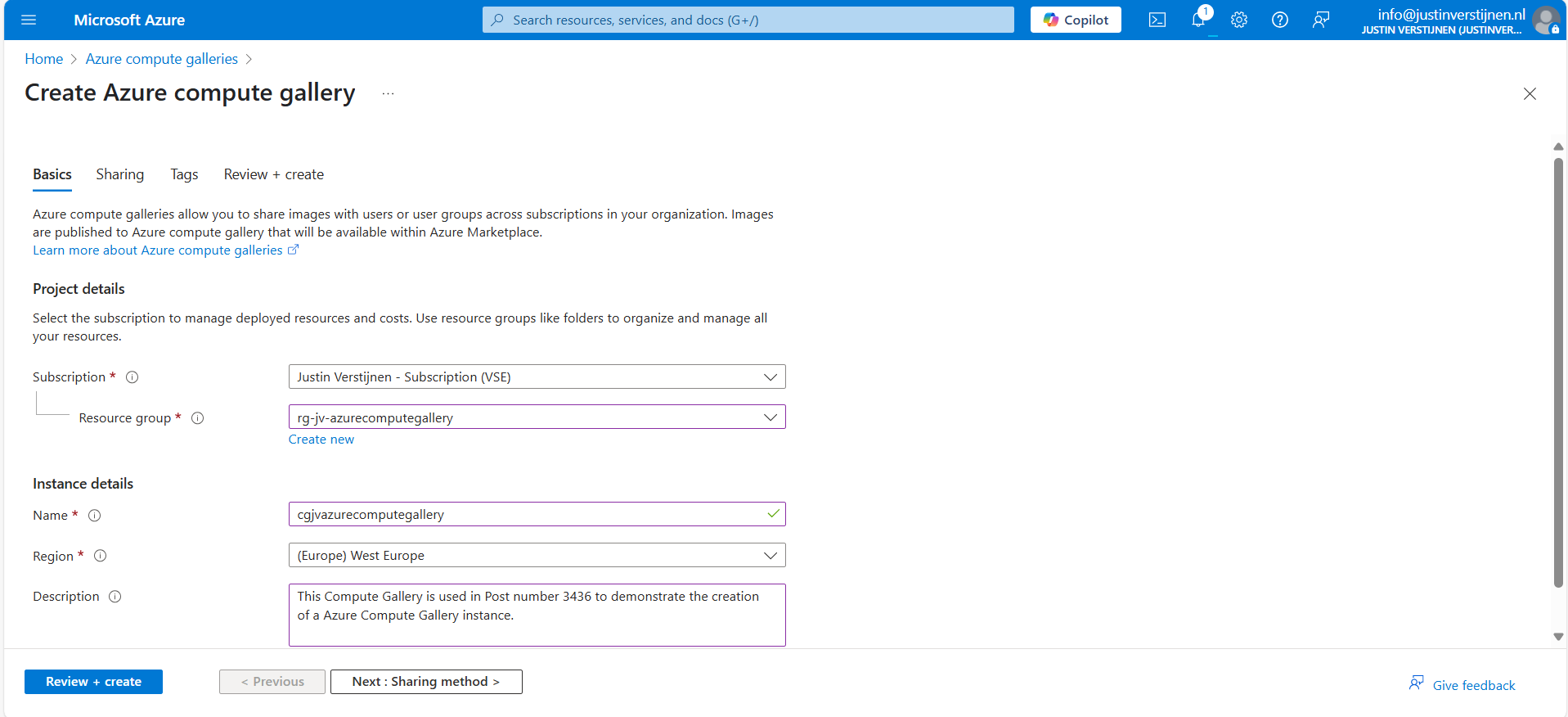

Step 4: Create Azure Compute Gallery instance

Before we can capture the VM, we must first create a space for it. This is the Azure Compute Gallery, a managed image repository inside of your Azure environment.

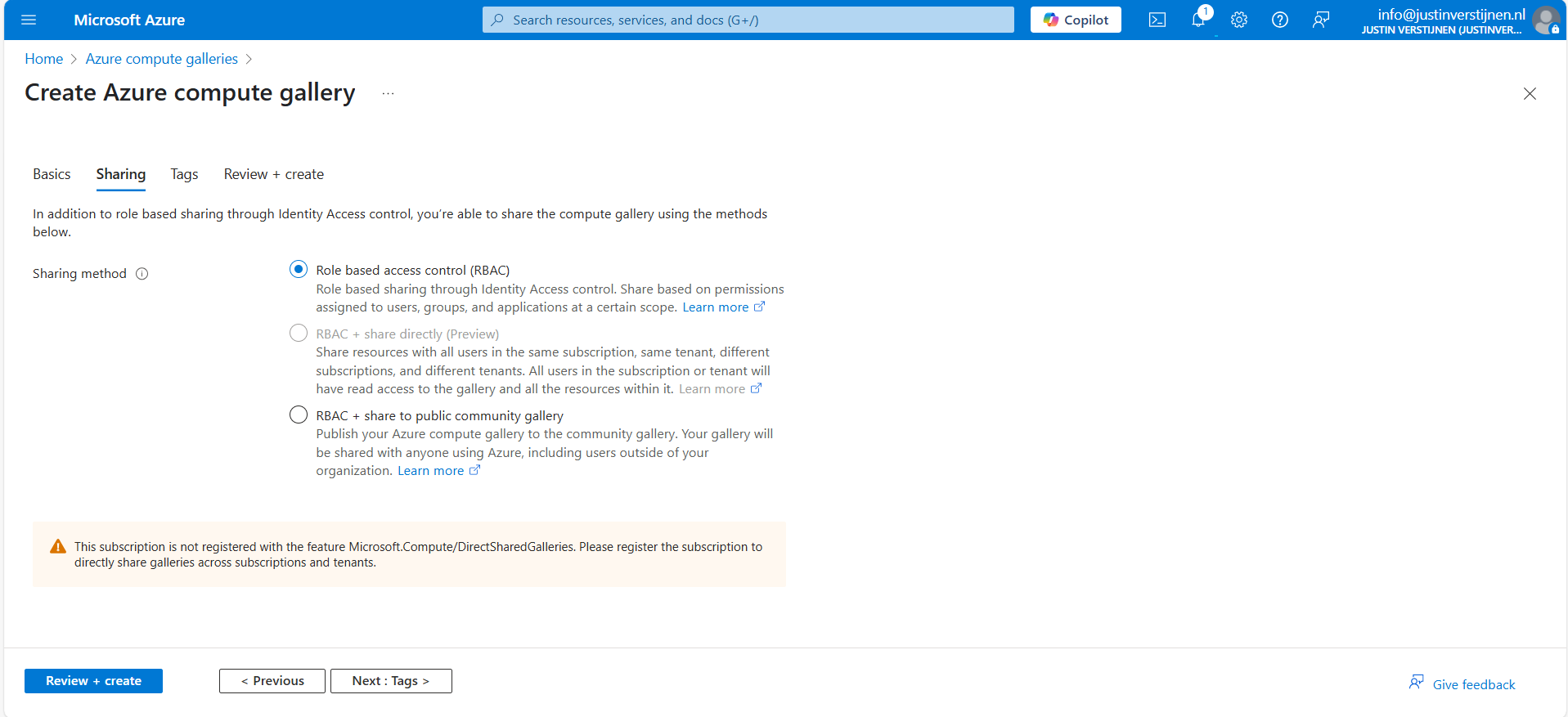

Go to “Azure compute galleries” and create a new ACG.

Give the ACG a name and place it in the right Subscription/Resource Group.

Then click “Next”.

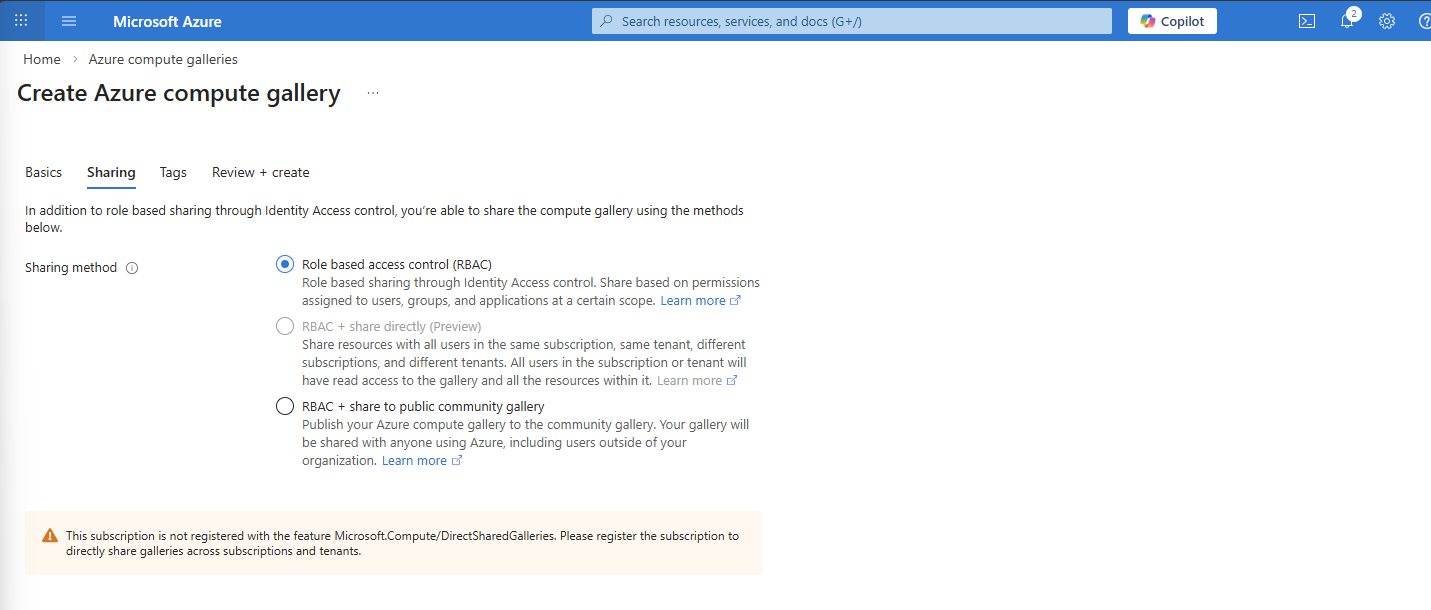

I use the default “RBAC” option on the “Sharing” tab, as I don't want to share this image publicly. With the other options, you could share images across other tenants if you want.

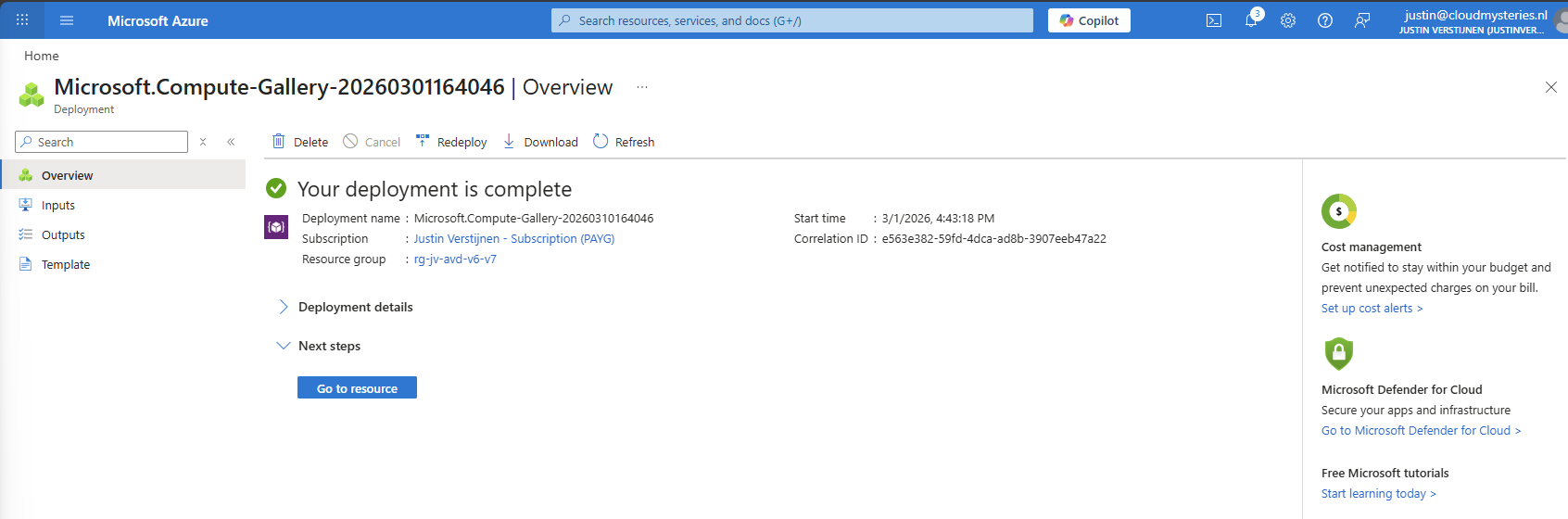

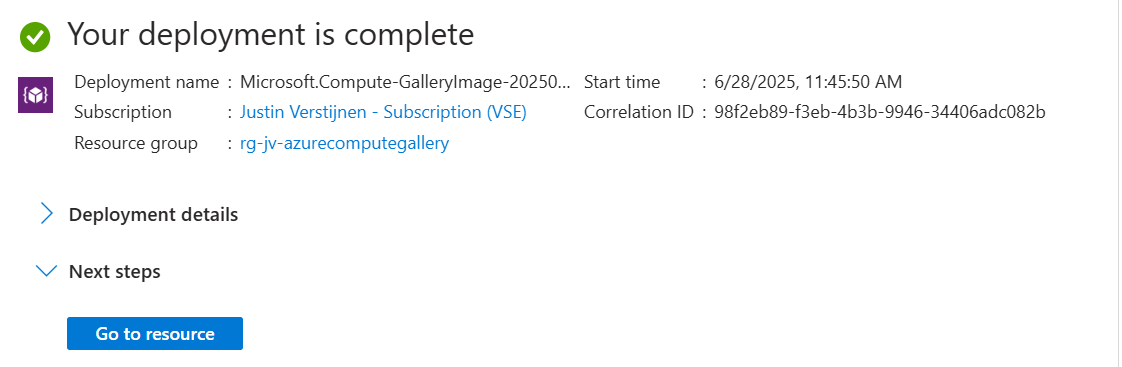

After finishing the wizard, create the Compute Gallery and wait for it to deploy which takes several seconds.
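The same gallery can also be created with Az PowerShell, which is handy if you rebuild this setup in other subscriptions. A minimal sketch, assuming you are signed in with Connect-AzAccount and the resource group already exists; the function name and the default gallery name are hypothetical. I assume that creating the gallery without any sharing settings matches the default RBAC option from the wizard.

```powershell
function New-AvdImageGallery {
    param(
        [Parameter(Mandatory)][string]$ResourceGroupName,
        [Parameter(Mandatory)][string]$Location,
        # Gallery names allow letters, digits, periods and underscores, but no dashes
        [string]$Name = 'acg_avd_images'
    )
    New-AzGallery -ResourceGroupName $ResourceGroupName -Name $Name -Location $Location `
        -Description 'AVD session host images'
}

# Example (hypothetical values): New-AvdImageGallery -ResourceGroupName 'rg-avd-imaging' -Location 'westeurope'
```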

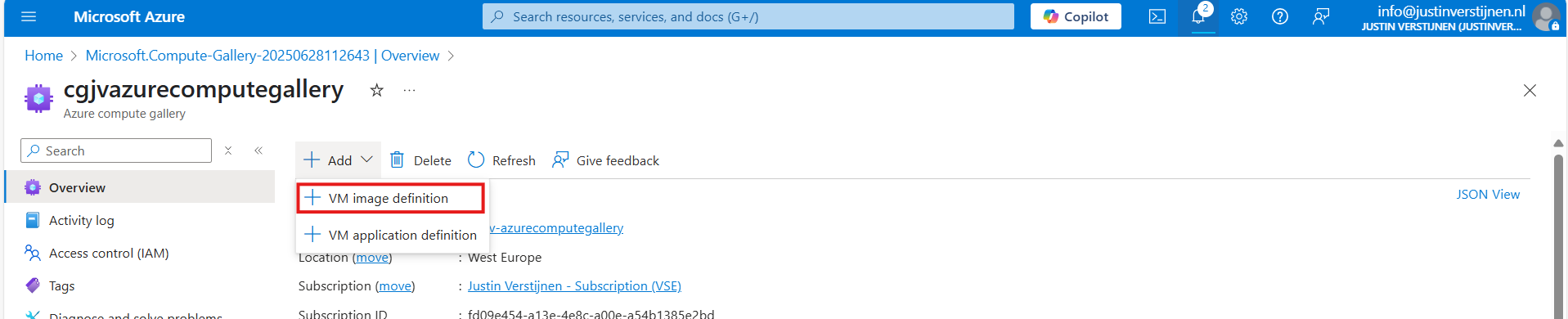

Step 5: Capture VM image and create VM definition

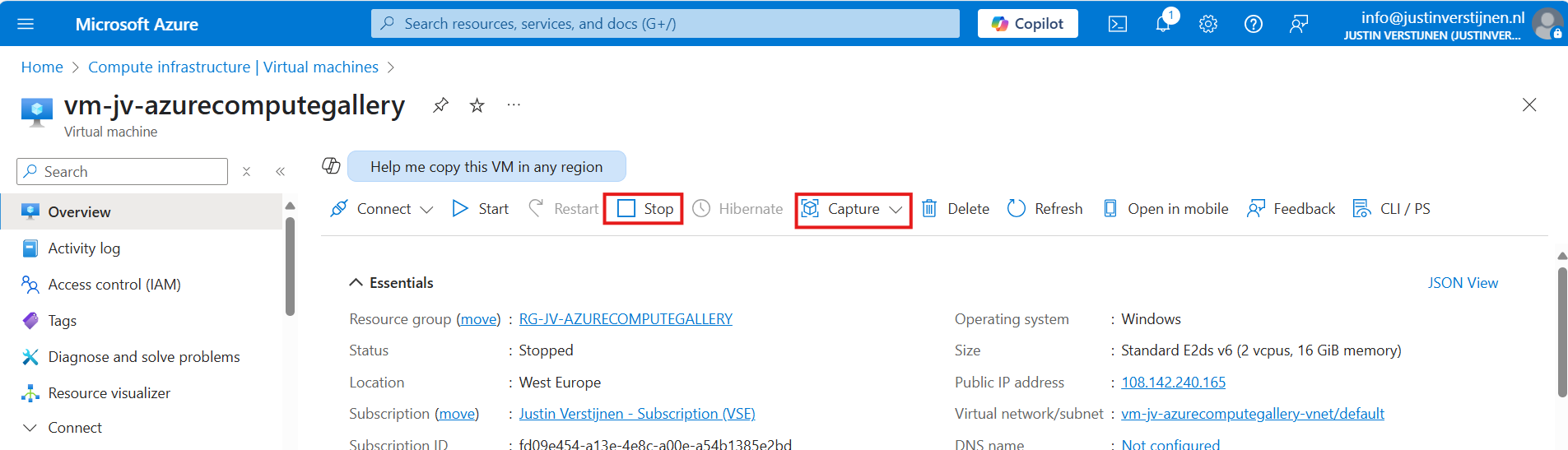

We can now finally capture our VM image and store it in the just created ACG. Go back to the virtual machine you have sysprepped.

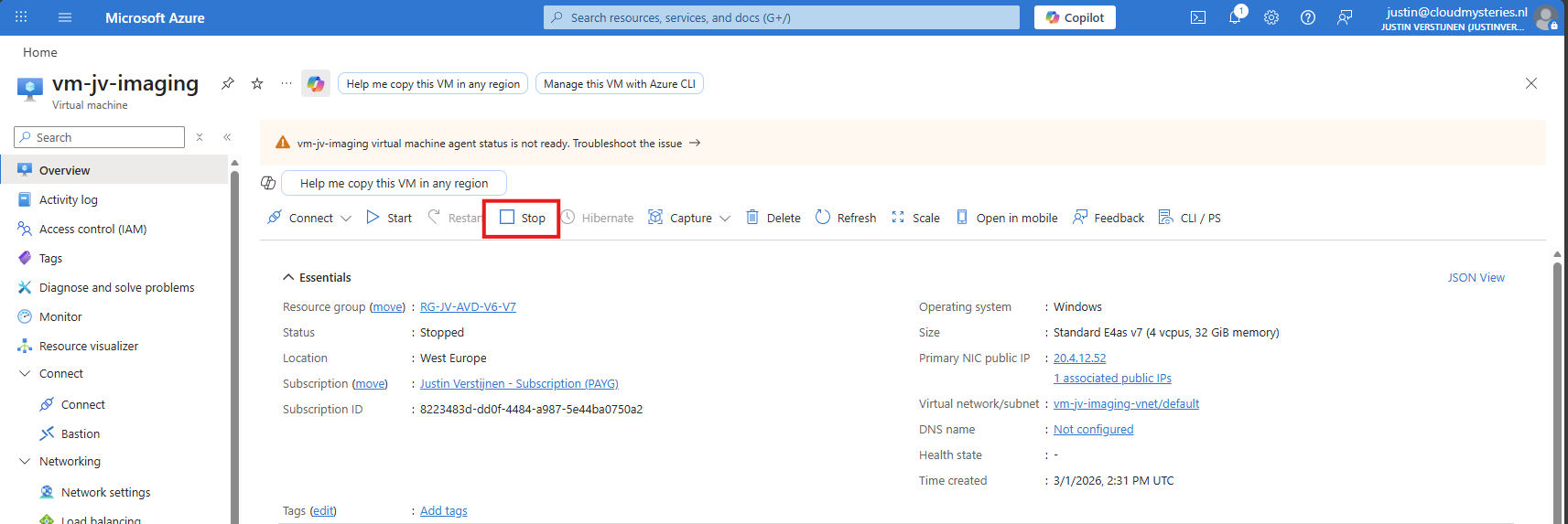

As it is “Stopped” but not “Deallocated”, we first click “Stop” to deallocate the VM. A shutdown from within the OS does not deallocate the machine; it stays allocated on the host and still incurs compute charges.
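From PowerShell, the same deallocation can be done with Stop-AzVM, which deallocates unless -StayProvisioned is used. A minimal sketch, assuming you are signed in with Connect-AzAccount; the function name is hypothetical. It also marks the VM as generalized with Set-AzVM, which I assume the portal capture would otherwise do for you, so only run it after Sysprep has completed.

```powershell
function Stop-ImagingVm {
    param(
        [Parameter(Mandatory)][string]$ResourceGroupName,
        [Parameter(Mandatory)][string]$Name
    )
    # -Force skips the confirmation prompt; without -StayProvisioned the VM is deallocated
    Stop-AzVM -ResourceGroupName $ResourceGroupName -Name $Name -Force | Out-Null
    # Mark the VM as generalized before capturing a generalized image
    Set-AzVM -ResourceGroupName $ResourceGroupName -Name $Name -Generalized | Out-Null
    # Return the power state, for example "VM deallocated"
    $status = Get-AzVM -ResourceGroupName $ResourceGroupName -Name $Name -Status
    ($status.Statuses | Where-Object Code -like 'PowerState/*').DisplayStatus
}

# Example (hypothetical names): Stop-ImagingVm -ResourceGroupName 'rg-avd-imaging' -Name 'vm-imaging01'
```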

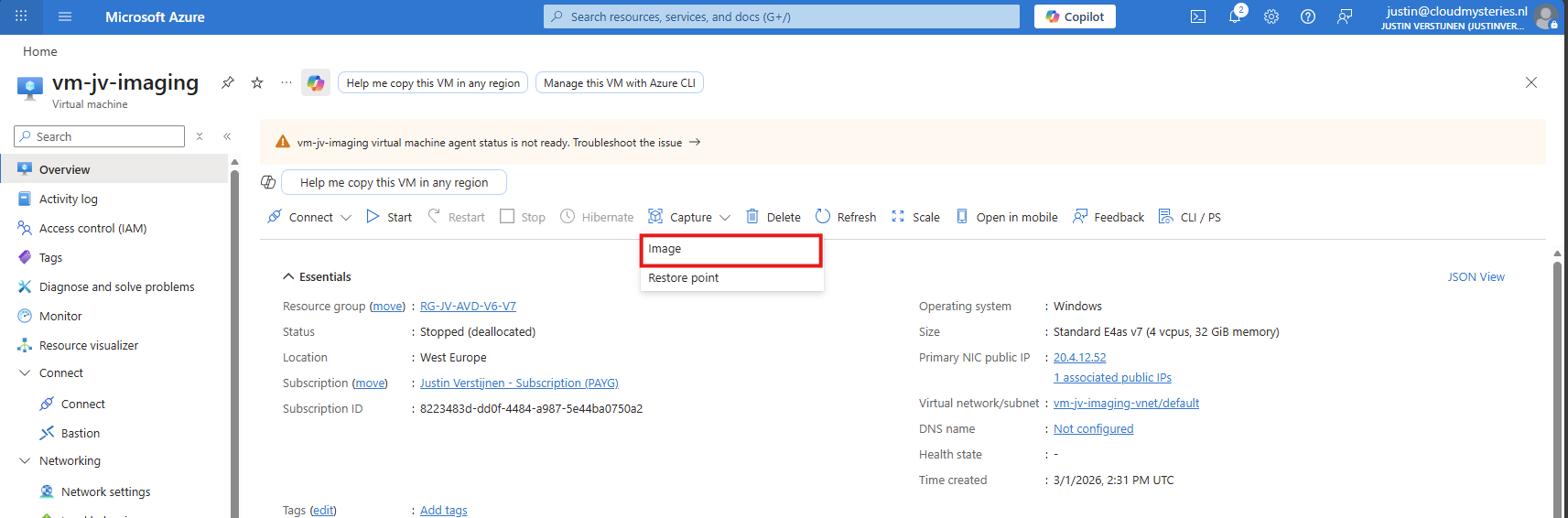

Now click “Capture” and select the “Image” option.

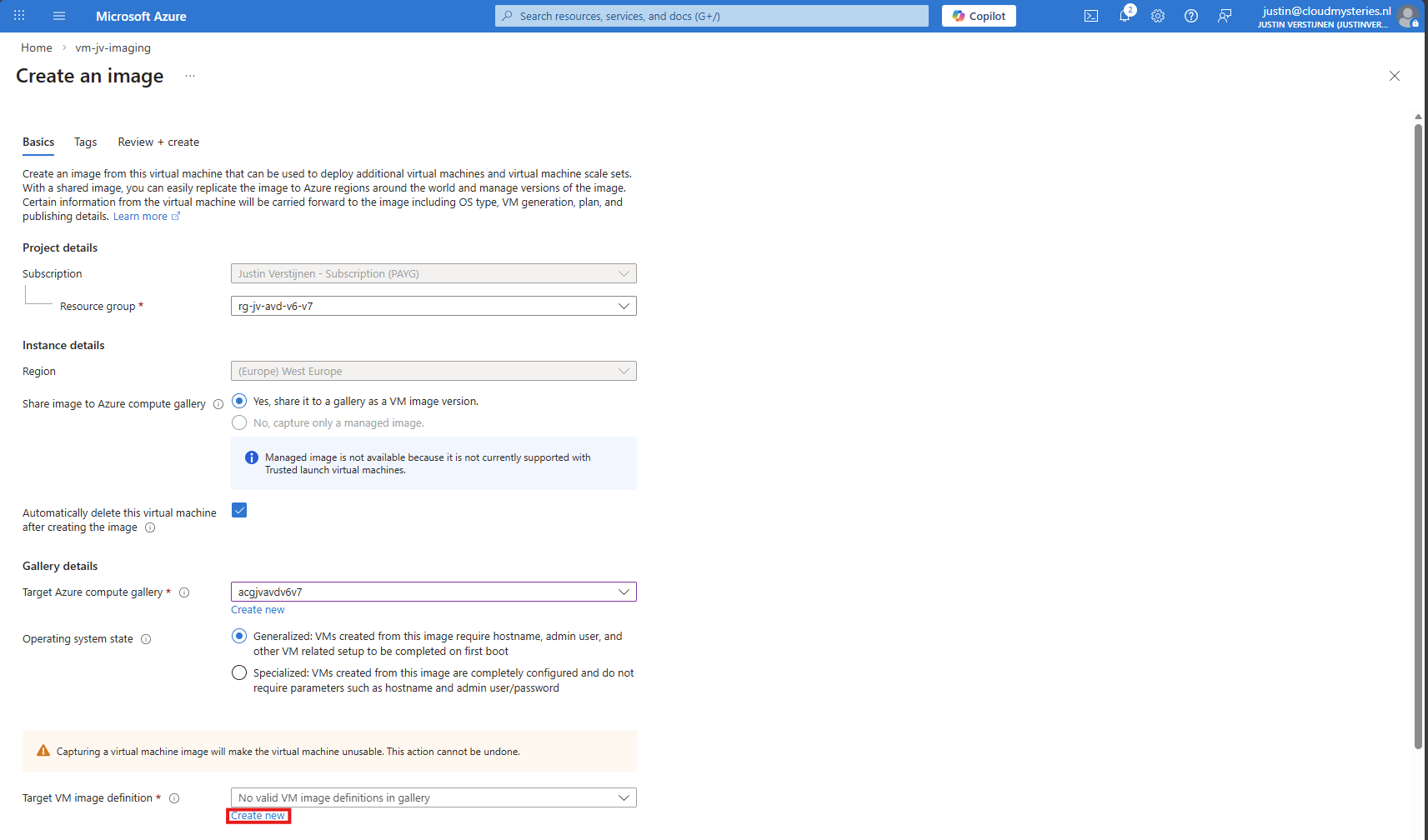

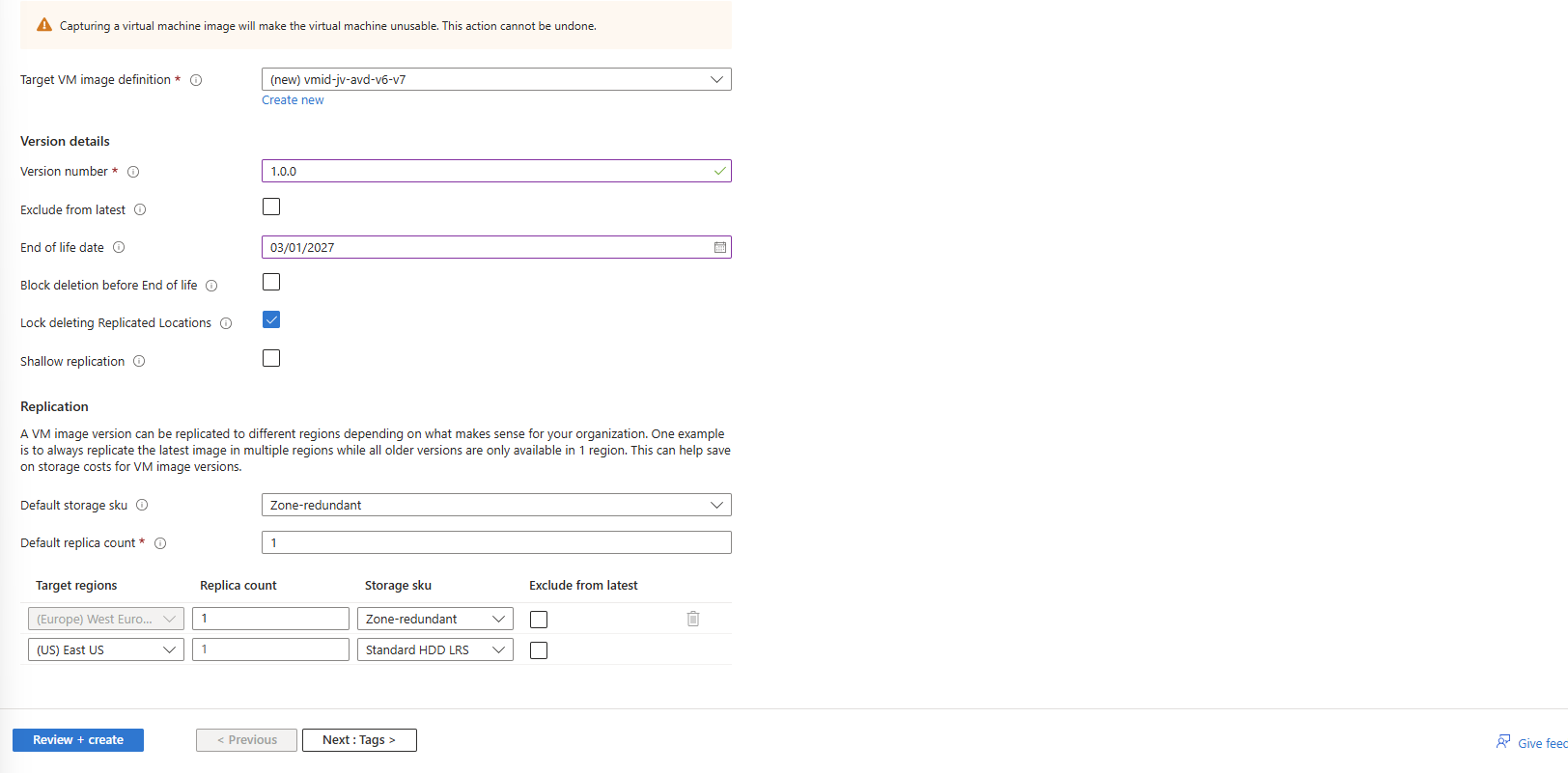

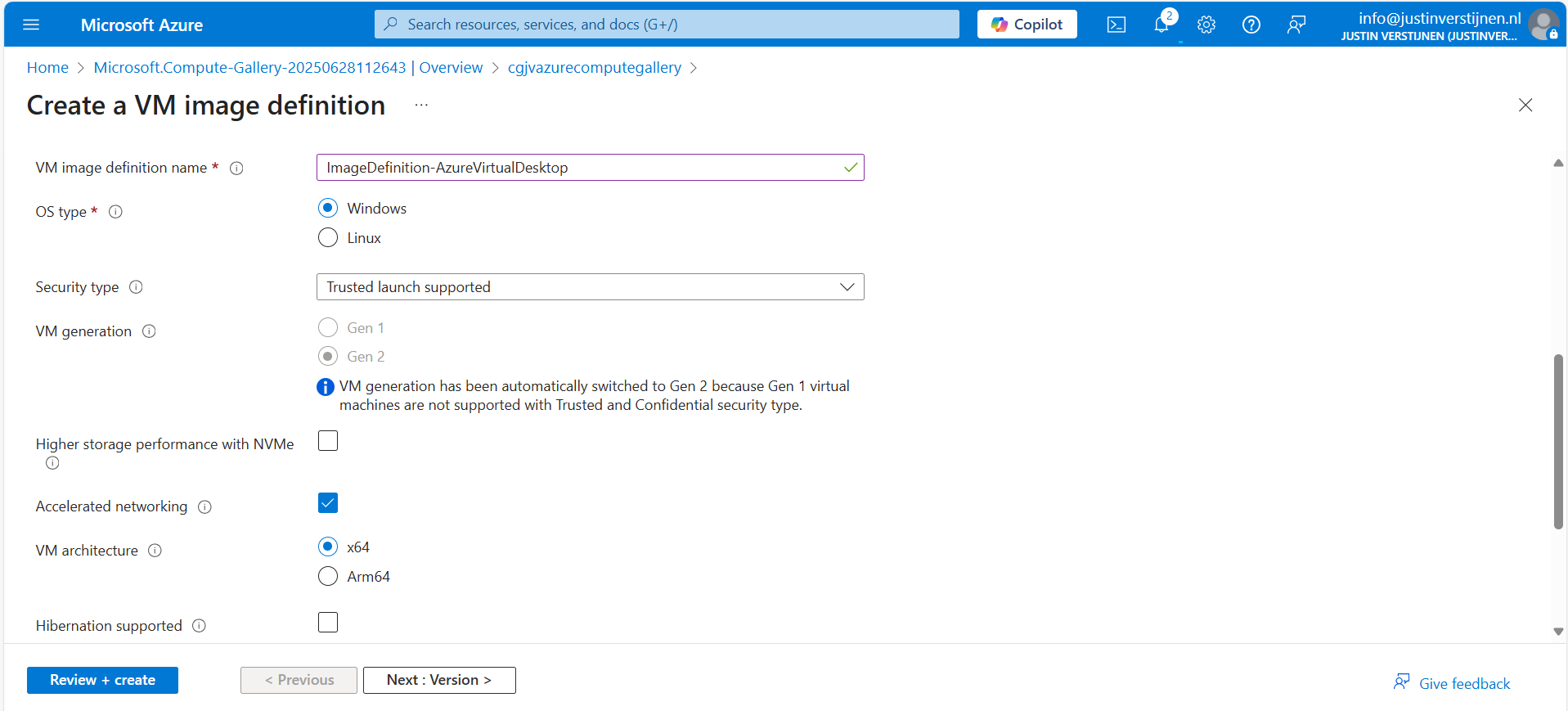

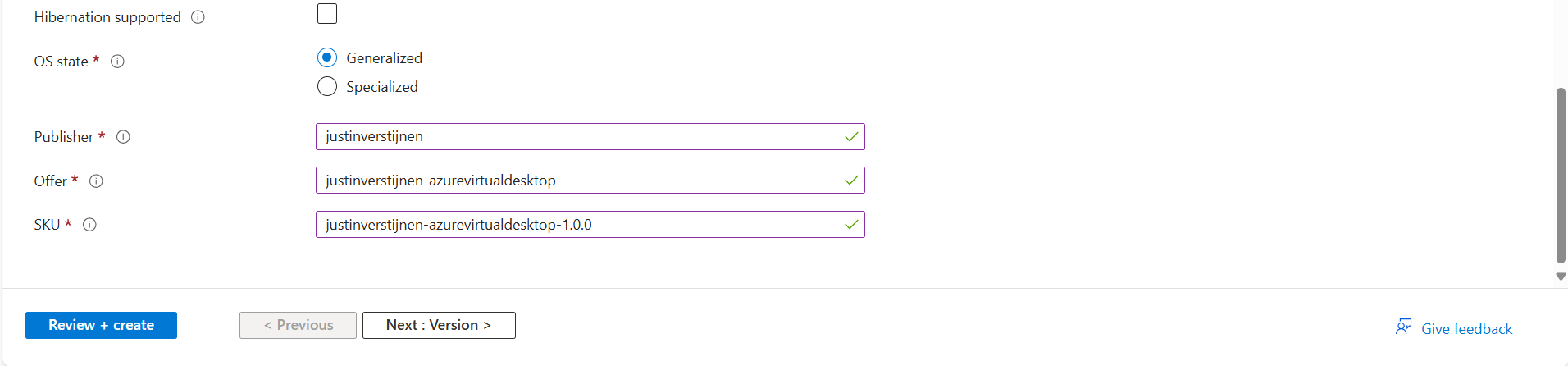

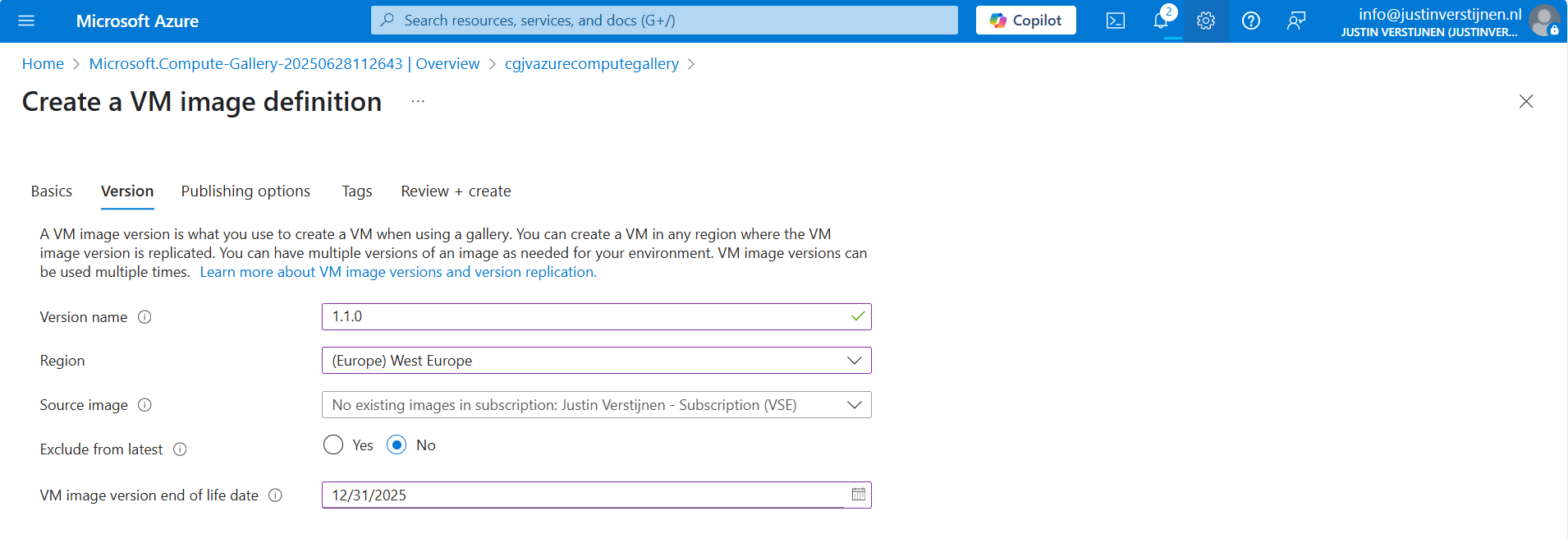

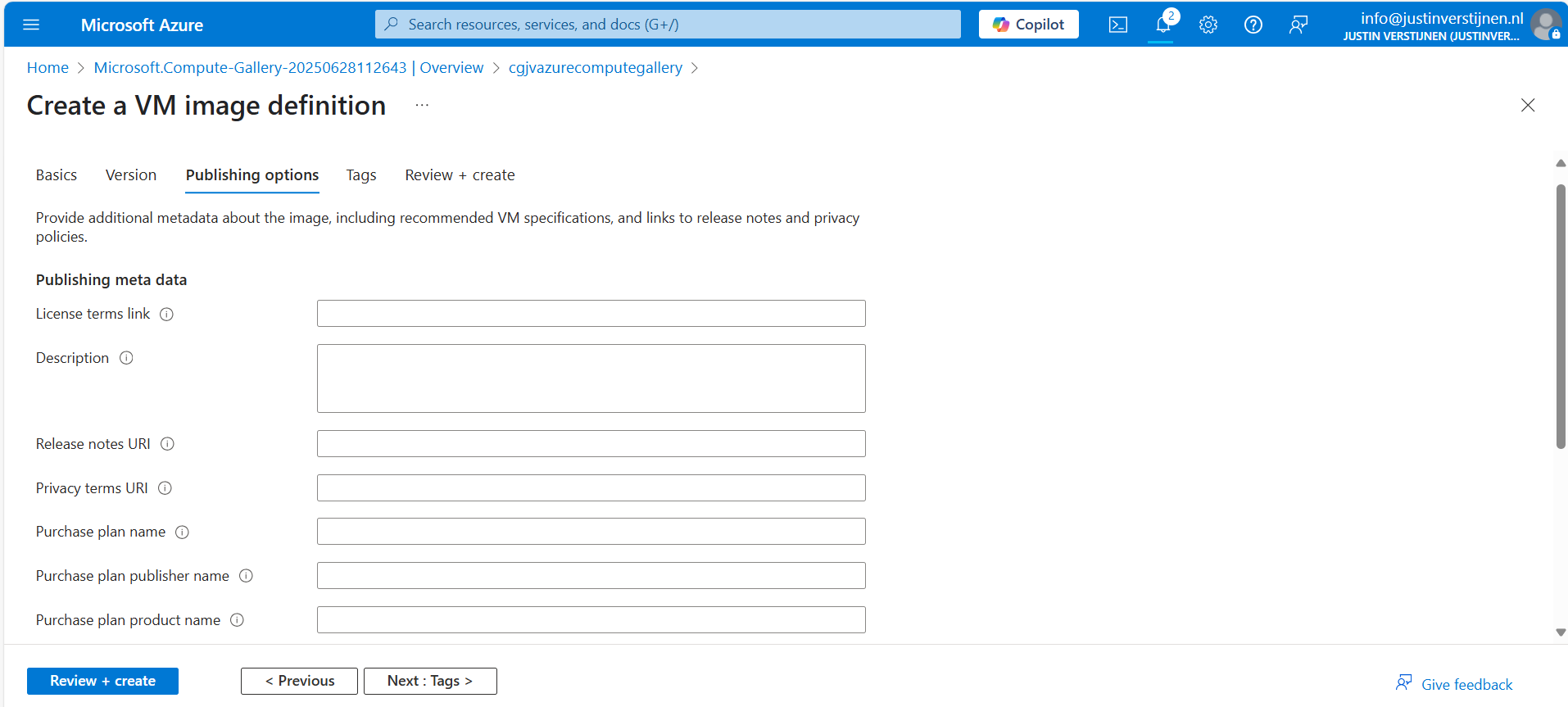

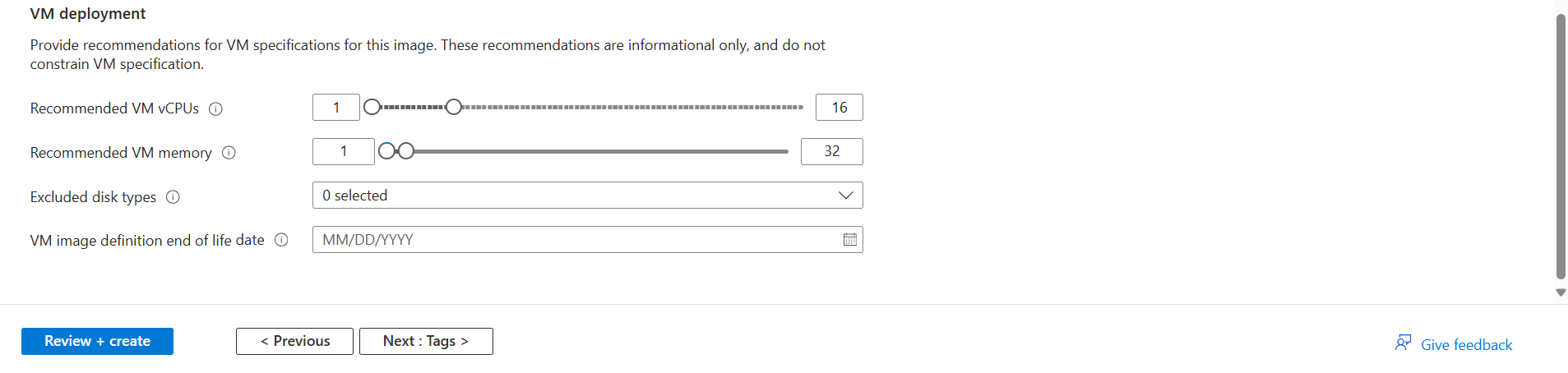

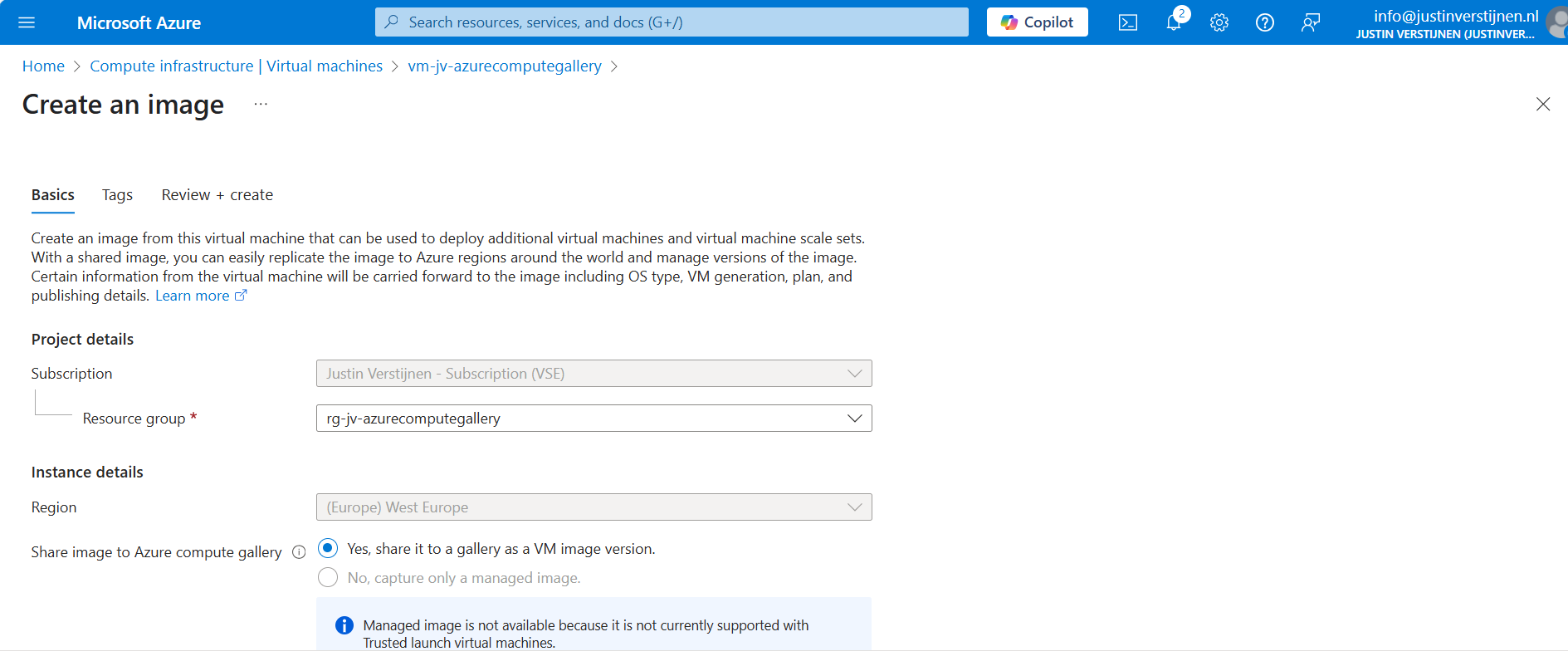

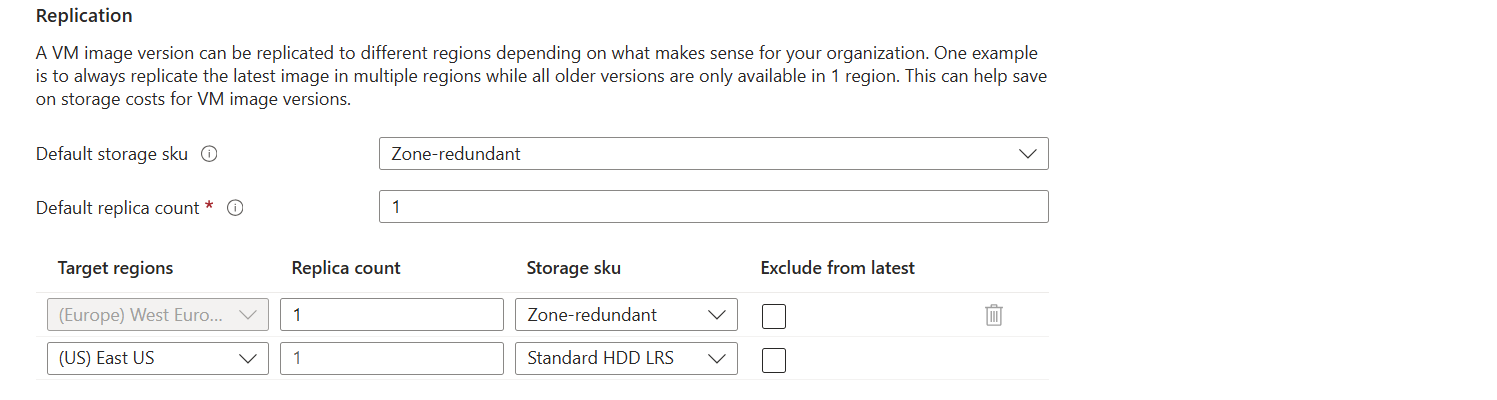

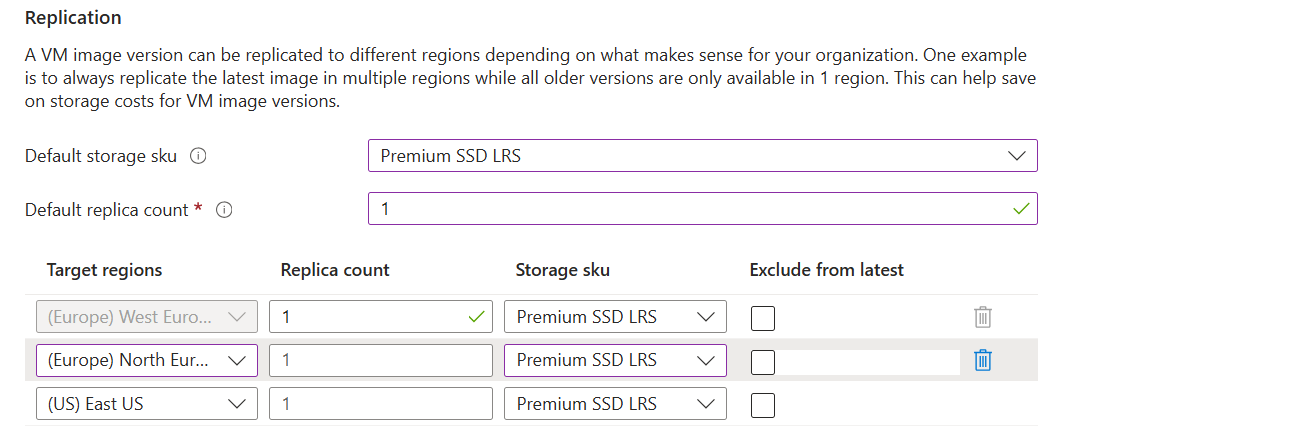

Now we get a wizard where we have to select our ACG and define our image:

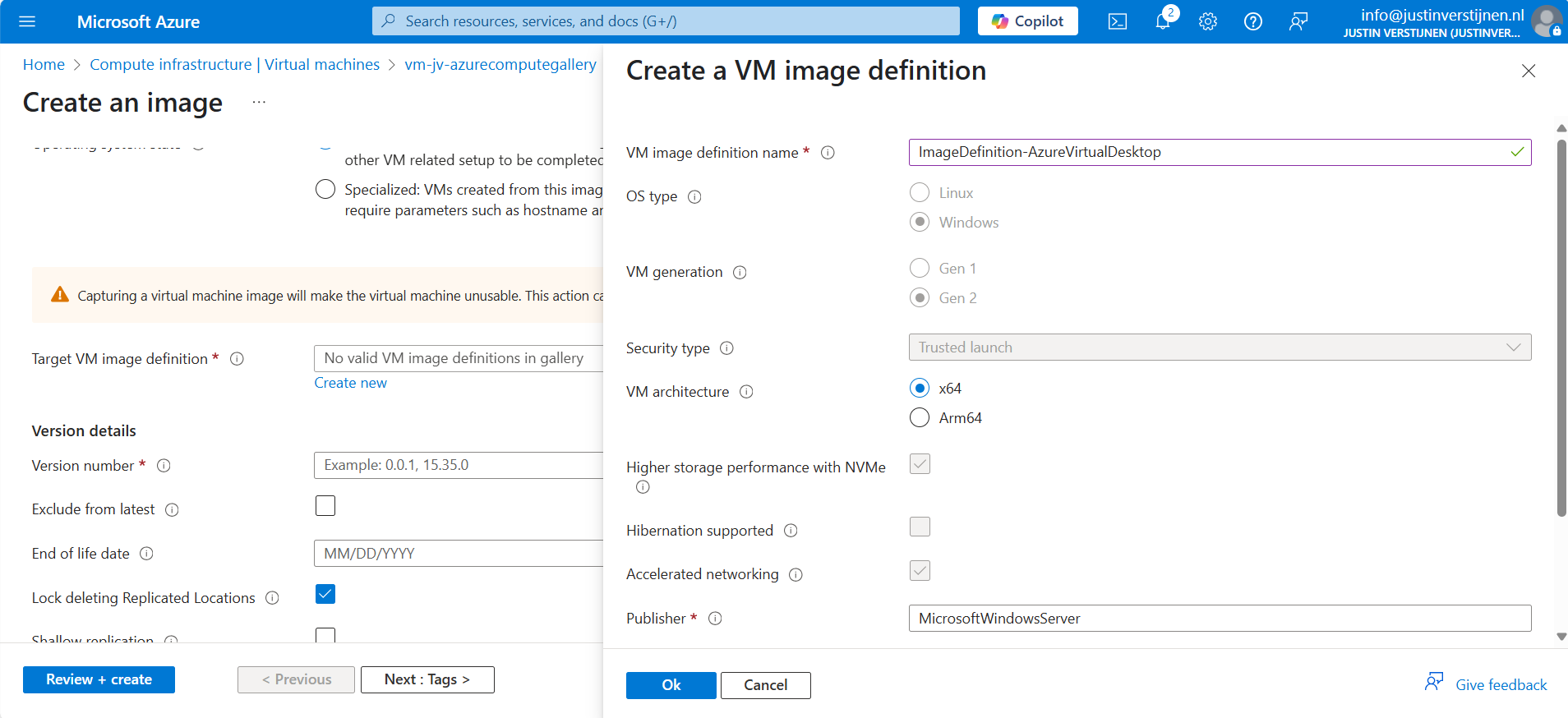

Click on “Create new” to create a new image definition:

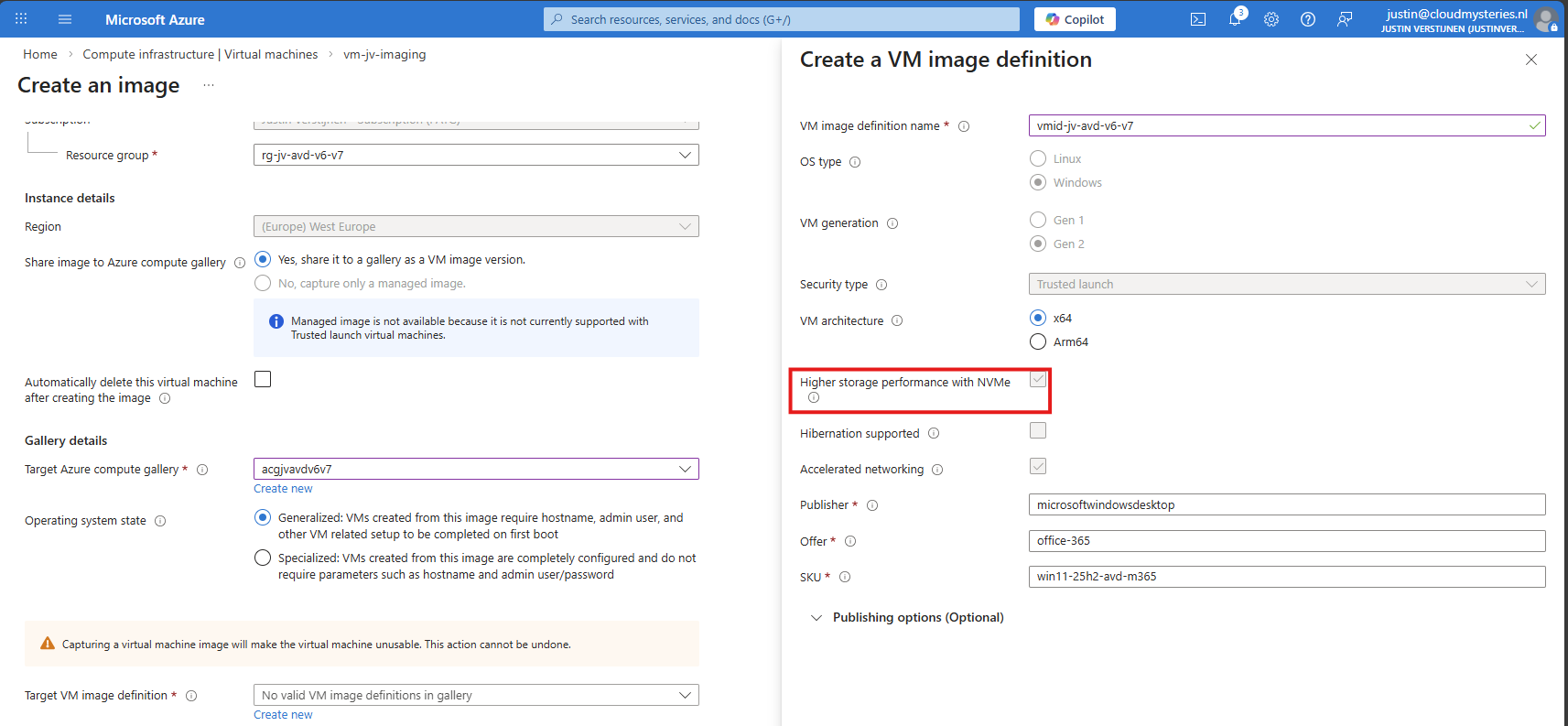

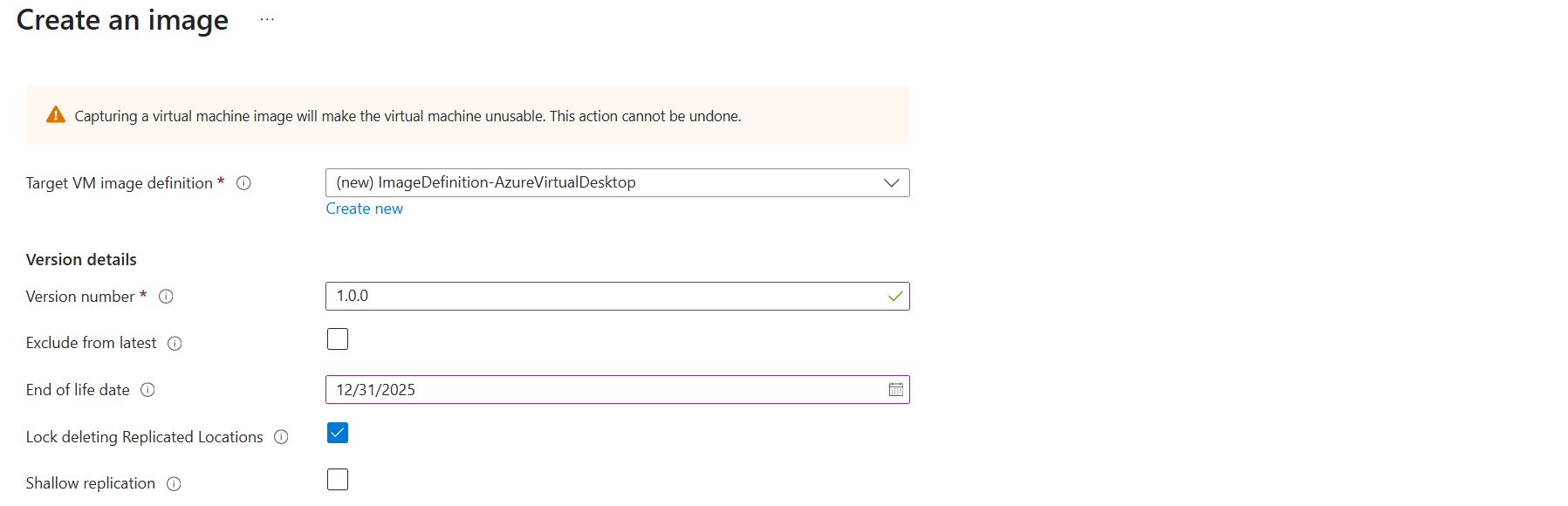

Give the definition a name and make sure the “NVMe” checkbox is selected. This enables NVMe support while keeping SCSI support. Fill in the image version and then advance through the wizard:

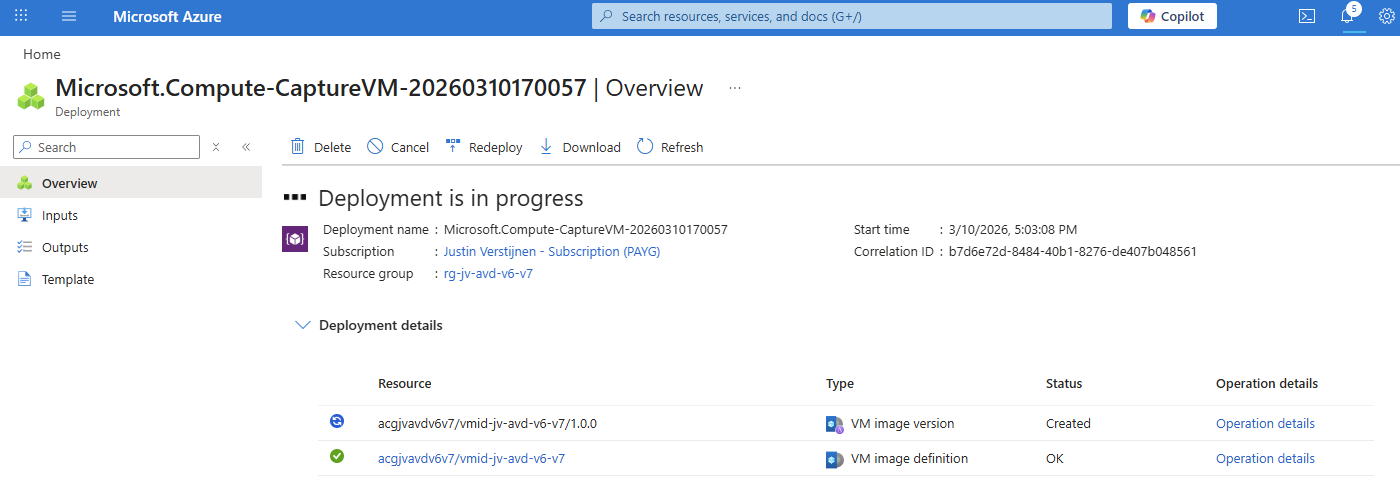

The image will then be created:

Checking image disk controller types

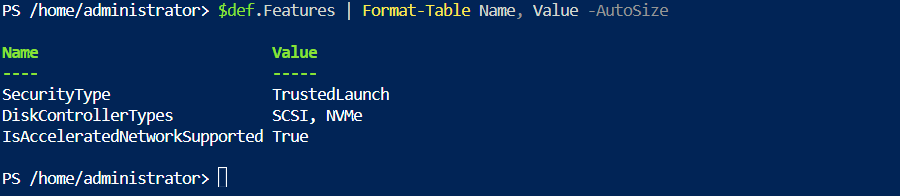

If you want, you can check which disk controller types your image supports using this simple Azure PowerShell script:

```powershell
$rg       = "your resourcegroup"
$gallery  = "your gallery"
$imageDef = "your image definition"

# Retrieve the image definition from the Azure Compute Gallery
$def = Get-AzGalleryImageDefinition `
    -ResourceGroupName $rg `
    -GalleryName $gallery `
    -Name $imageDef

# Show the image features, including DiskControllerTypes
$def.Features | Format-Table Name, Value -AutoSize
```

The result looks something like this:

The DiskControllerTypes feature shows that the image supports both SCSI and NVMe, so it can be used on a broad range of VM sizes.
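If you prefer scripting over the capture wizard, the image definition with both controller types could also be created up front with New-AzGalleryImageDefinition. A sketch under assumptions: the Publisher, Offer and Sku values are placeholders, the 'SCSI, NVMe' value format for DiskControllerTypes and the SecurityType value 'TrustedLaunch' (to match a Trusted Launch source VM) are my assumptions, and the function name is hypothetical. Verify the result with the check script above before relying on it.

```powershell
function New-AvdNvmeImageDefinition {
    param(
        [Parameter(Mandatory)][string]$ResourceGroupName,
        [Parameter(Mandatory)][string]$GalleryName,
        [Parameter(Mandatory)][string]$Name,
        [Parameter(Mandatory)][string]$Location
    )
    # Assumed feature values: both controller types, plus Trusted Launch to match the source VM
    $features = @(
        @{ Name = 'DiskControllerTypes'; Value = 'SCSI, NVMe' }
        @{ Name = 'SecurityType'; Value = 'TrustedLaunch' }
    )
    # Publisher/Offer/Sku are required identifiers; these values are placeholders
    New-AzGalleryImageDefinition `
        -ResourceGroupName $ResourceGroupName `
        -GalleryName $GalleryName `
        -Name $Name `
        -Location $Location `
        -Publisher 'MyCompany' `
        -Offer 'AVD-Win11-MultiSession' `
        -Sku 'NVMe' `
        -OsState 'Generalized' `
        -OsType 'Windows' `
        -HyperVGeneration 'V2' `
        -Feature $features
}
```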

Step 6: Deploy the new NVMe image

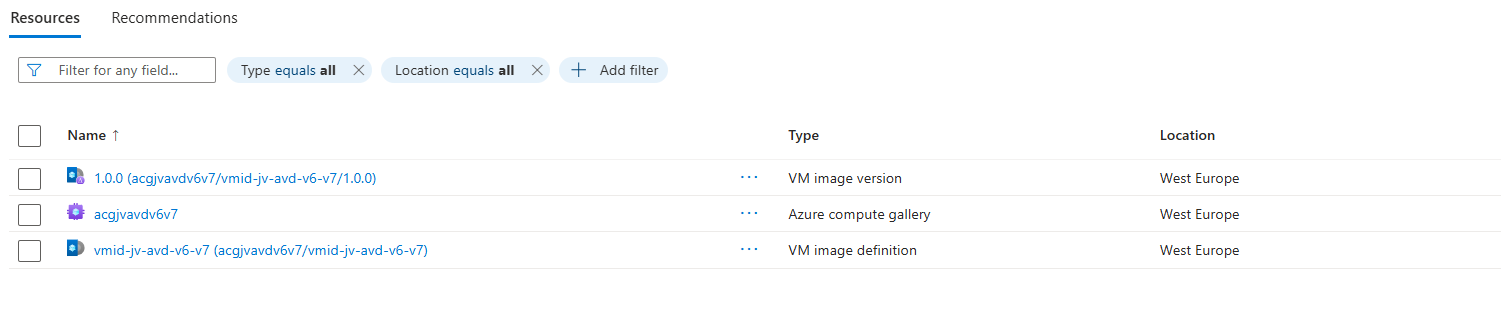

After the image was captured, I removed the imaging VM from my environment, which you can also do automatically in the image capture wizard. I ended up with these three resources:

Keep these resources; the image definition will get new image versions as you capture more images over its lifecycle.

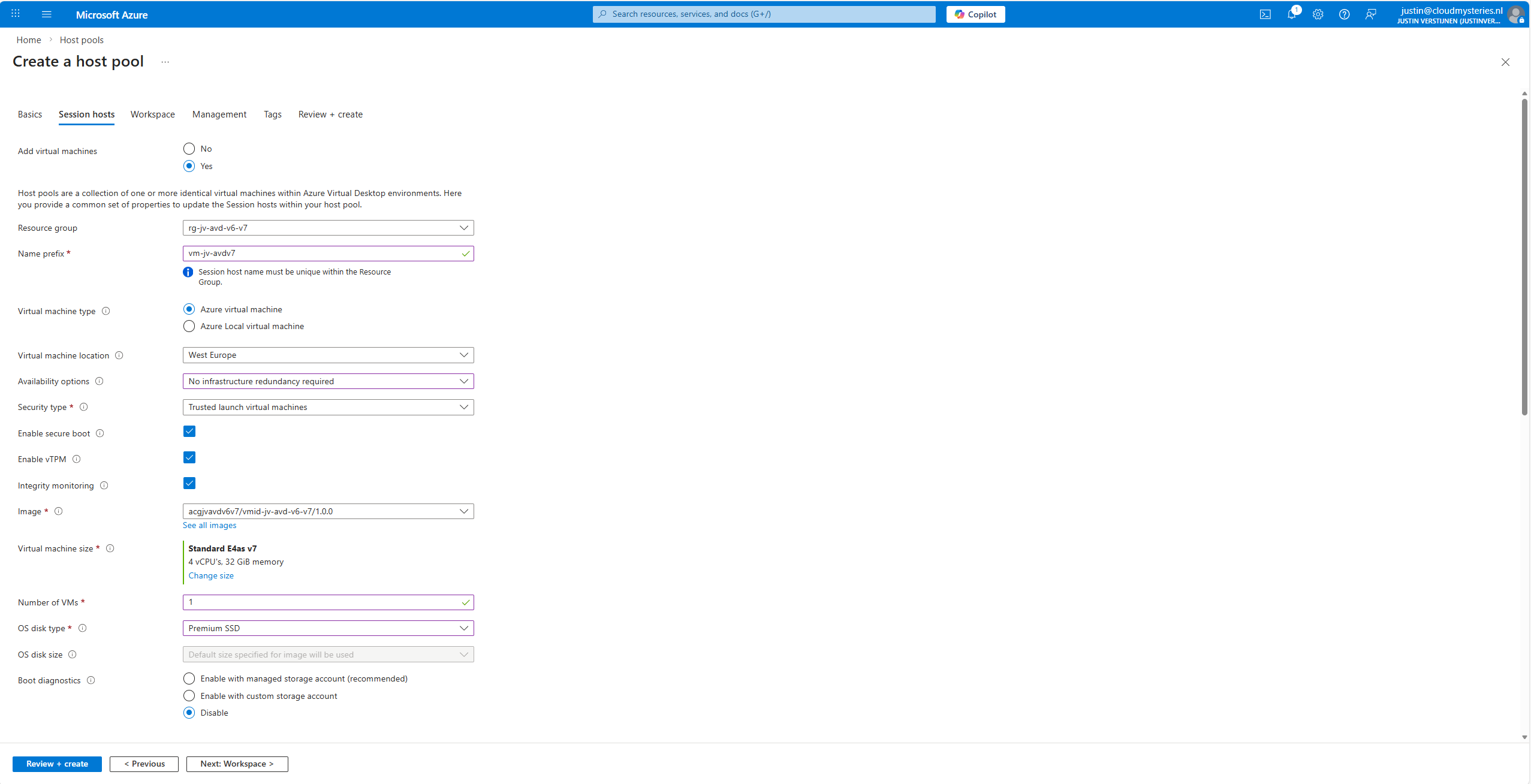

We will now deploy an Azure Virtual Desktop hostpool with one VM to test whether we can select v7 machines in the wizard. Go to “Host pools” and create a new hostpool if you haven't done so already. Adding VMs to an existing hostpool is also possible.

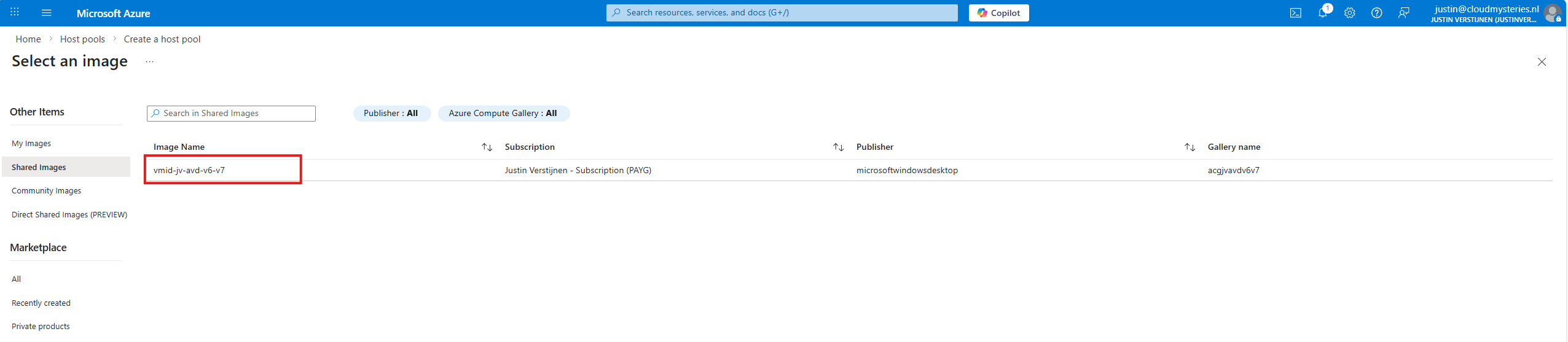

The next tab is more important, as we have to actually add the virtual machines there:

In the “Image” section, click “See all images” and then select your shared image definition. This automatically picks the latest version stored in that definition.

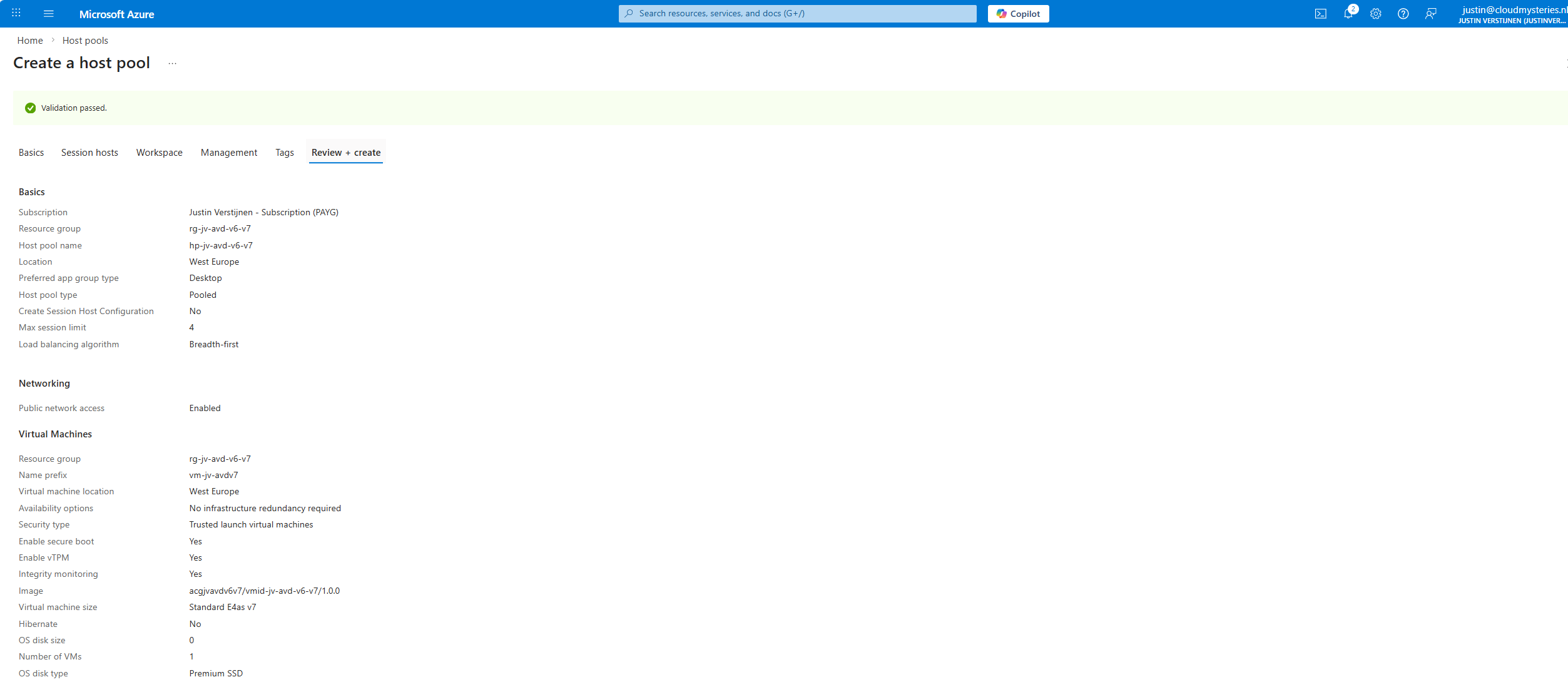

Now advance through the Azure Virtual Desktop hostpool wizard and finish.

This will create a hostpool with the machines in it with the best specifications and highest security options available at this moment.

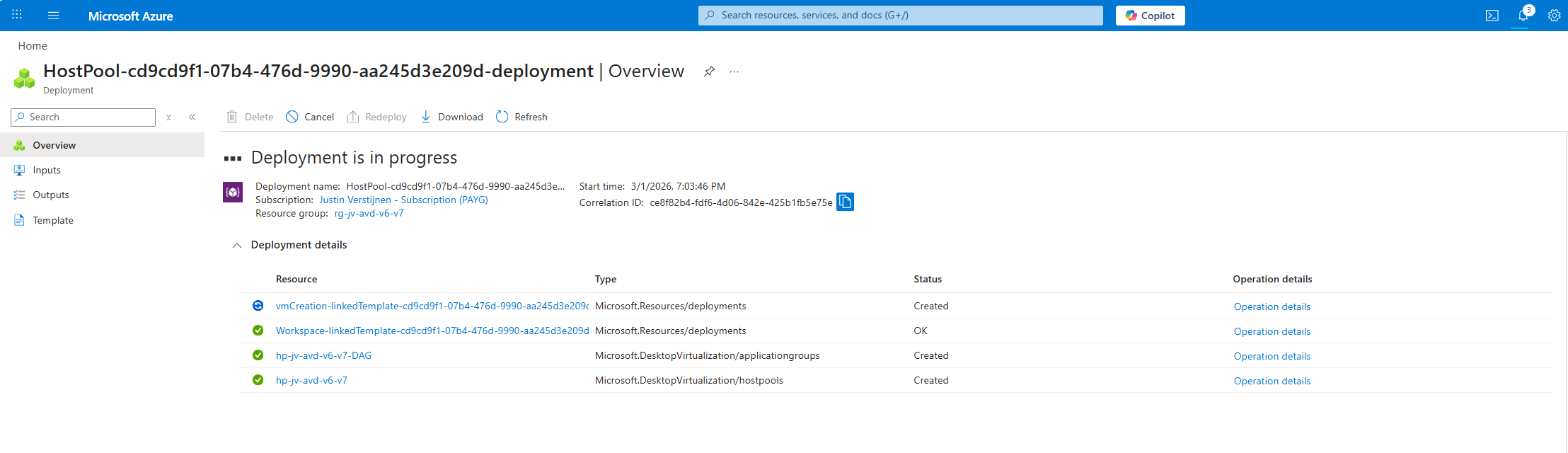

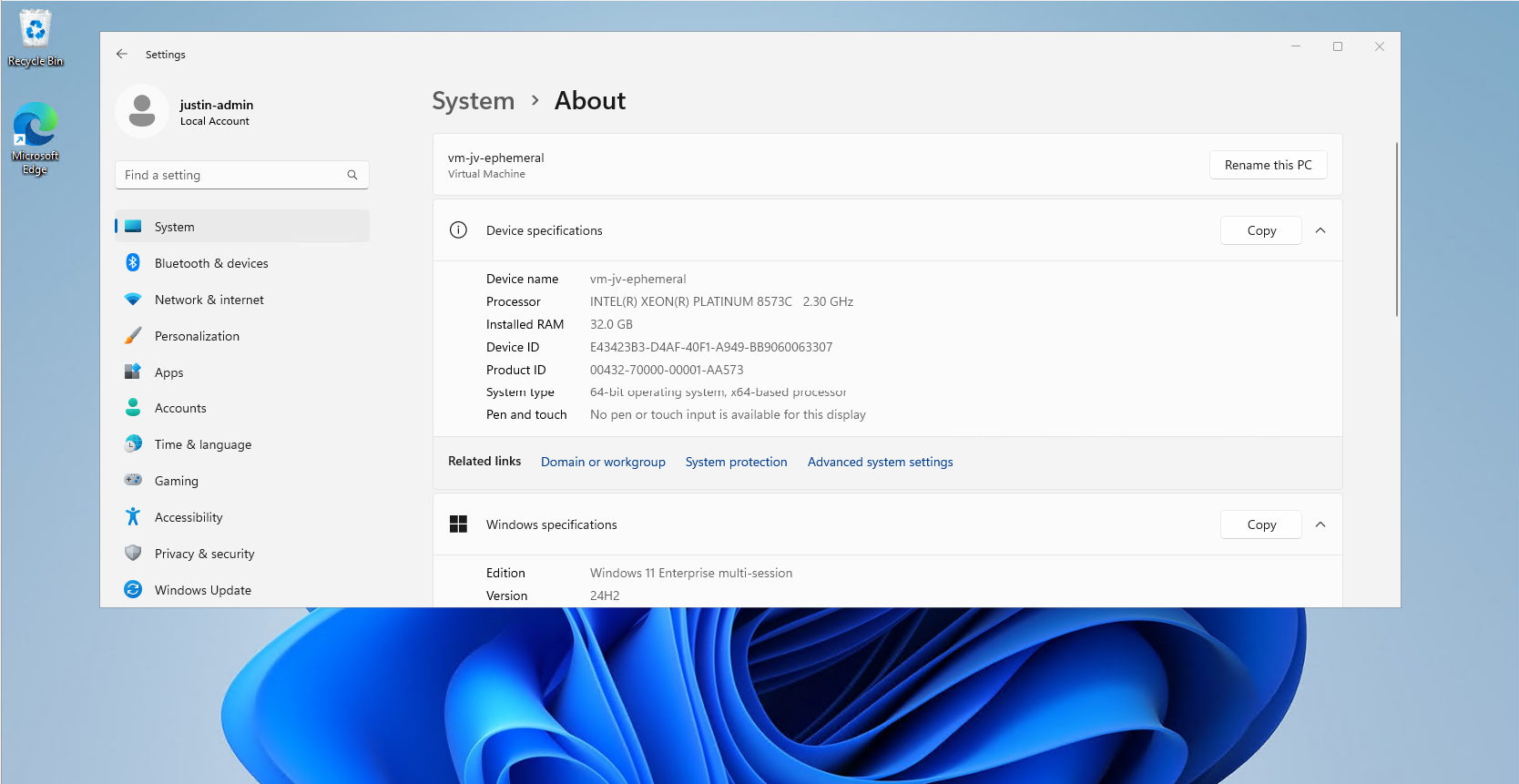

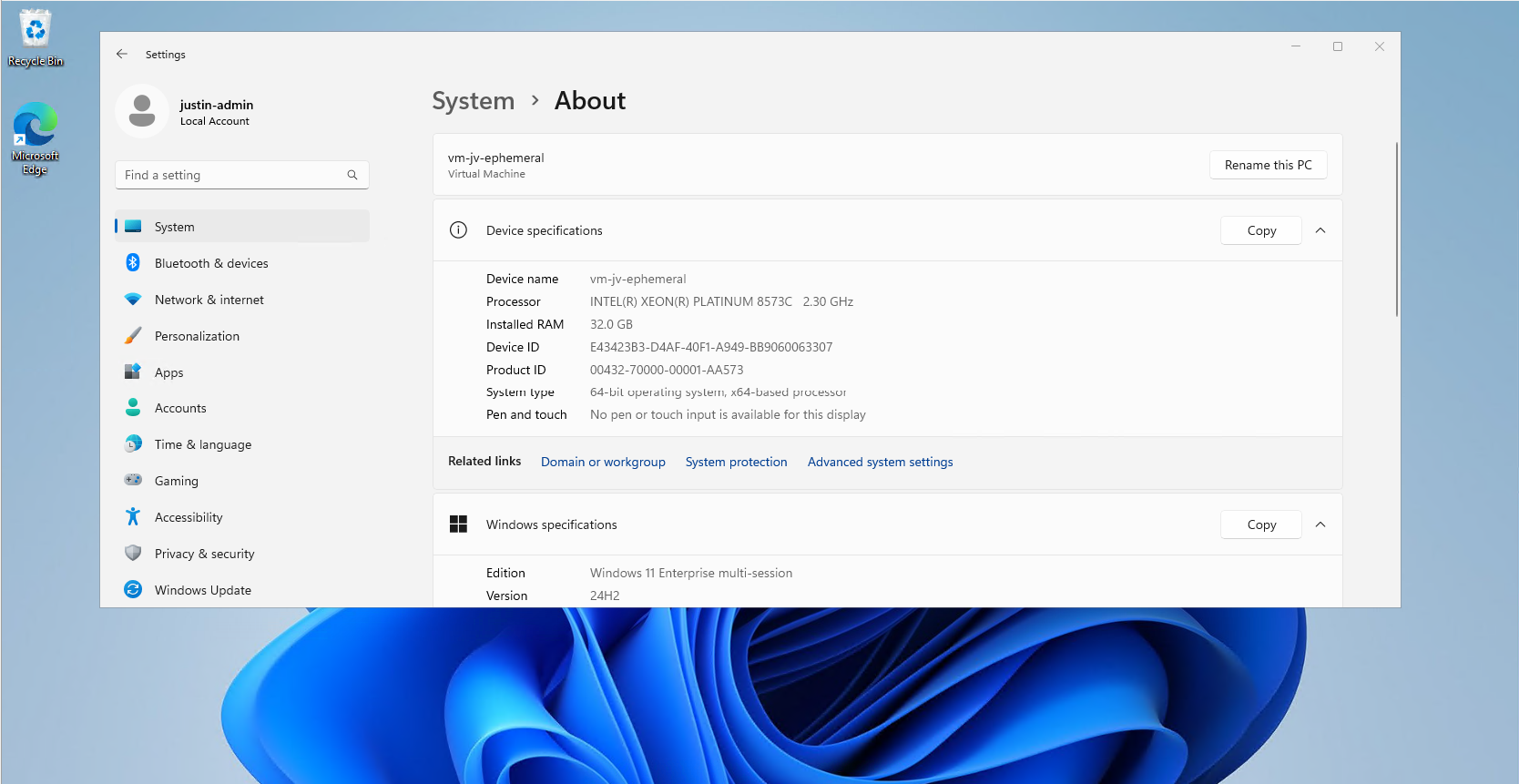

Step 7: Testing the virtual machine

After the hostpool is deployed, we can check the result. The hostpool and machine are online:

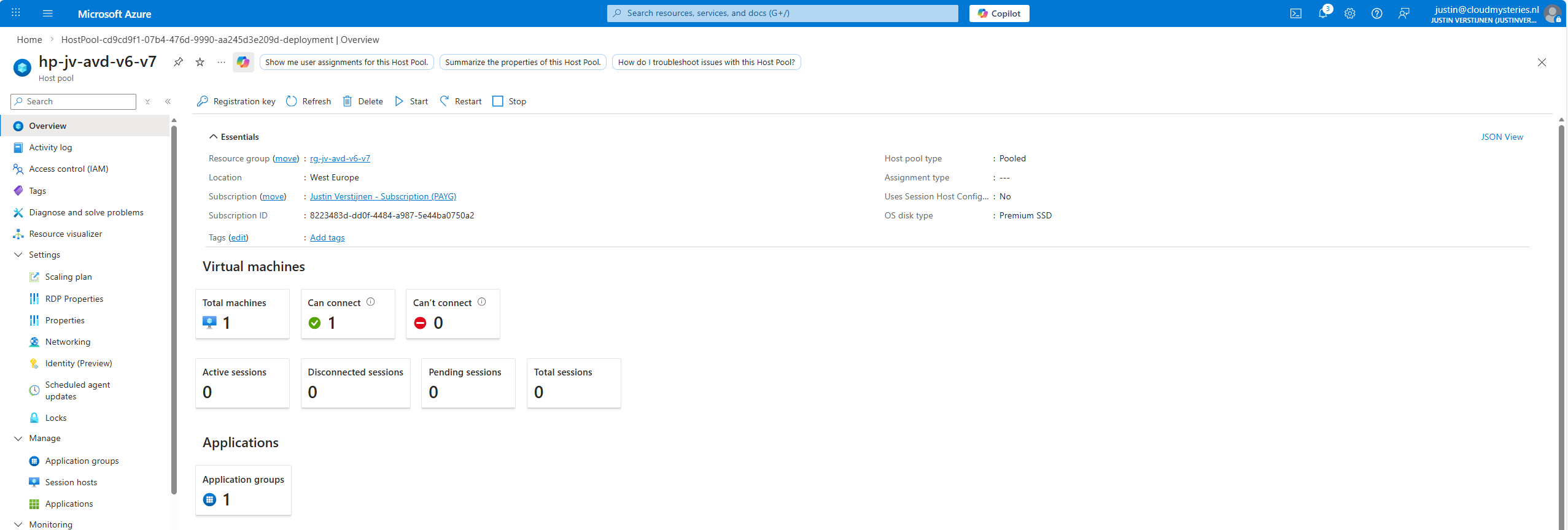

Looking inside the VM itself, we can confirm that this is a newer-generation virtual machine:

Now I have finished the configuration of the hostpool as described in my AVD implementation guide: https://justinverstijnen.nl/azure-virtual-desktop-fslogix-and-native-kerberos-authentication/#9-preparing-the-hostpool
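To double-check from inside a session host that the disks are attached over NVMe, you can list the physical disks with their bus type. A minimal sketch using the Windows Storage module; the function name is hypothetical, and I would expect BusType to read NVMe on v6/v7 sizes and SCSI on older sizes, which is worth verifying on your own hosts.

```powershell
function Get-DiskBusType {
    # Lists the disks Windows sees and the bus they are attached through
    Get-PhysicalDisk |
        Sort-Object -Property DeviceId |
        Select-Object DeviceId, FriendlyName, BusType,
            @{ Name = 'SizeGB'; Expression = { [math]::Round($_.Size / 1GB) } }
}

# Example (inside the session host): Get-DiskBusType | Format-Table -AutoSize
```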

Summary

If you want to use newer V6 or V7 AVD machines, you need to switch to an NVMe-compatible image workflow with Azure Compute Gallery. That is the supported way to build, version, and deploy modern AVD session hosts.

I hope I also explained a bit about how these newer VMs work and why you could get these errors in the first place: by still using a method Microsoft wants you to move away from. I really think Azure Compute Gallery is the better option right now, although it takes a bit more configuration.

Thank you for reading this guide and I hope it was helpful.

Sources

These sources helped me with writing and researching this post:

- https://learn.microsoft.com/en-us/azure/virtual-machines/shared-image-galleries

- https://learn.microsoft.com/en-us/azure/virtual-machines/nvme-overview

- https://learn.microsoft.com/en-us/azure/virtual-machines/enable-nvme-interface

- https://justinverstijnen.nl/azure-compute-gallery-and-avd-vm-images/


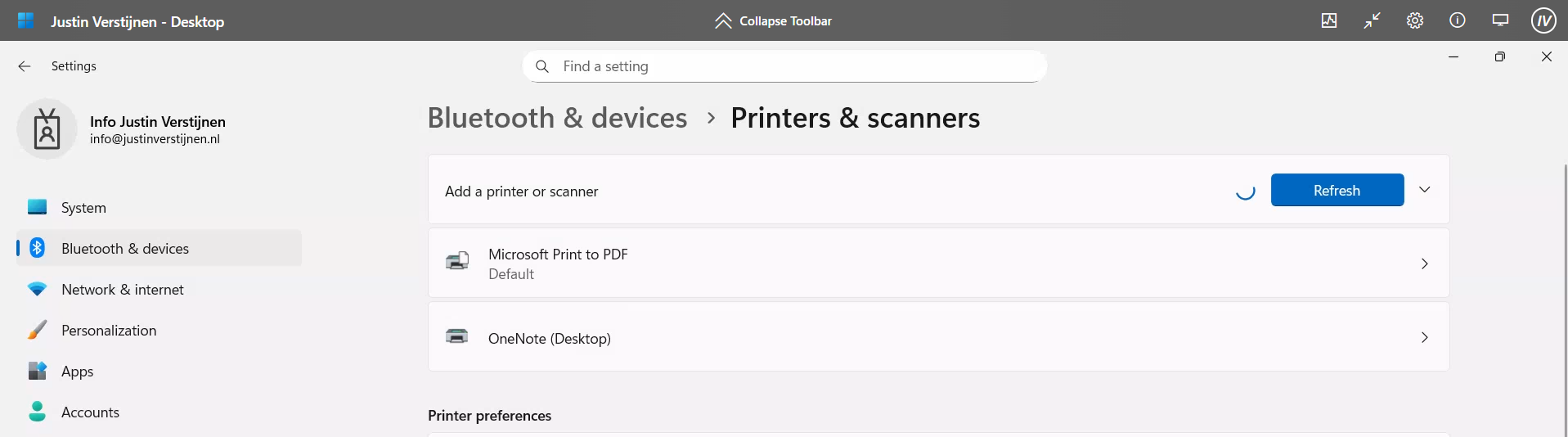

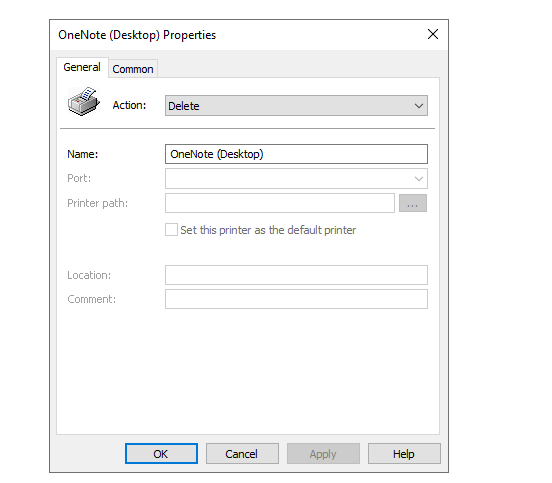

Remove Microsoft Print to PDF and OneNote printers script

In this guide, I will show you how to delete the Microsoft Print to PDF and OneNote printers using a PowerShell script. This is compatible with Microsoft Intune and Group Policy and can be used on physical devices, Azure Virtual Desktop and Windows 365.

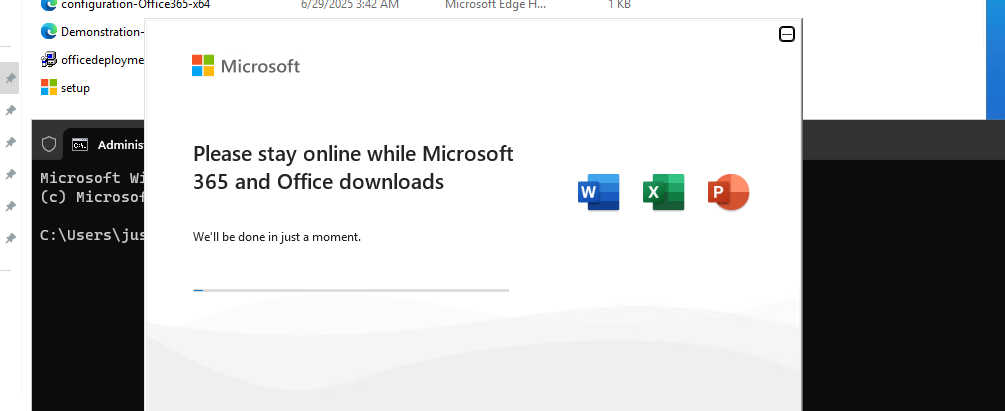

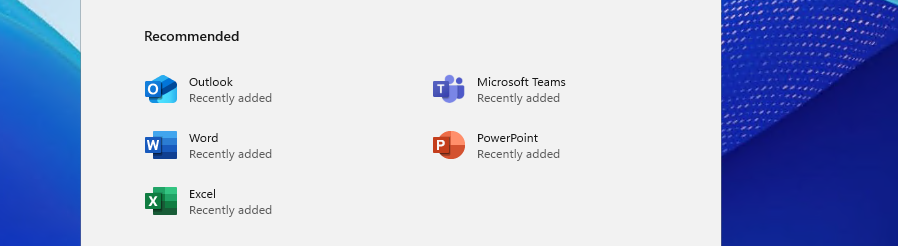

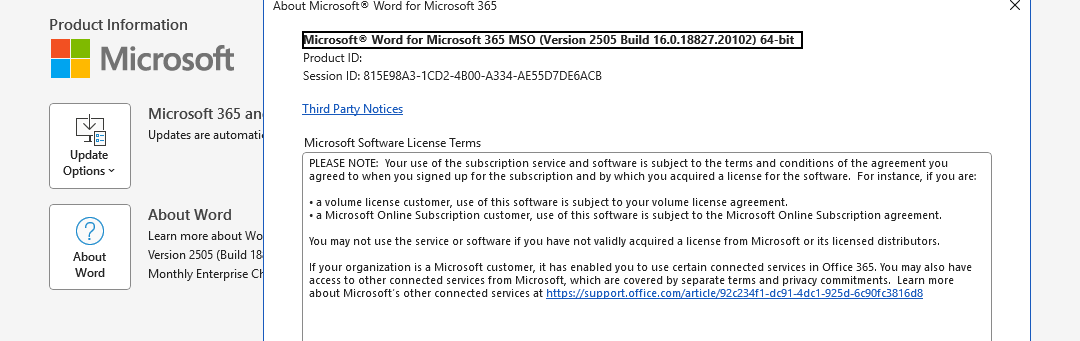

By default, Windows 11 with Microsoft 365 Apps installed has two software printers:

- OneNote (Desktop)

- Microsoft Print to PDF

However, many users don't use them, and they sometimes annoyingly become the default printer, which we want to avoid. Most software has a built-in option to save as PDF, so the PDF printer is largely redundant. They also push our real printers further down the list, which causes its own problems for end users.

The PowerShell script

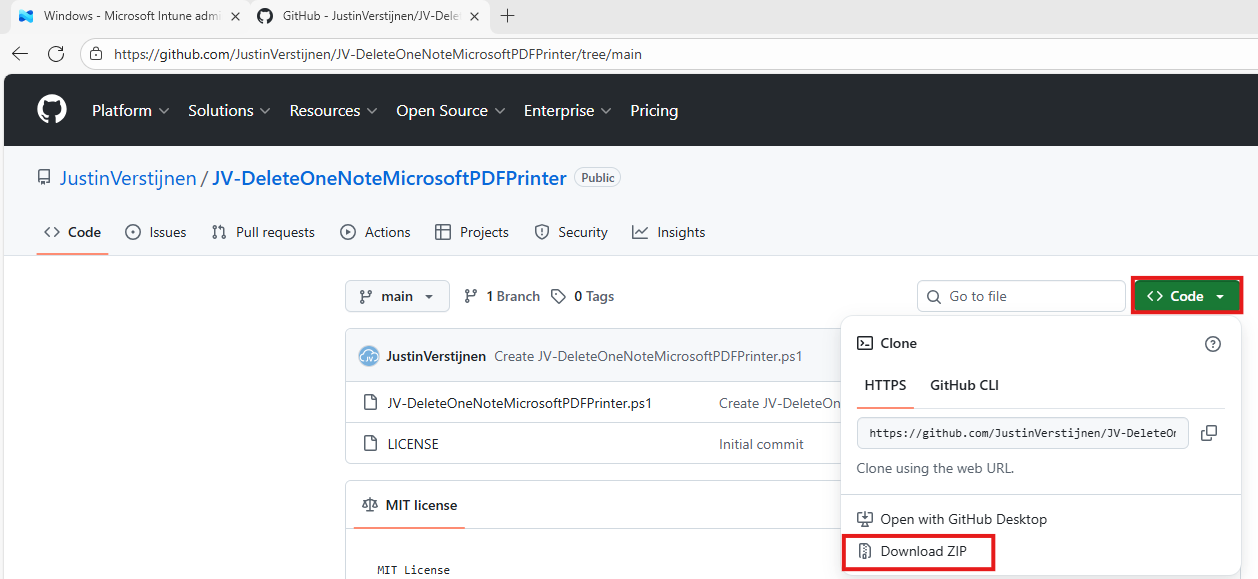

The PowerShell script can be downloaded from my GitHub page:

On the GitHub page, click “<> Code” and then “Download ZIP”.

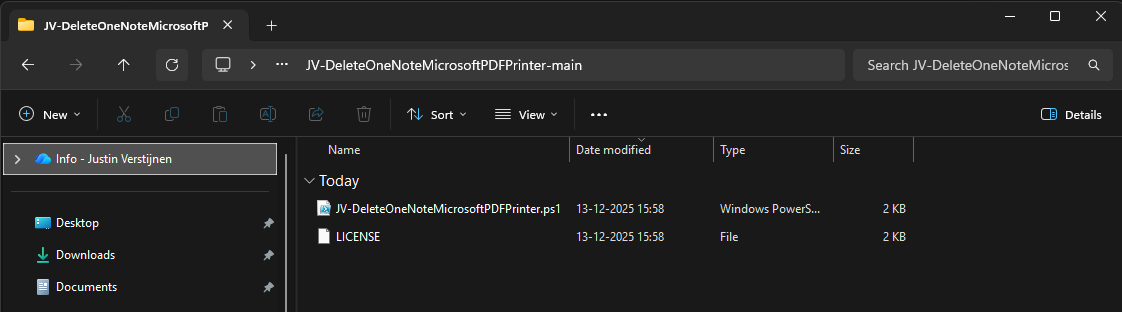

Unzip the file to get the PowerShell script:

The script described

The script contains two steps, one for each printer. The OneNote printer is easy to remove: it only needs to be deleted and will not return until you reinstall Office. The Microsoft Print to PDF printer requires removing a Windows feature.

This cannot be accomplished with native Intune or Group Policy settings, so we have to use a script. I have described two deployment options below, so you can choose the one that fits your environment. The script can also be used with other management systems, but the steps may differ.
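To illustrate the two steps, a minimal sketch of what such a script can look like. This is not the script from my GitHub page; the function name is hypothetical, the printer name 'OneNote (Desktop)' is taken from the list above (check Get-Printer on your own devices), and it assumes the Print to PDF printer comes from the optional feature Printing-PrintToPDFServices-Features. It needs to run elevated, which is the case for Intune platform scripts and Group Policy startup scripts running as SYSTEM.

```powershell
function Remove-SoftwarePrinter {
    [CmdletBinding(SupportsShouldProcess)]
    param(
        [string]$OneNotePrinterName = 'OneNote (Desktop)',
        [string]$PdfFeatureName = 'Printing-PrintToPDFServices-Features'
    )

    # Step 1: remove the OneNote printer if it is present
    $printer = Get-Printer -Name $OneNotePrinterName -ErrorAction SilentlyContinue
    if ($printer -and $PSCmdlet.ShouldProcess($OneNotePrinterName, 'Remove printer')) {
        Remove-Printer -Name $OneNotePrinterName
    }

    # Step 2: disable the optional Windows feature behind Microsoft Print to PDF
    $feature = Get-WindowsOptionalFeature -Online -FeatureName $PdfFeatureName -ErrorAction SilentlyContinue
    if ($feature -and $feature.State -eq 'Enabled' -and
        $PSCmdlet.ShouldProcess($PdfFeatureName, 'Disable optional feature')) {
        Disable-WindowsOptionalFeature -Online -FeatureName $PdfFeatureName -NoRestart | Out-Null
    }
}

# Example: preview first with Remove-SoftwarePrinter -WhatIf, then run Remove-SoftwarePrinter
```

Because both steps first check whether the printer or feature is still present, running it at every startup is harmless once the printers are gone.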

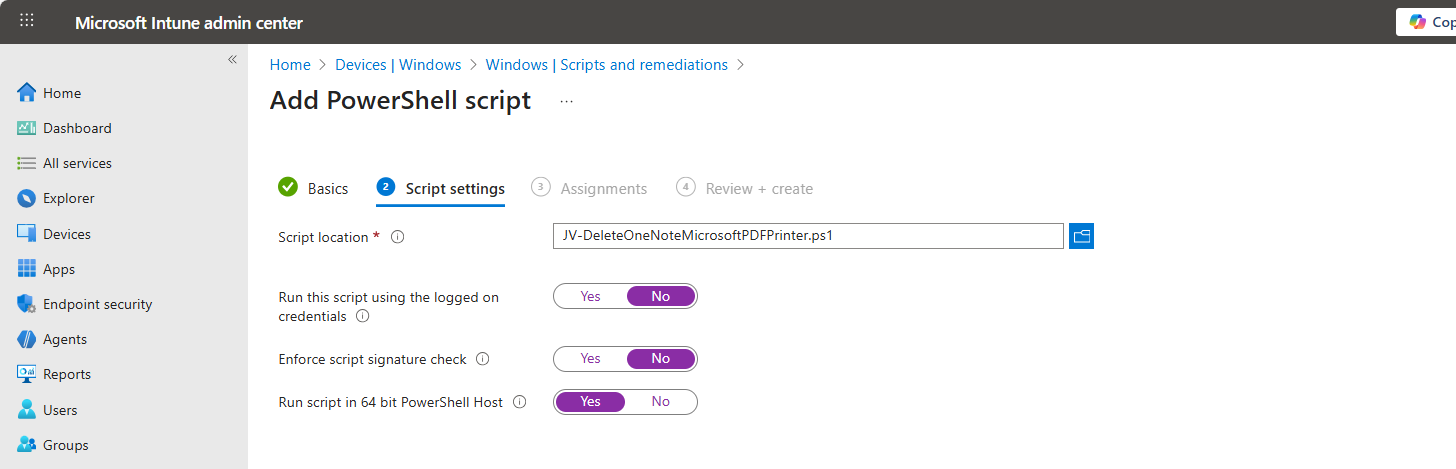

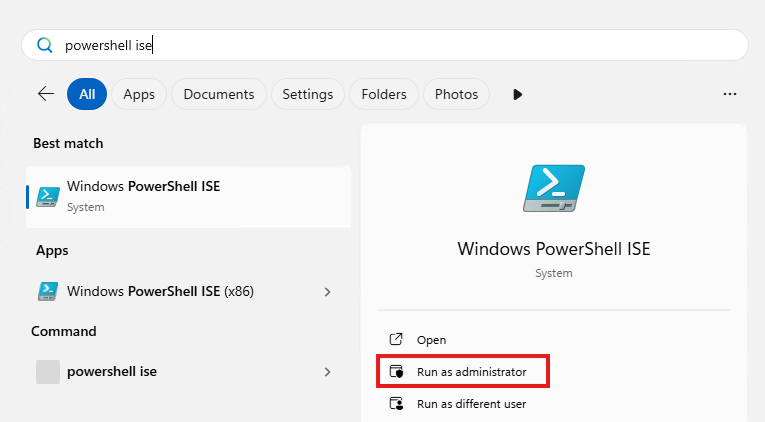

Option 1: Deploy script with Microsoft Intune

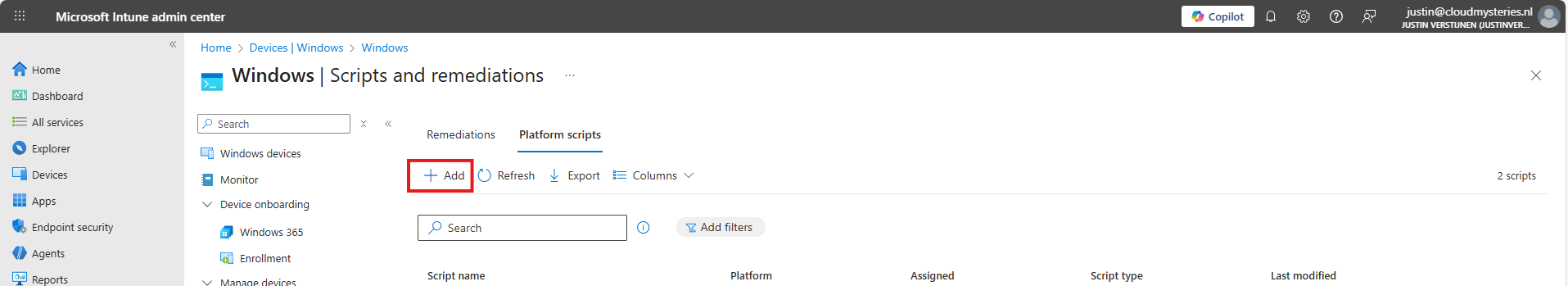

To deploy this script, let’s go to the Microsoft Intune Admin Center: https://intune.microsoft.com

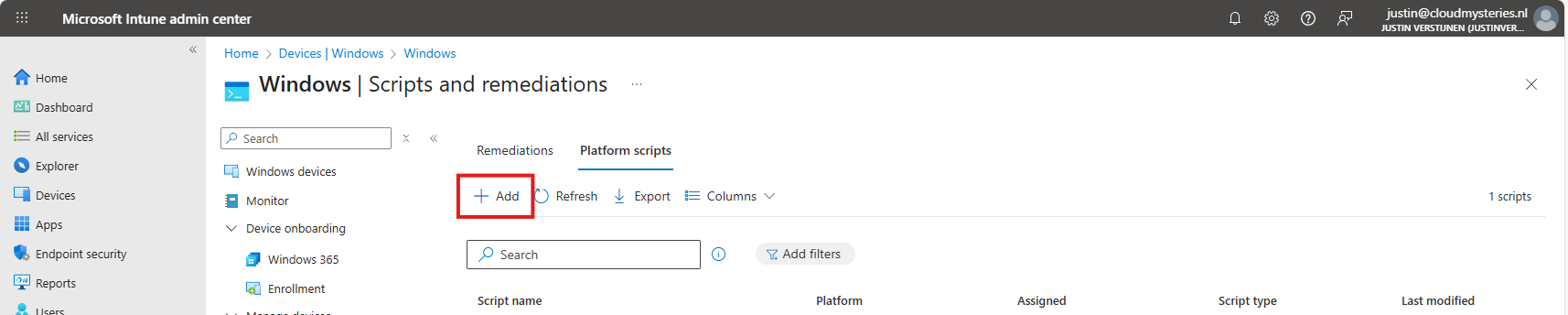

Navigate to Devices -> Windows -> Scripts and remediations and open the “Platform scripts” tab. Click on “+ Add” here to add a new script to your configuration.

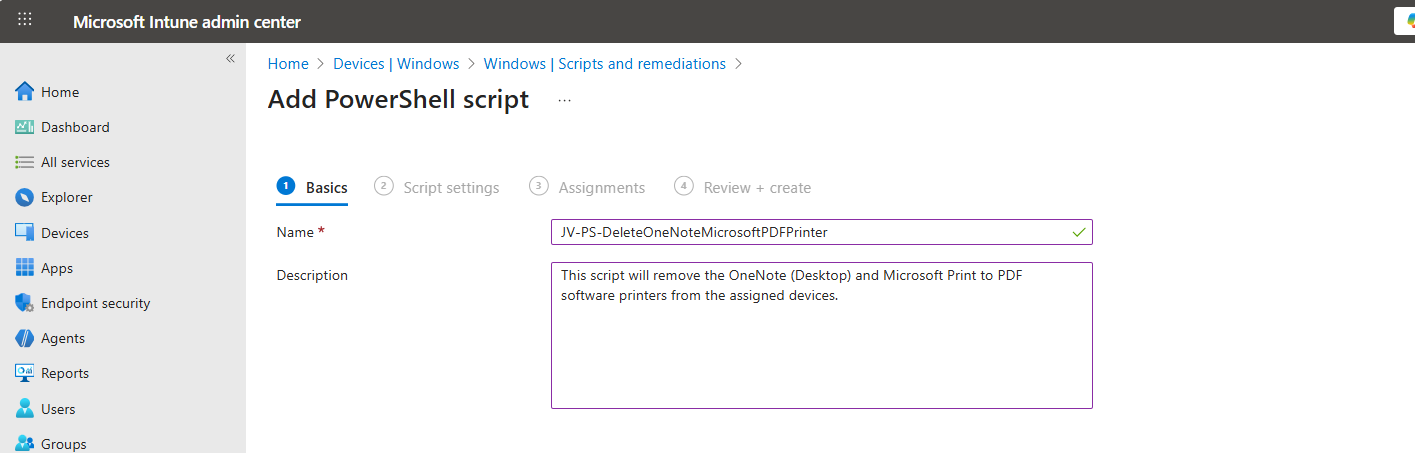

Give your script a name and a clear description of what it does.

Then click “Next” to go to the “Script settings” tab.

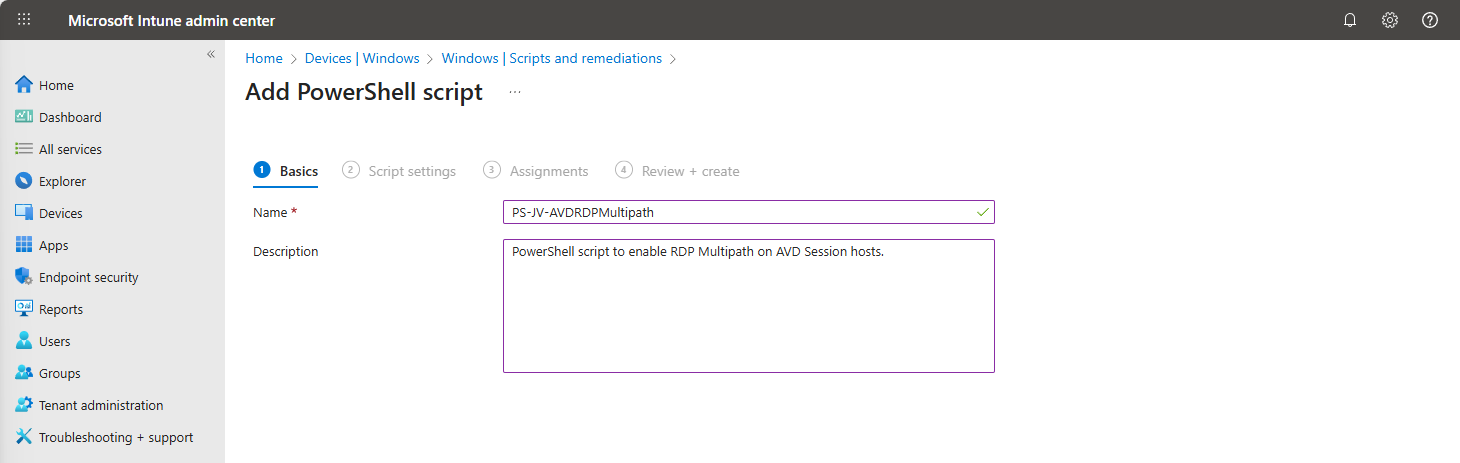

Import the script you just downloaded from my GitHub page. Then set the script options as follows:

- Run this script using the logged on credentials: No

- Enforce script signature check: No

- Run script in 64 bit PowerShell Host: Yes

Then click “Next” and assign it to your devices. In my case, I selected “All devices”.

Click “Next” and then “Create” to deploy the script that will delete the printers upon execution.

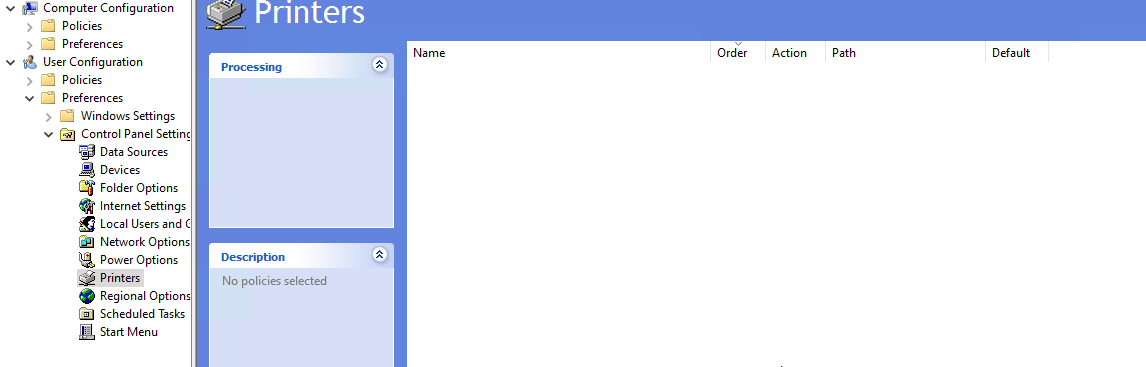

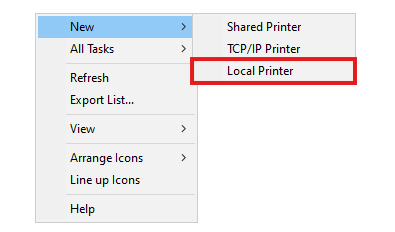

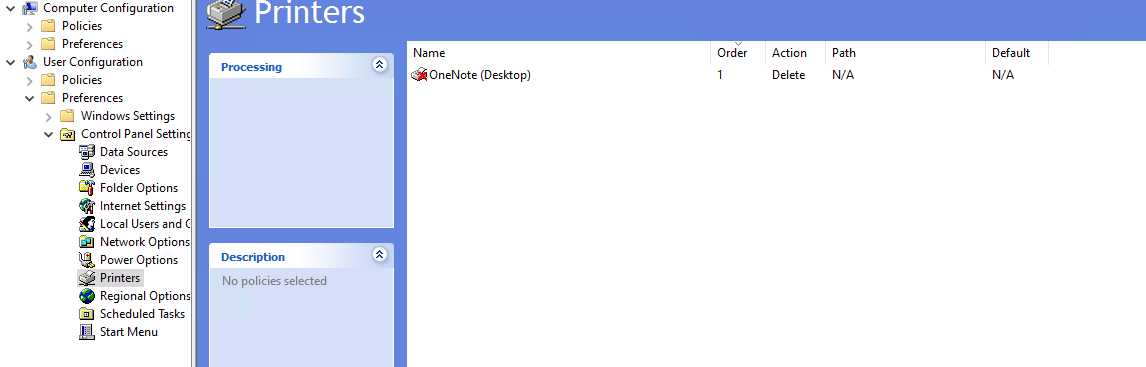

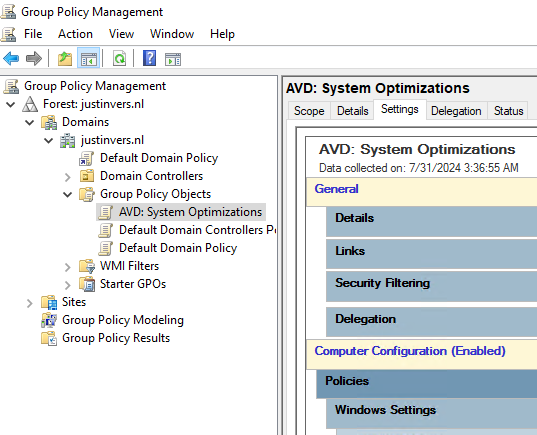

Option 2: Deploy script with Group Policy

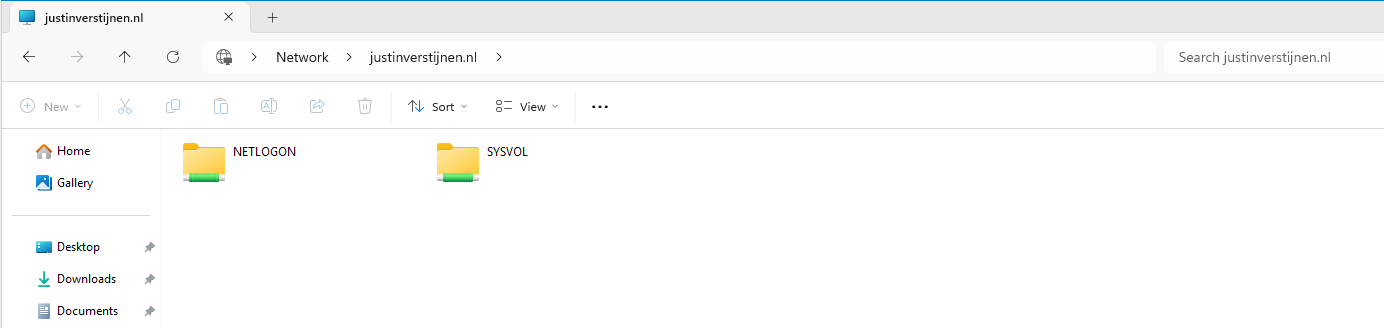

If your environment is based on Active Directory, Group Policy might be a good option to deploy this script. We will place the script in the SYSVOL folder, which is readable by all domain clients and users, and then configure it as a computer startup script that runs when the workstation starts.

Log in to your domain-joined management server, open File Explorer and go to your domain's SYSVOL folder by typing \\domain.com in the File Explorer address bar:

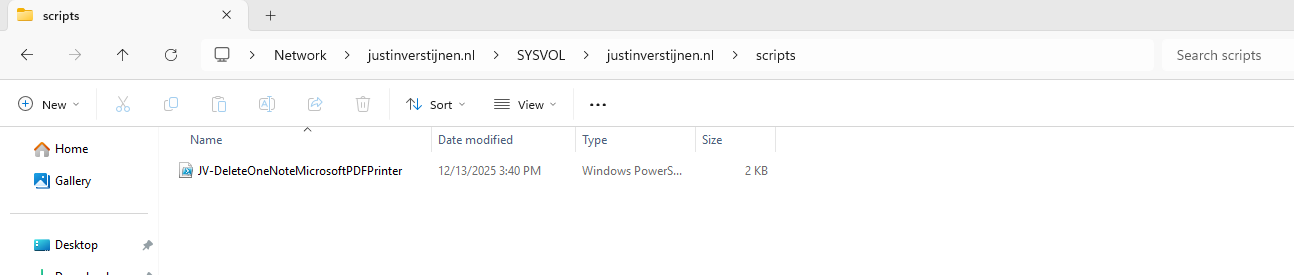

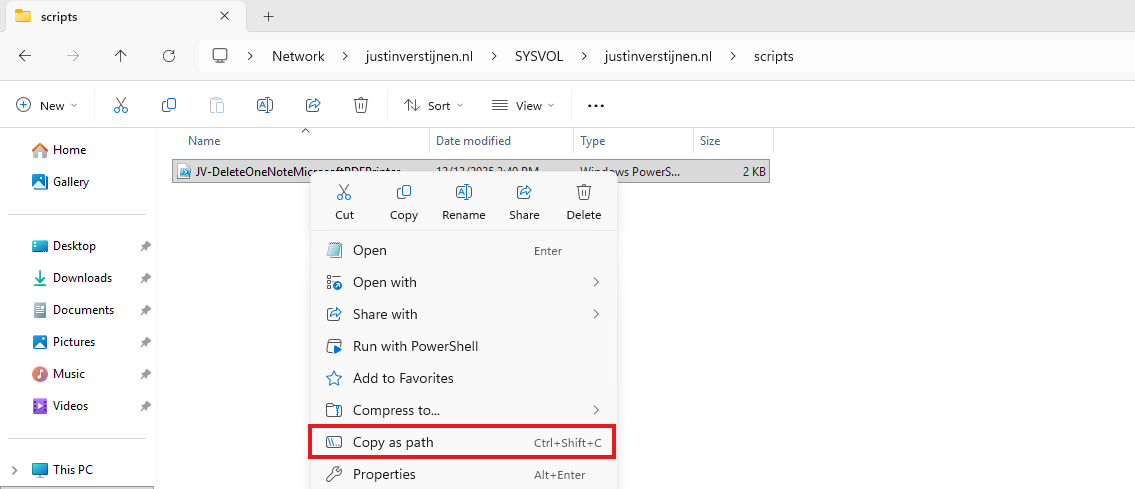

Open the SYSVOL folder -> domain -> scripts. Paste the script in this folder:

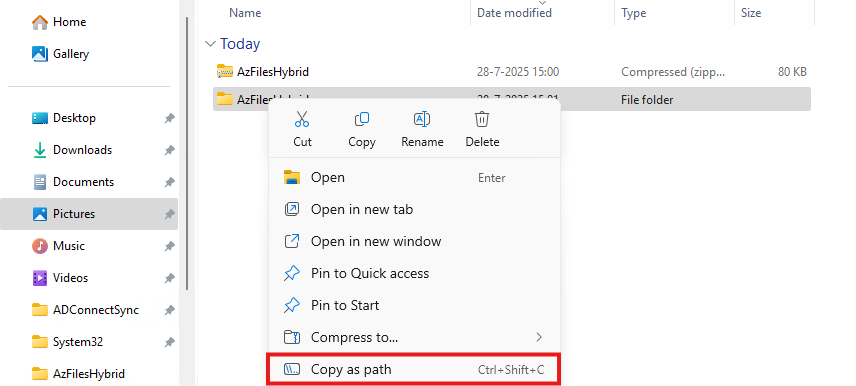

Then right-click the file and select “Copy as path” to copy the full script path to your clipboard.

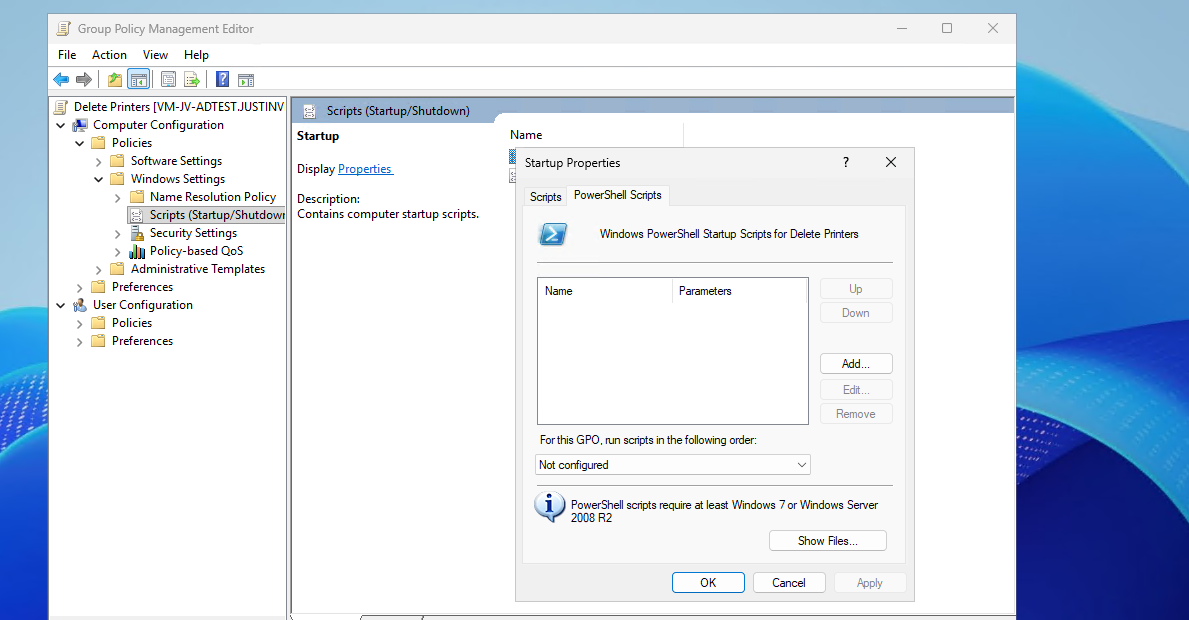

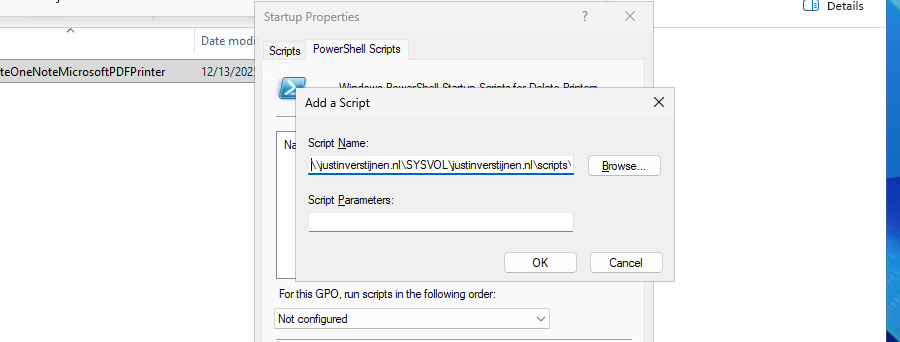

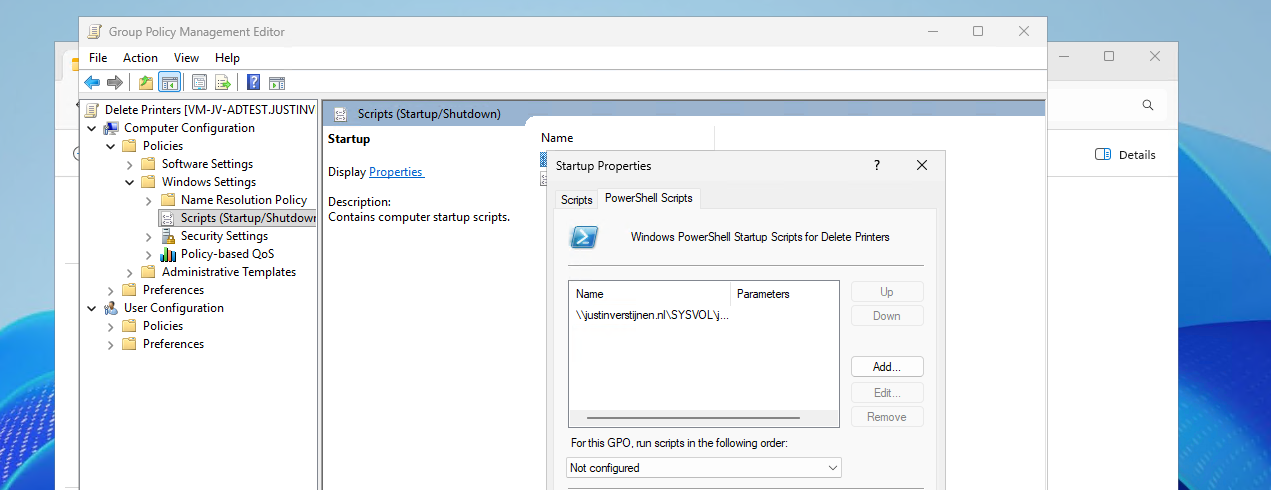

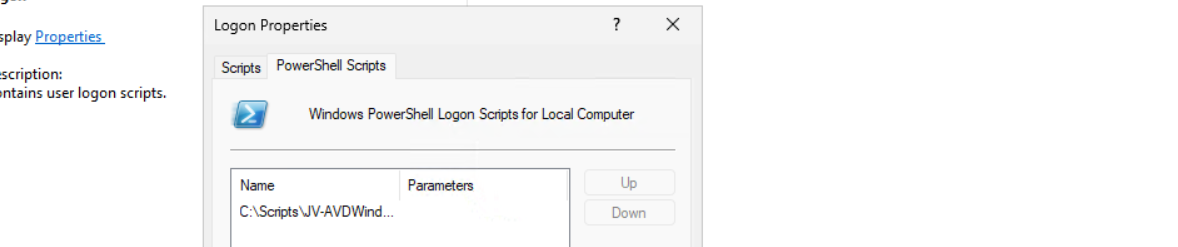

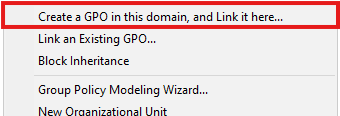

Open Group Policy Management on the server to create a new start-up script. Use an existing GPO or create a new one and navigate to:

Computer Configuration -> Policies -> Windows Settings -> Scripts -> Startup

Create a new script here and select the “PowerShell scripts” tab.

Add a new script here. Paste the copied path and remove the quotes.

Then click “OK” to save the configuration. This will bring us to this window:

We have now created a startup script that runs at every startup of the machine. If you place an updated script with the same name in the same folder, the new version will be executed.

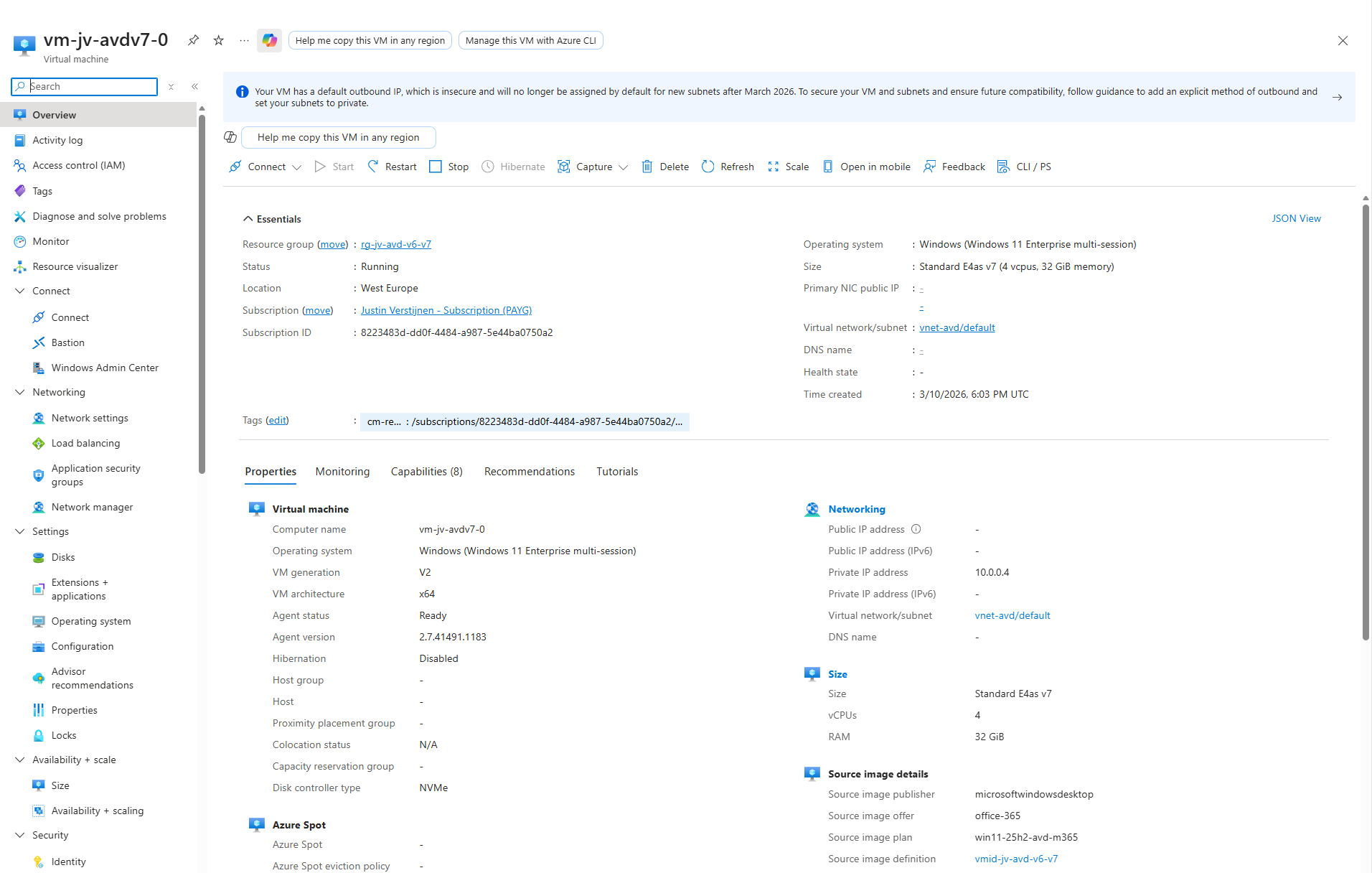

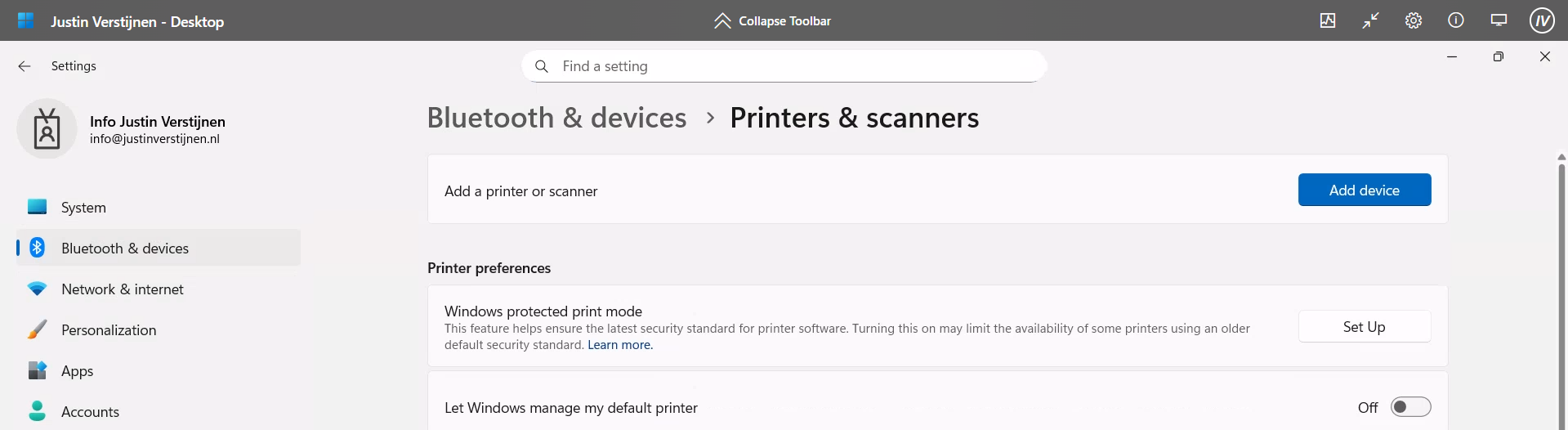

The results on the client machine

After the script has run successfully (at the next startup with Group Policy, or once Intune has run the platform script), we can check the result in the “Printers & scanners” section:

No software printers left bothering us and our end users anymore :)

Summary

Removing the default software printers may seem strange, but it can improve the printing experience for your end users. No preinstalled software printer can take over as default printer or clutter the printer list anymore. Almost every application has an option to save as PDF these days, so the PDF printer is largely redundant.

Thank you for reading this guide and I hope it was helpful.

Sources

These sources helped me with writing and researching this post:

- None


Azure Virtual Desktop FSLogix and Native Kerberos authentication

On this page I will describe how I built an environment with a pooled Azure Virtual Desktop hostpool with FSLogix and using the Entra Kerberos option for authentication. This new authentication option eliminates the unsafe need of storing the storage key in hosts’ registry like we did in my earlier AVD full Entra blog.

In this guide I will dive into how I configured an simple environment where I placed every configuration action in separate steps to keep it simple and clear to follow and also will give some describing information about some concepts and settings.

I also added some optional steps that go beyond the base configuration of this guide, for a better user experience and more security.

The solution described

The day has finally come: we can now build an Azure Virtual Desktop (AVD) hostpool in pooled configuration without hosting an Active Directory, and without an insecure storage account that requires injecting the storage account access key into the machines' registry. This newer setup improves performance and security on both points.

In this post we will build a simple Azure Virtual Desktop (AVD) setup with one hostpool, one session host and one storage account. We will use Microsoft Entra for authentication and Microsoft Intune for our session host configuration, maintenance and security.

The setup looks like this, where I added some extra session hosts to better illustrate the profile solution.

FSLogix is software that mounts a virtual disk from a network location and attaches it to Windows at logon. This ensures users can work on any machine without losing their settings, applications and data.

In the past, FSLogix always needed Active Directory or Microsoft Entra Domain Services because of SMB and Kerberos authentication. We now finally have a solution that makes this a thing of the past, so we can go fully cloud-only.

For this to work, a service principal is created for your storage account, building a bridge between identity and the storage account for Kerberos authentication over the SMB protocol.

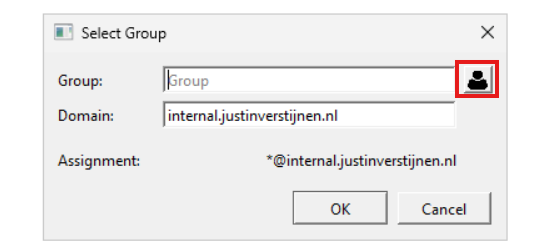

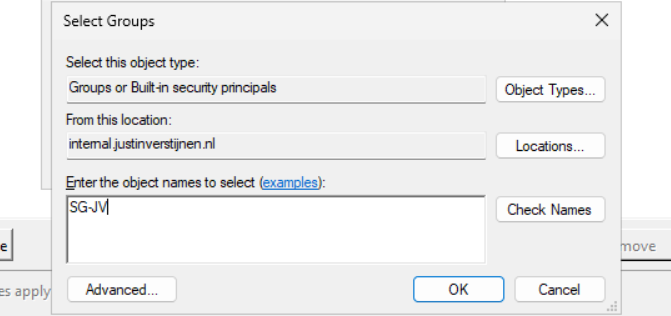

1: Create Security Groups and configure roles

Before configuring the service, we first create a security group to give users permissions to the FSLogix storage. Every user who will use FSLogix needs at least Read/write (Contributor) permissions.

Go to the Entra Admin center (https://entra.microsoft.com) and go to “Groups”.

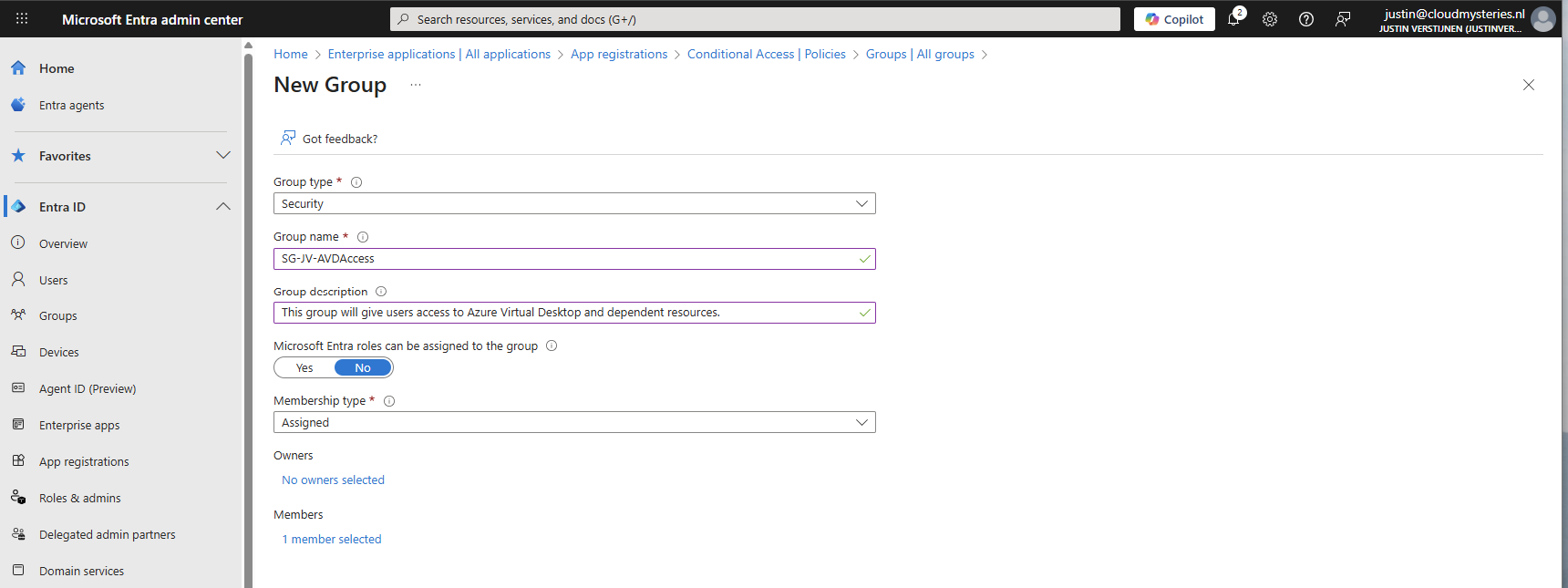

Create a user group

Create a new security group here:

You can use an assigned group if you want to manage access manually, or a dynamic group to automate this process. Then create the group, which in my case will be used for storage permissions and hostpool access.

Create a device group

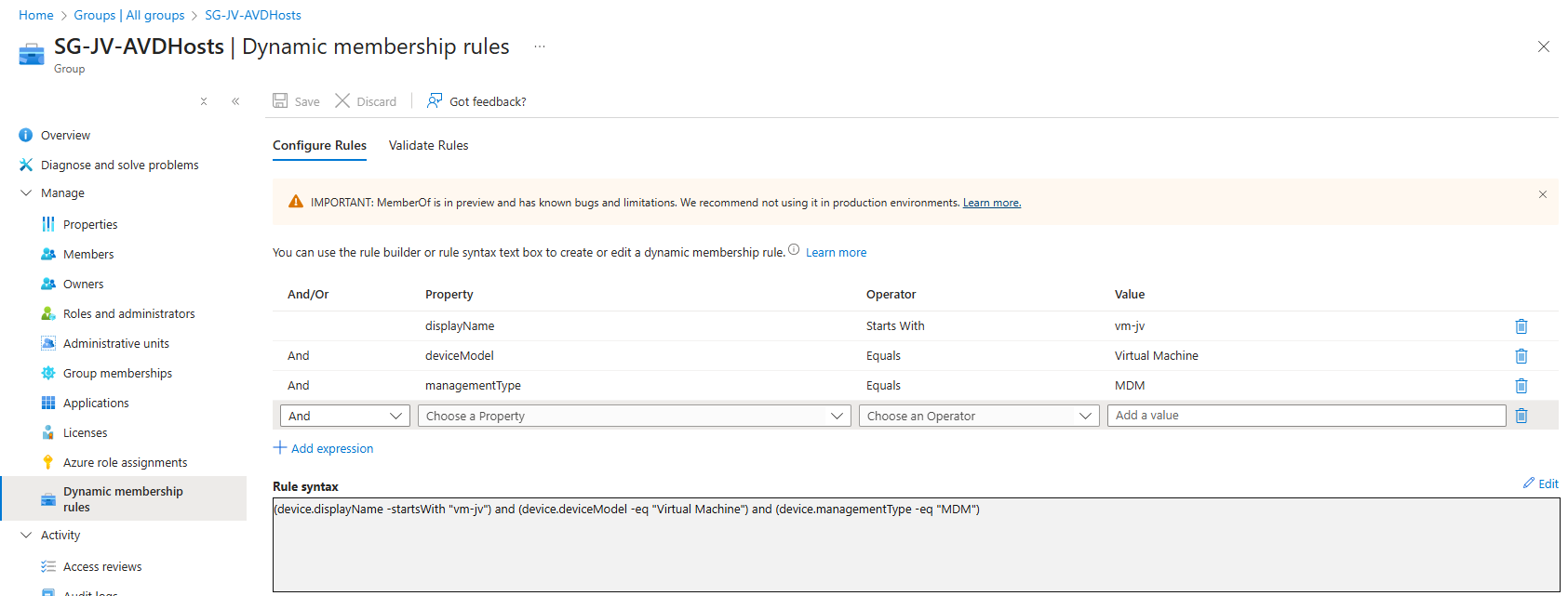

If you have a larger Intune environment, it is recommended to create an Azure Virtual Desktop device/session hosts group. This way, you can apply computer settings to the hosts group in Intune.

You can create a group with your desired name, and this can be an assigned or a dynamic group. An example of a dynamic group rule:

(device.displayName -startsWith "vm-jv") and (device.deviceModel -eq "Virtual Machine") and (device.managementType -eq "MDM")

For AVD hosts, I really like dynamic groups: as you deploy more virtual machines, policies, scripts and such are all applied automatically.
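To sanity-check which devices such a rule would pick up before creating the group, the three conditions can be mirrored in a few lines of Python. This is a minimal sketch: the `Device` class and the sample device names are hypothetical, and it only reproduces the rule above (Entra string comparisons are case-insensitive, so the sketch lowercases both sides).

```python
from dataclasses import dataclass


@dataclass
class Device:
    """Hypothetical subset of the Entra device properties used by the rule."""
    display_name: str
    device_model: str
    management_type: str


def matches_avd_rule(device: Device, prefix: str = "vm-jv") -> bool:
    """Mirror of: (device.displayName -startsWith "vm-jv")
    and (device.deviceModel -eq "Virtual Machine")
    and (device.managementType -eq "MDM")."""
    # Lowercase both sides to mimic case-insensitive string comparison
    return (
        device.display_name.lower().startswith(prefix.lower())
        and device.device_model.lower() == "virtual machine"
        and device.management_type.lower() == "mdm"
    )


# Hypothetical devices to test the rule against
devices = [
    Device("vm-jv-0", "Virtual Machine", "MDM"),
    Device("vm-jv-1", "Virtual Machine", "MDM"),
    Device("laptop-01", "Surface Laptop", "MDM"),
    Device("vm-jv-2", "Virtual Machine", "EAS"),
]

members = [d.display_name for d in devices if matches_avd_rule(d)]
print(members)  # ['vm-jv-0', 'vm-jv-1']
```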

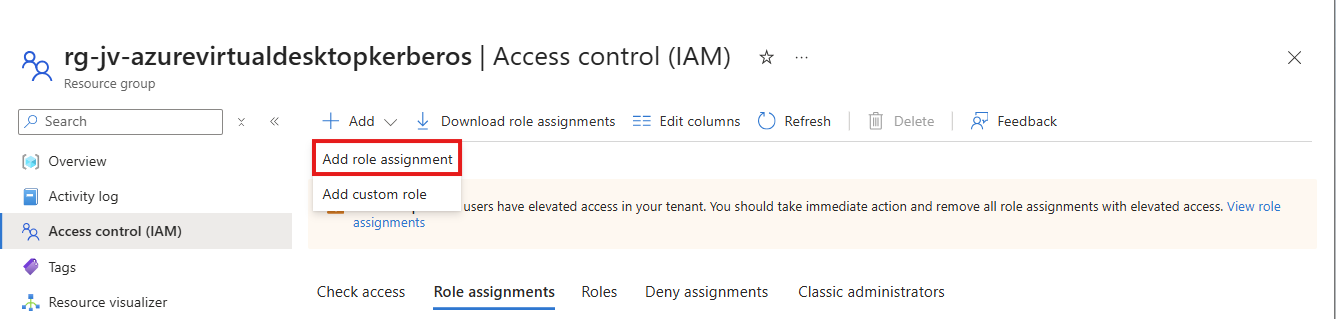

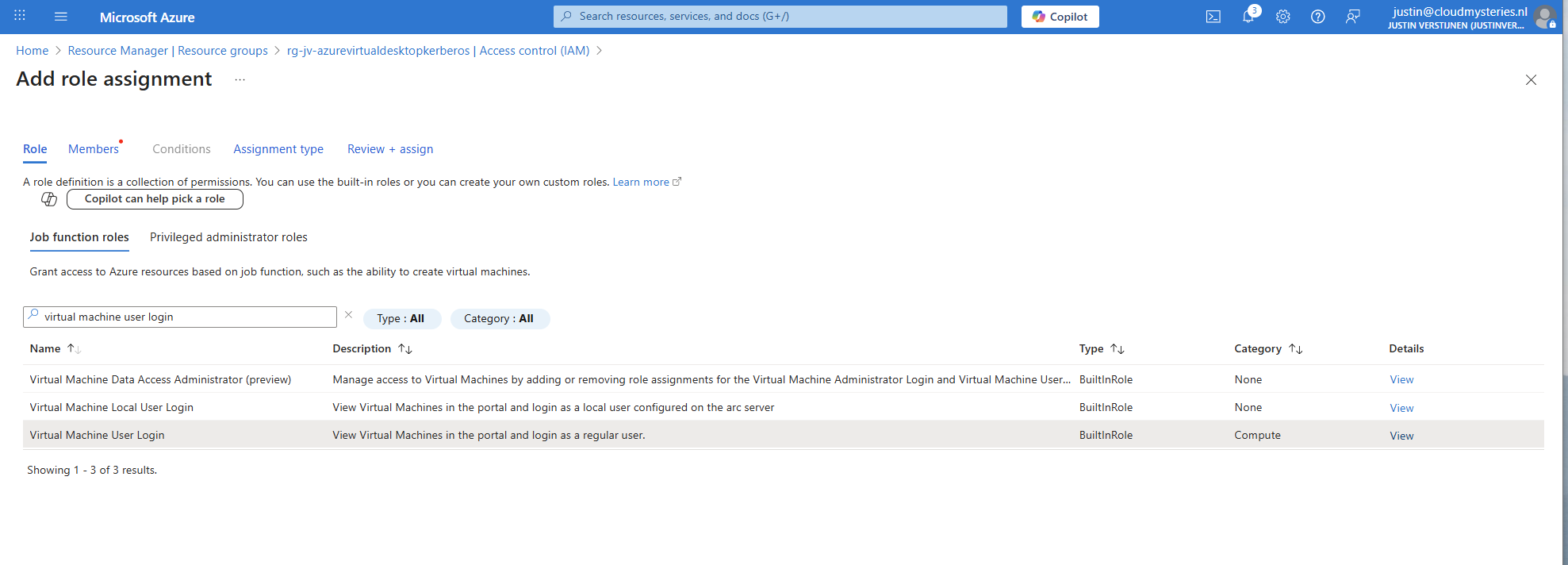

Assign Virtual Machine login roles to users

After the group is created, we need to assign one of these roles to the group:

- Virtual Machine User Login on all session hosts -> Resource group
  - For default, non-administrative users
- Virtual Machine Administrator Login on all session hosts -> Resource group
  - For administrative users

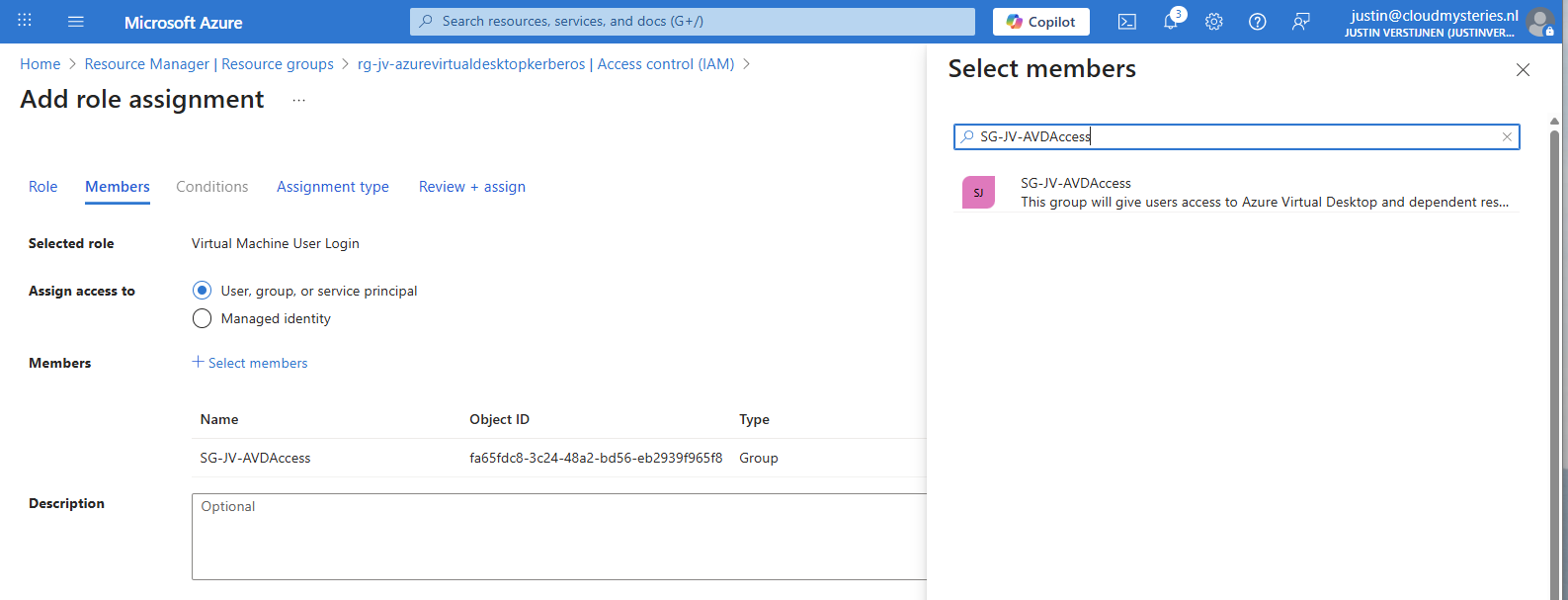

We will use the role “Virtual Machine User Login” in this case for normal end users. Go to the resource group where your AVD hosts are and go to “Access control (IAM)”.

Click on “+ Add” and then “Add role assignment”.

Select the role “Virtual Machine User Login” and click on “Next”. On the Members page, click on “+ Select members” and select the group with users you just created.

The role assignment is required because users will be logging in to a virtual machine. Azure requires users to have this RBAC role for security.

You can assign this at the resource, resource group or subscription level, but usually we place similar hosts in the same resource group. In that situation, my advice is to assign the permissions at the resource group level.

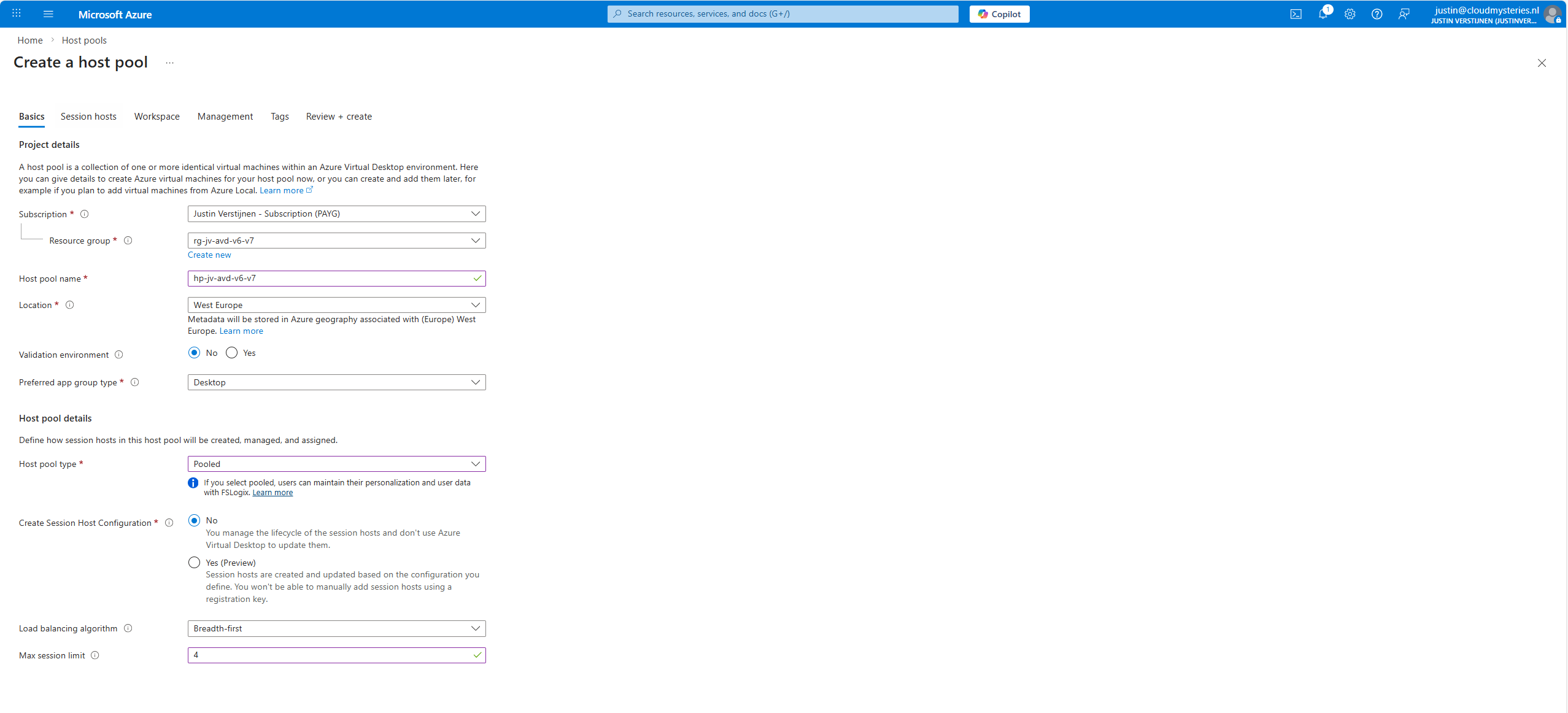

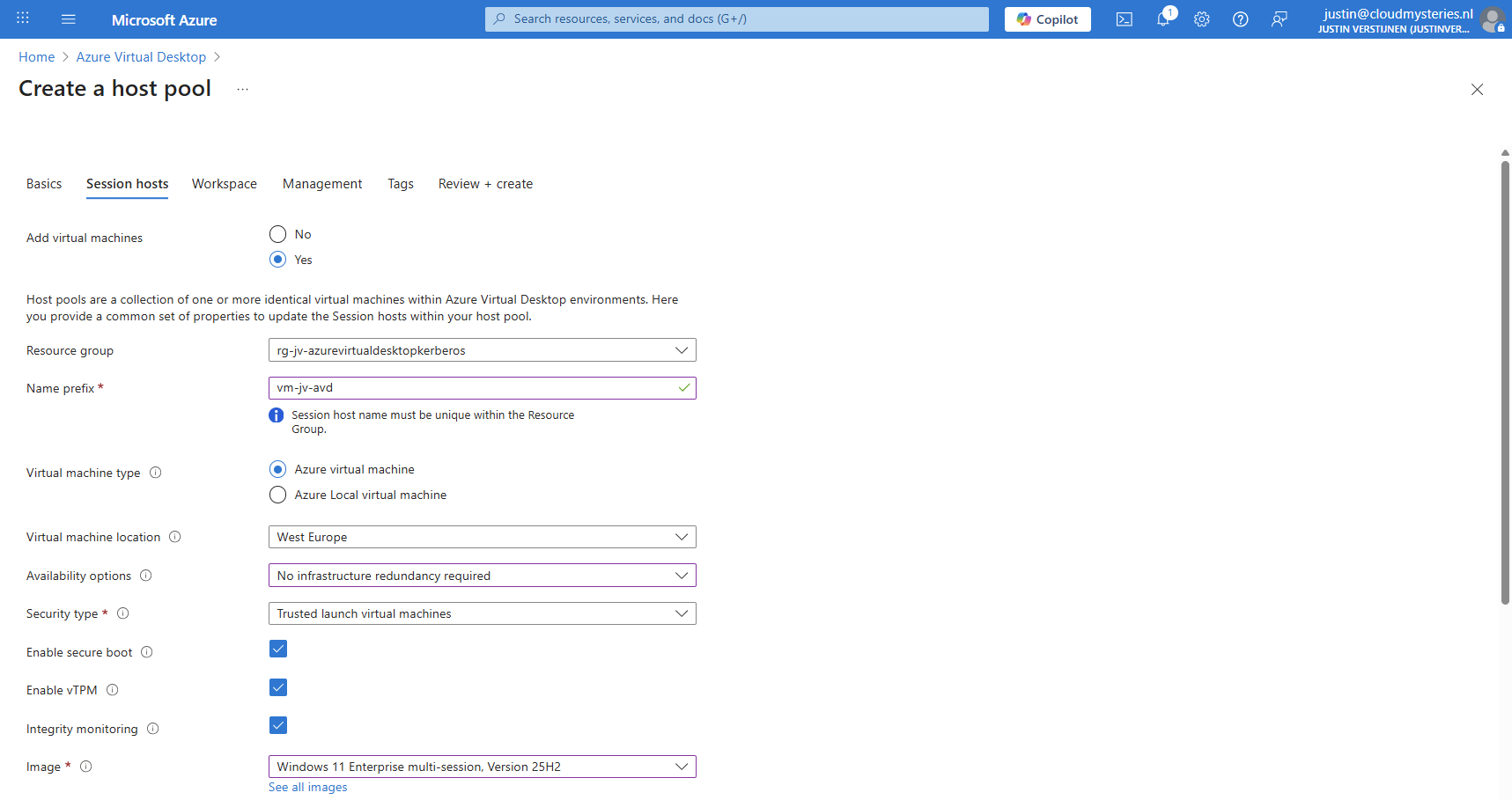

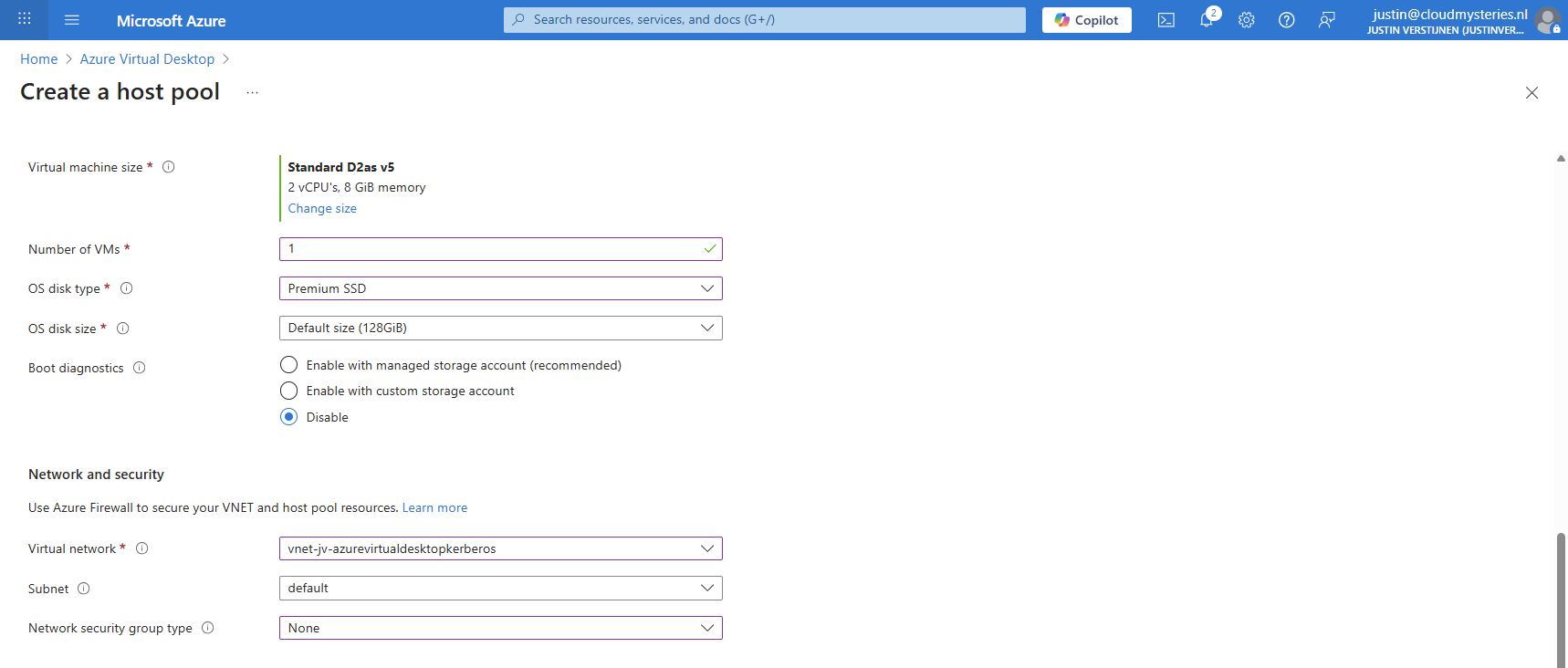

2: Create Azure Virtual Desktop hostpool

Now we have to create a hostpool for Azure Virtual Desktop. This is a group of session hosts which will deliver a desktop to the end user.

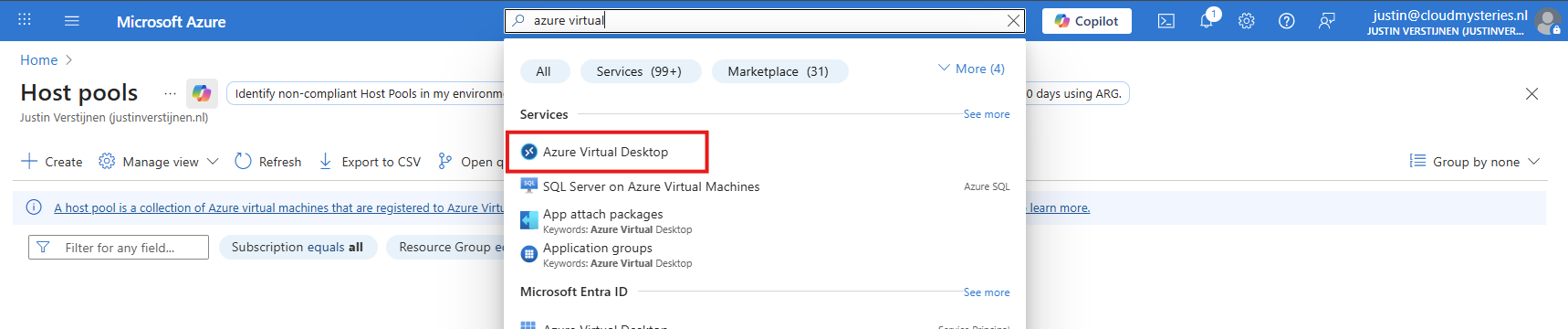

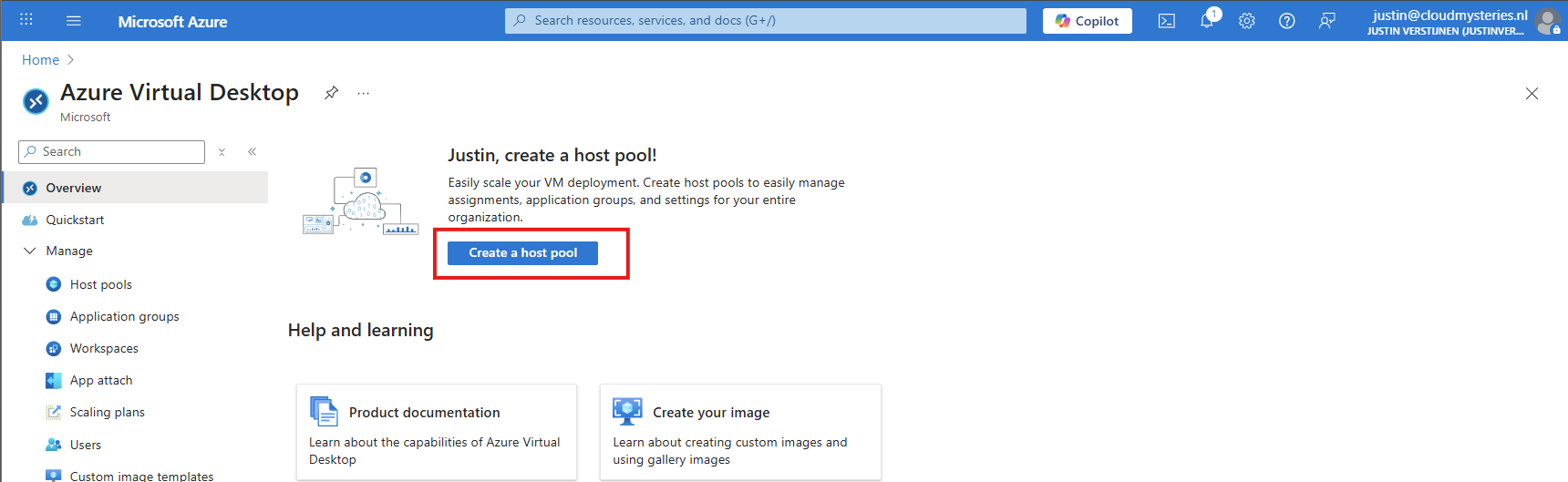

In Microsoft Azure, search for “Azure Virtual Desktop”.

Then click on “Create a hostpool”.

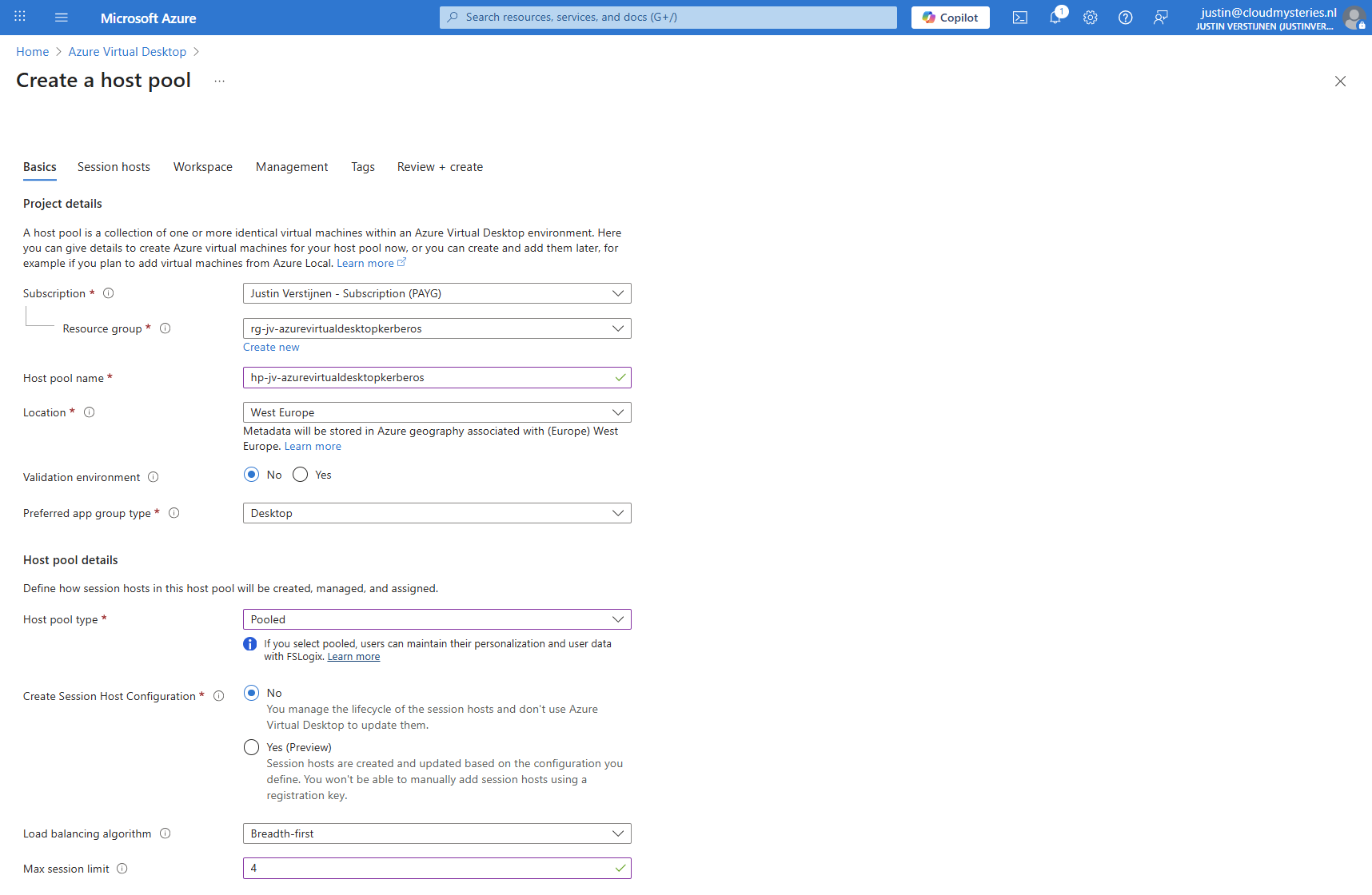

Fill in the details of your hostpool like a name, the region you want to host it and the hostpool type. Assuming you are here for FSLogix, select the “Pooled” type.

Then click “Next” to advance to the next configuration page. Here we select whether we want to deploy a virtual machine. In my case, I will.

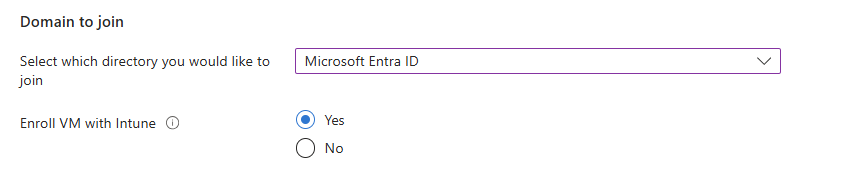

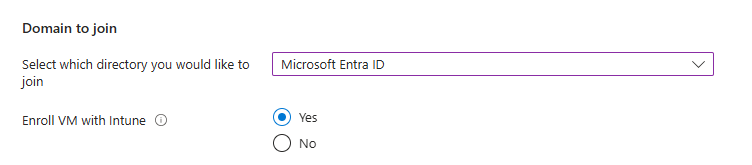

At the end, select “Microsoft Entra ID” as the directory to join.

Create your local administrator account for initial or emergency access and then finish creating the hostpool.

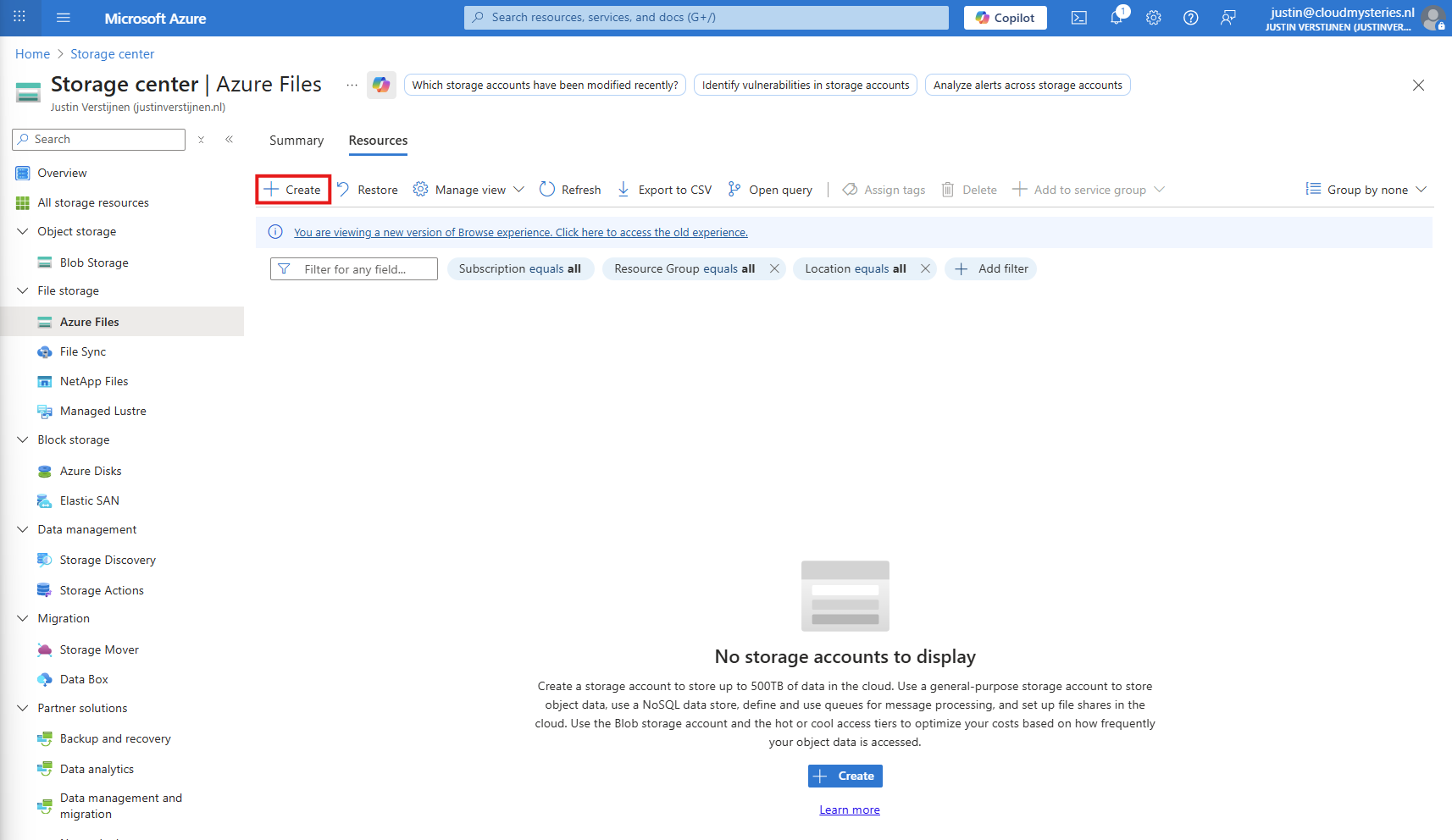

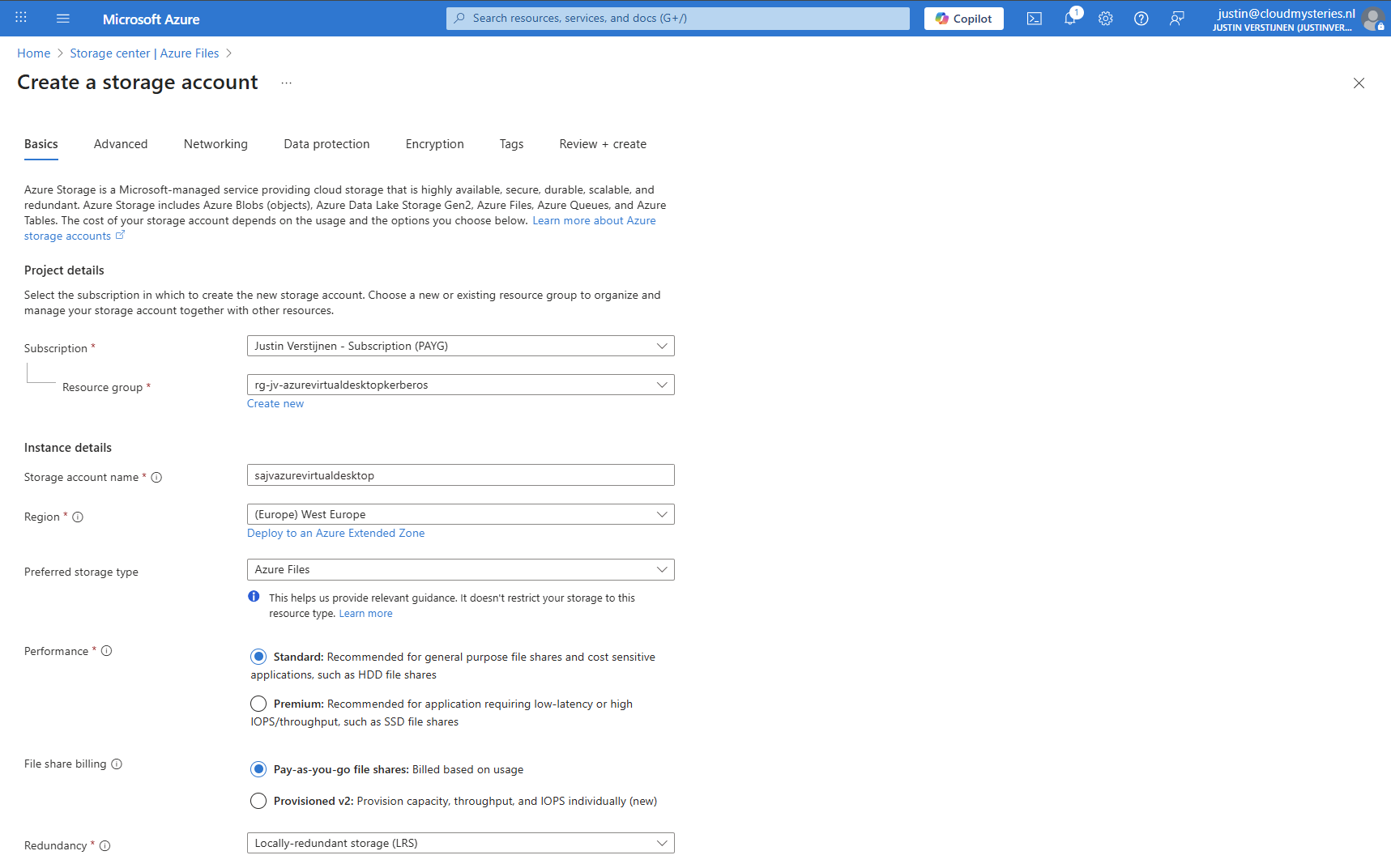

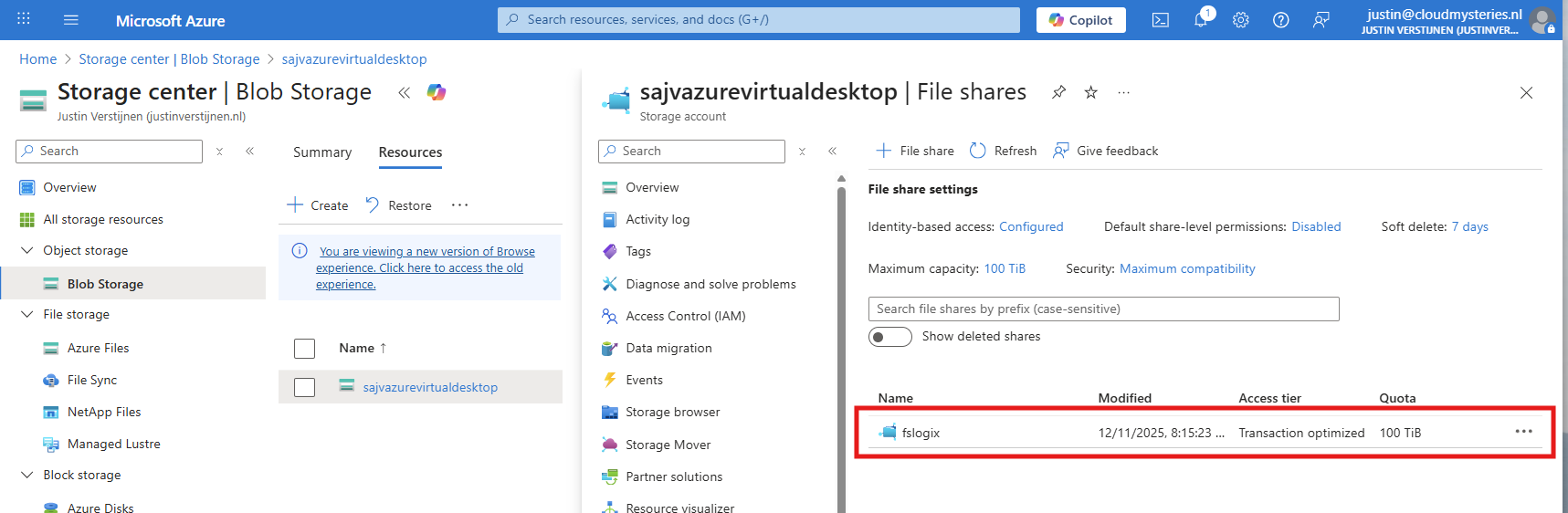

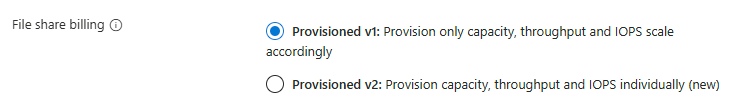

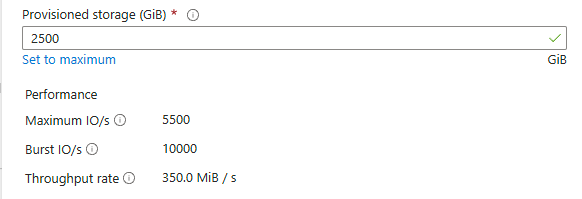

3: Create Storage Account for FSLogix

With the hostpool ready and the machine deploying, we have to create a storage account and file share for storing the FSLogix profiles. In the Azure Portal, go to Azure Files and create a new storage account:

Then fill in the details of your storage account:

I chose the Azure Files type, as we don't need the other storage services. We can skip to the end and create the storage account.

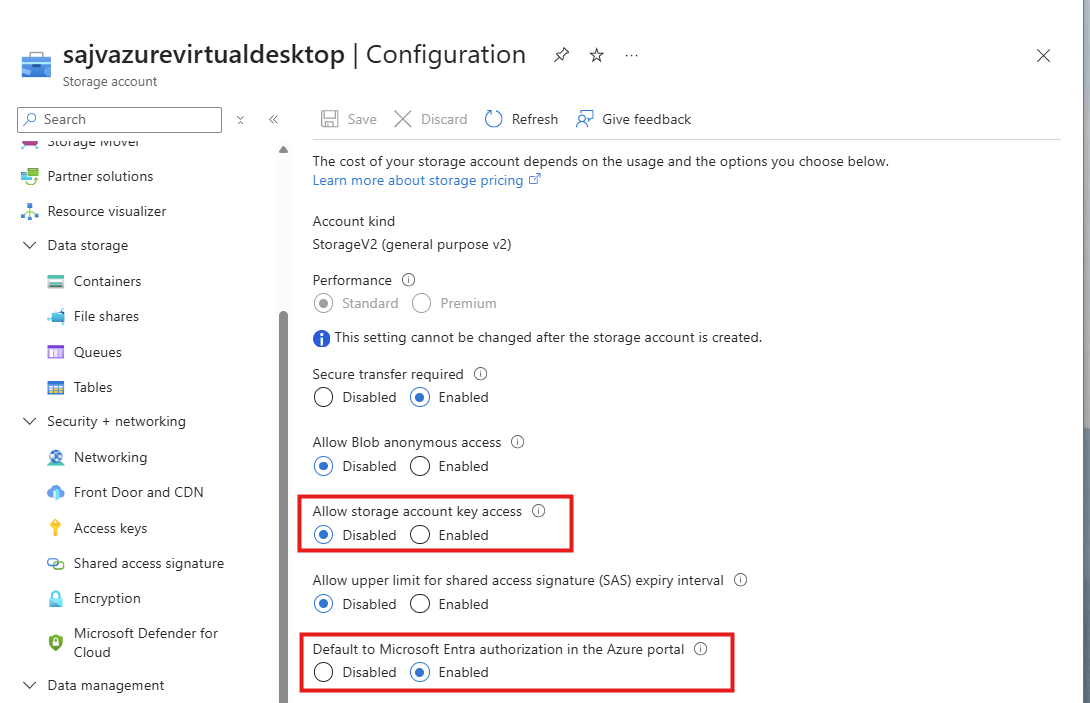

Storage account security

After creating the storage account, we need to change some settings. Go to the storage account and then to “Configuration”.

Set these two options as follows:

- Allow storage account key access: Disabled

- Default to Microsoft Entra authorization in the Azure Portal: Enabled
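If you prefer to verify these two settings from the command line, they show up as `allowSharedKeyAccess` and `defaultToOAuthAuthentication` in the storage account's properties, for example in the JSON returned by `az storage account show`. The sketch below assumes that JSON shape and uses a trimmed, hypothetical sample instead of a live call.

```python
import json

# Trimmed, hypothetical sample of `az storage account show` output
sample = json.loads("""
{
  "name": "sajvazurevirtualdesktop",
  "allowSharedKeyAccess": false,
  "defaultToOAuthAuthentication": true
}
""")


def key_access_findings(account: dict) -> list:
    """Return deviations from the two settings configured in this step."""
    findings = []
    # Allow storage account key access: Disabled.
    # A missing/null value means shared key access is still allowed.
    if account.get("allowSharedKeyAccess") is not False:
        findings.append("Storage account key access is still allowed")
    # Default to Microsoft Entra authorization in the Azure portal: Enabled
    if account.get("defaultToOAuthAuthentication") is not True:
        findings.append("Portal does not default to Microsoft Entra authorization")
    return findings


print(key_access_findings(sample))  # []
```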

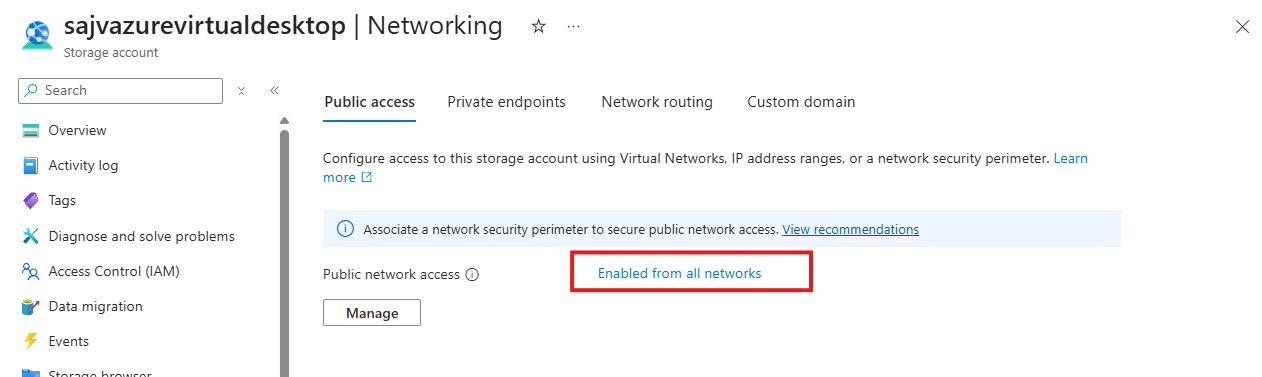

Storage account firewall settings

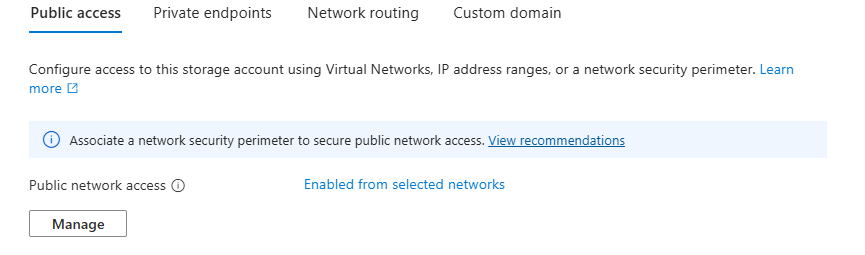

In the storage account, navigate to the “Networking” blade. We will limit the networks and IP addresses that can access the storage account, which by default is the whole internet.

Click on “Enabled from all networks”.

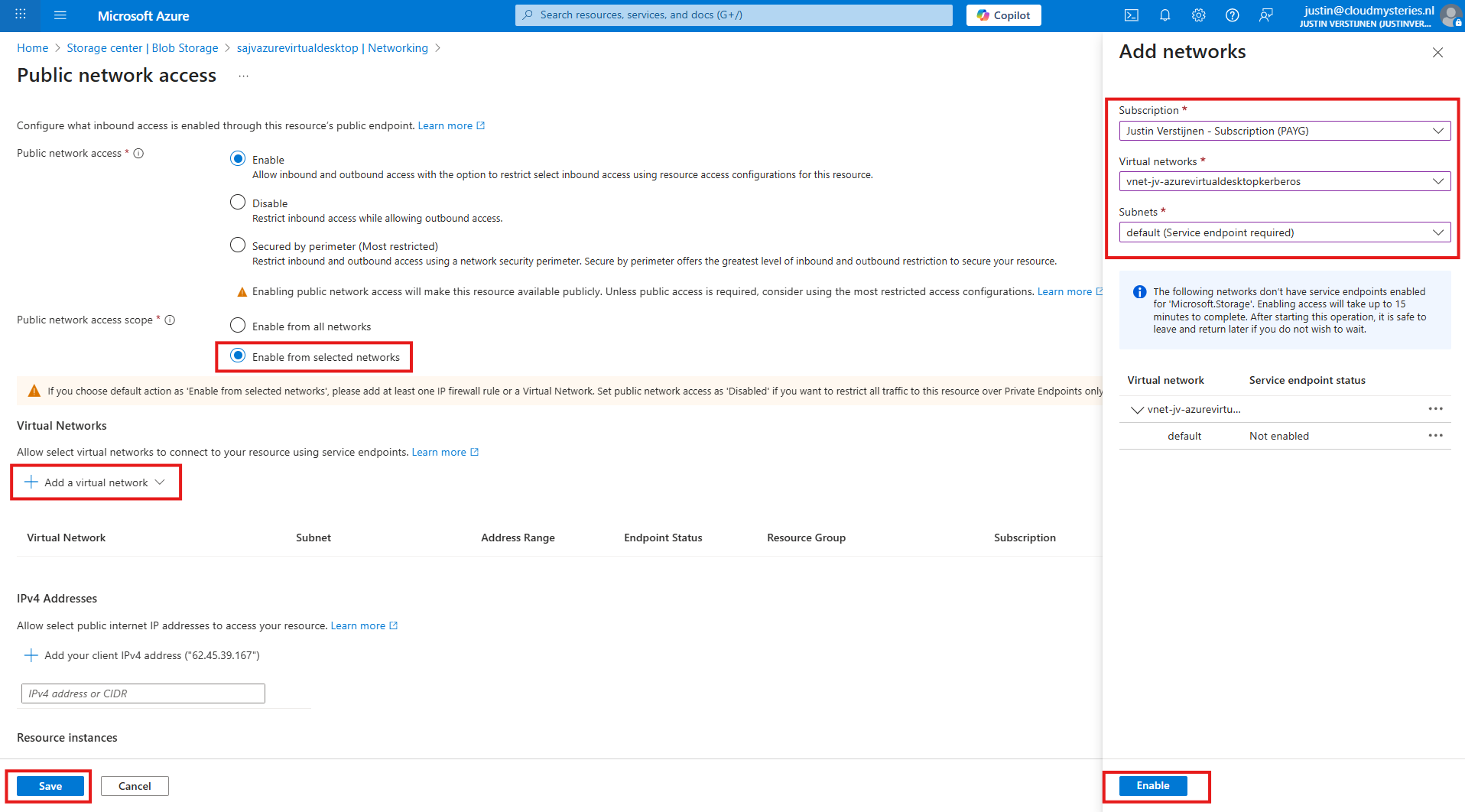

Here, select the “Enabled from selected networks” option and select the virtual network containing your Azure Virtual Desktop hosts.

Click “Enable” to let Azure do some work under the hood (this creates a service endpoint so the AVD network can reach the storage account).

Then click “Save” to limit access to your storage account to your AVD hosts network only.

After saving, the option shows “Enabled from selected networks”.
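Behind the portal labels, this firewall setting is stored in the storage account's `networkRuleSet`: “Enabled from all networks” corresponds to a `defaultAction` of `Allow`, while restricting access sets `Deny` plus a virtual network rule for the AVD subnet. A minimal sketch, assuming the JSON shape returned by `az storage account show`; the subscription, resource group and subnet names in the ID are placeholders.

```python
# Placeholder subnet ID, not a real resource
AVD_SUBNET = (
    "/subscriptions/00000000-0000-0000-0000-000000000000/resourceGroups/"
    "rg-avd/providers/Microsoft.Network/virtualNetworks/vnet-avd/subnets/snet-hosts"
)

# Hypothetical, trimmed `networkRuleSet` from `az storage account show`
network_rule_set = {
    "defaultAction": "Deny",
    "virtualNetworkRules": [
        {"virtualNetworkResourceId": AVD_SUBNET, "action": "Allow"}
    ],
    "ipRules": [],
}


def only_avd_subnet_allowed(rules: dict, subnet_id: str) -> bool:
    """True when public access is denied by default and the AVD subnet is allowed."""
    if rules.get("defaultAction") != "Deny":
        # "Enabled from all networks" shows up as defaultAction "Allow"
        return False
    allowed = {
        r.get("virtualNetworkResourceId", "").lower()
        for r in rules.get("virtualNetworkRules", [])
        if r.get("action", "Allow") == "Allow"
    }
    # Azure resource IDs are compared case-insensitively
    return subnet_id.lower() in allowed


print(only_avd_subnet_allowed(network_rule_set, AVD_SUBNET))  # True
```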

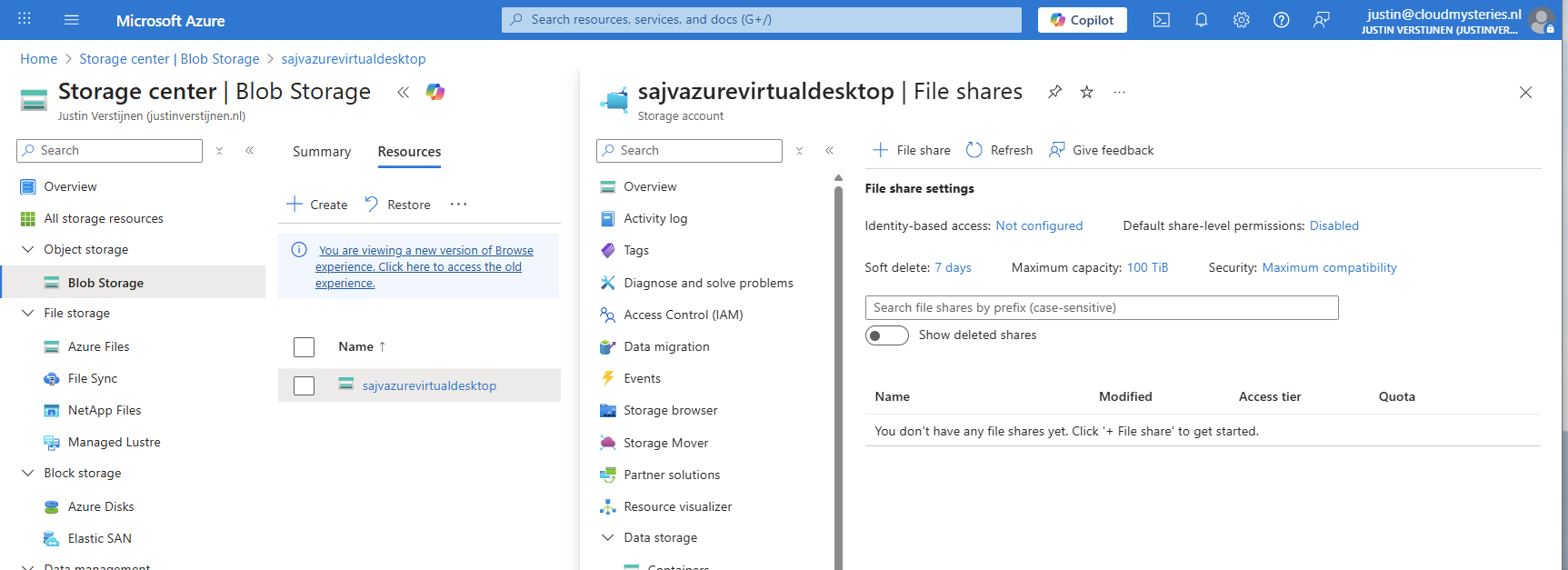

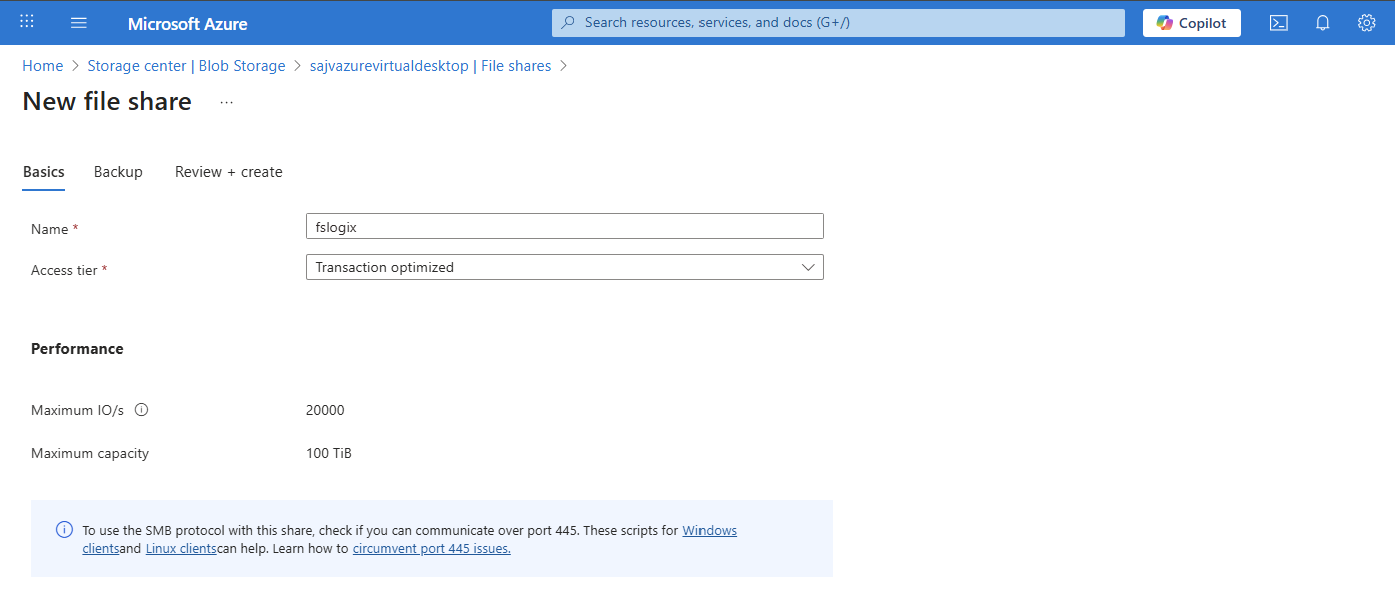

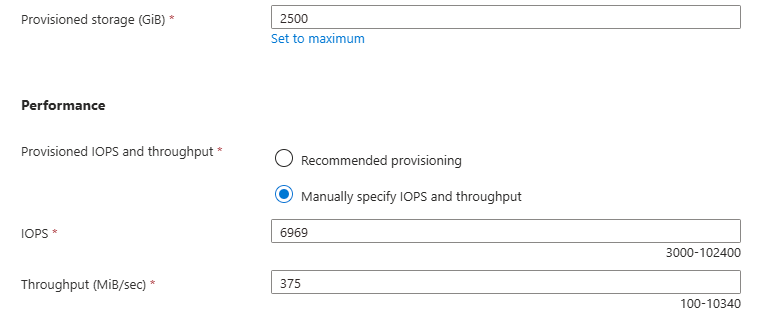

4: Create the File Share and Kerberos

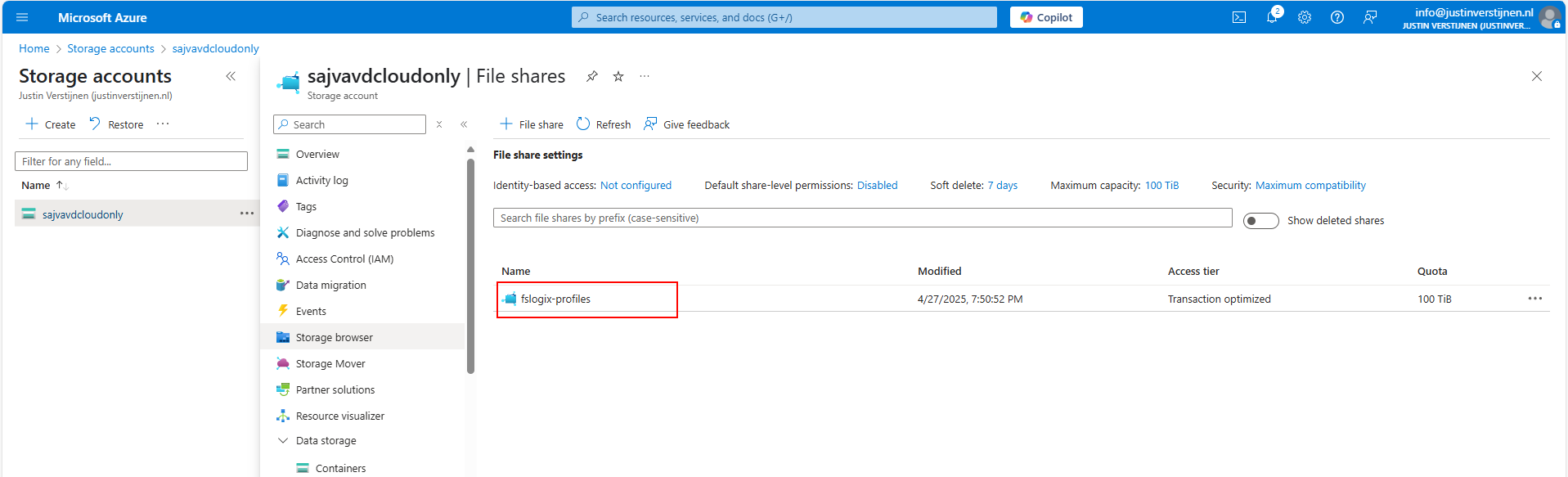

After creating the storage account, we have to create a file share to store the FSLogix profiles.

Navigate to the storage account and click on “+ File share”.

Give the file share a name and decide whether to enable backup. For production environments, backup is highly recommended.

Finish the wizard to create the file share.

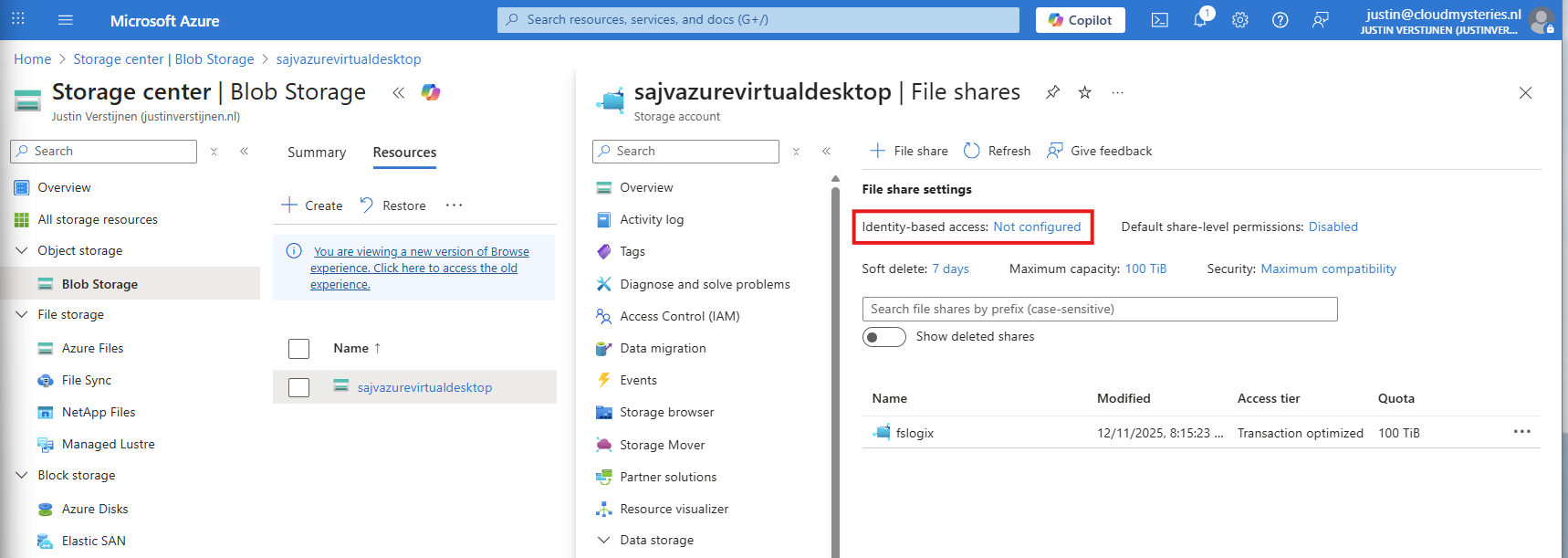

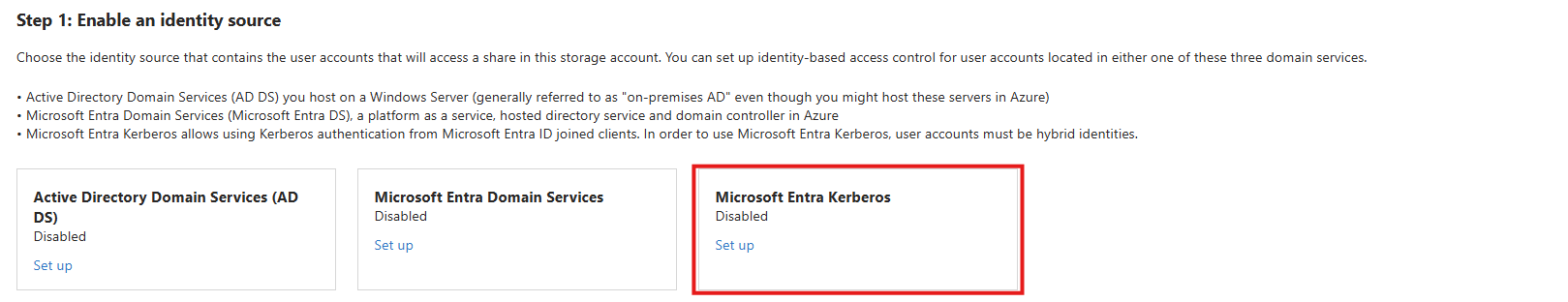

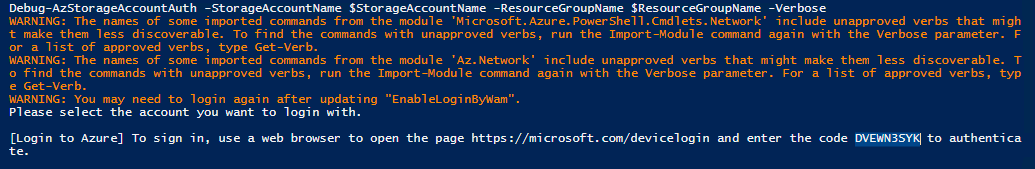

Now we have to configure the Microsoft Entra Authentication to authenticate against the file share. Go to the storage account, then “file shares” and then click on “Identity-based access”.

Select the option “Microsoft Entra Kerberos”.

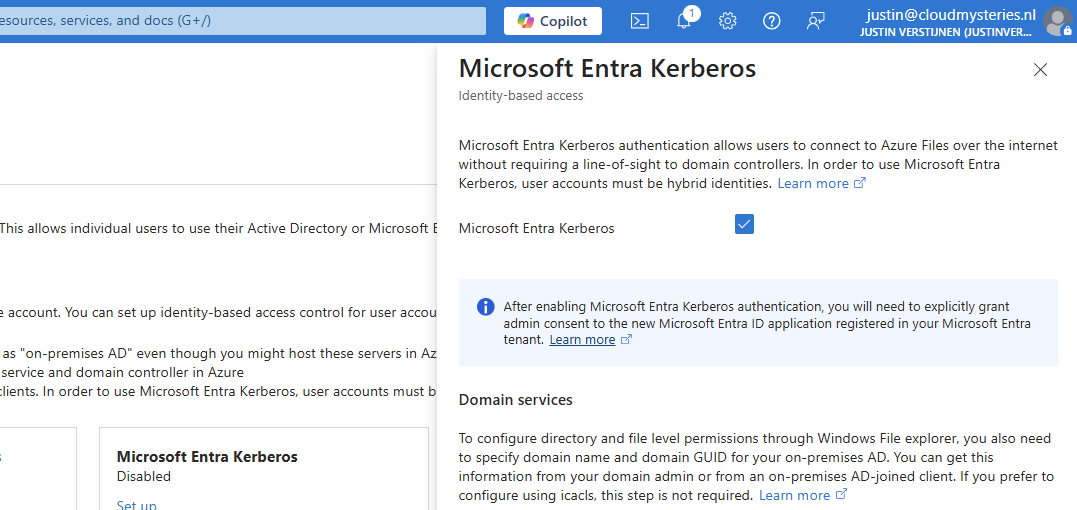

Enable Microsoft Entra Kerberos on this window.

After enabling this option, save and wait for a few minutes.

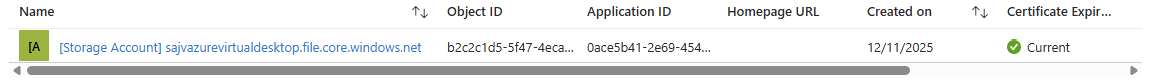

Enabling this option will create a new App registration in your Entra ID.

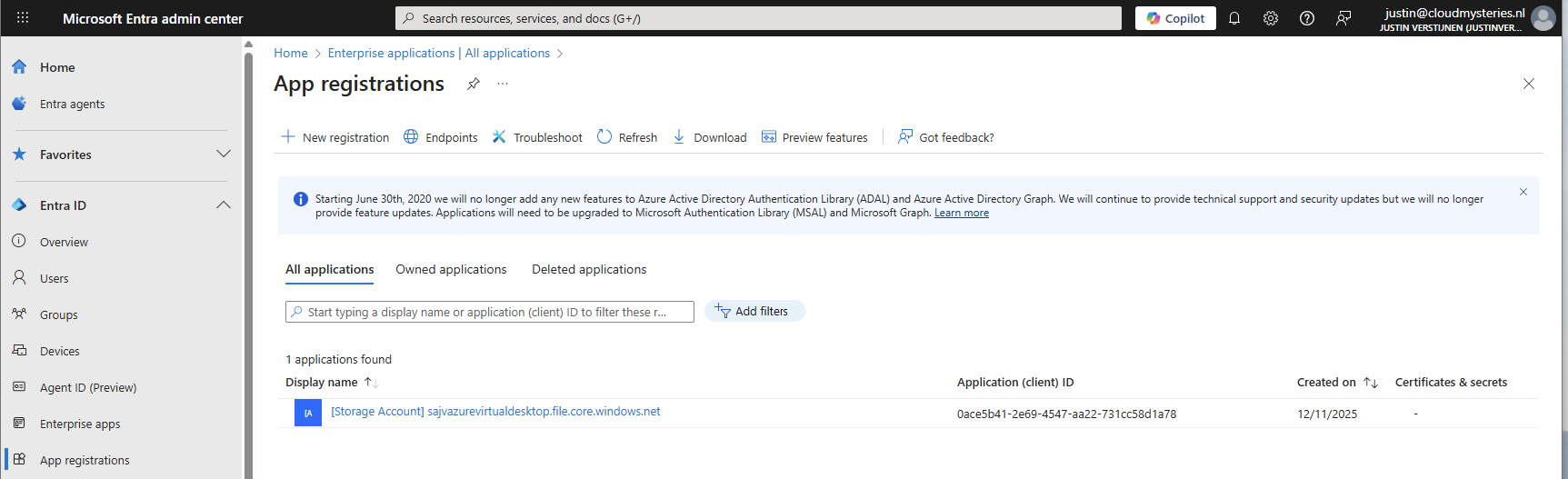

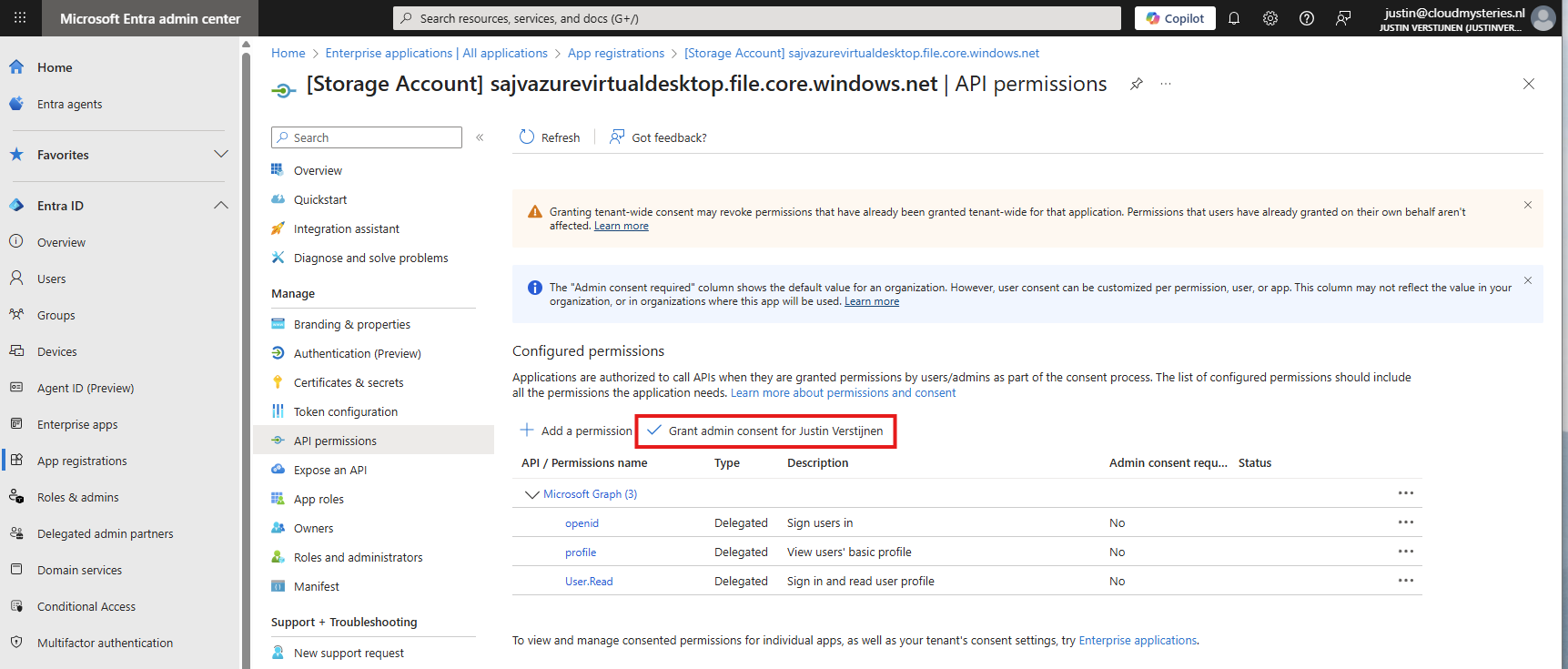

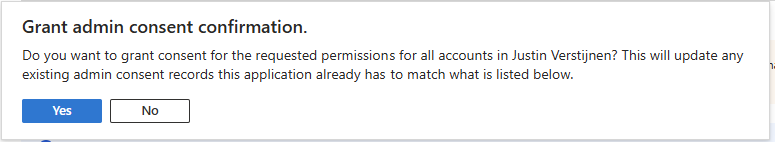

5: Configure the App registration

Now that we have enabled the Entra Kerberos option, an App registration has been created. It is used as the service principal for gaining access to the file share and acts as a layer between the user signing in to Azure Virtual Desktop and the file share.

Go to the Microsoft Entra portal: https://entra.microsoft.com

Head to “App registrations” and open the app registration that was just created. We need to grant it some permissions as administrator.

Then head to “API permissions”.

The required permissions are already filled in by Azure, but we need to grant admin consent as administrator. This tells Azure that the app may read our users and use their sign-in to access the file share.

Click on “Yes” to accept the permissions.

Without granting consent, the solution will not work, even when the portal states that admin consent is not required.

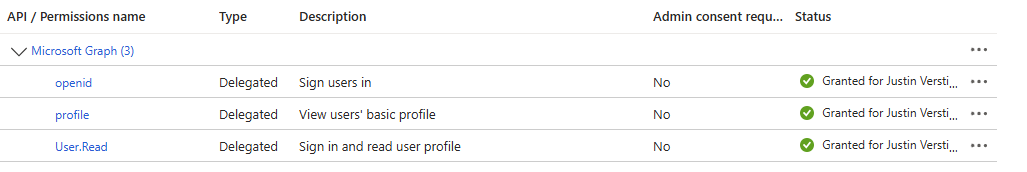

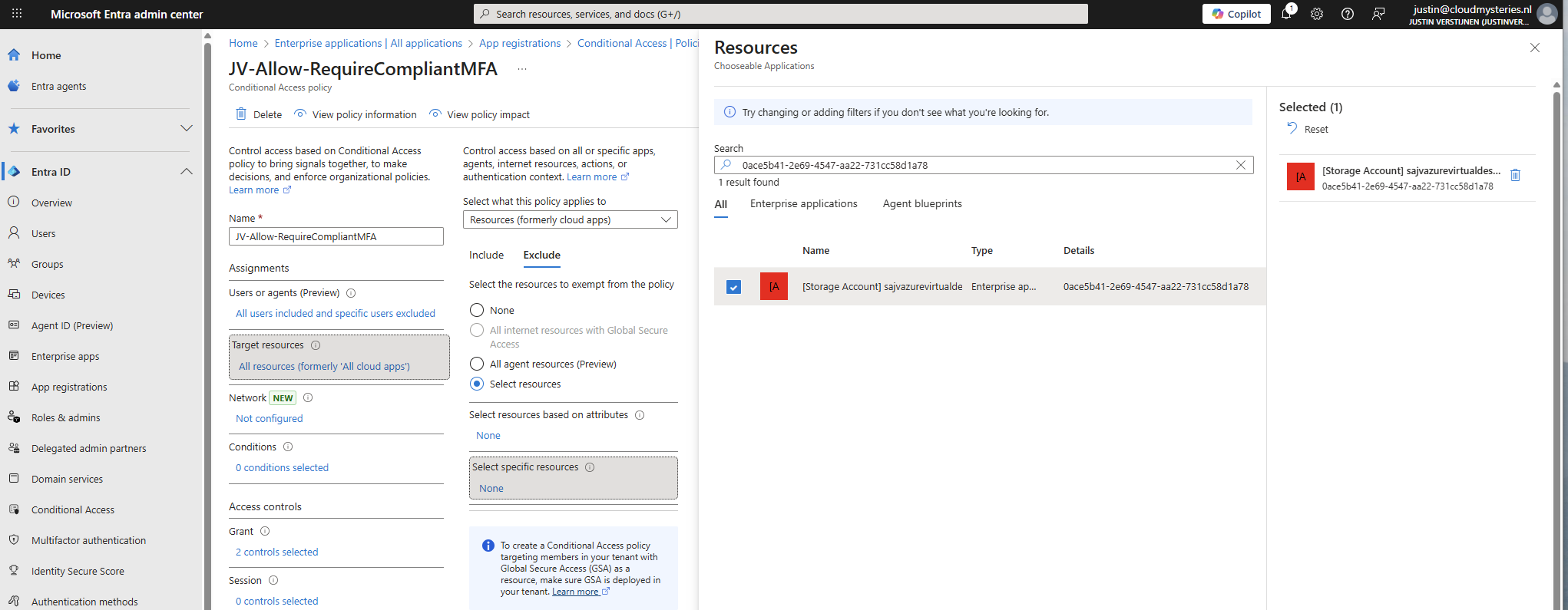

You also need to exclude the application from your Conditional Access policies. For every policy, add it as excluded resource:

In my case, the name did not show up, so I used the Application ID instead.

Add this to the excluded resources of every Conditional Access policy in your tenant to make sure the policies do not interrupt the sign-in to the file share.

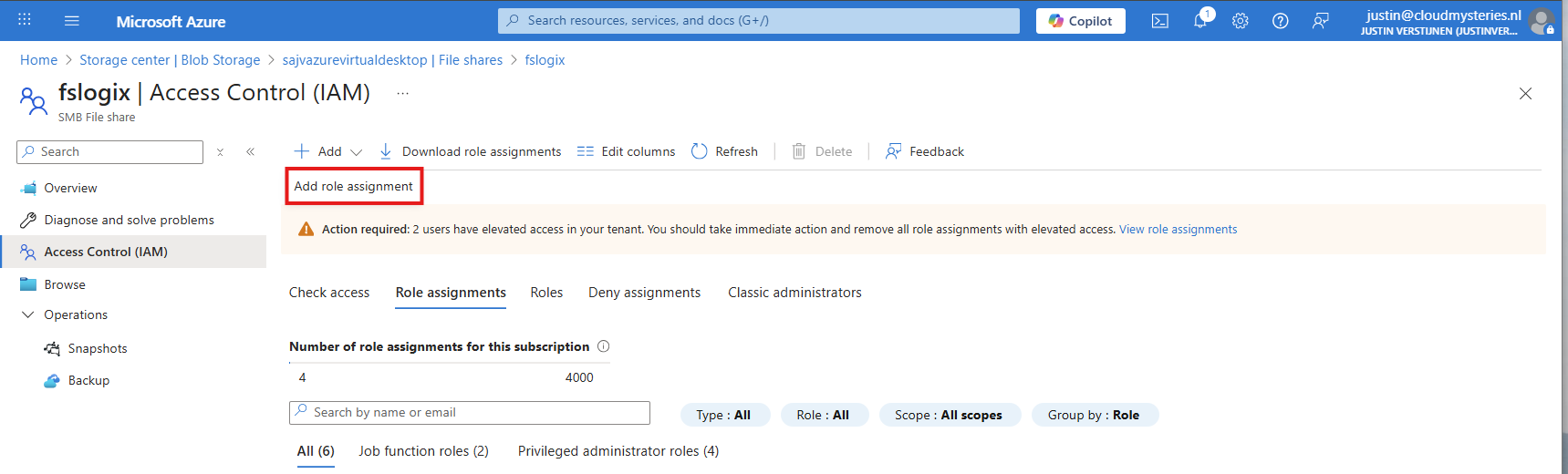
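With several Conditional Access policies, it is easy to miss one. As a sketch, assuming you have exported your policies as Microsoft Graph `conditionalAccessPolicy` JSON objects, this lists the policies that are not disabled and do not yet exclude the app registration. The application ID and the two policies below are placeholders.

```python
# Placeholder application (client) ID of the storage account's app registration
STORAGE_APP_ID = "11111111-2222-3333-4444-555555555555"

# Trimmed, hypothetical policies shaped like Graph conditionalAccessPolicy objects
policies = [
    {
        "displayName": "Require MFA for all users",
        "state": "enabled",
        "conditions": {"applications": {
            "includeApplications": ["All"],
            "excludeApplications": [STORAGE_APP_ID],
        }},
    },
    {
        "displayName": "Block legacy authentication",
        "state": "enabled",
        "conditions": {"applications": {
            "includeApplications": ["All"],
            "excludeApplications": [],
        }},
    },
]


def policies_missing_exclusion(policies: list[dict], app_id: str) -> list[str]:
    """Names of policies that are not disabled and do not exclude app_id."""
    missing = []
    for policy in policies:
        if policy.get("state") == "disabled":
            continue  # disabled policies are never evaluated
        apps = policy.get("conditions", {}).get("applications", {})
        excluded = {a.lower() for a in apps.get("excludeApplications", [])}
        if app_id.lower() not in excluded:
            missing.append(policy["displayName"])
    return missing


print(policies_missing_exclusion(policies, STORAGE_APP_ID))
# ['Block legacy authentication']
```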

6: Configure storage permissions

To give users and this solution access to the storage account, we need to configure the permissions on our storage account. We will give the created security group the Storage File Data SMB Share Contributor role to read and write the profile disks.

User permissions

Go to the storage account and open the file share. To keep the security scope narrow, we only assign permissions on the file share we created some steps earlier.

Open the file share and open the “Access Control (IAM)” blade and add a new role assignment.

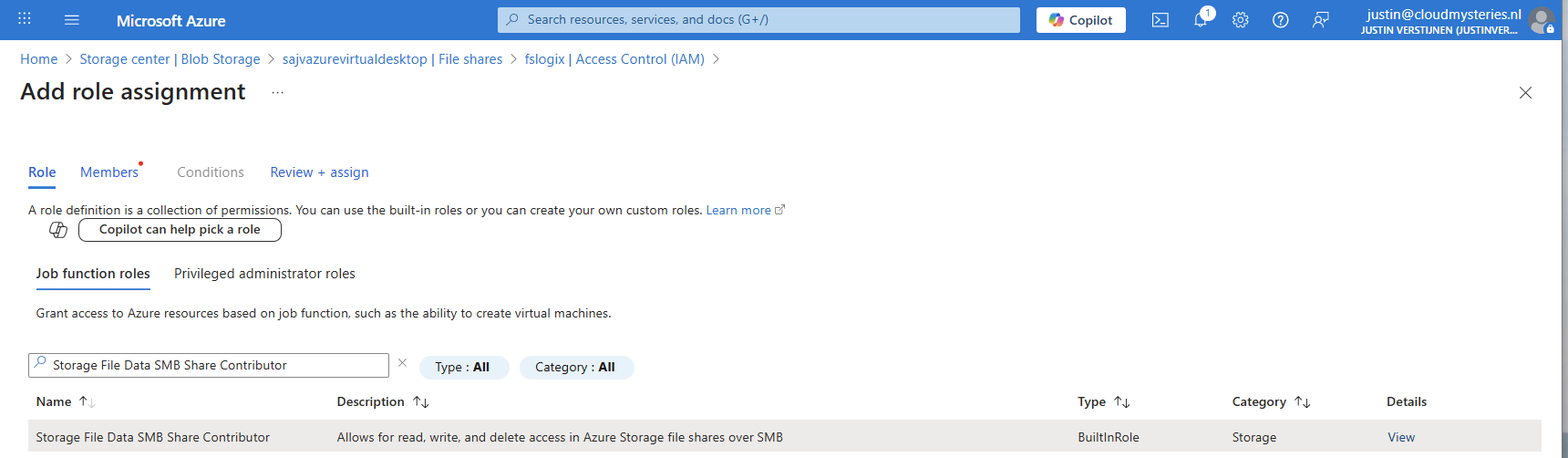

Now search for the role named:

- Storage File Data SMB Share Contributor

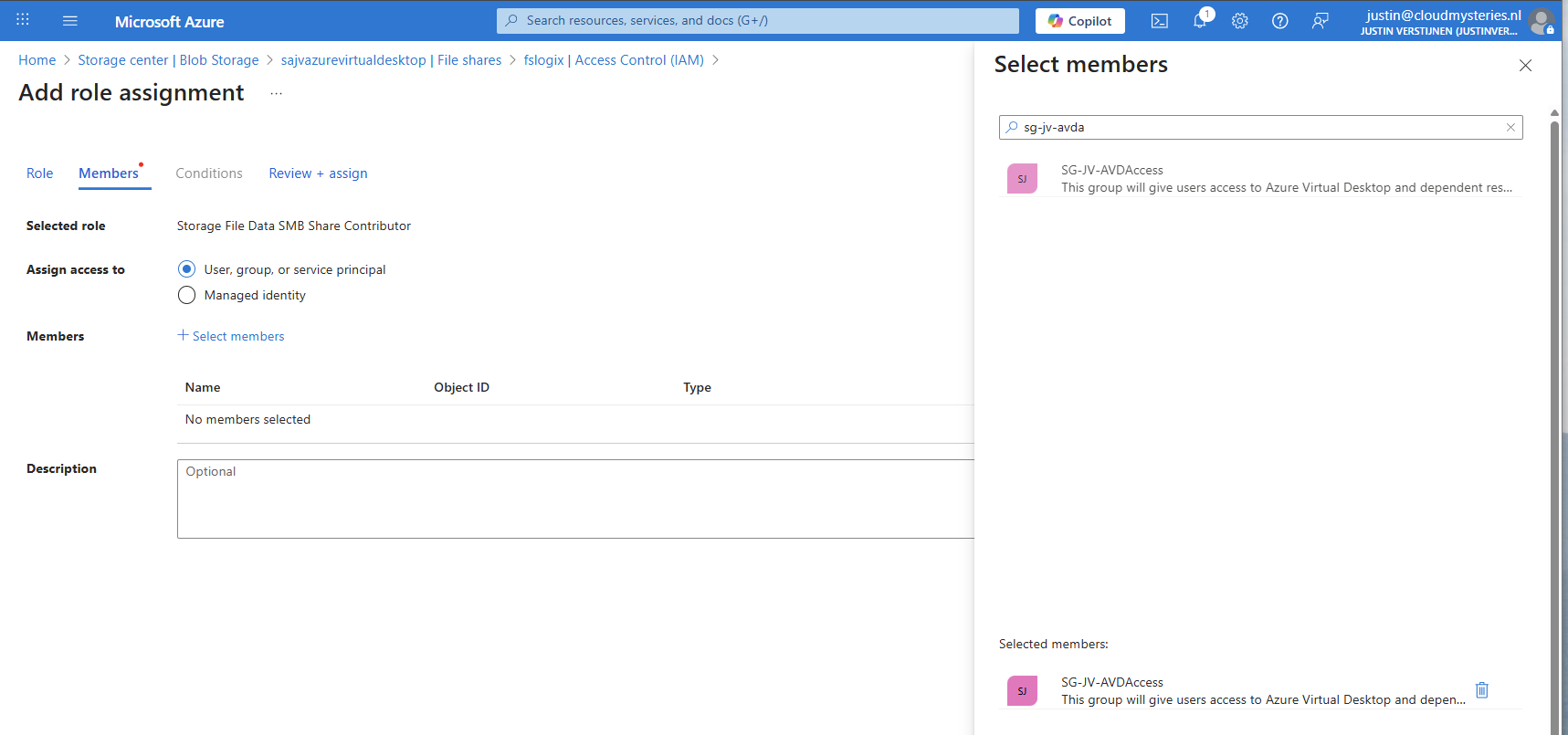

This role gives read/write access to the file share over the SMB protocol. We will assign this role to our created security group.

Click “Next” to get to the “Members” tab.

Search for your group and add it to the role. Then finish the wizard.

Administrator permissions

To view the profiles as administrator, we must give our accounts another role. This lets us use Microsoft Entra authentication in the portal, as we disabled storage account key access for security reasons.

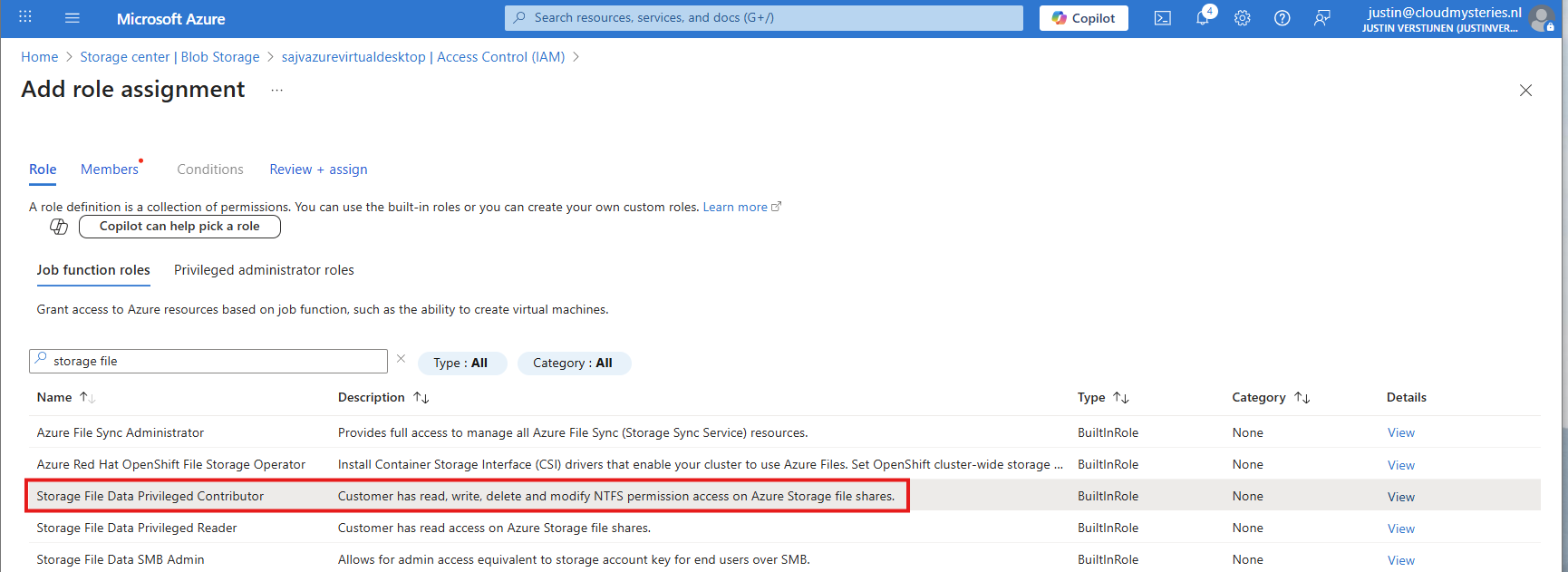

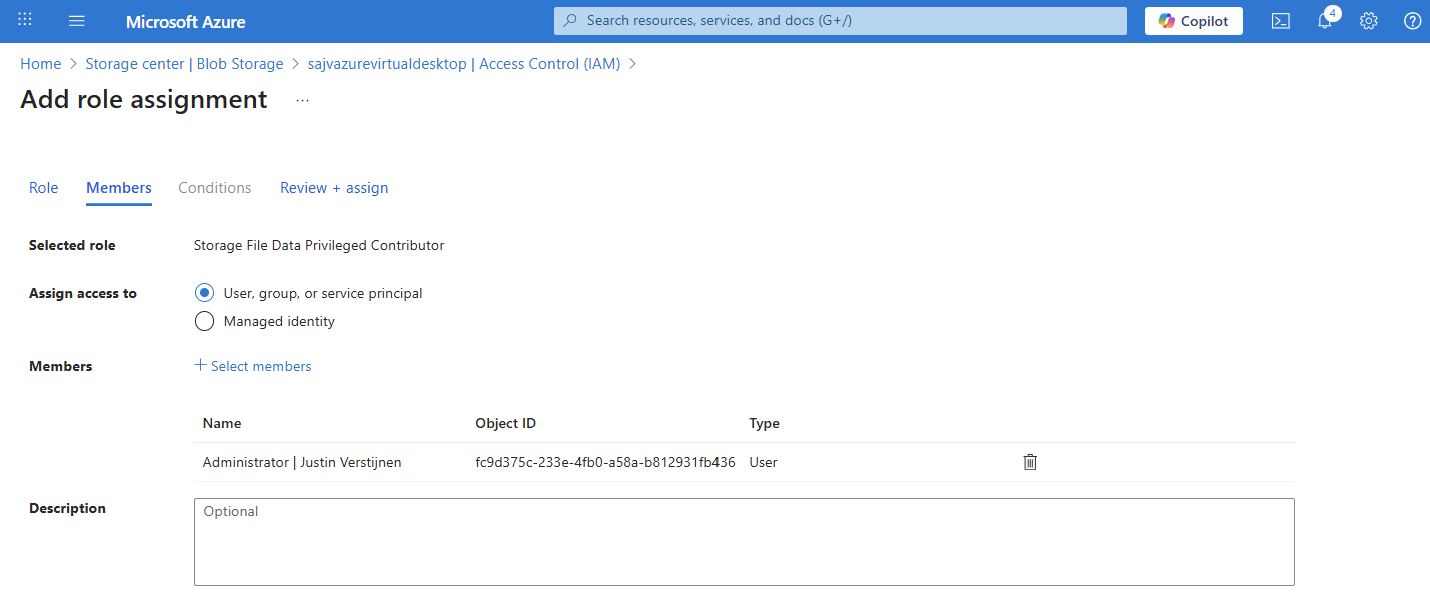

Again, add a new role assignment:

Search for the role: Storage File Data Privileged Contributor

Assign this to your administrator accounts:

Finish the wizard to make the assignment active.

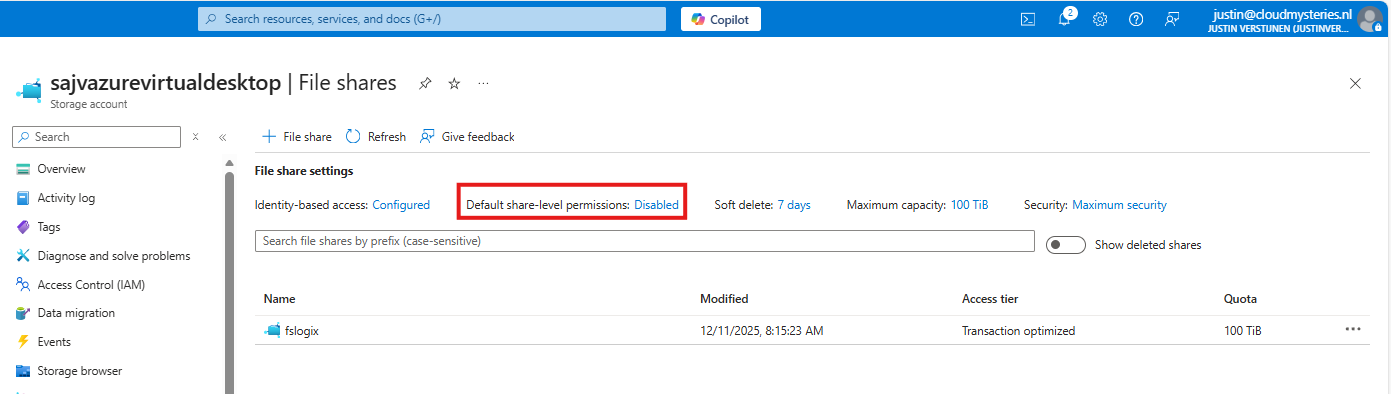

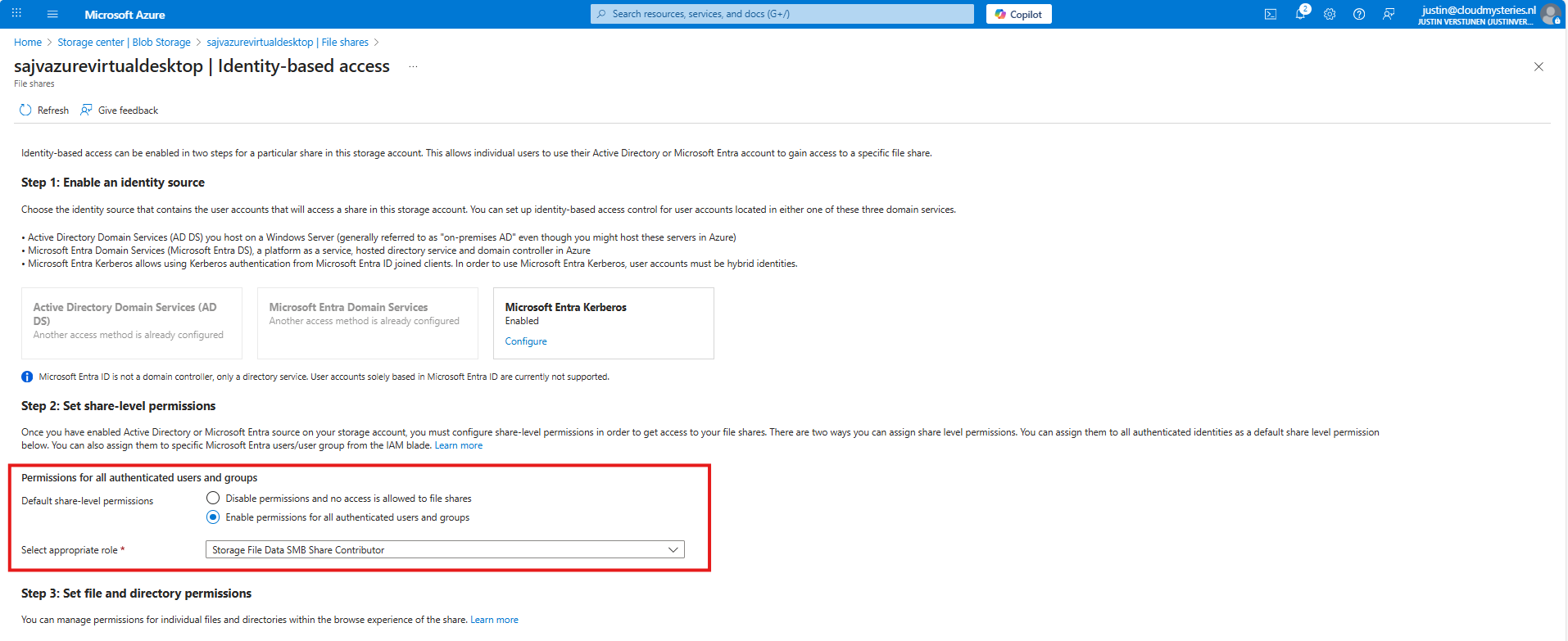

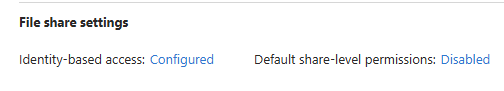

Default share-level permissions

We must also do one final configuration to the storage account permissions: setting the default share-level permissions. This is a requirement of this Microsoft Entra Kerberos setup.

Go back to the storage account, click on “File shares” and then click on “Default share-level permissions”.

Set the share-level permissions to “Enable permissions for all authenticated users and groups”. Also select the “Storage File Data SMB Share Contributor” role, which includes read/write permissions.

Save the configuration, and we will now dive into the session host configuration part.

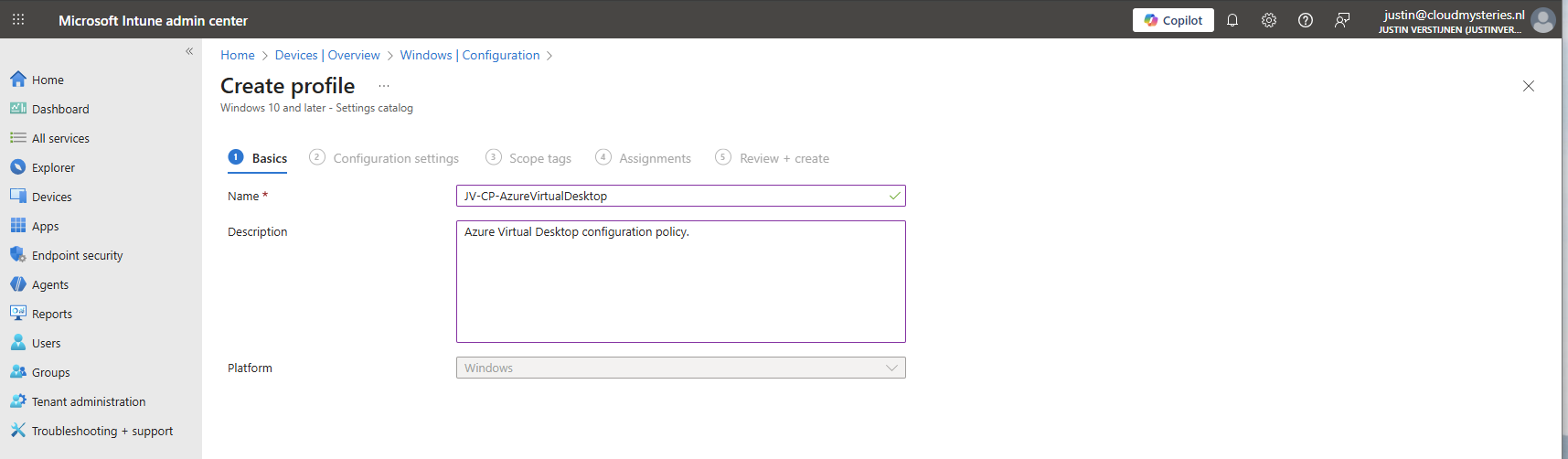

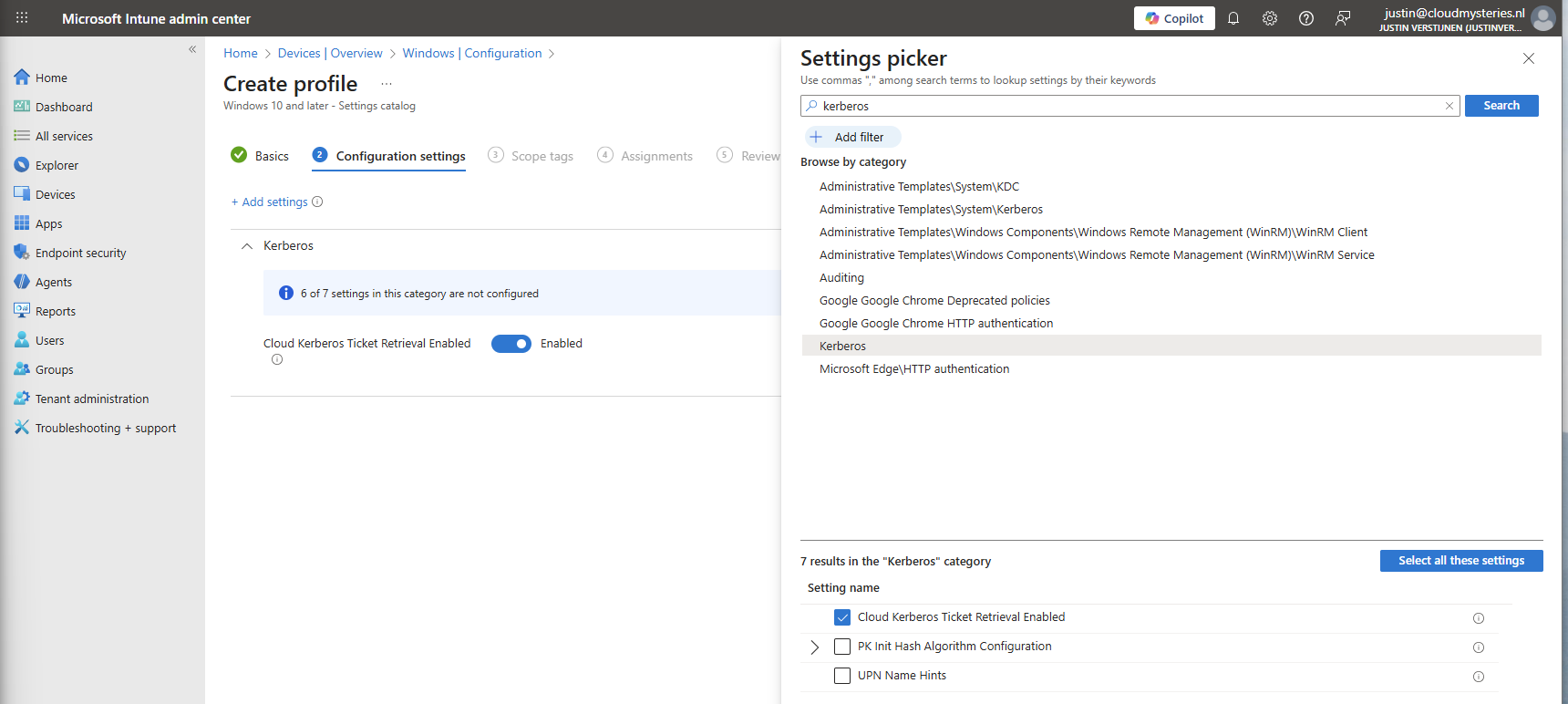
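Before moving on to the session hosts, the storage-side settings from steps 4 and 6 can be double-checked in the storage account's `azureFilesIdentityBasedAuthentication` properties, where Microsoft Entra Kerberos shows up as `AADKERB` and the default share-level permission as `StorageFileDataSmbShareContributor`. A minimal sketch against a hypothetical, trimmed sample of that JSON:

```python
# Hypothetical, trimmed `azureFilesIdentityBasedAuthentication` block
# from `az storage account show` output
identity_auth = {
    "directoryServiceOptions": "AADKERB",
    "defaultSharePermission": "StorageFileDataSmbShareContributor",
}


def entra_kerberos_findings(auth: dict) -> list[str]:
    """Return deviations from the storage settings in steps 4 and 6."""
    findings = []
    # Step 4: "AADKERB" means Microsoft Entra Kerberos
    # (other values are, for example, "None", "AD" and "AADDS")
    if auth.get("directoryServiceOptions") != "AADKERB":
        findings.append("Microsoft Entra Kerberos is not enabled")
    # Step 6: default share-level permission for all authenticated identities
    if auth.get("defaultSharePermission") != "StorageFileDataSmbShareContributor":
        findings.append("Default share-level permission is not SMB Share Contributor")
    return findings


print(entra_kerberos_findings(identity_auth))  # []
```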

7: Intune configuration for AVD hosts

Now we need to configure the following setting for our AVD hosts in Intune:

- Cloud Kerberos Ticket Retrieval: This setting allows cloud devices to obtain Kerberos tickets from Microsoft Entra ID by using cloud credentials, which are then used against SMB file shares

Go to the Intune Admin center (https://intune.microsoft.com). We need to create or change an existing configuration policy.

Search for “Kerberos”, select the “Cloud Kerberos Ticket Retrieval” option and enable it.

Then assign the configuration policy to your AVD hosts to apply this configuration.

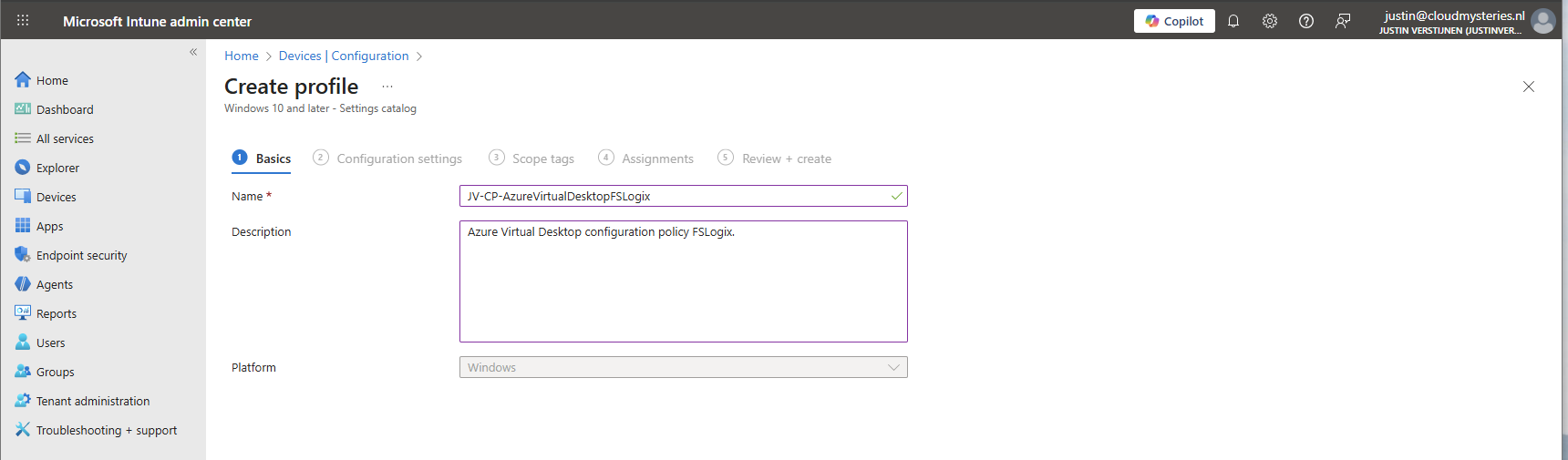

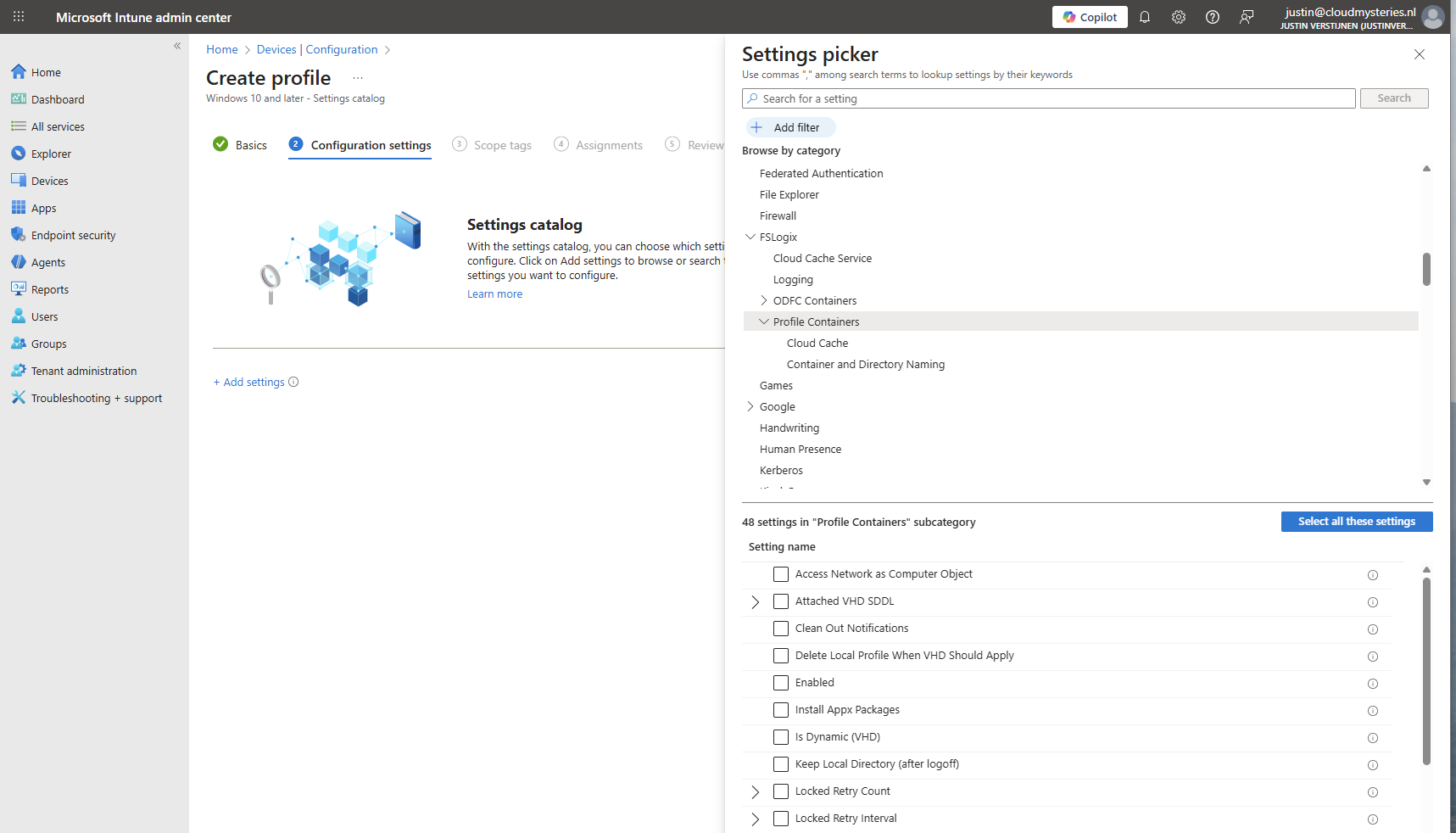

8: FSLogix configuration

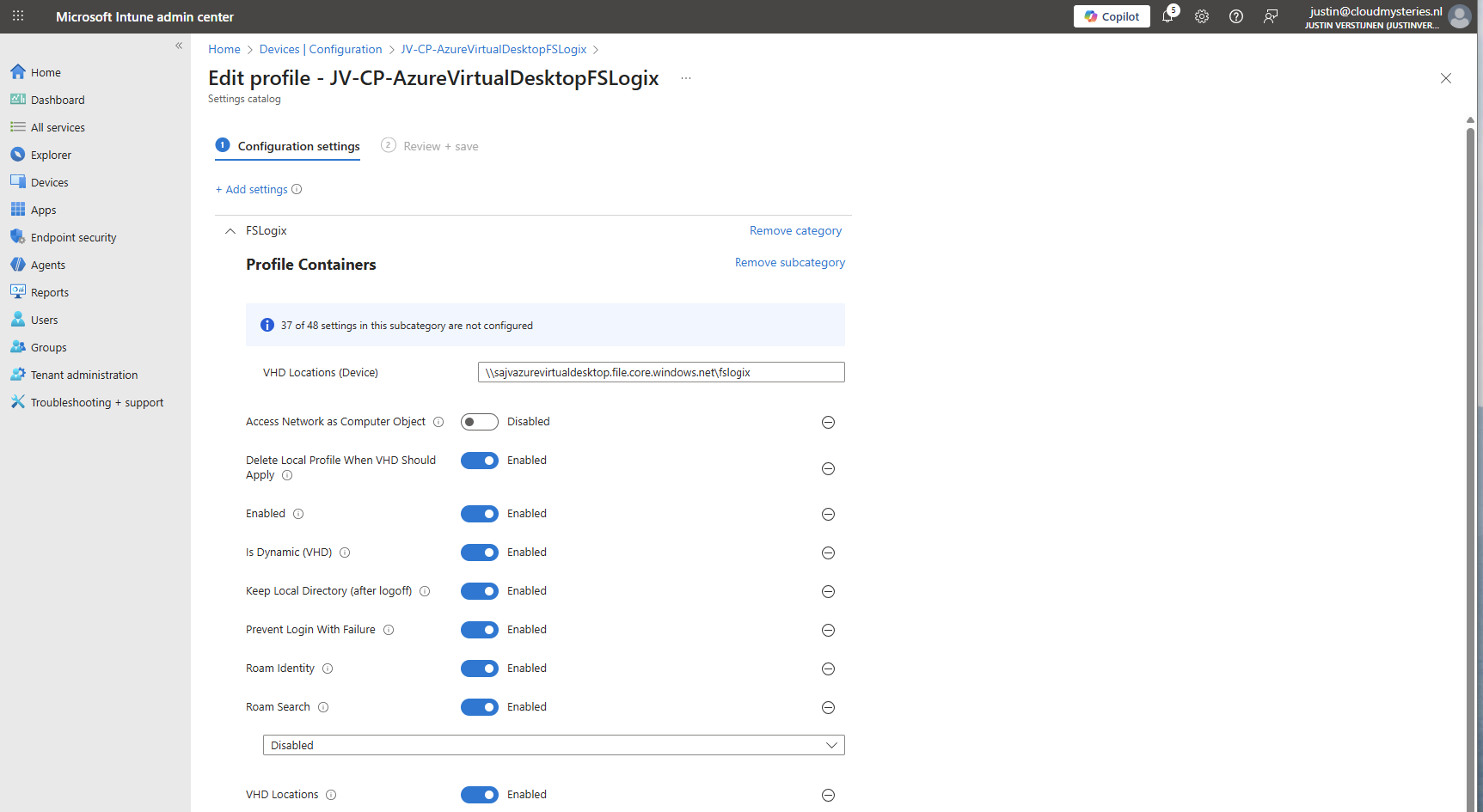

We can now configure FSLogix in Intune. I do this by using configuration profiles from settings catalogs. These are easy to configure and can be imported and exported.

To configure this, create a new configuration profile for “Windows 10 and later” and use the “Settings catalog” profile type.

Give the profile a name and description and advance.

Click on “Add settings” and navigate to the FSLogix policy settings.

Profile Container settings

Under FSLogix -> Profile Containers, select the following settings, enable them and configure them:

| etting name | Value |

| Access Network as Computer Object | Disabled |

| Delete Local Profile When VHD Should Apply | Enabled |

| Enabled | Enabled |

| Is Dynamic (VHD) | Enabled |

| Keep Local Directory (after logoff) | Enabled |

| Prevent Login With Failure | Enabled |

| Roam Identity | Enabled |

| Roam Search | Disabled |

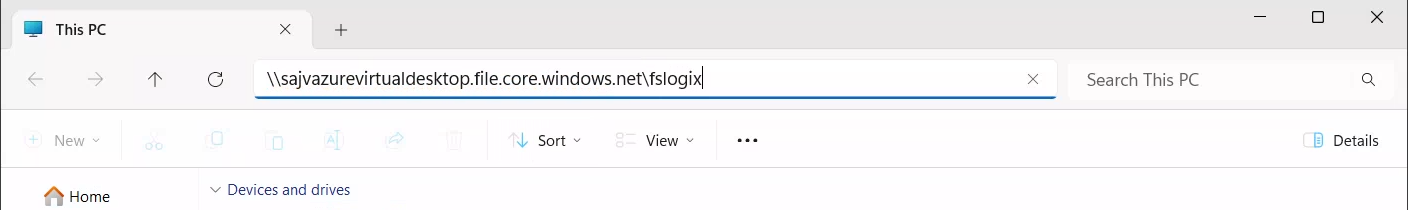

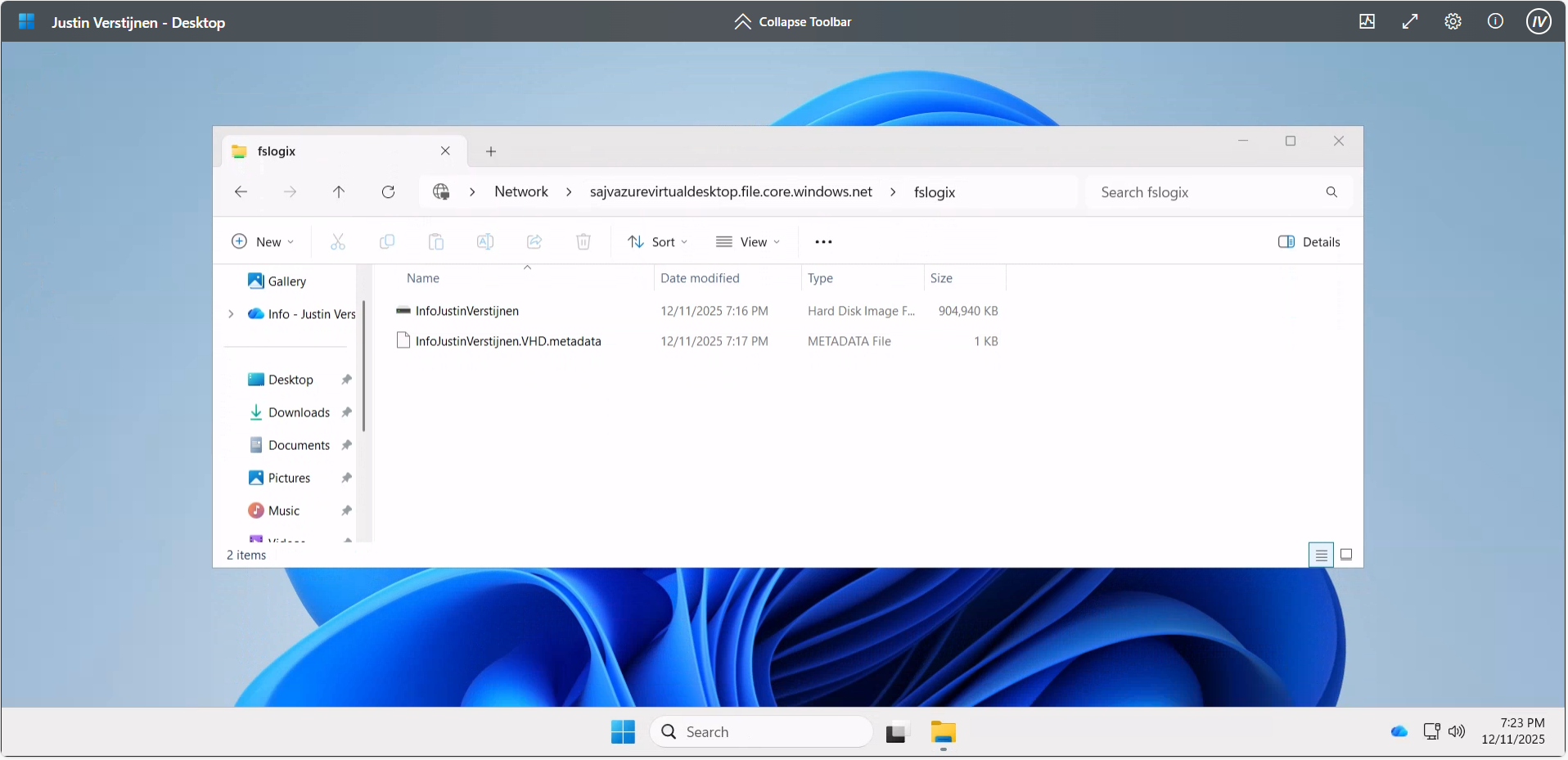

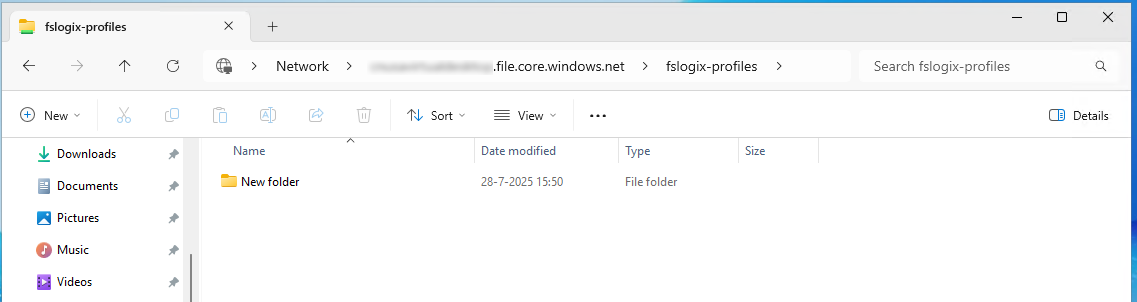

| VHD Locations | Your storage account and share in UNC. Mine is here: \sajvazurevirtualdesktop.file.core.windows.net\fslogix |

Make sure the option “Access Network as Computer Object” is Disabled, as this is a requirement for user authentication. Otherwise, the solution will not work and sign-in will fail with the FSLogix error “Error code: 0x000000035, Message: Impossible to find network path”.
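For troubleshooting on a session host, it can help to compare the applied values with what you intended. This sketch assumes the settings catalog policy lands as the usual FSLogix registry values under `HKLM\SOFTWARE\FSLogix\Profiles` (for example `AccessNetworkAsComputerObject` and `VHDLocations`). It uses a hand-written dictionary instead of reading the registry, so it runs anywhere, and checks the three settings this step depends on most.

```python
# Hypothetical values under HKLM\SOFTWARE\FSLogix\Profiles, written by hand
# to match the table above (1 = Enabled, 0 = Disabled)
profile_settings = {
    "Enabled": 1,
    "AccessNetworkAsComputerObject": 0,
    "DeleteLocalProfileWhenVHDShouldApply": 1,
    "IsDynamic": 1,
    "KeepLocalDir": 1,
    "PreventLoginWithFailure": 1,
    "RoamIdentity": 1,
    "RoamSearch": 0,
    "VHDLocations": r"\\sajvazurevirtualdesktop.file.core.windows.net\fslogix",
}


def is_unc_share(path: str) -> bool:
    """True for UNC paths: two leading backslashes, then server and share names."""
    if not path.startswith("\\\\"):
        return False
    parts = path[2:].split("\\")
    return len(parts) >= 2 and all(parts[:2])


def fslogix_findings(settings: dict) -> list[str]:
    findings = []
    if settings.get("Enabled") != 1:
        findings.append("Profile Containers are not enabled")
    # Must be Disabled, otherwise sign-in fails with the
    # network path error described above
    if settings.get("AccessNetworkAsComputerObject", 0) != 0:
        findings.append("AccessNetworkAsComputerObject must be 0 (Disabled)")
    if not is_unc_share(settings.get("VHDLocations", "")):
        findings.append("VHDLocations is not a UNC path like \\\\server\\share")
    return findings


print(fslogix_findings(profile_settings))  # []
```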

Container naming settings

Under FSLogix -> Profile Containers -> Container and Directory Naming, select the following settings, enable them and configure them:

| Setting name | Value |
|---|---|
| No Profile Containing Folder | Enabled |
| VHD Name Match | %username% |
| VHD Name Pattern | %username% |
| Volume Type (VHD or VHDX) | VHDX |

You can change this configuration to fit your needs; this is simply how I configured FSLogix to keep the configuration as simple and effective as possible.
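With “No Profile Containing Folder” enabled and “VHD Name Pattern” set to %username%, each profile disk should land directly in the root of the share. The sketch below builds that expected path so you know what to look for in the file share after a first sign-in. It is a simplification under stated assumptions: only %username% is expanded, and `jdoe` is a hypothetical user name.

```python
def expected_profile_path(share_unc: str, username: str,
                          vhd_name_pattern: str = "%username%",
                          volume_type: str = "VHDX") -> str:
    """Path of the profile disk FSLogix should create for `username`.

    Assumes "No Profile Containing Folder" is enabled (disk in the share
    root) and only expands %username%; other pattern variables are ignored.
    """
    file_name = vhd_name_pattern.replace("%username%", username)
    share = share_unc.rstrip("\\")  # tolerate a trailing backslash
    return share + "\\" + file_name + "." + volume_type


share = r"\\sajvazurevirtualdesktop.file.core.windows.net\fslogix"
print(expected_profile_path(share, "jdoe"))
# \\sajvazurevirtualdesktop.file.core.windows.net\fslogix\jdoe.VHDX
```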

Save the policy and assign this to your AVD hosts.

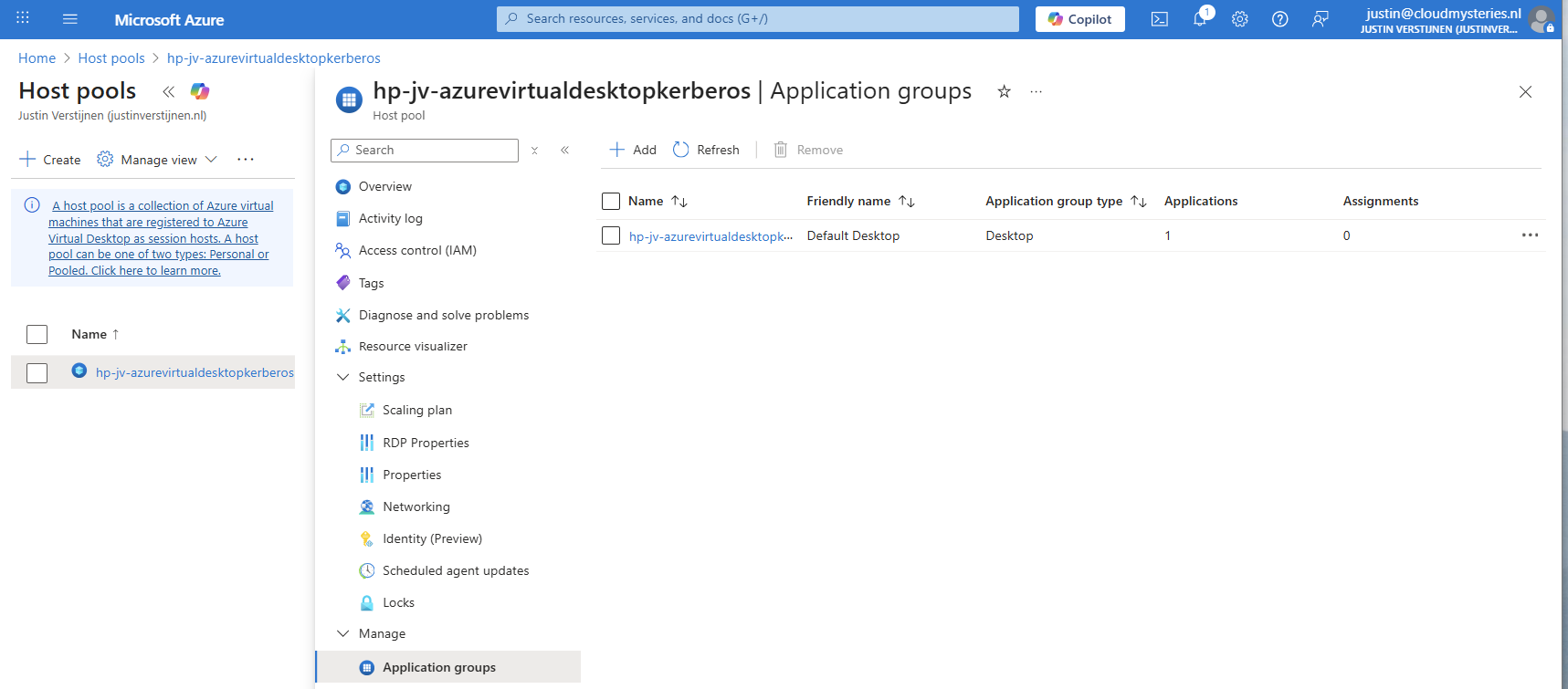

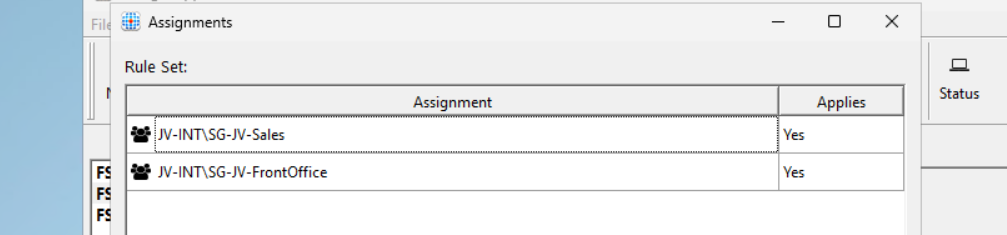

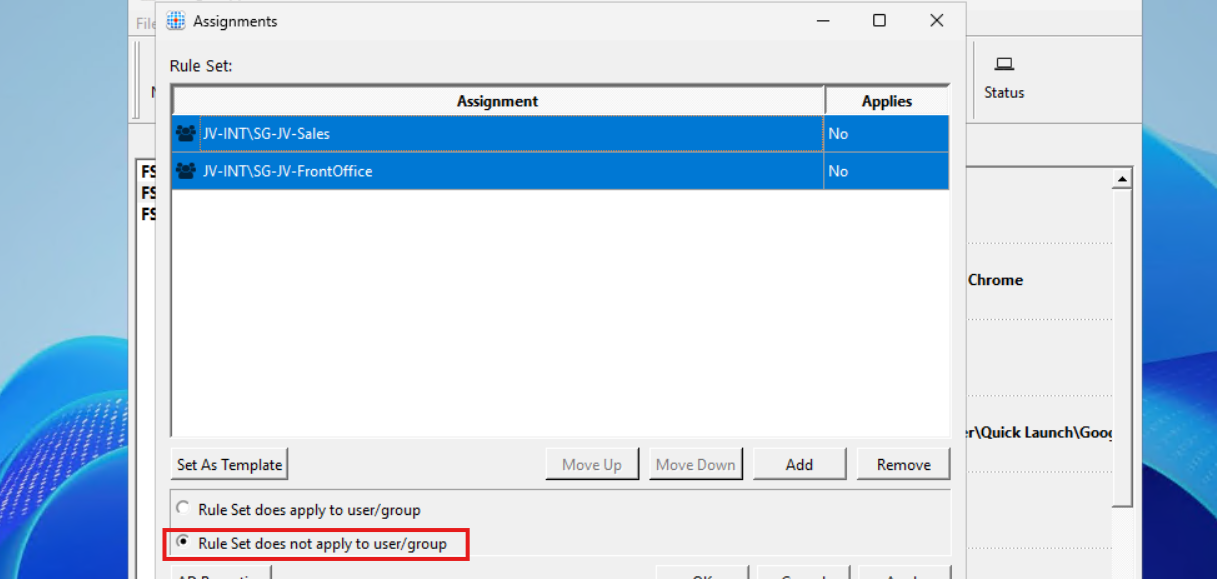

9: Preparing the hostpool

We need to do a few small final configurations to give users access to the virtual desktops.

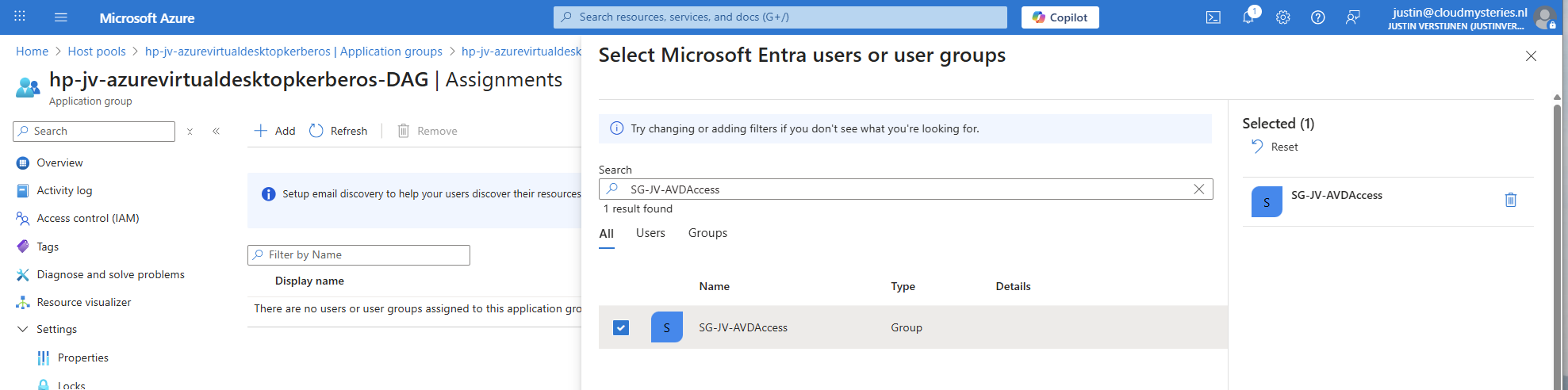

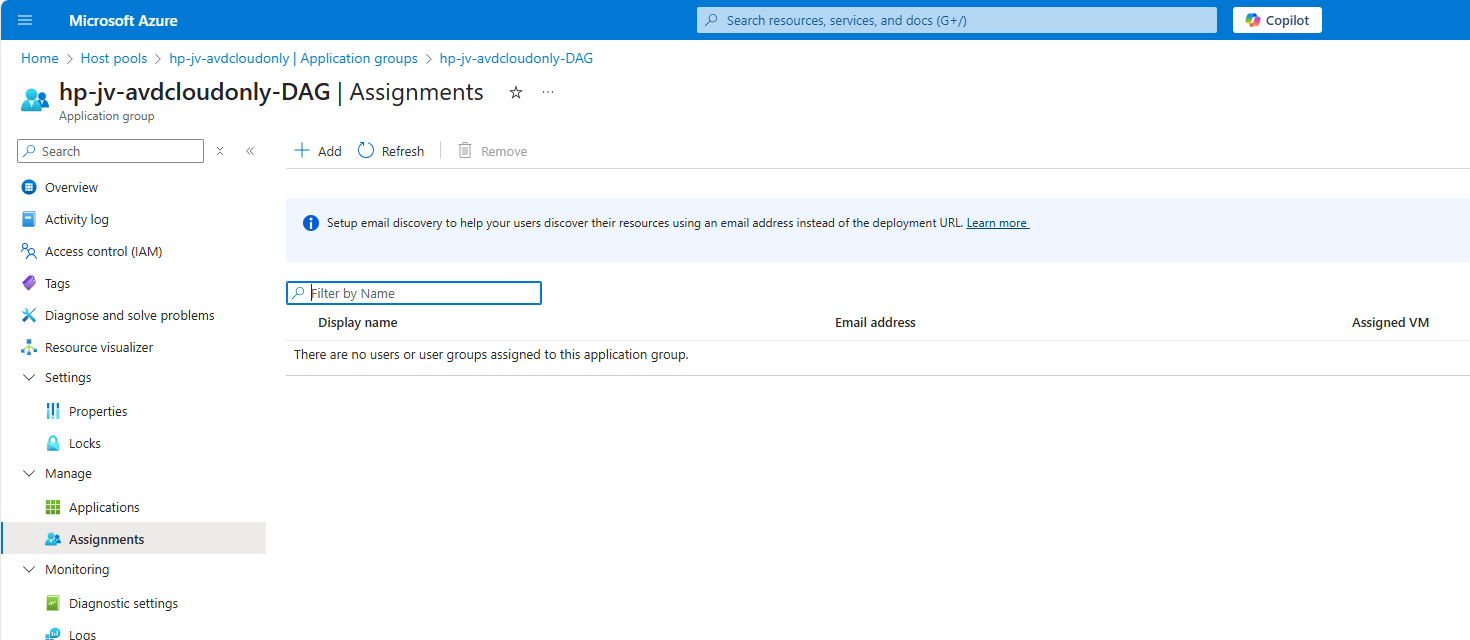

Go to the hostpool and then to Application Groups.

Then open the application group that contains the desktop. Then click on “Assignments”.

Select the group to give desktop access to the users. Then save the assignment.

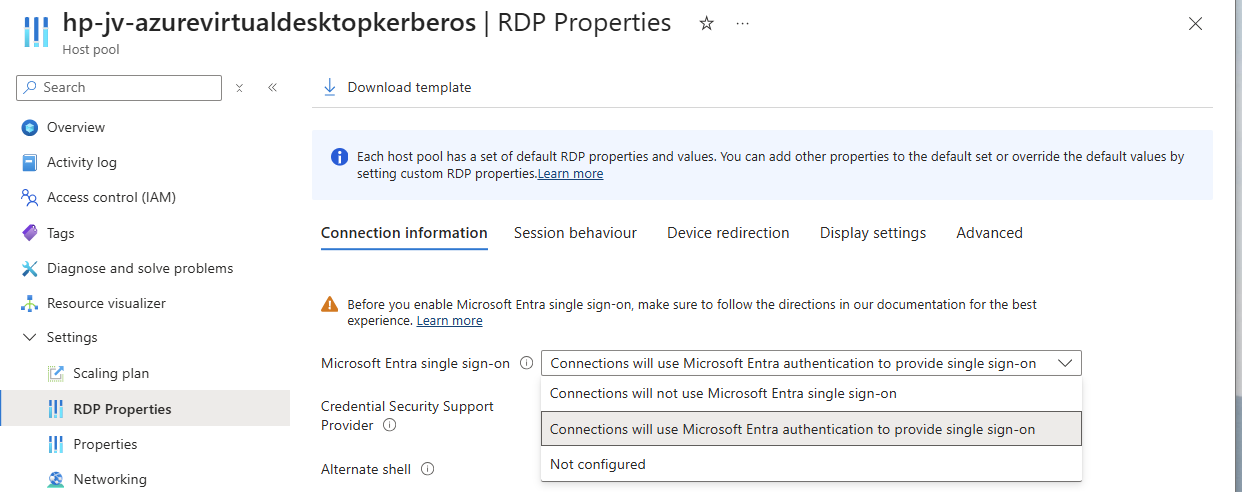

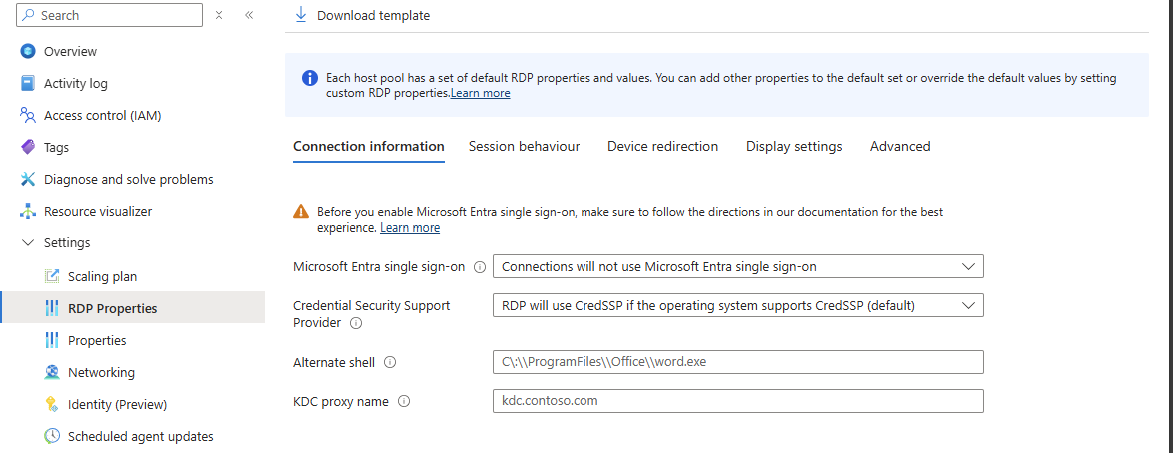

After assigning the group, there is one last configuration: enabling single sign-on on the hostpool. Go to your hostpool and open the RDP Properties.

For a comprehensive guide about Azure Virtual Desktop and RDP Properties, visit: https://justinverstijnen.nl/azure-virtual-desktop-rdp-properties/

On the “Connection Information” tab, select the “Microsoft Entra single sign-on” option and set this to provide single sign-on. Then save the configuration.

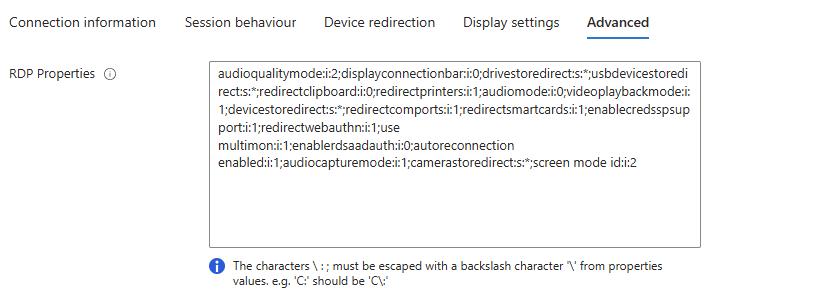

At this point, my advanced RDP Properties configuration is:

drivestoredirect:s:;usbdevicestoredirect:s:;redirectclipboard:i:0;redirectprinters:i:0;audiomode:i:0;videoplaybackmode:i:1;devicestoredirect:s:*;redirectcomports:i:1;redirectsmartcards:i:1;enablecredsspsupport:i:1;redirectwebauthn:i:1;use multimon:i:1;enablerdsaadauth:i:1

10: Connecting to the hostpool

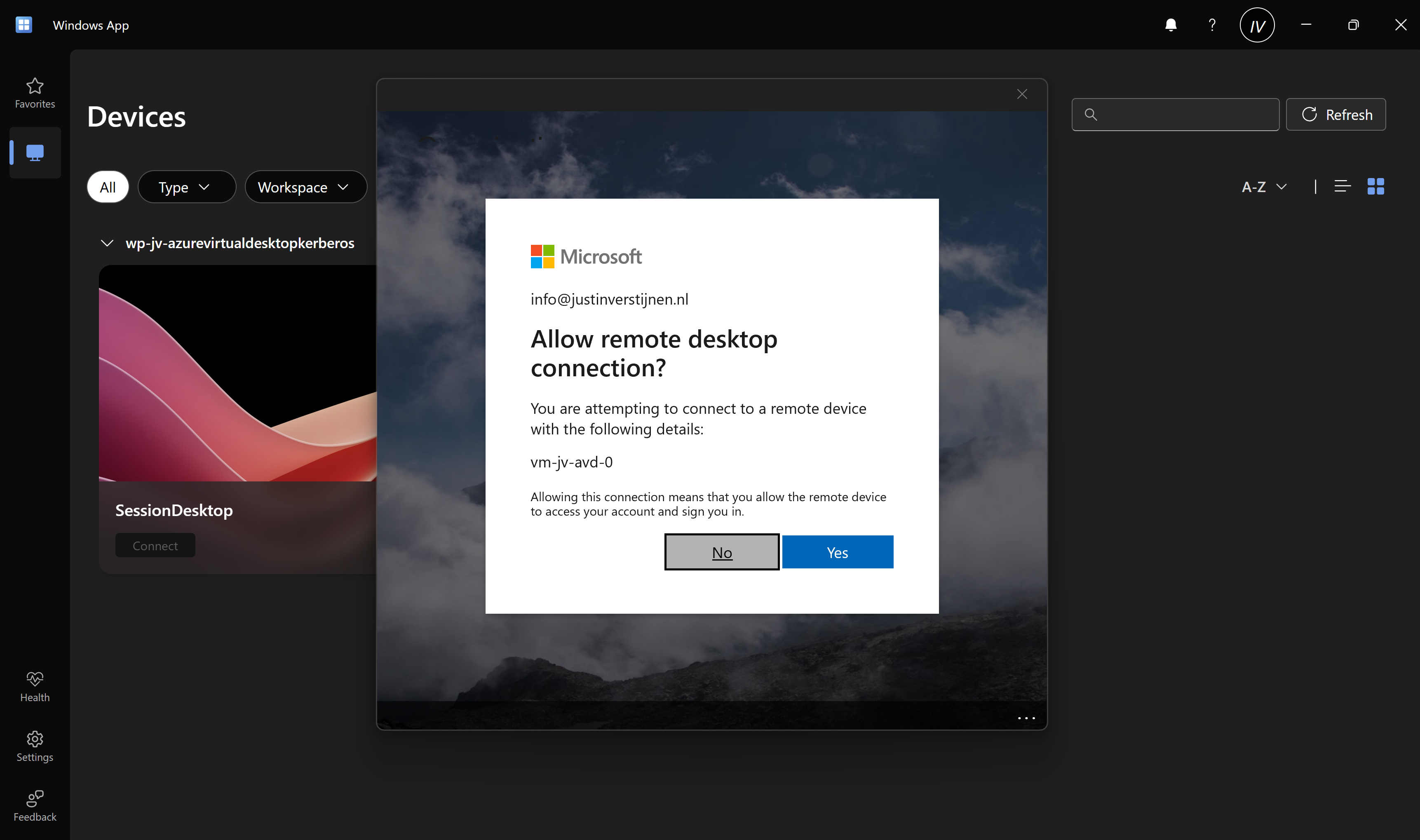

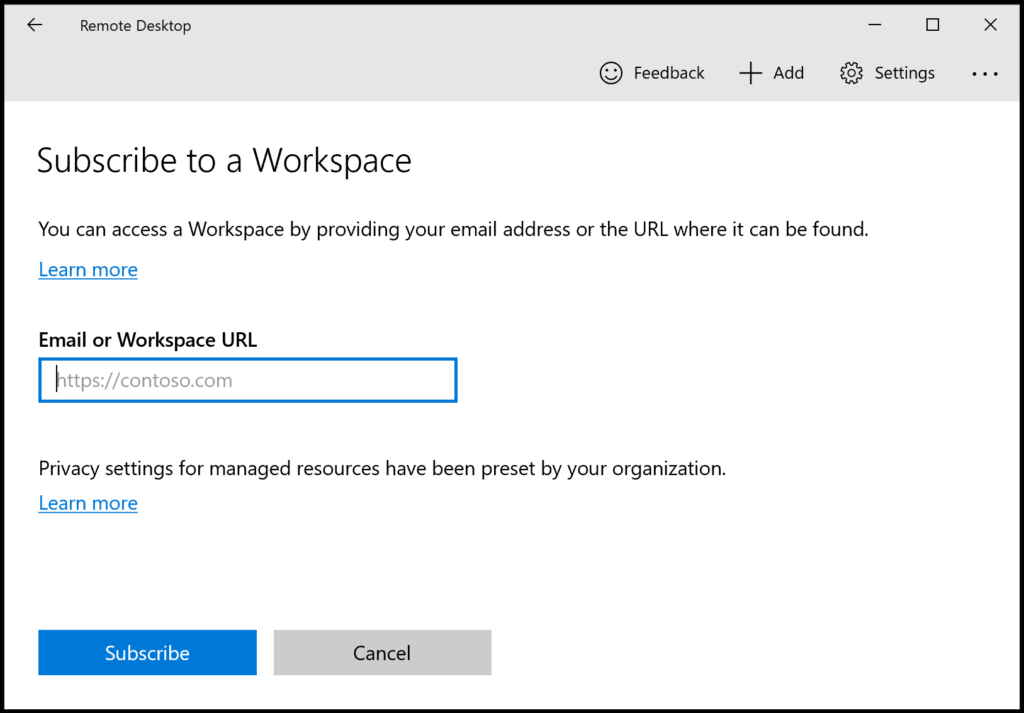

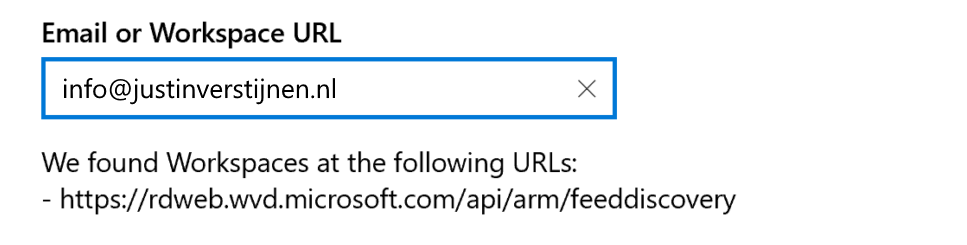

Now that everything is ready under the hood, we can finally connect to our hostpool. Download the Windows App or use the web client and sign in to your account:

Also click on “Yes” on the Single sign-on prompt to allow the remote desktop connection.

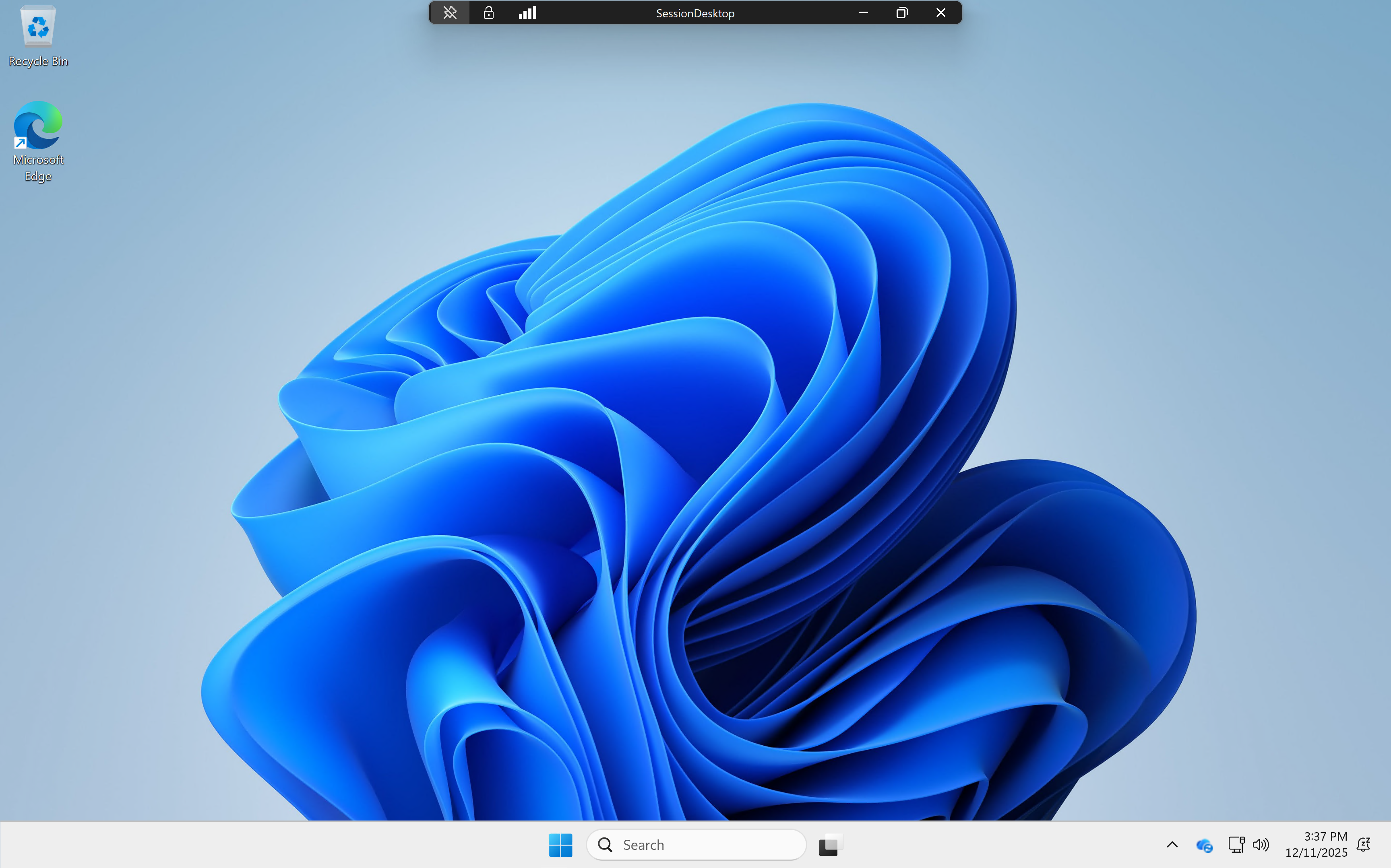

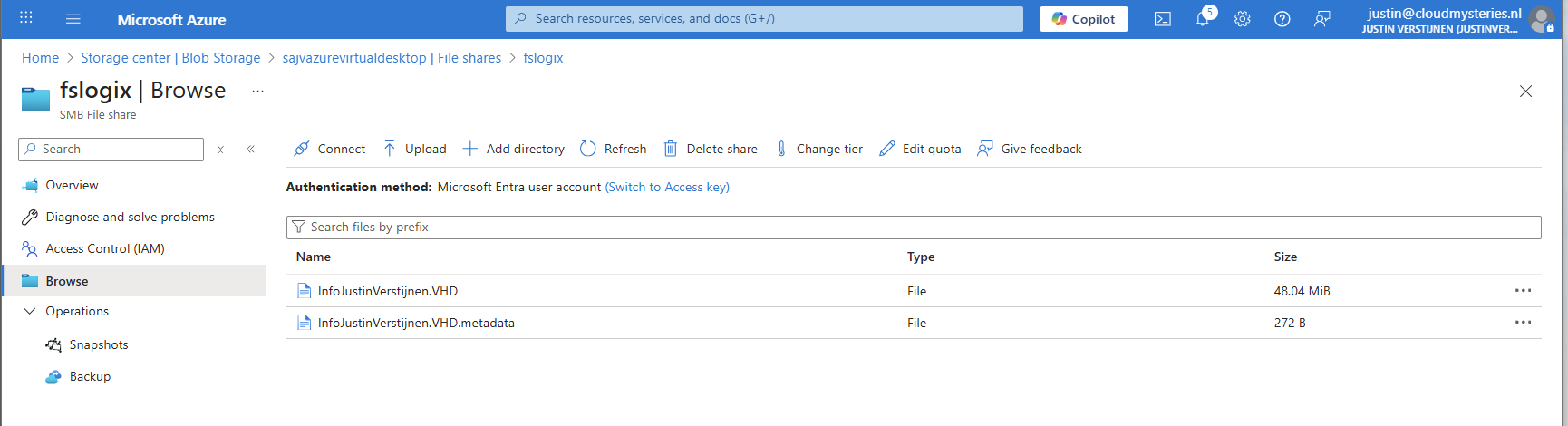

Here we are on our freshly created desktop. After connecting, the FSLogix profile is automatically created on the storage account.

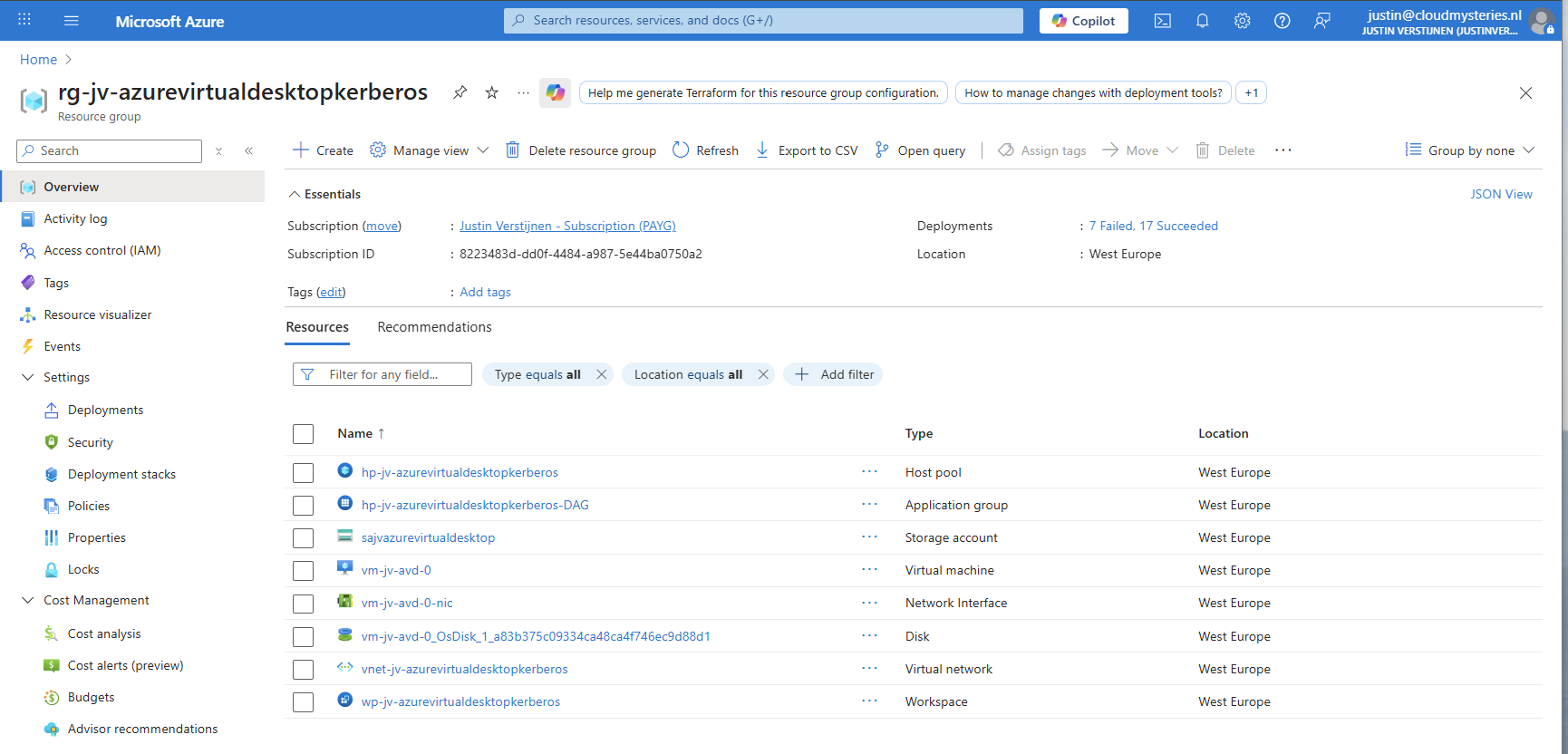

And all of this with only these resources:

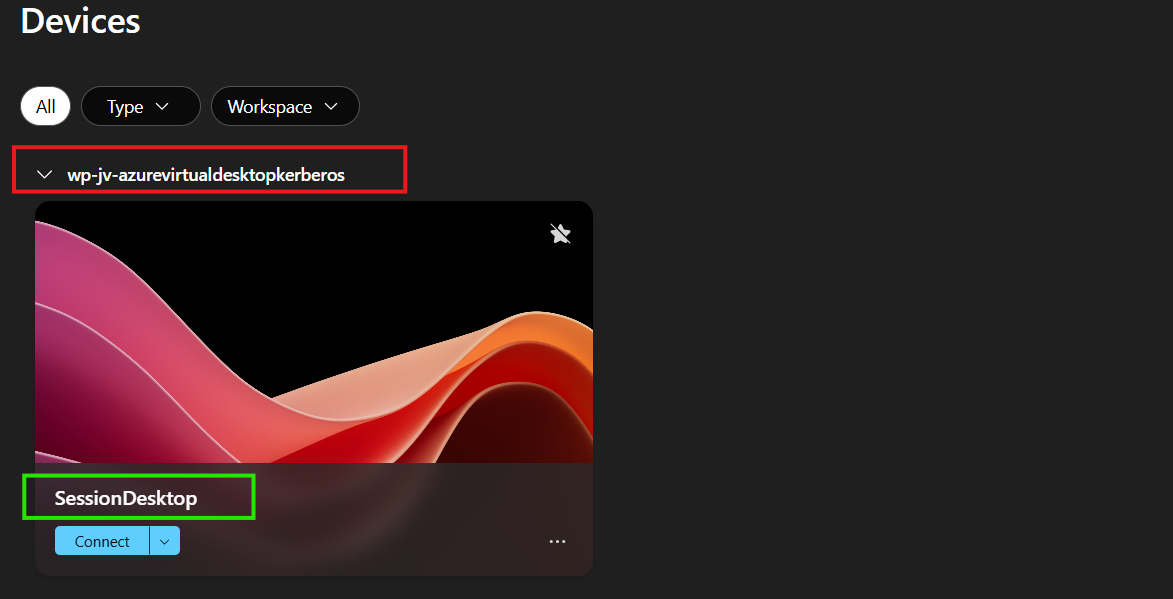

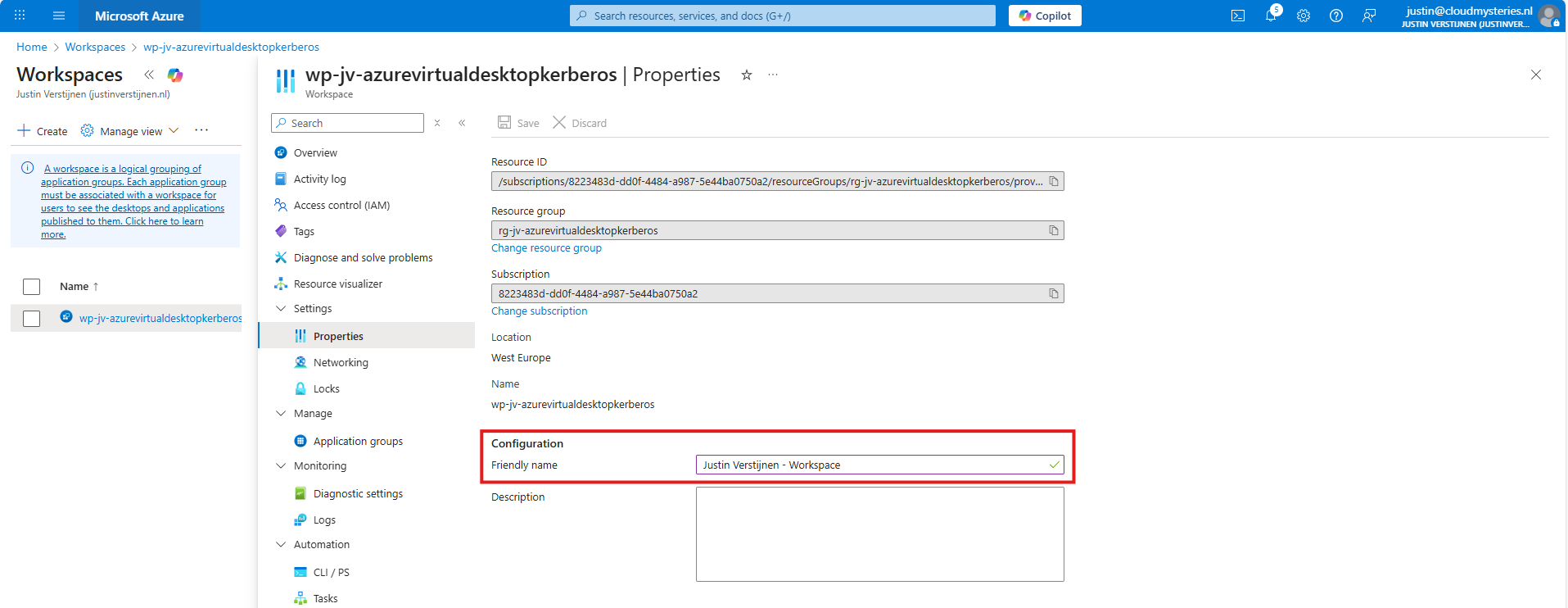

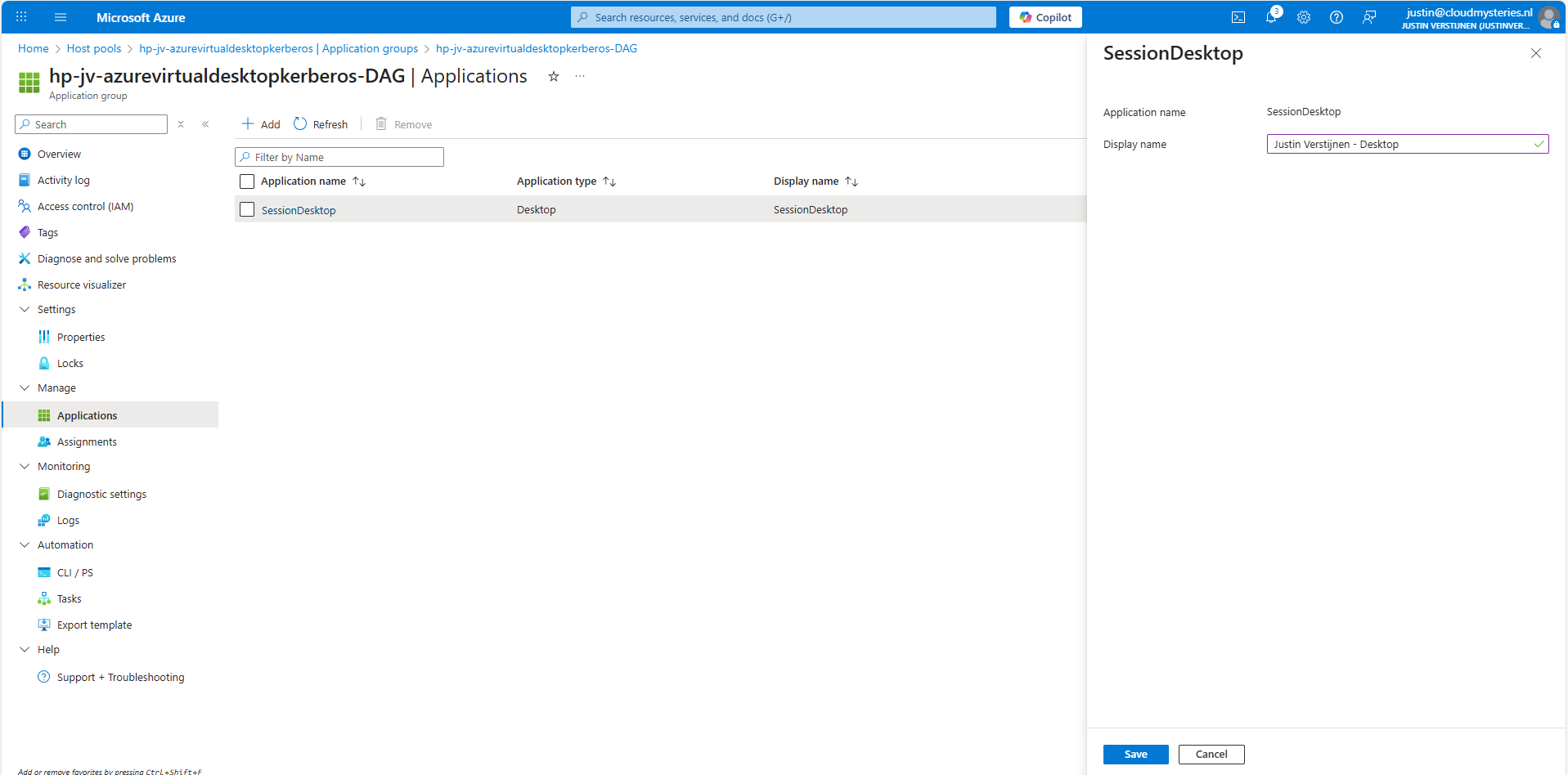

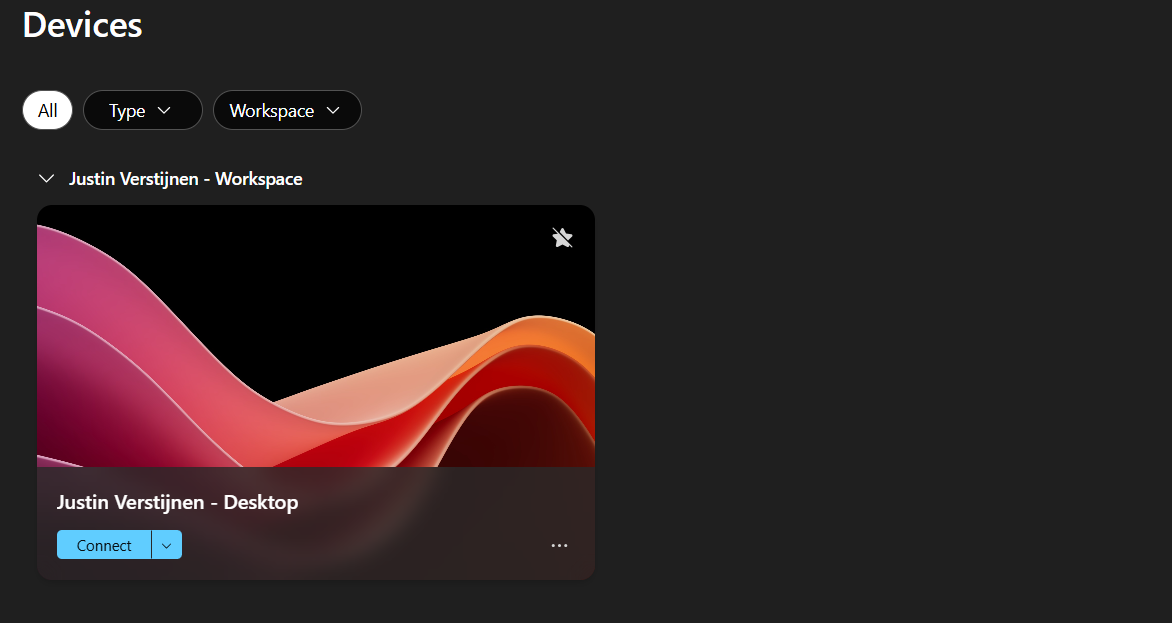

11: Shaping your AVD Workspace (optional)

In the Windows App, you get a workspace to connect to your desktop. By default, the names are filled in automatically, but it is possible to change them for a better user experience.

The red block can be changed in the Workspace -> Friendly name and the green block can be changed in the Application Group -> Application -> Session Desktop.

For the red block, go to your Workspace, then to Properties and change and save the friendly name:

For the green block, go to your application groups, and then the Desktop Application Group (DAG) and select the SessionDesktop application. You can change and save the name here.

After refreshing the workspace, this looks a lot better to the end user:

Building great solutions is having attention for the smallest details ;)

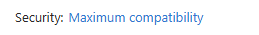

12: Setting maximum SMB encryption (optional)

This step is optional, but recommended for higher security.

In another guide, I covered the SMB encryption settings needed to use the Maximum security preset of Azure Files. You can find that guide here:

Guide for maximum SMB encryption

Using the Maximum security preset for Azure Files ensures only the best encryption and safest protocols are being used between Session host and File share. For example, this only allows Kerberos and disables the older, unsafe NTLM authentication protocol.

13: Troubleshooting (optional)

It is possible that this setup doesn't work on your first try. I have added some steps to troubleshoot the solution and find the cause of the error.

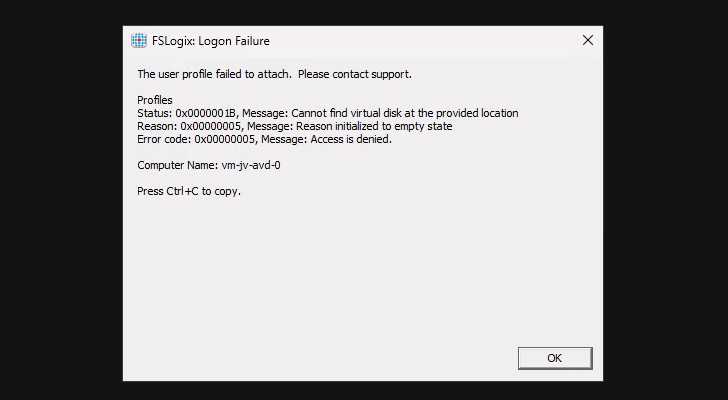

FSLogix profile errors

If you get an error like the one in the picture below, the profile failed to be created or mounted, which can have various causes depending on the error.

In this case, the error is “Access is denied”, which I triggered on purpose. Check the configuration of step 6.

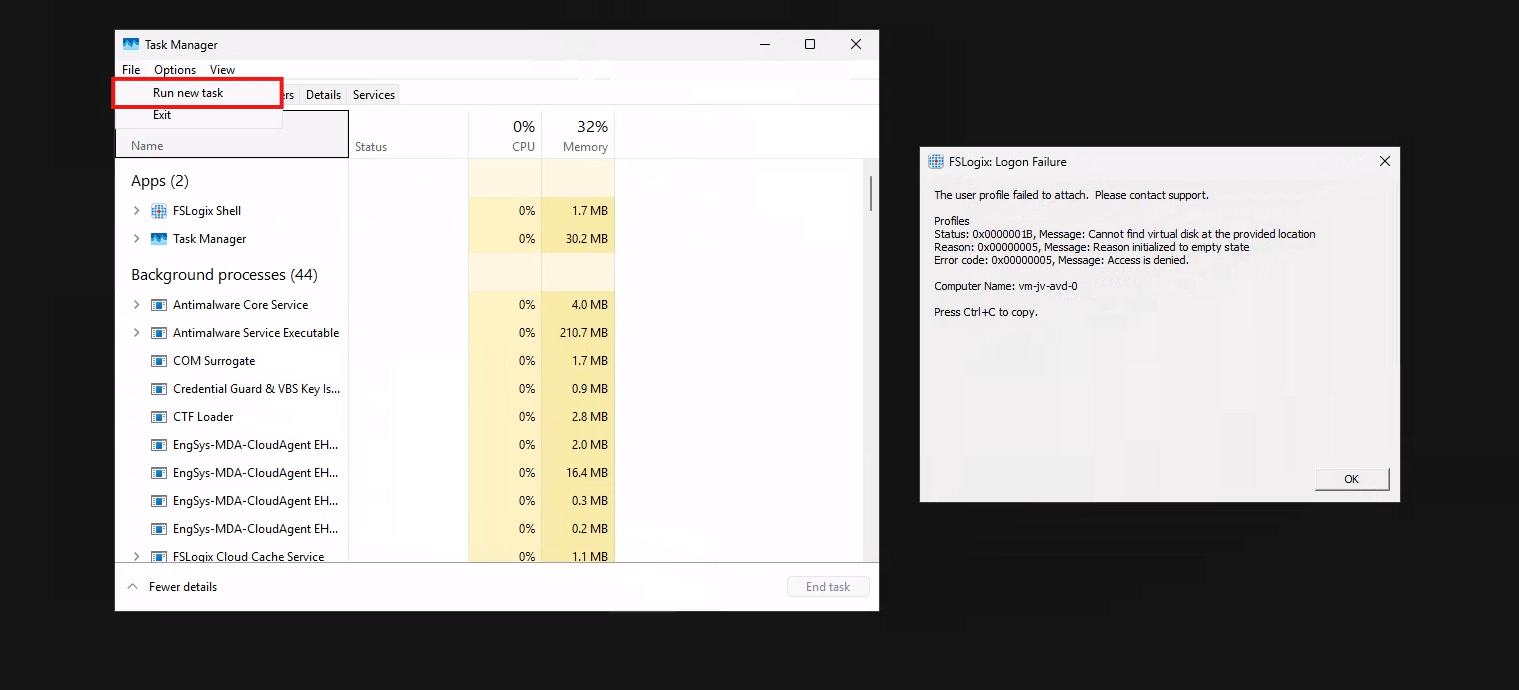

When presented with this type of error, you can open Task Manager by pressing CTRL+SHIFT+ESC and run a new task there: CMD.

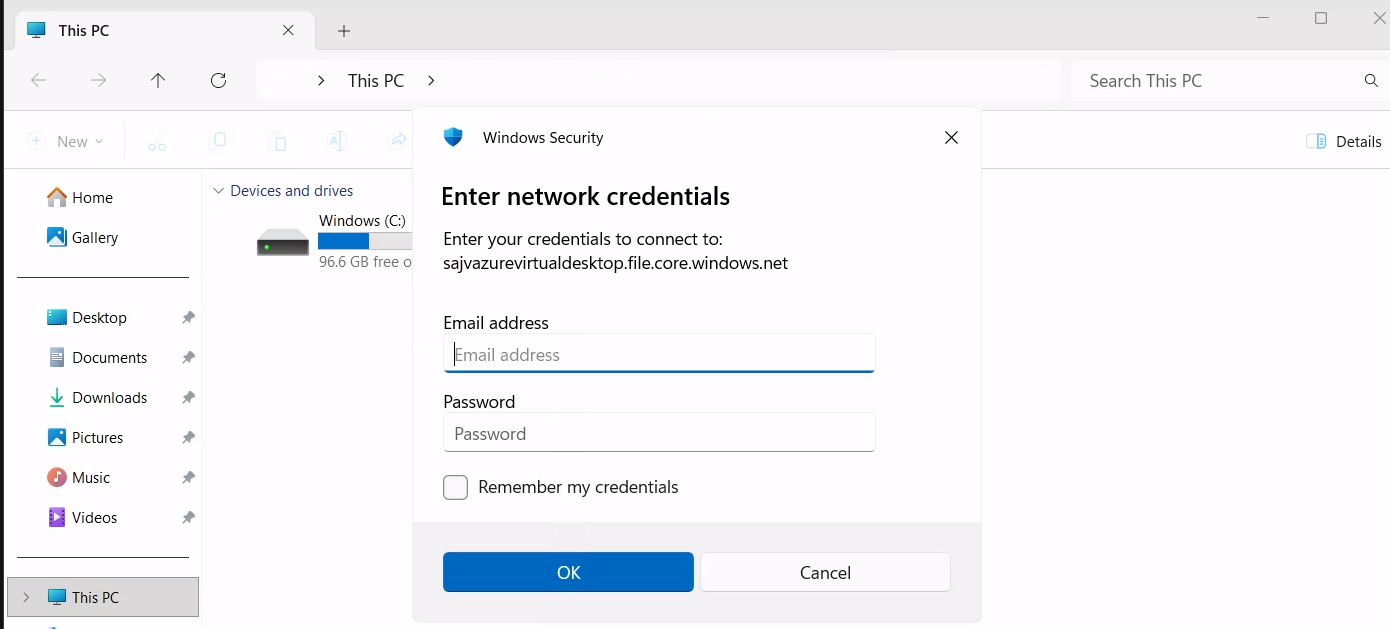

To check if you can reach the share, you can open explorer.exe from here and navigate manually to the share to see if it's working. If you get any authentication prompts or errors, this is also the reason FSLogix doesn't work.

If you don't get any FSLogix error but no profile is created in the storage account after logging in, check your FSLogix configuration from step 8 and the assignments in Intune.

Kerberos errors

It is also possible that you get an error that the network path cannot be found. This indicates that the Kerberos connection is not working. You can use this command to check the configuration:

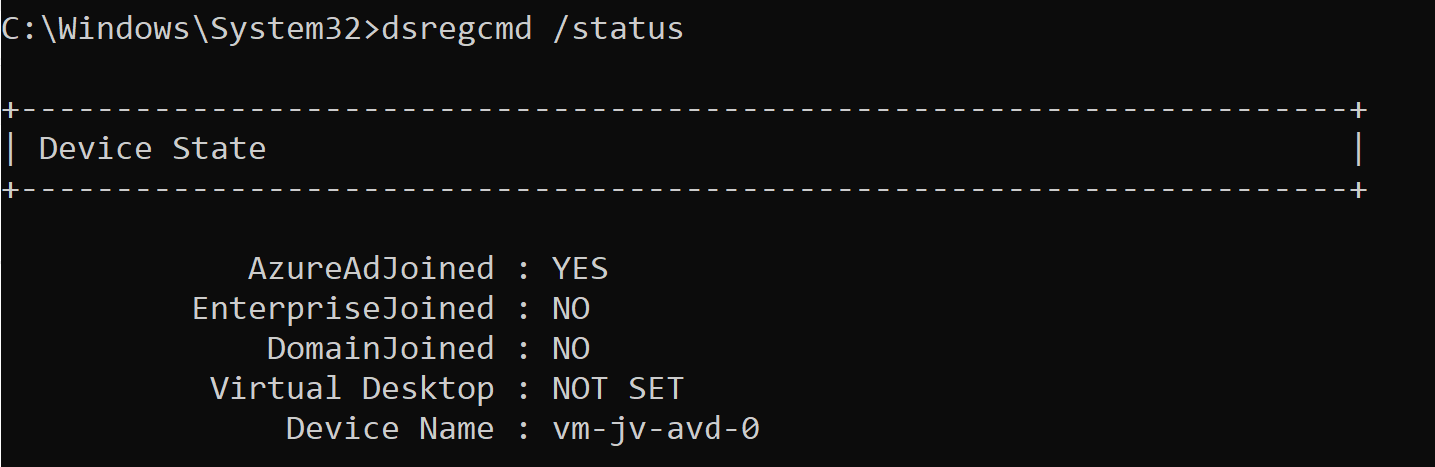

dsregcmd /status

This returns an overview of the device's configuration with Entra and Intune.

This overview shows that the Azure AD primary refresh token (AzureAdPrt) is active and that the Cloud TGT (CloudTgt) option is available. Both must be YES for the authentication to work.
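When you check several hosts, scanning the output by eye gets tedious. A minimal sketch that extracts the “Key : Value” lines from dsregcmd /status output and checks both fields. The sample text is a trimmed, illustrative excerpt rather than output captured from my environment, and the parser only handles single-word keys, which covers the two fields checked here. On a session host, you could feed it `subprocess.run(["dsregcmd", "/status"], capture_output=True, text=True).stdout`.

```python
import re

# Trimmed, illustrative excerpt of the "SSO State" section of dsregcmd /status
SAMPLE = """
+----------------------------------------------------------------------+
| SSO State                                                            |
+----------------------------------------------------------------------+

                AzureAdPrt : YES
                  CloudTgt : YES
"""

# "Key : Value" lines with a single-word key; header lines are ignored
LINE = re.compile(r"^\s*(\w+)\s*:\s*(.*?)\s*$")


def parse_dsregcmd(output: str) -> dict[str, str]:
    values = {}
    for line in output.splitlines():
        match = LINE.match(line)
        if match:
            values[match.group(1)] = match.group(2)
    return values


def entra_kerberos_ready(values: dict[str, str]) -> bool:
    # Both must be YES for Microsoft Entra Kerberos to the file share
    return values.get("AzureAdPrt") == "YES" and values.get("CloudTgt") == "YES"


status = parse_dsregcmd(SAMPLE)
print(entra_kerberos_ready(status))  # True
```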

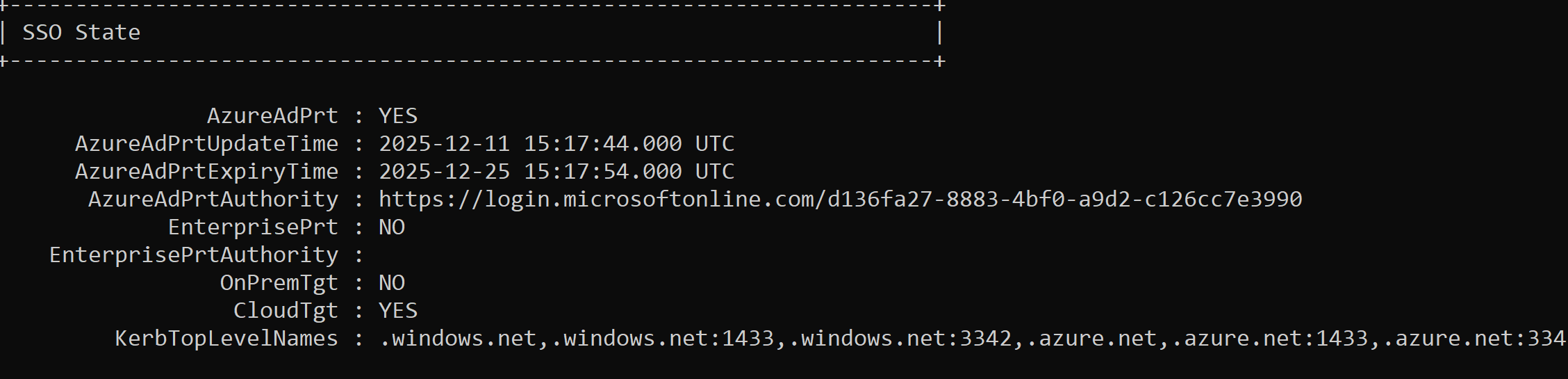

To check whether a Kerberos ticket is issued for the file share, run this command:

klist get cifs/sajvazurevirtualdesktop.file.core.windows.net

Change the name to your storage account name.

In my case, two tickets are issued to my user. If this shows nothing, something is wrong with your Kerberos configuration.
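The service principal name in the klist command follows the pattern cifs/<storage account>.file.core.windows.net. A small helper that validates the storage account name (3 to 24 lowercase letters and digits) and prints the command; it assumes the public Azure cloud endpoint, so sovereign clouds would need a different suffix.

```python
import re


def cifs_spn(storage_account: str) -> str:
    """SPN to request with `klist get` for an Azure Files share.

    Assumes the public Azure cloud endpoint (file.core.windows.net).
    """
    # Storage account names: 3-24 characters, lowercase letters and digits only
    if not re.fullmatch(r"[a-z0-9]{3,24}", storage_account):
        raise ValueError(f"Invalid storage account name: {storage_account!r}")
    return f"cifs/{storage_account}.file.core.windows.net"


print("klist get " + cifs_spn("sajvazurevirtualdesktop"))
# klist get cifs/sajvazurevirtualdesktop.file.core.windows.net
```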

Summary

This new (in preview at the time of writing) Microsoft Entra Kerberos option is a great way to finally host an Azure Virtual Desktop environment completely cloud-only, without extra servers for a traditional Active Directory. Hosting those servers is time-consuming and less secure.

Going completely cloud-only improves the manageability of the environment and keeps things simple. It also makes your environment more secure, which is something we like.

Thank you for reading this page and I hope it was helpful.



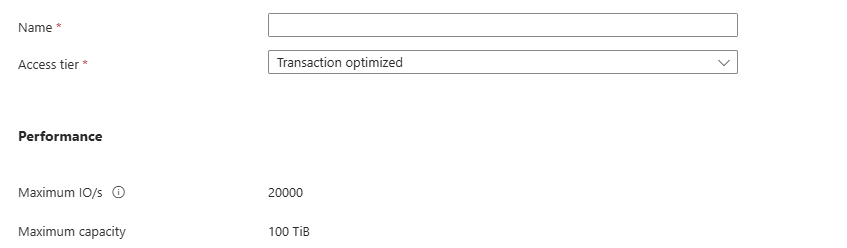

FSLogix and maximum Azure Files security

When using Azure Files and Windows 11 as the operating system for Azure Virtual Desktop, we can leverage the highest SMB encryption available at the moment, which is AES-256. While this is easy to change on the storage account, the connection to the storage account stops working with the default Windows settings.

In this guide, I show how I got this to work in combination with the newer Microsoft Entra Kerberos authentication.

The Maximum Security preset in the Azure Portal

We can also run the SMB security on the Maximum security preset in the Azure Portal and still run FSLogix without problems. In the Azure Portal, go to the storage account and set the security of the File share to “Maximum security”:

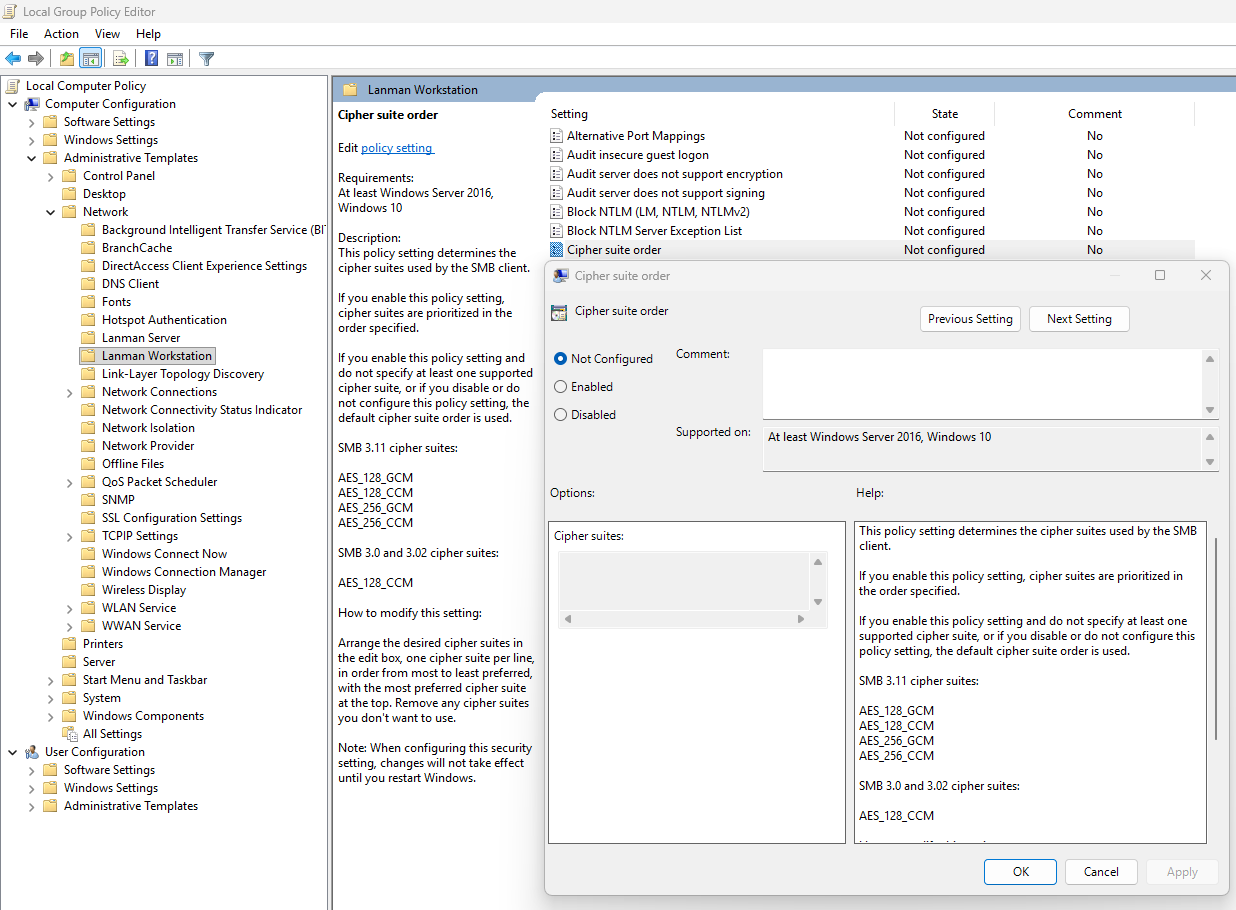

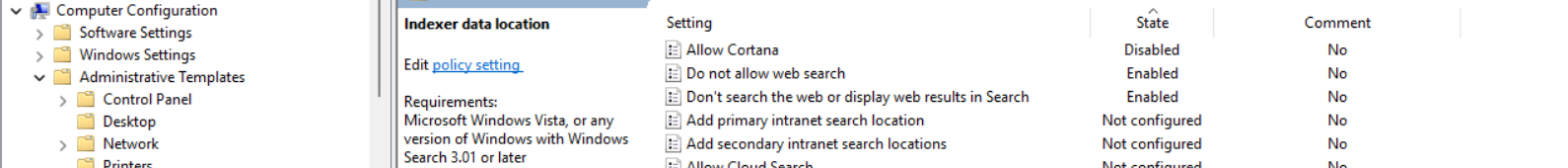

This only allows AES_256_GCM SMB channel encryption, but Windows 11 defaults to the 128-bit version. We now have to tell Windows to use the more secure 256-bit version instead; otherwise, the storage account blocks your requests and signing in isn't possible. I will do this through Intune, but you could do this with Group Policy in the same manner, or with PowerShell:

Set-SmbClientConfiguration -EncryptionCiphers "AES_256_GCM" -Confirm:$false

Configure SMB Encryption with Microsoft Intune

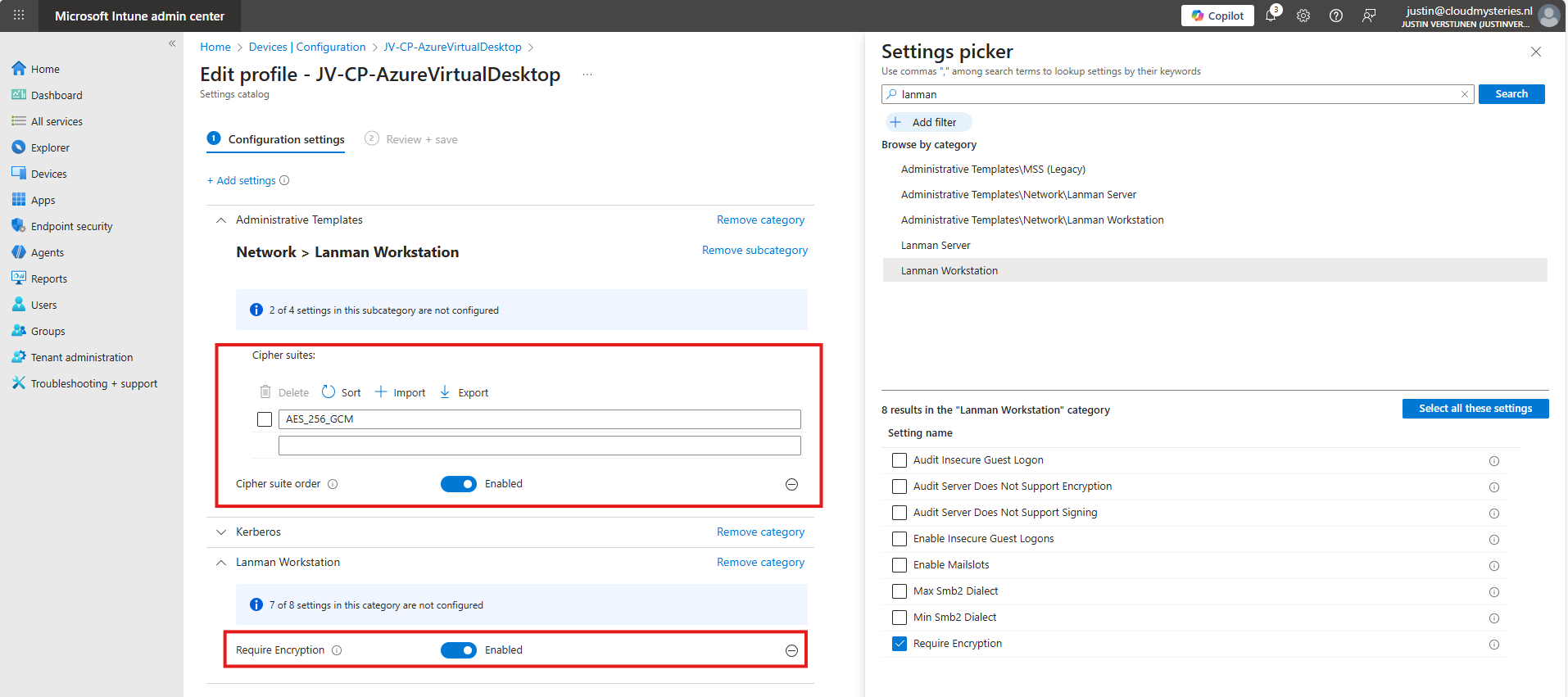

Go to the Intune Admin center (https://intune.microsoft.com). We need to create or change an existing policy in Intune to configure these 2 settings. This policy must be assigned to the Azure Virtual Desktop hosts.

Search for and select these two settings:

- Administrative Templates -> Network -> Lanman Workstation
  - Setting name: Cipher suites
- Lanman Workstation
  - Setting name: Require Encryption

These two options are in different categories in Intune, although they work together to provide SMB security.

Set the Encryption to “Enabled” and paste this line into the Cipher Suites field:

AES_256_GCM

If you still want to allow more ciphers as fallback options, you can add each cipher as a separate item in Intune, where the top cipher is used first:

AES_256_GCM

AES_256_CCM

AES_128_GCM

AES_128_CCM

This is also stated in the local Group Policy editor (gpedit.msc):

After finishing this configuration, save the policy and assign it to the group with your session hosts. Then reboot to make these changes active.
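To illustrate why the cipher order matters, here is a simplified model in Python. It assumes, as described above, that the first cipher in the client's list that the storage account also allows is used; the actual negotiation rules are defined in the SMB 2/3 protocol specification (MS-SMB2), so treat this as an illustration rather than a protocol implementation. The cipher lists are illustrative.

```python
from typing import Optional

# What the storage account accepts with the Maximum security preset
MAXIMUM_SECURITY = {"AES_256_GCM"}


def negotiated_cipher(client_order: list[str], server_allowed: set[str]) -> Optional[str]:
    """Simplified model: first cipher in the client's order that the server allows."""
    for cipher in client_order:
        if cipher in server_allowed:
            return cipher
    return None  # no common cipher, so the SMB connection cannot be set up


# A 128-bit-only client versus the cipher order configured above
print(negotiated_cipher(["AES_128_GCM", "AES_128_CCM"], MAXIMUM_SECURITY))  # None
print(negotiated_cipher(["AES_256_GCM", "AES_256_CCM", "AES_128_GCM", "AES_128_CCM"],
                        MAXIMUM_SECURITY))  # AES_256_GCM
```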

Let’s test the configuration

Now that the configuration is set, I rebooted the Azure Virtual Desktop session host and let the Intune settings apply, which happened within seconds after the reboot. When I logged in to the hostpool, sign-in worked again, using the highest SMB encryption settings:

Summary

The Maximum security preset for Azure Files applies the most restrictive security configuration available to minimize the attack surface. It enforces:

- Private network access only

- Encryption for data in transit

- Strong authentication and authorization controls (such as Entra-based access with Kerberos only), while blocking older SMB versions and the NTLM protocol

This preset is intended for highly sensitive workloads with strict compliance and security requirements.

Thank you for reading this guide and I hope it was helpful.


Azure Virtual Desktop RDP Properties

In this post, we will be looking at the most popular different RDP Properties we can use in Azure Virtual Desktop.

I will be talking about local PCs and remote PCs a lot, where the remote PC is the Azure Virtual Desktop host and the local PC is the device you can physically touch.

What are RDP properties?

RDP properties are specific settings that change your RDP experience, such as whether sound plays on the remote or local PC, whether printer redirection is enabled, whether the clipboard can be shared between computers, and what happens if the connection is lost.

RDP properties have existed for years in regular RDP files and connections to Remote Desktop Services, but Azure Virtual Desktop brings them into a nice, centralized system where we can change them to our and our users' preferences.

How to configure RDP properties

The three most popular RDP properties, which I have also used a lot in the past, are listed below.

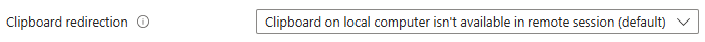

Clipboard redirection

redirectclipboard:i:0

This setting determines whether we can use the clipboard between the local PC and the remote PC. We can find this on the “Device redirection” tab:

The default option is “disabled”, so text and files cannot be transferred between computers. Enabling it allows users to do this, but we trade in some security. We can configure this in the Azure Portal GUI or by changing the setting on the “Advanced Settings” tab.

Display RDP connection bar

displayconnectionbar:i:0

We can hide the RDP connection bar by default for users. They can then only bring it up with the shortcut “CTRL+ALT+HOME”. This improves the user experience a bit, as they don't have the connection bar in place for the whole session. By default, this option is enabled (value 1).

There is no way to configure this in the GUI, only through the advanced settings. This property also doesn't have official AVD support, but I can confirm it works as expected.

Drive redirection

drivestoredirect:s:dynamicdrives

Changing the drive redirection setting ensures that drives are only redirected when you want them to be. We can use the option “DynamicDrives”, which only redirects drives that are connected after the RDP session has started.

My most used RDP settings

My full and most used configuration is here:

audioqualitymode:i:2;displayconnectionbar:i:0;drivestoredirect:s:dynamicdrives;usbdevicestoredirect:s:*;redirectclipboard:i:0;redirectprinters:i:1;audiomode:i:0;videoplaybackmode:i:1;devicestoredirect:s:*;redirectcomports:i:1;redirectsmartcards:i:1;enablecredsspsupport:i:1;redirectwebauthn:i:1;use multimon:i:1;enablerdsaadauth:i:0;autoreconnection enabled:i:1;audiocapturemode:i:1;camerastoredirect:s:*;screen mode id:i:2

This is mostly the default configuration, but I like to have the connection bar hidden by default.
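When you maintain a property string like this by hand, it is easy to end up with the same property twice. As a sketch, this hypothetical helper sets one property in a semicolon-separated string, replacing an existing entry with the same name or appending it at the end, and keeps the order of everything else. Names are compared exactly, spaces included.

```python
def set_rdp_property(properties: str, name: str, prop_type: str, value) -> str:
    """Return `properties` with `name` set to `value`, keeping the order.

    An existing entry with the same name is replaced; otherwise the new
    entry is appended. Empty entries (e.g. a trailing ";") are dropped.
    """
    entries = [e for e in properties.split(";") if e.strip()]
    new_entry = f"{name}:{prop_type}:{value}"
    for i, entry in enumerate(entries):
        if entry.split(":", 1)[0] == name:
            entries[i] = new_entry
            break
    else:
        entries.append(new_entry)
    return ";".join(entries)


base = "audiomode:i:0;displayconnectionbar:i:1;use multimon:i:1"
print(set_rdp_property(base, "displayconnectionbar", "i", 0))
# audiomode:i:0;displayconnectionbar:i:0;use multimon:i:1
```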

The location to change RDP properties

We can find the RDP properties in the hostpool of your environment, and then on “RDP properties”:

We can find the advanced options at the “Advanced” page:

Full list of RDP properties

Here is a list of all published RDP properties, including their support in Azure Virtual Desktop and in separate .RDP files.

All RDP options are in the convention: option:type:value
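Because every entry follows that option:type:value convention and entries are separated by semicolons, a property string can be parsed with a few lines of Python. A minimal sketch: i values are converted to integers and everything else is kept as a string. Each entry is split at most twice, so a value that itself contains a colon stays intact, and property names with spaces such as use multimon work as-is.

```python
def parse_rdp_properties(properties: str) -> dict:
    """Parse "option:type:value;..." into {option: value}.

    Type "i" values become ints; other types are kept as strings.
    """
    parsed = {}
    for entry in properties.split(";"):
        if not entry.strip():
            continue  # skip empty entries, e.g. from a trailing ";"
        # maxsplit=2 keeps any ":" inside the value intact
        name, prop_type, value = entry.split(":", 2)
        parsed[name] = int(value) if prop_type == "i" else value
    return parsed


config = "redirectclipboard:i:0;drivestoredirect:s:dynamicdrives;use multimon:i:1;screen mode id:i:2"
props = parse_rdp_properties(config)
print(props["use multimon"], props["drivestoredirect"])  # 1 dynamicdrives
```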


RDP Settings Table

| Property | Type | Value (by default) | Support AVD | Support RDP | Description |

|---|---|---|---|---|---|

administrativesession | i | 0 | No | Yes | Connect to the administrative session (console) of the remote computer. 0 - Do not use the administrative session 1 - Connect to the administrative session |

allowdesktopcomposition | i | 0 | No | Yes | Determines whether desktop composition (needed for Aero) is permitted when you log on to the remote computer. 0 - Disable desktop composition in the remote session 1 - Desktop composition is permitted |

allowfontsmoothing | i | 0 | No | Yes | Determines whether font smoothing may be used in the remote session. 0 - Disable font smoothing in the remote session 1 - Font smoothing is permitted |

alternatefulladdress | s | No | Yes | Specifies an alternate name or IP address of the remote computer that you want to connect to. Will be overruled by RDP+. | |

alternateshell | s | No | Yes | Specifies a program to be started automatically when you connect to a remote computer. The value should be a valid path to an executable file. This setting only works when connecting to Windows Server instances. | |

audiocapturemode | i | 0 | No | Yes | Determines how sounds captured (recorded) on the local computer are handled when you are connected to the remote computer. 0 - Do not capture audio from the local computer 1 - Capture audio from the local computer and send to the remote computer |

audiomode | i | 0 | No | Yes | Determines how sounds on a remote computer are handled when you are connected to the remote computer. 0 - Play sounds on the local computer 1 - Play sounds on the remote computer 2 - Do not play sounds |

audioqualitymode | i | 0 | No | Yes | Determines the quality of the audio played in the remote session. 0 - Dynamically adjust audio quality based on available bandwidth 1 - Always use medium audio quality 2 - Always use uncompressed audio quality |

authenticationlevel | i | 2 | No | Yes | Determines what should happen when server authentication fails. 0 - If server authentication fails, connect without giving a warning 1 - If server authentication fails, do not connect 2 - If server authentication fails, show a warning and allow the user to connect or not 3 - Server authentication is not required This setting will be overruled by RDP+. |

autoreconnectmaxretries | i | 20 | No | Yes | Determines the maximum number of times the client computer will try to. |

autoreconnectionenabled | i | 1 | No | Yes | Determines whether the client computer will automatically try to reconnect to the remote computer if the connection is dropped. 0 - Do not attempt to reconnect 1 - Attempt to reconnect |

bandwidthautodetect | i | 1 | No | Yes | Enables the option for automatic detection of the network type. Used in conjunction with networkautodetect. Also see connection type. 0 - Do not enable the option for automatic network detection 1 - Enable the option for automatic network detection |

bitmapcachepersistenable | i | 1 | No | Yes | Determines whether bitmaps are cached on the local computer (disk-based cache). Bitmap caching can improve the performance of your remote session. 0 - Do not cache bitmaps 1 - Cache bitmaps |

bitmapcachesize | i | 1500 | No | Yes | Specifies the size in kilobytes of the memory-based bitmap cache. The maximum value is 32000. |

camerastoredirect | s | No | Yes | Determines which cameras to redirect. This setting uses a semicolon-delimited list of KSCATEGORY_VIDEO_CAMERA interfaces of cameras enabled for redirection.No | |

compression | i | 1 | No | Yes | Determines whether the connection should use bulk compression. 0 - Do not use bulk compression 1 - Use bulk compression |

connecttoconsole | i | 0 | No | Yes | Connect to the console session of the remote computer. 0 - Connect to a normal session 1 - Connect to the console screen |

connectiontype | i | 2 | No | Yes | Specifies pre-defined performance settings for the Remote Desktop session. 1 - Modem (56 Kbps) 2 - Low-speed broadband (256 Kbps - 2 Mbps) 3 - Satellite (2 Mbps - 16 Mbps with high latency) 4 - High-speed broadband (2 Mbps - 10 Mbps) 5 - WAN (10 Mbps or higher with high latency) 6 - LAN (10 Mbps or higher) 7 - Automatic bandwidth detection. Requires bandwidthautodetect. By itself, this setting does nothing. When selected in the RDC GUI, this option changes several performance related settings (themes, animation, font smoothing, etcetera). These separate settings always overrule the connection type setting. |

desktopsizeid | i | 0 | Yes | Yes | Specifies pre-defined dimensions of the Remote Desktop session. 0 - 640x480 1 - 800x600 2 - 1024x768 3 - 1280x1024 4 - 1600x1200 This setting is ignored when either /w and /h, or desktopwidth and desktopheight are already specified. |

desktopheight | i | 600 | Yes | Yes | The height (in pixels) of the Remote Desktop session. |

desktopwidth | i | 800 | Yes | Yes | The width (in pixels) of the Remote Desktop session. |

devicestoredirect | s | | No | Yes | Determines which supported Plug and Play devices on the client computer will be redirected and available in the remote session. No value specified - Do not redirect any supported Plug and Play devices. * - Redirect all supported Plug and Play devices, including ones that are connected later. DynamicDevices - Redirect any supported Plug and Play devices that are connected later. The hardware ID for one or more Plug and Play devices - Redirect the specified supported Plug and Play device(s) |

disablefullwindowdrag | i | 1 | No | Yes | Determines whether window content is displayed when you drag the window to a new location. 0 - Show the contents of the window while dragging 1 - Show an outline of the window while dragging |

disablemenuanims | i | 1 | No | Yes | Determines whether menus and windows can be displayed with animation effects in the remote session. 0 - Menu and window animation is permitted 1 - No menu and window animation |

disablethemes | i | 0 | No | Yes | Determines whether themes are permitted when you log on to the remote computer. 0 - Themes are permitted 1 - Disable theme in the remote session |

disablewallpaper | i | 1 | No | Yes | Determines whether the desktop background is displayed in the remote session. 0 - Display the wallpaper 1 - Do not show any wallpaper |

disableconnectionsharing | i | 0 | No | Yes | Determines whether a new Terminal Server session is started with every launch of a RemoteApp to the same computer and with the same credentials. 0 - No new session is started. The currently active session of the user is shared 1 - A new login session is started for the RemoteApp |

disableremoteappcapscheck | i | 0 | No | Yes | Specifies whether the Remote Desktop client should check the remote computer for RemoteApp capabilities. 0 - Check the remote computer for RemoteApp capabilities before logging in 1 - Do not check the remote computer for RemoteApp capabilities |

displayconnectionbar | i | 1 | No | Yes | Determines whether the connection bar appears when you are in full screen mode. Press CTRL+ALT+HOME to bring it back temporarily. 0 - Do not show the connection bar 1 - Show the connection bar Will be overruled by RDP+ when using the parameter. |

domain | s | | No | Yes | Configures the domain of the user. |

drivestoredirect | s | | No | Yes | Determines which local disk drives on the client computer will be redirected and available in the remote session. No value specified - Do not redirect any drives. * - Redirect all disk drives, including drives that are connected later. DynamicDrives - Redirect any drives that are connected later. |

enablecredsspsupport | i | 1 | No | Yes | Determines whether Remote Desktop will use CredSSP for authentication if it’s available. 0 - Do not use CredSSP, even if the operating system supports it 1 - Use CredSSP, if the operating system supports it |

enablesuperpan | i | 0 | No | Yes | Determines whether SuperPan is enabled or disabled. SuperPan allows the user to navigate a remote desktop in full-screen mode without scroll bars, when the dimensions of the remote desktop are larger than the dimensions of the current client window. The user can point to the window border, and the desktop view will scroll automatically in that direction. 0 - Do not use SuperPan. The remote session window is sized to the client window size. 1 - Enable SuperPan. The remote session window is sized to the dimensions specified through /w and /h, or through desktopwidth and desktopheight. |

encoderedirectedvideocapture | i | 1 | No | Yes | Enables or disables encoding of redirected video. 0 - Disable encoding of redirected video 1 - Enable encoding of redirected video |

fulladdress | s | | No | Yes | Specifies the name or IP address (and optional port) of the remote computer that you want to connect to. |

gatewaycredentialssource | i | 4 | No | Yes | Specifies the credentials that should be used to validate the connection with the RD Gateway. 0 - Ask for password (NTLM) 1 - Use smart card 4 - Allow user to select later |

gatewayhostname | s | | No | Yes | Specifies the hostname of the RD Gateway. |

gatewayprofileusagemethod | i | 0 | No | Yes | Determines the RD Gateway authentication method to be used. 0 - Use the default profile mode, as specified by the administrator 1 - Use explicit settings |

gatewayusagemethod | i | 4 | No | Yes | Specifies if and how to use a Remote Desktop Gateway (RD Gateway) server. 0 - Do not use an RD Gateway server 1 - Always use an RD Gateway, even for local connections 2 - Use the RD Gateway if a direct connection cannot be made to the remote computer (i.e. bypass for local addresses) 3 - Use the default RD Gateway settings |

keyboardhook | i | 2 | Yes | Yes | Determines how Windows key combinations are applied when you are connected to a remote computer. 0 - Windows key combinations are applied on the local computer 1 - Windows key combinations are applied on the remote computer 2 - Windows key combinations are applied in full-screen mode only |

negotiate security layer | i | 1 | No | Yes | Determines whether the level of security is negotiated. 0 - Security layer negotiation is not enabled and the session is started by using Secure Sockets Layer (SSL) 1 - Security layer negotiation is enabled and the session is started by using X.224 encryption |

networkautodetect | i | 1 | No | Yes | Determines whether to use automatic network bandwidth detection or not. Requires the option bandwidthautodetect to be set and correlates with connection type 7. 0 - Do not use automatic network bandwidth detection 1 - Use automatic network bandwidth detection |

password51 | b | | No | Yes | The user password, stored as an encrypted binary value. |

pinconnectionbar | i | 1 | No | Yes | Determines whether or not the connection bar should be pinned to the top of the remote session upon connection when in full screen mode. 0 - The connection bar should not be pinned to the top of the remote session 1 - The connection bar should be pinned to the top of the remote session |

promptforcredentials | i | 0 | No | Yes | Determines whether Remote Desktop Connection will prompt for credentials when connecting to a remote computer for which the credentials have been previously saved. 0 - Remote Desktop will use the saved credentials and will not prompt for credentials. 1 - Remote Desktop will prompt for credentials. This setting is ignored by RDP+. |

promptforcredentialsonclient | i | 0 | No | Yes | Determines whether Remote Desktop Connection will prompt for credentials when connecting to a server that does not support server authentication. 0 - Remote Desktop will not prompt for credentials 1 - Remote Desktop will prompt for credentials |

promptcredentialonce | i | 1 | No | Yes | When connecting through an RD Gateway, determines whether RDC should use the same credentials for both the RD Gateway and the remote computer. 0 - Remote Desktop will not use the same credentials 1 - Remote Desktop will use the same credentials for both the RD Gateway and the remote computer |

publicmode | i | 0 | No | Yes | Determines whether Remote Desktop Connection will be started in public mode. 0 - Remote Desktop will not start in public mode 1 - Remote Desktop will start in public mode and will not save any user data (credentials, bitmap cache, MRU) on the local machine |

redirectclipboard | i | 1 | Yes | Yes | Determines whether the clipboard on the client computer will be redirected and available in the remote session and vice versa. 0 - Do not redirect the clipboard 1 - Redirect the clipboard |

redirectcomports | i | 0 | Yes | Yes | Determines whether the COM (serial) ports on the client computer will be redirected and available in the remote session. 0 - The COM ports on the local computer are not available in the remote session 1 - The COM ports on the local computer are available in the remote session |

redirectdirectx | i | 1 | No | Yes | Determines whether DirectX will be enabled for the remote session. 0 - Do not enable DirectX rendering 1 - Enable DirectX rendering in the remote session |

redirectedvideocaptureencodingquality | i | 0 | No | Yes | Controls the quality of encoded video. 0 - High compression video. Quality may suffer when there’s a lot of motion 1 - Medium compression 2 - Low compression video with high picture quality |

redirectlocation | i | 0 | No | Yes | Determines whether the location of the local device will be redirected and available in the remote session. 0 - The remote session uses the location of the remote computer 1 - The remote session uses the location of the local device |

redirectposdevices | i | 0 | No | Yes | Determines whether Microsoft Point of Service (POS) for .NET devices connected to the client computer will be redirected and available in the remote session. 0 - The POS devices from the local computer are not available in the remote session 1 - The POS devices from the local computer are available in the remote session |

redirectprinters | i | 1 | Yes | Yes | Determines whether printers configured on the client computer will be redirected and available in the remote session. 0 - The printers on the local computer are not available in the remote session 1 - The printers on the local computer are available in the remote session |

redirectsmartcards | i | 1 | Yes | Yes | Determines whether smart card devices on the client computer will be redirected and available in the remote session. 0 - The smart card device on the local computer is not available in the remote session 1 - The smart card device on the local computer is available in the remote session |

redirectwebauthn | i | 1 | Yes | Yes | Determines whether WebAuthn requests on the remote computer will be redirected to the local computer allowing the use of local authenticators (such as Windows Hello for Business and security key). 0 - WebAuthn requests from the remote session aren’t sent to the local computer for authentication and must be completed in the remote session 1 - WebAuthn requests from the remote session are sent to the local computer for authentication |

remoteapplicationicon | s | | No | Yes | Specifies the file name of an icon file to be displayed in the client UI while starting the RemoteApp. By default, RDC shows the standard Remote Desktop icon. Note: Only .ico files are supported. |

remoteapplicationmode | i | 0 | No | Yes | Determines whether a RemoteApp should be launched when connecting. 0 - Use a normal session and do not start a RemoteApp 1 - Connect and launch a RemoteApp |

remoteapplicationname | s | | No | Yes | Specifies the name of the RemoteApp shown in the Remote Desktop interface while starting the RemoteApp. |

remoteapplicationprogram | s | | No | Yes | Specifies the alias or executable name of the RemoteApp. |

screenmodeid | i | 2 | Yes | Yes | Determines whether the remote session window appears full screen when you connect to the remote computer. 1 - The remote session will appear in a window 2 - The remote session will appear full screen |

selectedmonitors | s | | Yes | Yes | Specifies which local displays to use for the remote session. The selected displays must be contiguous. Requires use multimon to be set to 1. Comma separated list of machine-specific display IDs. You can retrieve IDs by calling mstsc.exe /l. The first ID listed will be set as the primary display in the session. Defaults to all displays. |

serverport | i | 3389 | No | Yes | Defines an alternate default port for the Remote Desktop connection. Will be overruled by any port number appended to the server name. |

sessionbpp | i | 32 | No | Yes | Determines the color depth (in bits) on the remote computer when you connect. 8 - 256 colors (8 bit) 15 - High color (15 bit) 16 - High color (16 bit) 24 - True color (24 bit) 32 - Highest quality (32 bit) |

shellworkingdirectory | s | | No | Yes | The working directory on the remote computer to be used if an alternate shell is specified. |

signature | s | | No | Yes | The encoded signature when using .rdp file signing. |

signscope | s | | No | Yes | Comma-delimited list of .rdp file settings for which the signature is generated when using .rdp file signing. |

smartsizing | i | 0 | Yes | Yes | Determines whether the client computer should scale the content on the remote computer to fit the window size of the client computer when the window is resized. 0 - The client window display will not be scaled when resized 1 - The client window display will automatically be scaled when resized |

spanmonitors | i | 0 | No | Yes | Determines whether the remote session window will be spanned across multiple monitors when you connect to the remote computer. 0 - Monitor spanning is not enabled 1 - Monitor spanning is enabled |

superpanaccelerationfactor | i | 1 | No | Yes | Specifies the number of pixels that the screen view scrolls in a given direction for every pixel of mouse movement by the client when in SuperPan mode. |

usbdevicestoredirect | s | | Yes | Yes | Determines which supported RemoteFX USB devices on the client computer will be redirected and available in the remote session when you connect to a remote session that supports RemoteFX USB redirection. No value specified - Do not redirect any supported RemoteFX USB devices * - Redirect all supported RemoteFX USB devices for redirection that are not redirected by high-level redirection mechanisms |

usemultimon | i | 0 | Yes | Yes | Determines whether the session should use true multiple monitor support when connecting to the remote computer. 0 - Do not enable multiple monitor support 1 - Enable multiple monitor support |

username | s | | No | Yes | Specifies the name of the user account that will be used to log on to the remote computer. |

videoplaybackmode | i | 1 | No | Yes | Determines whether RDC will use RDP efficient multimedia streaming for video playback. 0 - Do not use RDP efficient multimedia streaming for video playback 1 - Use RDP efficient multimedia streaming for video playback when possible |

winposstr | s | 0,3,0,0,800,600 | No | Yes | Specifies the position and dimensions of the session window on the client computer. |

workspaceid | s | | No | Yes | This setting defines the RemoteApp and Desktop ID associated with the RDP file that contains this setting. |
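Every setting in the table is stored in an .rdp file as one `name:type:value` line. The type is `i` (integer), `s` (string) or `b` (binary). Below is a minimal Python sketch of a parser for that format. It assumes the file content has already been decoded to a string, and it checks a few enumerated values taken from the table. The `ALLOWED_VALUES` subset and the `parse_rdp` name are illustrative only and are not part of any Microsoft tooling. Note that the .rdp file itself spells some names with spaces, for example `screen mode id` and `session bpp`, while the table writes them without spaces.

```python
# Minimal .rdp parser sketch: each line is "name:type:value".
# ALLOWED_VALUES is an illustrative subset copied from the table above.
ALLOWED_VALUES = {
    "audiomode": {0, 1, 2},
    "audioqualitymode": {0, 1, 2},
    "screen mode id": {1, 2},
    "session bpp": {8, 15, 16, 24, 32},
}


def parse_rdp(text: str) -> dict:
    """Parse .rdp file content into {name: value}, converting 'i' values to int."""
    settings = {}
    for lineno, raw in enumerate(text.splitlines(), start=1):
        line = raw.strip()
        if not line:
            continue  # skip blank lines
        # Split on the first two colons only: string values such as
        # "host:3389" in full address may contain colons themselves.
        parts = line.split(":", 2)
        if len(parts) != 3:
            raise ValueError(f"line {lineno}: expected name:type:value, got {raw!r}")
        name, kind, value = parts
        name = name.strip().lower()
        if kind == "i":
            number = int(value)
            allowed = ALLOWED_VALUES.get(name)
            if allowed is not None and number not in allowed:
                raise ValueError(f"line {lineno}: {name}={number} not in {sorted(allowed)}")
            settings[name] = number
        elif kind in ("s", "b"):
            settings[name] = value  # 'b' values are kept as the raw encoded text
        else:
            raise ValueError(f"line {lineno}: unknown type {kind!r}")
    return settings


sample = """full address:s:avd-host.example.local:3389
screen mode id:i:2
session bpp:i:32
redirectclipboard:i:1
drivestoredirect:s:*
"""
print(parse_rdp(sample))
```

The parser fails loudly on unknown types or out-of-range values. A silently ignored typo in an .rdp file is otherwise hard to spot.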

Summary

This page lists many RDP settings that we can still use today. Some of them are marked by Microsoft as not supported, but they still work in Azure Virtual Desktop. One example is the option to hide the connection bar by default.
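In Azure Virtual Desktop you normally don't hand out .rdp files yourself. Instead, settings like these are configured as custom RDP properties on the host pool, as one semicolon-separated string of `name:type:value` entries. You can enter that string in the host pool's RDP Properties in the Azure portal or pass it to the `-CustomRdpProperty` parameter of `Update-AzWvdHostPool`. The Python sketch below builds such a string from a dictionary. The function name is hypothetical. The sketch rejects any value containing `;`, because it cannot tell such a value apart from the separator.

```python
def build_custom_rdp_property(settings: dict) -> str:
    """Serialize {name: value} into 'name:type:value' entries joined by ';'."""
    entries = []
    for name, value in settings.items():
        # bool is a subclass of int in Python; require explicit 0/1 instead.
        if isinstance(value, bool):
            raise TypeError(f"{name}: use 0 or 1 instead of a bool")
        if isinstance(value, int):
            kind = "i"
        elif isinstance(value, str):
            kind = "s"
        else:
            raise TypeError(f"{name}: unsupported value type {type(value).__name__}")
        text = str(value)
        if ";" in name or ";" in text:
            raise ValueError(f"{name}: ';' would be read as a separator")
        entries.append(f"{name}:{kind}:{text}")
    return ";".join(entries)


# Hide the connection bar by default, keep the clipboard, redirect no drives.
print(build_custom_rdp_property({
    "displayconnectionbar": 0,
    "redirectclipboard": 1,
    "drivestoredirect": "",
}))
# displayconnectionbar:i:0;redirectclipboard:i:1;drivestoredirect:s:
```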


Thank you for reading this post and I hope it was helpful!


If you think something is wrong with this post or you want to know more, you can send me a message to one of my social profiles at: https://justinverstijnen.nl/about/


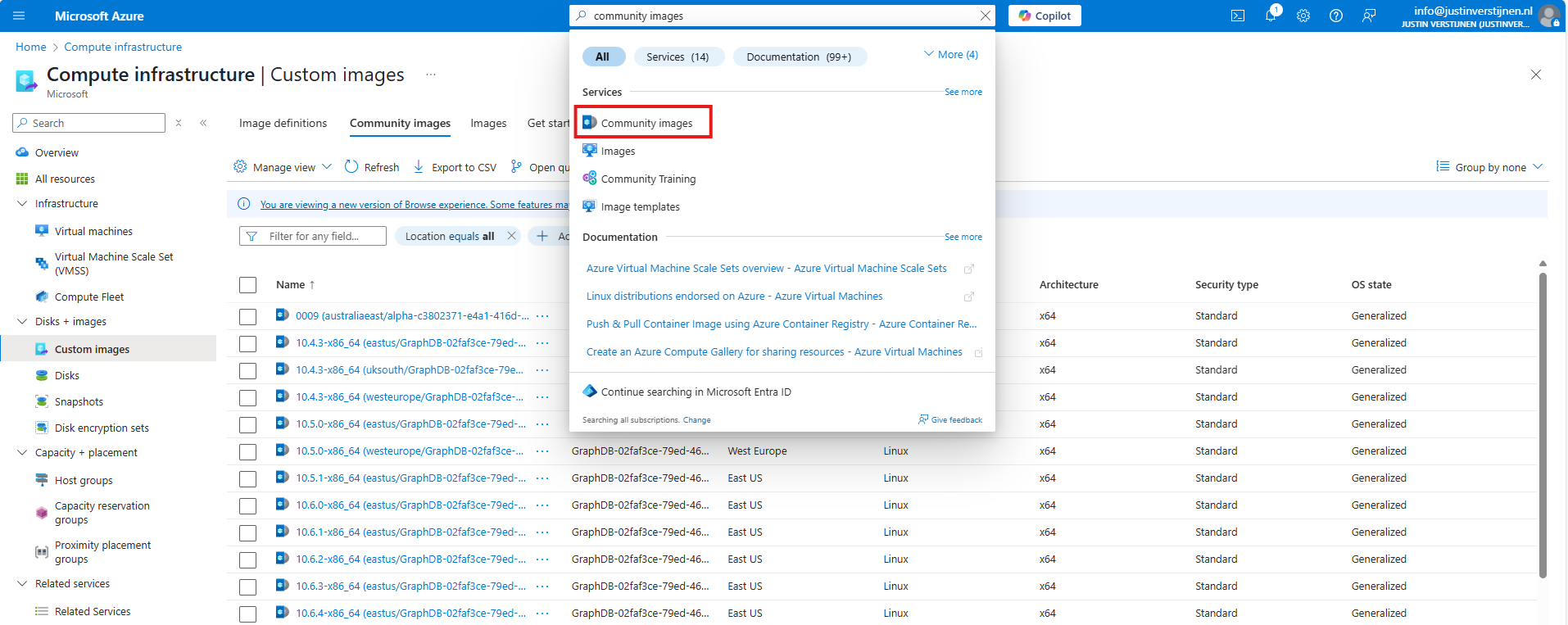

Azure Compute Gallery and (AVD) VM images

Azure Compute Gallery is a great service in Azure to store, capture and maintain your VM images. This can be helpful when deploying multiple similar VMs. Use cases include VM Scale Sets, webservers, containers or Azure Virtual Desktop session hosts.

In this blog post, I will tell you more about Azure Compute Gallery, how to use it when imaging VMs and how it can help you store and maintain images for your VMs.

Requirements

- Around 40 minutes of your time

- Basic knowledge of (Windows) VMs

- Basic knowledge of Azure

- An Azure subscription to test the functionality

What is Azure Compute Gallery?

Azure Compute Gallery (ACG) is a service in Azure that helps you store, categorize and maintain images of your virtual machines. This is really helpful when you need to deploy similar virtual machines. We do this for Virtual Machine Scale Sets and also for Azure Virtual Desktop, two services where similar images need to be deployed. You can also build “specialized” images for use cases where similarity is not a requirement, like Active Directory Domain Controllers or SQL/Application servers.

The features of Azure Compute Gallery:

- Image versioning: We can build our own versioning and numbering for images, storing newer images under a new version number for documentation and testing purposes. This makes it easy to roll back to a previous version if something is wrong.

- Global Replication: Images can be distributed across multiple regions for more availability and faster deployment.

- Sharing of images: You can share Azure Compute Gallery images with tenants outside of your own organization, especially useful when you have Azure Landing Zones

- Security and Access control: Access to different images and versions can be restricted through Azure RBAC.
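Versioning is what makes rollback easy. Image version names use a Major.Minor.Patch format, so they should be compared as numbers. Compared as plain strings, `1.0.10` would sort before `1.0.9`. The Python sketch below picks the newest version, plus the one before it as a rollback candidate. The `ImageVersion` class and its `exclude_from_latest` flag are hypothetical. They are loosely modeled on the "Exclude from latest" option you can set on an image version. This is an illustration of the idea, not how Azure itself resolves versions.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class ImageVersion:
    name: str                          # "Major.Minor.Patch", e.g. "1.0.10"
    exclude_from_latest: bool = False  # hypothetical flag, e.g. for images still in testing

    @property
    def key(self) -> Tuple[int, int, int]:
        # Compare numerically so that 1.0.10 ranks above 1.0.9.
        major, minor, patch = (int(part) for part in self.name.split("."))
        return major, minor, patch


def pick_versions(versions) -> Tuple[ImageVersion, Optional[ImageVersion]]:
    """Return (newest eligible version, previous eligible version or None)."""
    eligible = sorted((v for v in versions if not v.exclude_from_latest), key=lambda v: v.key)
    if not eligible:
        raise ValueError("no eligible image versions")
    previous = eligible[-2] if len(eligible) > 1 else None
    return eligible[-1], previous


latest, rollback = pick_versions([
    ImageVersion("1.0.9"),
    ImageVersion("1.0.10"),
    ImageVersion("1.1.0", exclude_from_latest=True),  # still being tested
])
print(latest.name, rollback.name)  # 1.0.10 1.0.9
```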

How does Azure Compute Gallery work?

Azure Compute Gallery itself is a sort of specialized storage account for storing images only. In the gallery, you create a VM image definition, which groups the images for a specific use case. Under each definition, we store the image versions themselves. The structure looks like this:

This is an example use case of Azure Compute Gallery, where we store images for Azure Virtual Desktop VMs and for our webservers. In this case, we re-image them every month.
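The gallery, image definition and image version hierarchy also shows up in the Azure resource ID of every image version. That ID is what you reference when deploying a VM or session host from the gallery. The Python sketch below composes the ID. The subscription, resource group, gallery and definition names in the example are hypothetical placeholders.

```python
import re


def image_version_id(subscription_id: str, resource_group: str, gallery: str,
                     definition: str, version: str) -> str:
    """Compose the resource ID of an image version: gallery > image definition > version."""
    if not re.fullmatch(r"\d+\.\d+\.\d+", version):
        raise ValueError(f"version {version!r} is not in Major.Minor.Patch format")
    return (
        f"/subscriptions/{subscription_id}"
        f"/resourceGroups/{resource_group}"
        f"/providers/Microsoft.Compute/galleries/{gallery}"
        f"/images/{definition}"
        f"/versions/{version}"
    )


# Hypothetical names: one gallery with a definition per use case.
for definition in ("avd-sessionhosts", "webservers"):
    print(image_version_id("00000000-0000-0000-0000-000000000000",
                           "rg-images", "acg_prod", definition, "1.0.0"))
```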

Azure Compute Gallery vs Managed Images

Azure Compute Gallery has some advantages over the “older” and more basic Managed Images which you may use. Let’s dive into the key differences:

| Feature | Azure Compute Gallery | Managed Images |
|---|---|---|
| Creating and storing generalized and specialized images | ✅ | ✅ |
| Replication to multiple regions | ✅ | ❌ |
| Versioning | ✅ | ❌ |
| Trusted Launch VMs (TPM/Secure Boot) | ✅ | ❌ |

Pricing of Azure Compute Gallery

The costs of Azure Compute Gallery are based on:

- How many images you store

- How many regions you replicate a copy to for availability

- The storage tier you store the images on